An Insight Into Global Payment Technologies With James Booth

With the e-commerce market set to reach a predicted market volume of £92 million by 2025, and the opportunity for cross-border expansion at an all-time high, the demand for more localised and innovative payment methods will only continue to grow. More and more customers are now online, looking for products or services that suit their very specific needs. A shopper might look across borders for what they want: better-quality products, payment methods accepted, stronger brand loyalty, and more. But they will quickly abandon the transaction page if their preferred payment method is not available. Ultimately, payment choice will play a major role in driving sales in the future, meaning merchants will need a diverse payment portfolio to ensure transactions are completed and customer loyalty retained. This will continue to spark increased innovation for payments, but also the proliferation of niche local payment options across the globe. However, as digital payments head towards a global tipping point, the need for greater regulation and security will also continue to grow.

Dealing With Stubbornness Of AI Autonomous Vehicles

Shifting gears, the future of cars entails self-driving cars. This stubbornness element in the flatbed truck tale brings up an interesting facet about self-driving cars and one that few are giving much attention to. First, be aware that true self-driving cars are driven by an AI-based driving system and not by a human driver. Thus, in the case of this flatbed truck scenario, if the car had been a self-driving car, the AI driving system would have been trying to get the car up that ramp and onto the flatbed. Secondly, there are going to be instances wherein a human wants a self-driving car to go someplace, but the AI driving system will “refuse” to do so. I want to clarify that the AI is not somehow sentient since the type of AI being devised today is not in any manner whatsoever approaching sentience. Perhaps far away in the future, we will achieve that kind of AI, but that’s not in the cards right now. This latter point is important because the AI driving system opting to “refuse” to drive someplace is not due to the AI being a sentient being, and instead is merely a programmatic indication that the AI has detected a situation in which it is not programmed to drive.

Rise of APIs brings new security threat vector -- and need for novel defenses

The speed is important. The pandemic has been even more of a challenge for a lot of companies. They had to move to more of a digital experience much faster than they imagined. So speed has become way more prominent. But that speed creates a challenge around safety, right? Speed creates two main things. One is that you have more opportunity to make mistakes. If you ask people to do something very fast because there’s so much business and consumer pressure, sometimes you cut corners and make mistakes. Not deliberately. It’s just that software engineers can never write completely bug-free code. But if you have more bugs in your code because you are moving very, very fast, it creates a greater challenge. So how do you create safety around it? By catching these security bugs and issues much earlier in your software development life cycle (SDLC). If a developer creates a new API and that API could be exploited by a hacker -- because there is a bug in that API around a security authentication check -- you have to try to find it in your test cycle and your SDLC. The second way to gain security is by creating a safety net. Even if you find things earlier in your SDLC, it’s impossible to catch everything.
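
As a rough illustration of catching a missing authentication check during the test cycle, here is a minimal sketch. Everything in it is hypothetical and not taken from the interview: the `Request` type, the `get_orders` handler, and the in-memory `VALID_TOKENS` store are stand-ins for whatever framework and token validation a real API would use.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple

# Hypothetical token store; a real service would verify signed tokens instead.
VALID_TOKENS: Dict[str, str] = {"token-alice": "alice"}


@dataclass
class Request:
    """Minimal stand-in for an HTTP request (assumption, not a real framework type)."""
    path: str
    headers: Dict[str, str] = field(default_factory=dict)


def get_orders(request: Request) -> Tuple[int, dict]:
    """Hypothetical API handler that must reject callers without a valid token."""
    auth = request.headers.get("Authorization", "")
    # Only accept the "Bearer <token>" form; anything else yields an empty token.
    token = auth[len("Bearer "):] if auth.startswith("Bearer ") else ""
    user = VALID_TOKENS.get(token)
    if user is None:
        return 401, {"error": "unauthorized"}
    return 200, {"user": user, "orders": []}


def test_orders_requires_authentication() -> None:
    """Security regression test: the endpoint must never serve unauthenticated callers."""
    # No Authorization header at all.
    assert get_orders(Request("/orders"))[0] == 401
    # Unknown token.
    bad = Request("/orders", {"Authorization": "Bearer not-a-real-token"})
    assert get_orders(bad)[0] == 401
    # Known token without the Bearer scheme is also rejected.
    raw = Request("/orders", {"Authorization": "token-alice"})
    assert get_orders(raw)[0] == 401
    # A valid token is accepted.
    good = Request("/orders", {"Authorization": "Bearer token-alice"})
    assert get_orders(good) == (200, {"user": "alice", "orders": []})


if __name__ == "__main__":
    test_orders_requires_authentication()
    print("authentication checks passed")
```

A test like this would typically run on every commit, so a new endpoint that forgets the check fails the build rather than reaching production.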

The speed is important. The pandemic has been even more of a challenge for a lot

of companies. They had to move to more of a digital experience much faster than

they imagined. So speed has become way more prominent. But that speed creates a

challenge around safety, right? Speed creates two main things. One is that you

have more opportunity to make mistakes. If you ask people to do something very

fast because there’s so much business and consumer pressure, sometimes you cut

corners and make mistakes. Not deliberately. It’s just as software engineers can

never write completely bug-free code. But if you have more bugs in your code

because you are moving very, very fast, it creates a greater challenge. So how

do you create safety around it? By catching these security bugs and issues much

earlier in your software development life cycle (SDLC). If a developer creates a

new API and that API could be exploited by a hacker -- because there is a bug in

that API around security authentication check -- you have to try to find it in

your test cycle and your SDLC. The second way to gain security is by creating a

safety net. Even if you find things earlier in your SDLC, it’s impossible to

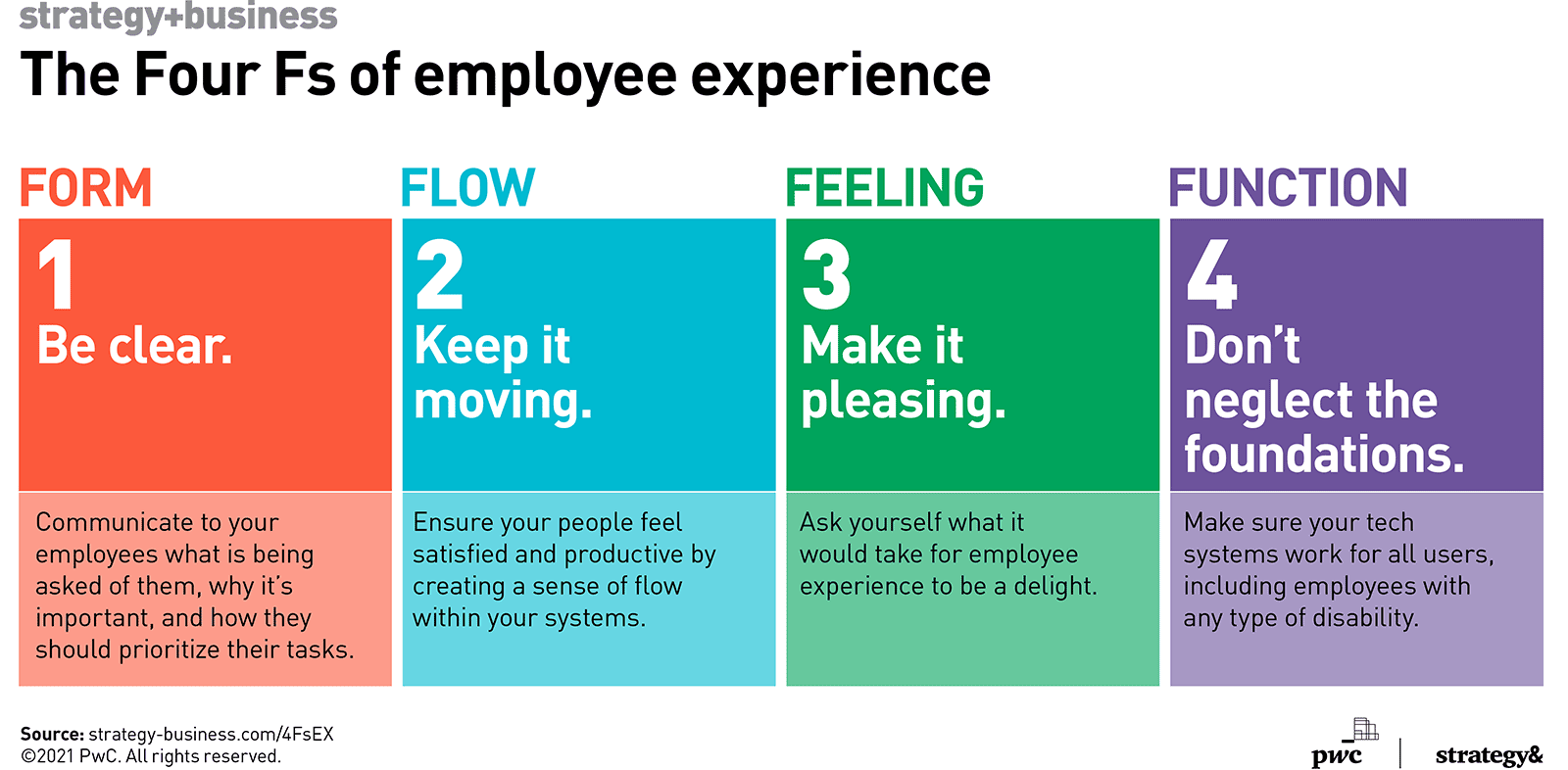

catch everything. Will you be heading back to the office? Should you?

The vast majority said it had worked out much better than they expected. They found people were more, rather than less, productive. It turns out folks welcomed not having to deal with long commutes, crowded open-plan offices, or Dilbert-like cubicle farms. Of course, not everyone is happy. Juggling kids and office work can mean misery. But in a recent Blind professional worker social network survey of 3,000 staffers 35% said they would quit their jobs if work from home ends. That's a lot. I'd hate to replace more than a third of my staff if I insisted everyone return to 1 Corporate Drive. If your people want to work from home, and they've shown they can deliver, why take a chance on losing them? Not everyone is on board with the change. As one Microsoft staffer on Blind put it, "I don’t think the 5-day work in the office will ever be relevant again. You will have Team A and Team B, working 2 days in the office and 3 days at home. Social interaction in person is needed." Notice, though, that even here, there's no assumption of a five-day work week.

4 reasons to learn machine learning with JavaScript

Fortunately, not all machine learning applications require expensive servers. Many models can be compressed to run on user devices. And mobile device manufacturers are equipping their devices with chips to support local deep learning inference. But the problem is that Python machine learning is not supported by default on many user devices. MacOS and most versions of Linux come with Python preinstalled, but you still have to install machine learning libraries separately. Windows users must install Python manually. And mobile operating systems have very poor support for Python interpreters. JavaScript, on the other hand, is natively supported by all modern mobile and desktop browsers. This means JavaScript machine learning applications are guaranteed to run on most desktop and mobile devices. Therefore, if your machine learning model runs on JavaScript code in the browser, you can rest assured that it will be accessible to nearly all users. There are already several JavaScript machine learning libraries. An example is TensorFlow.js, the JavaScript version of Google’s famous TensorFlow machine learning and deep learning library.

4 Software QA Metrics To Enhance Dev Quality and Speed

The caliber of code is fundamental to the quality of your product. Through frequent reviews you can assess the health of your software, thus detecting unreliable code and defects in the building blocks of your project. Identifying flaws is going to help you throughout the dev process and well into the future. Good quality code will allow you to reduce the risks of defects and avoid application and website crashes. Today, much of this process can be automated, avoiding human error and diverting resources toward other tasks. But there are a number of code quality analytics you can focus on. ... Flagging issues in the working process can draw attention to inefficiencies, allowing the opportunity to implement project management solutions. Once flaws are identified, there’s a whole host of management software for small businesses and large businesses alike to improve efficiency. Automation can also help you through the testing process. According to PractiTest, 78% of organizations currently use test automation for functional or regression tests. This automation will ultimately save time and money, eliminating human error and allowing resources to be redirected elsewhere in the dev process.

5 Fundamental But Effective IoT Device Security Controls

IoT devices introduce a host of vulnerabilities into organizations’ networks and are often difficult to patch. With more than 30 billion active IoT device connections estimated by 2025, it is imperative that information-security professionals find an efficient framework to better monitor and protect IoT devices from being leveraged for distributed denial of service (DDoS), ransomware or even data exfiltration. When the convenience of a doorbell camera, robot vacuum cleaner or cellphone-activated thermostat could potentially wreak financial havoc or threaten physical harm, the security of these devices cannot be taken lightly. We must refocus our cyber-hygiene mindset to view these devices as potential threats to our sensitive data. There are too many examples of threat actors gaining access to a supposedly insignificant IoT device, like the HVAC control system for a global retail chain, only to pivot to other unsecured devices on the same network before reaching valuable sensitive information. While phishing remains the most popular attack vector, reinforcing the need for humans to be an integral part of a strong security program, IoT devices now offer another avenue for cybercriminals to access accounts and networks to steal data, conduct reconnaissance and further deploy malware.

Improving model performance through human participation

In order to achieve high-quality human reviews, it is important to set up a well-defined training process for the human agents who will be responsible for reviewing items manually. A well-thought-out training plan and a regular feedback loop for the human agents will help maintain the high-quality bar of the manually reviewed items over time. This rigorous training and feedback loop helps minimize human error in addition to helping maintain SLA requirements for per-item decisions. Another strategy that is slightly more expensive is to use a best-of-3 approach for each item that is manually reviewed, i.e., use 3 agents to review the same item and take the majority vote from the 3 agents to decide the final outcome. In addition, log the disagreements between the agents so that the teams can retrospect on these disagreements to refine their judging policies. Best practices applicable to microservices apply here as well. This includes appropriate monitoring of the following: end-to-end latency of an item from the time it was received in the system to the time a decision was made on it; overall health of the agent pool; volume of items sent for human review; and hourly statistics on the classification of items.
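
The best-of-3 approach with disagreement logging could be sketched roughly as follows. This is only an illustration under stated assumptions: the function name `majority_decision`, the string labels, and the choice to raise an error when all three agents disagree are hypothetical and not described in the article.

```python
import logging
from collections import Counter
from typing import Sequence, Tuple

logger = logging.getLogger("human_review")


def majority_decision(item_id: str, votes: Sequence[str]) -> Tuple[str, bool]:
    """Return (winning label, whether the vote was unanimous) for three agent votes.

    Non-unanimous votes are logged so teams can review them later.
    """
    if len(votes) != 3:
        raise ValueError("best-of-3 expects exactly three votes")
    label, top_count = Counter(votes).most_common(1)[0]
    if top_count < 2:
        # Assumption: with more than two possible labels, a three-way split
        # has no majority and needs separate handling (e.g. escalation).
        raise ValueError(f"no majority for item {item_id}: {list(votes)}")
    unanimous = top_count == 3
    if not unanimous:
        # Record the disagreement for later retrospection on judging policies.
        logger.info("disagreement on item %s: votes=%s", item_id, list(votes))
    return label, unanimous


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    print(majority_decision("item-1", ["approve", "reject", "approve"]))
    print(majority_decision("item-2", ["reject", "reject", "reject"]))
```

The logged disagreements can then feed the same hourly statistics mentioned above, for example as a disagreement rate per classification.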

The challenges of applied machine learning

One of the key challenges of applied machine learning is gathering and organizing the data needed to train models. This is in contrast to scientific research where training data is usually available and the goal is to create the right machine learning model. “When creating AI in the real world, the data used to train the model is far more important than the model itself,” Rochwerger and Pang write in Real World AI. “This is a reversal of the typical paradigm represented by academia, where data science PhDs spend most of their focus and effort on creating new models. But the data used to train models in academia are only meant to prove the functionality of the model, not solve real problems. Out in the real world, high-quality and accurate data that can be used to train a working model is incredibly tricky to collect.” In many applied machine learning applications, public datasets are not useful for training models. You need to either gather your own data or buy them from a third party. Both options have their own set of challenges. For instance, in the herbicide surveillance scenario mentioned earlier, the organization will need to capture a lot of images of crops and weeds.

Window Snyder Launches Startup to Fill IoT Security Gaps

In the connected device market, she sees a large attack surface and small security investment. "There are so many devices out there that don't have any of these mechanisms in place," she explains. "Even for those that do have security mechanisms, not all of them are built to the kind of resilience that's appropriate for the threats they're up against." It's a big problem with multiple reasons. Some organizations have small engineering teams and few resources to build resilience into their products. Some have large teams but don't prioritize security because they're in a closed-system manufacturing operation, for example, and the machines don't have network access. Many connected devices are in the field for long periods of time and it's hard to deliver updates, so manufacturers don't ship them unless they have to. "There's this combination of both security need and then additionally this requirement for an update mechanism that is reliable," Snyder continues. Oftentimes manufacturers lack confidence in how updates are deployed and don't trust the mechanism will deliver medium- or high-severity security updates on a regular basis.

Quote for the day:

"Authority without wisdom is like a heavy ax without an edge -- fitter to bruise than polish." -- Anne Bradstreet