Quote for the day:

“Too many of us are not living our dreams because we are living our fears.” -- Les Brown

Your employees are feeling ‘OK’ – and that’s a serious problem

At first glance, OK doesn’t sound dangerous. Teams aren’t unhappy enough to
trigger alarms, nor are they burning out; they keep delivering at an
acceptable level. But ‘acceptable’ is not the same as ‘successful’. Teams
stuck in OK lack the energy, creativity and ambition to truly thrive. They’re
passable, not powerful – and that complacency can quietly erode performance.
... In fact, the lifetime value of a happy employee is more than twice that of
an OK one. This is not soft sentiment – it’s hard economics. By contrast, OK
teams bring hidden costs. They are about twice as likely to miss targets as
happy teams and have 50% higher staff turnover. They are also less
collaborative, less creative and less resilient when challenges arise. ...
First, reframe happiness as a serious business metric. It’s not vague or
fluffy. It’s measurable, trackable and improvable. It connects directly to
performance, retention and, ultimately, profit. Second, focus on the drivers
of happiness. I’ve identified five ways to develop happiness at work: connect,
be fair, empower, challenge and inspire. ... Third, embed a rhythm of
measure-meet-repeat. Measure: Use light-touch weekly pulses and deeper
quarterly surveys to gather data; Meet: Bring teams together to discuss
results, identify blockers and celebrate progress; and Repeat: Build
momentum with regular reflection and action. This rhythm transforms data into
dialogue, which helps organisations to improve.

Are cloud providers neglecting security to chase AI?

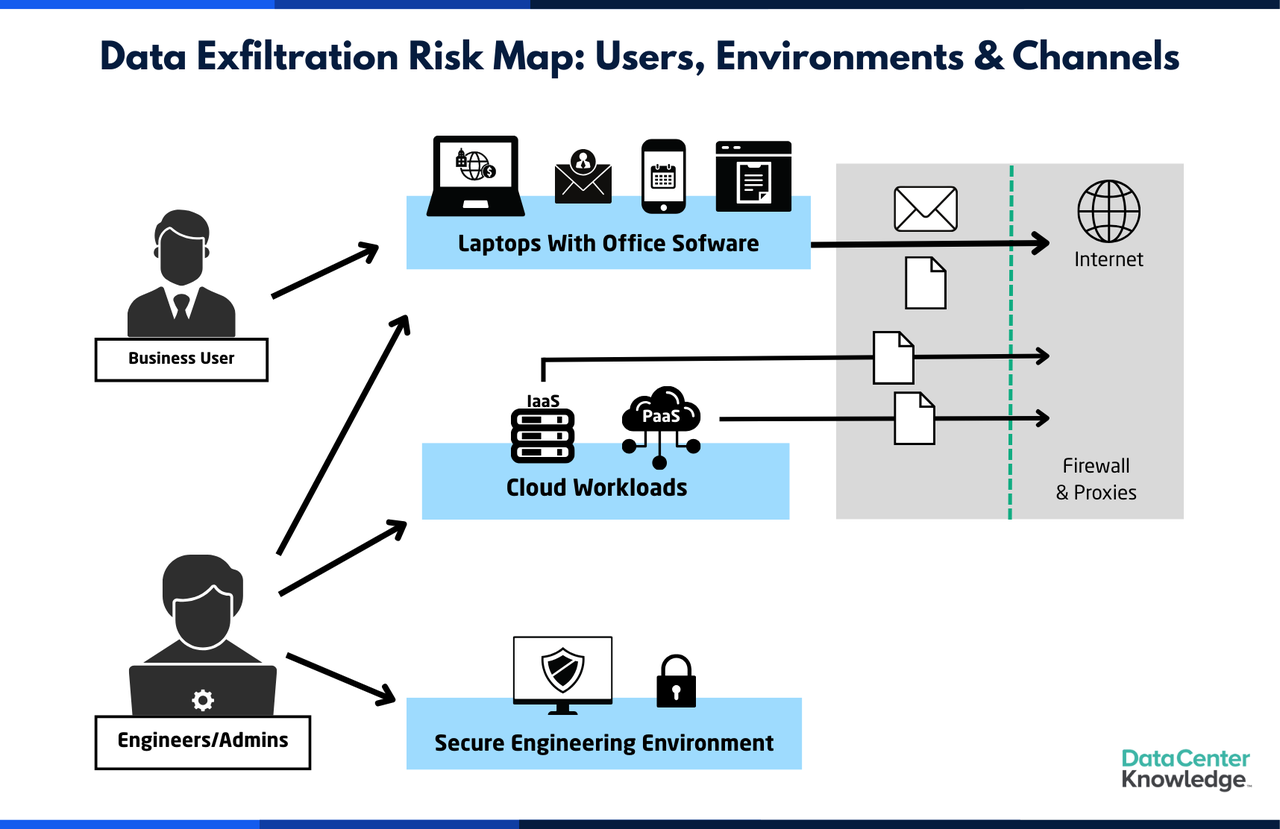

An unsettling trend now challenges this narrative. Recent research, including
the “State of Cloud and AI Security 2025” report conducted by the Cloud
Security Alliance (CSA) in partnership with cybersecurity company Tenable,
highlights that cloud security, once considered best in class, is becoming
more fragmented and misaligned, leaving organizations vulnerable. The issue
isn’t a lack of resources or funding—it’s an alarming shift in priorities by
cloud providers. As investment and innovative energies focus more on
artificial intelligence and hybrid cloud development, security efforts appear
to be falling behind. ... The dangers of this complexity are made worse by
what the report calls the weakest link in cloud security: identity and access
management (IAM). Nearly 59% of respondents cited insecure identities and
risky permissions as their main concerns, with excessive permissions and poor
identity hygiene among the top reasons for breaches. ... Deprioritizing
security in favor of AI products is a gamble cloud providers appear willing to
take, but there are clear signs that enterprises might not follow them down
this path forever. The CSA/Tenable report highlights that 31% of surveyed
respondents believe their executive leadership fails to grasp the nuances of
cloud security, and many have uncritically relied on native tools from cloud
vendors without adding extra protections.
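
To make "excessive permissions" concrete, here is a minimal sketch of the kind of check a team might run over its own policies. It is not taken from the CSA/Tenable report. It assumes policies are stored as AWS-style JSON documents (`Statement`, `Effect`, `Action`, `Resource`); the function names and the `reports-bucket` resource are hypothetical, and a real review would also need to look at conditions, principals and unused grants.

```python
from typing import Any


def _as_list(value: Any) -> list:
    """Policy fields may be a single value or a list; normalise to a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def find_risky_statements(policy: dict[str, Any]) -> list[dict[str, Any]]:
    """Return Allow statements that grant wildcard actions or resources."""
    risky = []
    for stmt in _as_list(policy.get("Statement")):
        if stmt.get("Effect") != "Allow":
            continue
        actions = _as_list(stmt.get("Action"))
        resources = _as_list(stmt.get("Resource"))
        # "*" or "service:*" grants every action (of that service).
        wildcard_action = any(a == "*" or a.endswith(":*") for a in actions)
        # A bare "*" resource means the grant applies everywhere.
        wildcard_resource = "*" in resources
        if wildcard_action or wildcard_resource:
            risky.append(stmt)
    return risky


# Hypothetical policy: the first statement is overly broad, the second is scoped.
example_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "s3:*", "Resource": "*"},
        {"Effect": "Allow", "Action": ["s3:GetObject"],
         "Resource": "arn:aws:s3:::reports-bucket/*"},
    ],
}

flagged = find_risky_statements(example_policy)
print(f"{len(flagged)} statement(s) need review")
```

A check like this only surfaces candidates for review; deciding whether a broad grant is justified remains a human judgment.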

The Future of Global A.I.

The accelerating development and adoption of AI products, services and
platforms present both challenges and opportunities for regions like the
Middle East and North Africa (MENA) and India that have ambitions of
integrating AI into their economies. Data presented in the report suggests
that the mobile user bases in India and MENA are primed for AI products and
services on mobile platforms. For the Middle East, AI is a crucial enabler of
economic diversification beyond its hydrocarbon industries, whereas for India,
AI can be transformative for its world-leading digital public infrastructure,
public service delivery, and digital payments platforms. ... The BOND
report notes that the current wave of AI development and adoption is
unprecedented when compared to previous technological waves. It uses OpenAI’s
ChatGPT as a benchmark to showcase the explosive growth of user adoption as
the platform achieved 1 million users within five days, 800 million weekly
active users within 17 months, and registered 90 percent of its users from
non-US geographies by its third year. ... In an era of increasing geopolitical
competition, countries are supporting efforts to achieve digital sovereignty.
The BOND report notes a growing interest in Sovereign AI projects, as
demonstrated by NVIDIA’s partnerships in countries like France, Spain,
Switzerland, Ecuador, Japan, Vietnam, and Singapore.

Zero Trust Is 15 Years Old — Why Full Adoption Is Worth the Struggle

Effective ZT will not eliminate all breaches – there are simply too many ways

into a network – but it would certainly limit the effectiveness of stolen

credentials and inhibit lateral movement by intruders, and malicious activity

by insiders inside the enterprise network. “Here’s the part most people miss:

Zero Trust is just as important for reducing insider risk as it is for keeping

out external threats.,” comments Chad Cragle. “Zero Trust is just as important

for reducing insider risk as it is for keeping out external threats.” ...

Putting people first is good people management and good PR, but bad security.

It gives too much leeway to three basic human characteristics: a propensity to

trust on sight, a tendency to be lazy, and a deep rooted curiosity. We have a

natural tendency to trust first and ask questions later; to skirt security

controls when they are too intrusive and hinder our work, and we are naturally

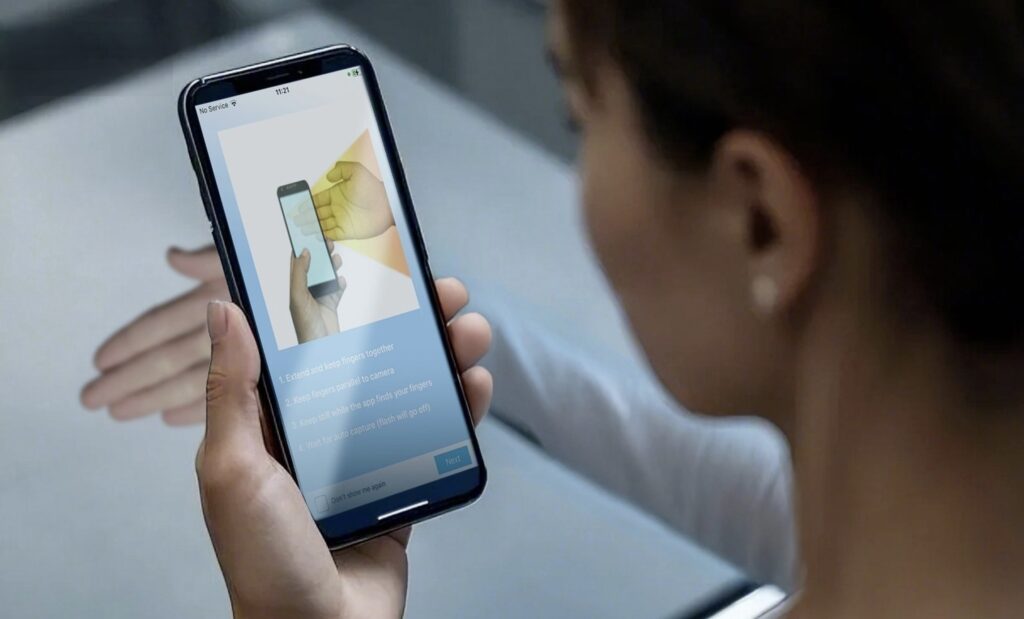

curious. ... Technology first is becoming more essential in the emerging world

of AI-enhanced deepfakes. We can no longer rely on people being able to

recognize people. We are easily fooled into believing this entity is the

entity we know and trust. ... Getting the technology ready for ZT is also

hard, partly because many applications were not built with ZT in mind. “Many

older programs just don’t play nice with modern security,” comments J Stephen

Kowski, “so businesses end up stuck between keeping things secure and not

slowing down the way they work.”

Crafting an Effective AI Strategy for Your Organization: A Comprehensive Approach

Without a deliberate strategy, AI initiatives might remain small pilot
projects that never scale, or they might stray from business needs. A
well-crafted AI strategy acts as a compass to guide AI investments and
projects. It helps answer critical questions upfront: Which problems are we
trying to solve with AI? How do these tie to our business KPIs? Do we have the
right data and infrastructure? By addressing these, the strategy ensures AI
adoption is purposeful rather than purely experimental. Crucially, the
strategy also weaves in ethical and regulatory considerations ... An AI Center
of Excellence (CoE) is a dedicated team or organizational unit that centralizes
AI expertise and resources to support the entire company’s AI initiatives.
Think of it as an in-house “AI SWAT team” that bridges the gap between
high-level strategy and the technical execution of AI projects. ... As
organizations deploy AI more widely, ethical, legal, and societal
responsibilities become non-negotiable.
Responsible AI is all about ensuring that systems are fair, transparent, safe,
and aligned with human values. ... Many AI models, especially deep learning
systems, are often criticized for being “black boxes”—making decisions that
are difficult to interpret. Explainable AI (XAI) is about creating methods and
tools to make these models transparent and their outputs understandable.

Building security that protects customers, not just auditors

The article argues that good engineering usually leads to strong security, and cautions against just going through the motions to meet compliance requirements. ... Sadly, threat actors don’t need to improve; most of the market is very far behind, and old-school attacks like phishing still work easily. One trend we’re seeing in the last few years is a strong focus on crypto attacks, and on crypto exchanges. Even these usually involve classic techniques. Another is “SMS abuse” attacks, where attackers exploit endpoints that trigger sending SMS messages, which they send to premium numbers they want to bump up. Many such attacks are only discovered when the bill from the SMS provider arrives. ... Current Security Information and Event Management (SIEM) vendors often offer stacks and pricing models that just don’t fit the sheer scale and speed of transactions. Sure, you can make them work, if you spend millions! ... If you just check boxes, you are not protecting your customers, you are just protecting your company from the auditor. Try to understand the rationale behind the control and implement it according to your company’s architecture. Think of it philosophically: would you be happy being a box-ticker or would you prefer to have impact? ... Your goal is to find a way to collaborate with your QSA; they can be true partners for driving positive change in the company.

Enterprise-Grade Data Ethics: How to Implement Privacy, Policy, and Architecture

Embedding ethics and privacy into daily business operations involves practical,
continuous steps integrated deeply into organizational processes. Core
recommendations include developing clear and understandable data policies and
making them accessible to all stakeholders, regularly training teams to maintain
updated awareness of ethical data standards, building privacy considerations
directly into system architecture from inception, and collaborating with legal
and technical teams on application programming interfaces (APIs) and data models
to incorporate explicit privacy rules. ... An enterprise architecture framework
creates fundamental support by outlining precise methods for data storage,
transfer, and access permissions. Organizations use new and emerging
technologies alongside other comprehensive tools to establish systematic
policies while implementing strong encryption and data masking approaches for
secure data management. ... Executive leaders who dedicate themselves to
ethical data handling create profound changes in corporate cultural values.
Organizations can demonstrate their strategic dedication to data ethics through
executive-level visibility of privacy and ethics system design oversight,
combined with employee training investments and performance accountability
systems.
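
As an illustration of what "data masking" can look like inside a data model, the sketch below masks an email address and replaces an identifier with a salted hash before a record leaves a trusted system. It is only one possible approach and is not described in the article: the field names (`email`, `customer_id`), the helper names and the salt handling are all assumptions, and a salted hash is pseudonymization rather than full anonymization.

```python
import hashlib


def mask_email(email: str) -> str:
    """Keep the first character and the domain; hide the rest of the local part."""
    local, sep, domain = email.partition("@")
    if not sep or not local:
        return "***"  # not a recognisable address, so reveal nothing
    return f"{local[0]}***@{domain}"


def pseudonymize(value: str, salt: str) -> str:
    """Replace a value with a stable, salted SHA-256 token (truncated for brevity)."""
    digest = hashlib.sha256((salt + value).encode("utf-8")).hexdigest()
    return digest[:16]


def mask_record(record: dict, salt: str) -> dict:
    """Return a copy of the record with the assumed sensitive fields masked."""
    masked = dict(record)  # shallow copy so the caller's record is untouched
    if "email" in masked:
        masked["email"] = mask_email(str(masked["email"]))
    if "customer_id" in masked:
        masked["customer_id"] = pseudonymize(str(masked["customer_id"]), salt)
    return masked


# Hypothetical record; in practice the salt would come from a secrets store.
original = {"customer_id": 42, "email": "alice@example.com", "plan": "pro"}
safe = mask_record(original, salt="demo-salt")
print(safe["email"], safe["plan"])
```

Because the token is deterministic for a given salt, masked records can still be joined on `customer_id` without exposing the raw value.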

CIOs are stressed — and more or less loving it

Not surprisingly, AI has upped the ante for stress — or in Richard’s case,
concern over the quick adoption of AI tools by end users who may or may not know
what to do with them. “I would say that’s probably the thing I worry about the
most. I don’t know that it stresses me out,” but he constantly thinks about what
tools employees are using and how they are using them. “We don’t want to suck
away all the productivity gains by limiting access to great tools, but at the
same time, we don’t want to let people run wild with [personally identifiable
information] or data” by tools not managed by IT. ... Even with all the
pressures on CIOs today and the need to wear many hats, most say the job is
still worth it. Pressure, it seems, is not always a bad thing. “I’m still in it,
so it must be worth it,” Grinnell says. “CIOs have a certain personality; we
know you’re not getting into the job and it’ll be smooth sailing. We have to
solve a challenge — whatever the challenge is.” … “It’s tiring, it’s
stressful, but I get up energized every day to go tackle that. That’s who I am.”
Driscoll says she likes pressure and finds her role “worth it more now than ever
because the job of CIO and CTO has evolved to where the expectation is you will
be responsible for the technology, but also be a core partner in where the
business is going. For me, that ability to help drive business outcomes, and
shape wherever we go as a company makes my job more exciting and worth it.”
