The AI-Powered IoT Revolution: Are You Ready?

AI not only reduces the cost and latency of these operations but also provides actionable intelligence, enabling smarter decisions that enhance business efficiency by preventing downtimes, minimizing losses, improving sales, and unlocking a range of benefits tailored to specific use cases. Building on this synergy, AI on Edge—where AI processes run directly on edge devices such as IoT sensors, cameras, and smartphones rather than relying solely on cloud computing—will see significant adoption by 2025. By processing data locally, edge AI enables real-time decision-making, eliminating delays caused by data transmission to and from the cloud. This capability will transform applications like autonomous vehicles, industrial automation, and healthcare devices, where fast, reliable responses are mission-critical. Moreover, AI on Edge enhances privacy and security by keeping sensitive data on the device, reduces cloud costs and bandwidth usage, and supports offline functionality in remote or connectivity-limited environments. These advantages make it an attractive option for organizations seeking to push the boundaries of innovation while delivering superior user experiences and operational efficiency.
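
The bandwidth and latency argument above can be illustrated with a small sketch. It assumes a hypothetical sensor that checks each reading on the device against a rolling baseline and forwards only outliers upstream. The class name, window size, and z-score threshold are illustrative assumptions, not taken from the article, and a production edge model would likely be far more sophisticated.

```python
from collections import deque
from statistics import mean, pstdev


class EdgeAnomalyFilter:
    """Hypothetical on-device filter: keeps a rolling baseline and flags outliers locally."""

    def __init__(self, window: int = 20, z_threshold: float = 3.0) -> None:
        self.readings = deque(maxlen=window)
        self.z_threshold = z_threshold

    def process(self, value: float) -> bool:
        """Return True if the reading should be forwarded upstream as an alert."""
        is_anomaly = False
        if len(self.readings) >= 5:  # wait for a small baseline before judging
            mu = mean(self.readings)
            sigma = pstdev(self.readings)
            if sigma > 0 and abs(value - mu) / sigma > self.z_threshold:
                is_anomaly = True
        if not is_anomaly:
            # Only normal readings update the baseline, so a spike does not skew it.
            self.readings.append(value)
        return is_anomaly


def forwarded_readings(values):
    """Run the filter over a stream and return only the readings that would leave the device."""
    edge_filter = EdgeAnomalyFilter()
    return [v for v in values if edge_filter.process(v)]


temperatures = [20.0, 20.1, 19.9, 20.2, 19.8, 20.0, 20.1, 35.0, 20.0, 19.9]
print(forwarded_readings(temperatures))
```

In this toy stream only the single spike would be sent to the cloud; the other nine readings are handled and discarded on the device, which is the cost and latency saving the excerpt describes.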

Key strategies to enhance cyber resilience

To bolster resilience, consider developing stakeholder-specific playbooks, Wyatt says. Different teams play different roles in incident response, from detecting risk and deploying key controls to maintaining compliance, recovery, and business continuity. Expect that each stakeholder group will have its own requirements and set of KPIs to meet, she says. “For example, the security team may have different concerns than the IT operations team. As a result, organizations should draft cyber resilience playbooks for each set of stakeholders that provide very specific guidance and ROI benefits for each group.” ... Cyber resilience is as much about the ability to recover from a major security incident as it is about proactively preparing for, preventing, detecting, and remediating it. That means having a formal disaster recovery plan, doing regular offsite backups of all critical systems, and testing both the plan and the recovery process on a frequent basis. ... Boards have become very focused on managing risk and have become increasingly fluent in cyber risk. But many boards are surprised that when a crisis occurs, broader operational resilience is not part of these discussions, according to Wyatt. Bring your board along by having external experts walk through previous events and break down the various areas of impact.

Smarter devops: How to avoid deployment horrors

Finding security issues post-deployment is a major risk, and many devops teams shift security practices left by instituting devops security non-negotiables. These are a mix of policies, controls, automations, and tools, but the most important is ensuring that security is a top-of-mind responsibility for developers. ... “Integrating security and quality controls as early as possible in the software development lifecycle is absolutely necessary for a functioning modern devops practice,” says Christopher Hendrich, associate CTO of SADA. “Creating a developer platform with automation, AI-powered services, and clear feedback on why something is deemed insecure and how to fix it helps the developer to focus on developing while simultaneously strengthening the security mindset.” ... “Software development is a complex process that gets increasingly challenging as the software’s functionality changes or ages over time,” says Melissa McKay, head of developer relations at JFrog. “Implementing a multilayered, end-to-end approach has become essential to ensure security and quality are prioritized from initial package curation and coding to runtime monitoring.”

How Do We Build Ransomware Resilience Beyond Just Backups?

While email filtering tools are essential, it’s unrealistic to expect them to block every malicious message. As such, another important step is educating your end users on identifying phishing emails and other suspicious content that makes it through the filters. User education should be an ongoing effort, not a one-time initiative. Regular training sessions help reinforce best practices and keep security in focus. To complement training, consider using phishing attack simulators. Several vendors offer tools that generate harmless, realistic-looking phishing messages and send them to your users. Microsoft 365 even includes a phishing simulation tool. ... Limiting user permissions is vital because ransomware operates with the permissions of the user who triggers the attack. As such, users should only have access to the resources they need to perform their jobs: no more, no less. If a user doesn’t have access to a specific resource, the ransomware won’t be able to encrypt it. Moreover, consider isolating high-value data on storage systems that require additional authentication. Doing so reduces exposure if ransomware spreads.
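
The permissions point can be made concrete with a minimal sketch. It assumes a hypothetical table of which file shares each account can write to, then computes what ransomware running as that account could reach. The share names, account name, and the `blast_radius` helper are all illustrative assumptions rather than anything from the article or a real product.

```python
# Hypothetical inventory of file shares in an organization.
ALL_SHARES = {"hr", "finance", "engineering", "backups"}


def blast_radius(user: str, write_access: dict) -> tuple:
    """Return the shares ransomware could encrypt when run as `user`,
    plus the fraction of all shares that represents."""
    reachable = set(write_access.get(user, set()))
    return reachable, len(reachable) / len(ALL_SHARES)


# Same account, two permission models (both hypothetical).
broad_access = {"alice": {"hr", "finance", "engineering", "backups"}}
least_privilege = {"alice": {"hr"}}

print(blast_radius("alice", broad_access))     # every share is exposed
print(blast_radius("alice", least_privilege))  # only the share alice needs
```

Under these assumptions, trimming the account to the one share it needs cuts the exposed fraction from 1.0 to 0.25, and an account with no grants at all exposes nothing.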

Azure Data Factory Bugs Expose Cloud Infrastructure

The Airflow instance's use of default, unchangeable configurations combined with the cluster admin role's attachment to the Airflow runner "caused a security issue" that could be manipulated "to control the Airflow cluster and related infrastructure," the researchers explained. If attackers were able to breach the cluster, they could also manipulate Azure's internal Geneva service, allowing them "to potentially tamper with log data or access other sensitive Azure resources," Unit 42 AI and security research manager Ofir Balassiano and senior security researcher David Orlovsky wrote in the post. Overall, the flaws highlight the importance of managing service permissions and monitoring the operations of critical third-party services within a cloud environment to prevent unauthorized access to a cluster. ... Attackers have two ways to gain access to and tamper with DAG files. The first is to gain write permissions to the storage account containing DAG files, either by leveraging a principal account with write permissions or by using a shared access signature (SAS) token, which grants temporary and limited access to a DAG file. In this scenario, once a DAG file is tampered with, "it lies dormant until the DAG files are imported by the victim," the researchers explained. The second way is to gain access to a Git repository using leaked credentials or a misconfigured repository.

Whatever happened to the three-year IT roadmap?

“IT roadmaps are now shorter, typically not exceeding two years, due to the rapid pace of technological change,” he says. “This allows for more flexibility and adaptability in IT planning.” Kellie Romack, chief digital information officer of ServiceNow, is also shortening her horizon to align with the two- or three-year timeframe that is the norm for her company. Doing so keeps her focused on supporting the company’s overall future strategy but with enough flexibility to adjust along the journey. “That timeframe is a sweet spot that allows us to set a ‘dream big’ strategy with room to be agile, so we can deliver and push the limits of what’s possible,” she says. “The pace of technological change today is faster than it’s ever been, and if IT leaders aren’t looking around the corner now, it’s possible they’ll fall behind and never catch up.” ... “A roadmap is still a useful tool to provide that north star, the objectives and the goals you’re trying to achieve, and some sense of how you’ll get to those goals,” McHugh says. Without that, McHugh says CIOs won’t consistently deliver what’s needed when it’s needed for their organizations, nor will they get IT to an optimal advanced state. “If you don’t have a goal or an outcome, you’re going to go somewhere, we can promise you that, but you’re not going to end up in a specific location,” she adds.

Innovations in Machine Identity Management for the Cloud

Non-human identities are critical components within the digital landscape. They enable machine-to-machine communications, providing an array of automated services that underpin today’s digital operations. However, their growing prevalence means they are also becoming prime targets for cyber threats. Are existing cybersecurity strategies equipped to address this issue? Acting as agile guardians, NHI management platforms offer promising solutions, securing both the identities and their secrets from potential threats and vulnerabilities. By placing equal emphasis on the management of both human and non-human identities, businesses can create a comprehensive cybersecurity strategy that matches the complexity and diversity of today’s digital threats. ... When unsecured, NHIs become hotbeds for cybercriminals who manipulate these identities to procure unauthorized access to sensitive data and systems. For companies regularly transacting in consumer data (like in healthcare or finance), the unauthorized access and sharing of sensitive data can lead to hefty penalties due to non-compliant data management practices. An effective NHI management strategy acts as a pivotal control over cloud security.

From Crisis to Control: Establishing a Resilient Incident Response Framework for Deployed AI Models

An effective incident response framework for frontier AI companies should be comprehensive and adaptive, allowing quick and decisive responses to emerging threats. Researchers at the Institute for AI Policy and Strategy (IAPS) have proposed a post-deployment response framework, along with a toolkit of specific incident responses. The proposed framework consists of four stages: prepare, monitor and analyze, execute, and recovery and follow up. ... Developers have a variety of actions available to them to contain and mitigate the harms of incidents caused by advanced AI models. These tools offer a variety of response mechanisms that can be executed individually or in combination with one another, allowing developers to tailor specific responses based on the incident's scope and severity. ... Frontier AI companies have recently provided more transparency into their internal safety policies, including Responsible Scaling Policies (RSPs) published by Anthropic, Google DeepMind, and OpenAI. However, all three RSPs lack clear, detailed, and actionable plans for responding to post-deployment incidents.

We’re Extremely Focused on Delivering Value Sustainably — NIH CDO

Speaking of challenges in her role as the CDO, Ramirez highlights managing a rapidly growing data portfolio. She stresses the importance of fostering partnerships and ensuring the platform’s accessibility to those aiming to leverage its capabilities. One of the central hurdles has been effectively communicating the portfolio’s offerings and predicting data availability for research purposes. She describes the critical need to align funding and partnerships to support delivery timelines of 12 to 24 months, a task that demanded strong leadership from the coordinating center. This dual role of ensuring readiness and delivery has been both a challenge and a success. Ramirez shares that the team has grown more adept at framing research data as a product of their system, ready to meet the needs of collaborators. She also expresses enthusiasm for working with partners to demonstrate the platform’s benefits and efficiencies in advancing research objectives. Discussing AI literacy and upskilling initiatives in the organization, Ramirez mentions building a strong sense of community among data professionals. She highlights efforts to establish a community of practice that brings together individuals working in their federal coordinating center and awardees who specialize in data science and systems.

5 Questions Your Data Protection Vendor Hopes You Don’t Ask

Data protection vendors often rely on high-level analysis to detect unusual

activity in backups or snapshots. This includes threshold analysis,

identifying unusual file changes, or detecting changes in compression rates

that may suggest ransomware encryption. These methods are essentially guesses

prone to false positives. During a ransomware attack, details matter. ...

Organizations snapshot or back up data regularly, ranging from hourly to daily

intervals. When an attack occurs, restoring a snapshot or backup overwrites

production data—some of which may have been corrupted by ransomware—with clean

data. If only 20% of the data in the backup has been manipulated by bad

actors, recovering the full backup or snapshot will result in overwriting 80%

of data that did not need restoration. ... Cybercriminals understand that

databases are the backbone of many businesses, making them prime targets for

extortion. By corrupting these databases, they can pressure organizations into

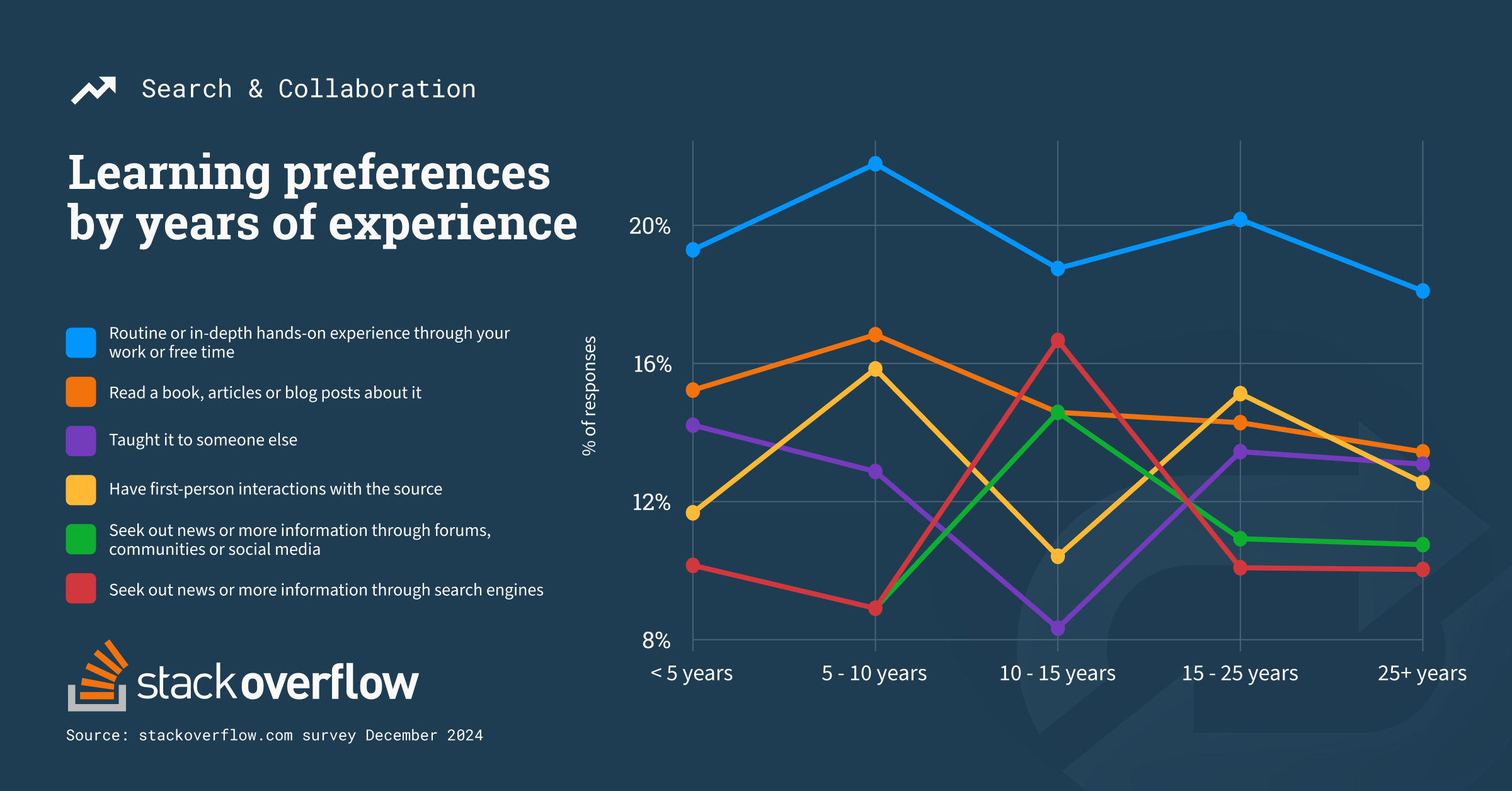

paying ransoms. ... AI is now a mainstream topic, but understanding how

an AI engine is trained is critical to evaluating its effectiveness. When

dealing with ransomware, it's important that the AI is trained on real

ransomware variants and how they impact data.
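
To show why the compression-rate heuristic mentioned earlier in this excerpt amounts to a guess, here is a minimal sketch using Python's standard `zlib` module. It assumes that encrypted data behaves like random bytes, which is simulated here with `os.urandom`. The function names and the 0.95 threshold are illustrative assumptions and do not describe any vendor's actual detection logic.

```python
import os
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size; values near 1.0 mean the data barely compresses."""
    if not data:
        return 0.0
    return len(zlib.compress(data)) / len(data)


def looks_encrypted(data: bytes, threshold: float = 0.95) -> bool:
    """Crude heuristic: flag data that hardly compresses at all."""
    return compression_ratio(data) >= threshold


# A repetitive business document compresses very well.
plain_document = b"quarterly report: revenue up, costs down\n" * 500

# Stand-in for a ransomware-encrypted file: random bytes of similar size.
encrypted_like = os.urandom(20_000)

print(looks_encrypted(plain_document))  # not flagged
print(looks_encrypted(encrypted_like))  # flagged
```

The weakness is that legitimately compressed content such as archives, images, or video also scores close to 1.0, so a check like this would tend to flag such files as well. That is one way the false positives described above can arise.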

Quote for the day:

"The essence of leadership is the capacity to build and develop the self-esteem of the workers." -- Irwin Federman