AI stack attack: Navigating the generative tech maze

Successful integration often depends on having a solid foundation of data and processing capabilities. “Do you have a real-time system? Do you have stream processing? Do you have batch processing capabilities?” asks Intuit’s Srivastava. These underlying systems form the backbone on which advanced AI capabilities can be built. For many organizations, the challenge lies in connecting AI systems with diverse and often siloed data sources. Illumex has focused on this problem, developing solutions that work with existing data infrastructure. “We can actually connect to the data where it is. We don’t need them to move that data,” explains Tokarev Sela. This approach lets enterprises leverage their existing data assets without extensive restructuring. Integration challenges extend beyond data connectivity. ... Security integration is another crucial consideration. Because AI systems often handle sensitive data and make important decisions, they must be incorporated into existing security frameworks and comply with organizational policies and regulatory requirements.

How to Architect Software for a Greener Future

Firstly, there’s time shifting: moving a workload to a greener time. You can use burstable or flexible instances to achieve this. It’s essentially a sophisticated scheduling problem, akin to reading a forecast to determine when the grid will be greenest or, conversely, how to avoid the dirtiest peak periods. There are various ways to facilitate this on the operational side. Naturally, this strategy should apply primarily to non-demanding workloads. ... Another carbon-aware action you can take is location shifting: moving your workload to a greener location. This approach isn’t always feasible, but it works well when network costs are low and privacy considerations allow it. ... Resiliency is another significant factor. Many green practices, like autoscaling, improve software resilience by adapting to variable demand. Carbon-aware actions also help future-proof your software for a post-energy-transition world, where carbon caps and budgets may become commonplace. Establishing these mechanisms now prepares your software for future regulatory and environmental challenges.
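
Both carbon-aware actions reduce to simple selection problems once you have carbon-intensity data. The sketch below assumes you already receive an hourly carbon-intensity forecast (in gCO2eq/kWh) and a per-region intensity figure from some provider; the function names, the sample numbers and the `allowed` set (standing in for network-cost and privacy constraints) are all hypothetical.

```python
from typing import Collection, Mapping, Sequence


def greenest_window(forecast: Sequence[float], duration: int) -> int:
    """Time shifting: return the start hour of the contiguous `duration`-hour
    window with the lowest total forecast carbon intensity."""
    if not 1 <= duration <= len(forecast):
        raise ValueError("duration must be between 1 and len(forecast)")
    window_total = sum(forecast[:duration])
    best_start, best_total = 0, window_total
    # Slide the window one hour at a time: add the hour entering, drop the hour leaving.
    for start in range(1, len(forecast) - duration + 1):
        window_total += forecast[start + duration - 1] - forecast[start - 1]
        if window_total < best_total:
            best_start, best_total = start, window_total
    return best_start


def greenest_region(intensity: Mapping[str, float], allowed: Collection[str]) -> str:
    """Location shifting: pick the lowest-intensity region among those that
    network-cost and privacy constraints permit."""
    candidates = {region: value for region, value in intensity.items() if region in allowed}
    if not candidates:
        raise ValueError("no permitted region")
    return min(candidates, key=candidates.__getitem__)


# Hypothetical hourly forecast in gCO2eq/kWh for a deferrable 2-hour batch job.
forecast = [420, 380, 300, 210, 190, 250, 400]
print(greenest_window(forecast, 2))  # 3 (hours 3 and 4, total 400)
print(greenest_region({"region-a": 450.0, "region-b": 120.0, "region-c": 60.0},
                      {"region-a", "region-b"}))  # region-b
```

Here `region-c` is the greenest overall but is excluded, mirroring the point that location shifting only works where costs and privacy allow it.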

Evaluating board maturity: essential steps for advanced governance

Most boards lack a firm grasp of fundamental governance principles. I'd go so far as to say that 8 or 9 out of 10 boards could be described this way. Your average board director is intelligent and respected within their community, but they often don't receive meaningful governance training. Instead, they follow established board norms without questioning them, which can lead to significant governance failures. Consider Enron, Wells Fargo, Volkswagen AG, Theranos and, more recently, Boeing: all had boards filled with recognized experts. However, inadequate oversight caused or allowed them to make serious and damaging errors. This is most starkly illustrated by Barney Frank, co-author of the Dodd-Frank Act (passed following the 2008 financial crisis) and a board member of Signature Bank when it collapsed. Having brilliant board members doesn't guarantee effective governance. The point is that, for different reasons, consultants and experts can 'misread' where a board stands. Frankly, this is most often due to laziness, but sometimes it is simply a lack of clarity about what to look for.

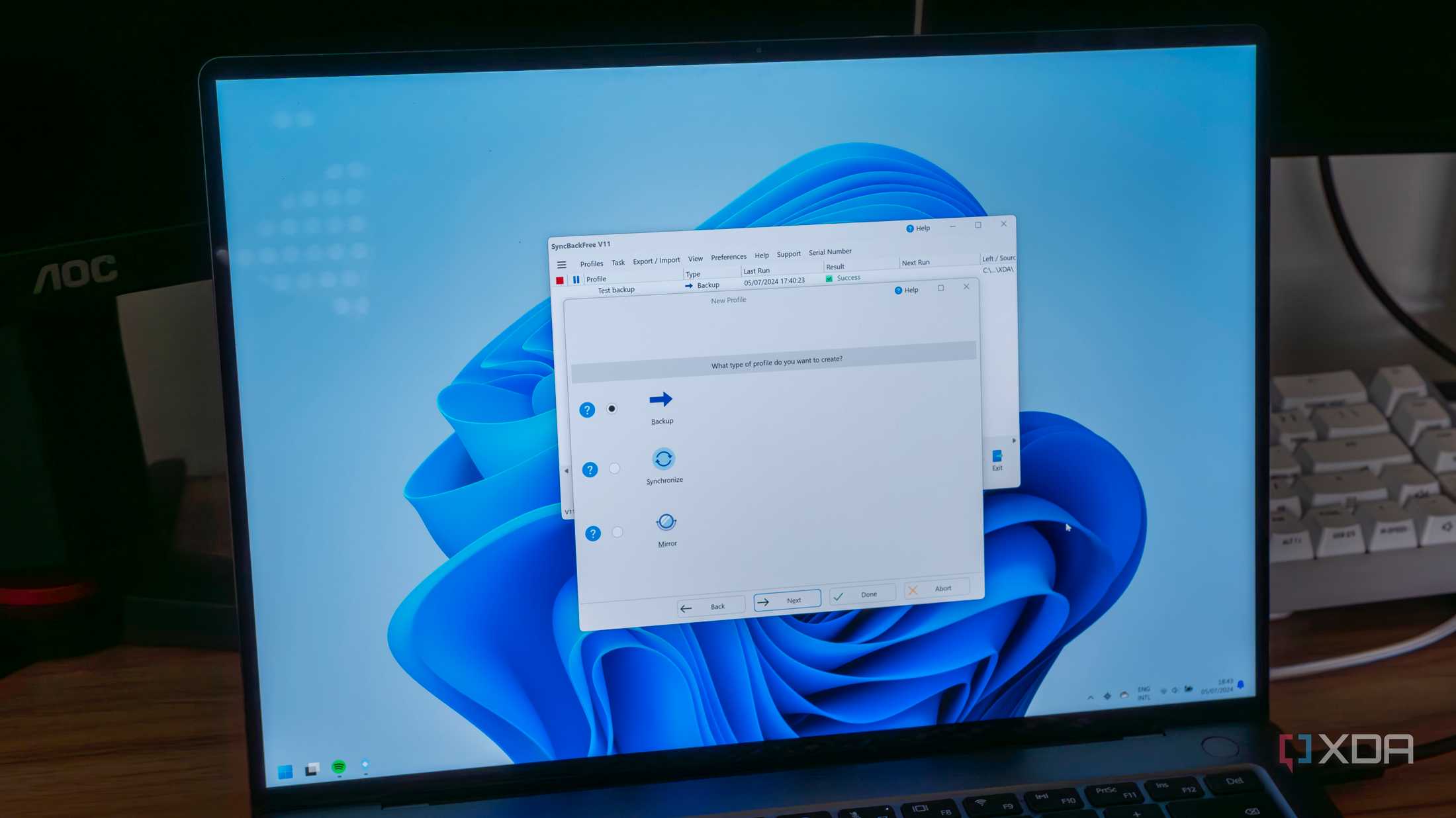

Mastering Serverless Debugging

Feature flags allow you to enable or disable parts of your application without deploying new code. This can be invaluable for isolating issues in a live environment. By toggling specific features on or off, you can narrow down the problematic areas and observe the application’s behavior under different configurations. Implementing feature flags involves adding conditional checks to your code that control whether specific features execute, based on each flag’s status. Monitoring the application under different flag settings helps identify the source of bugs and lets you test fixes without affecting the entire user base. ... Logging is one of the most common and essential tools for debugging serverless applications. I have written and spoken a lot about logging in the past. By logging all relevant data points, including the inputs and outputs of your functions, you can trace the flow of execution and identify where things go wrong. However, excessive logging can increase costs, as serverless billing is often based on execution time and resources used. It’s important to strike a balance between sufficient logging and cost efficiency.
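
A minimal sketch combining both techniques in one Python function handler. It assumes flags are supplied as environment variables (one common approach; dedicated flag services are another) and that verbosity is controlled by a `LOG_LEVEL` variable. The `FEATURE_<NAME>` naming convention, the `handler(event, context)` shape and the discount logic are hypothetical.

```python
import json
import logging
import os

logging.basicConfig(format="%(levelname)s %(name)s %(message)s")
logger = logging.getLogger("orders")  # hypothetical function name


def flag_enabled(name: str) -> bool:
    """Read a boolean flag from a hypothetical FEATURE_<NAME> environment variable."""
    value = os.environ.get(f"FEATURE_{name.upper()}", "false")
    return value.strip().lower() in {"1", "true", "yes", "on"}


def apply_discount(total: float, use_new_rules: bool) -> float:
    # Hypothetical business logic: the flag gates the new code path.
    return round(total * 0.9, 2) if use_new_rules else total


def handler(event: dict, context: object = None) -> dict:
    # LOG_LEVEL lets you switch on verbose logs while debugging, then switch them off
    # again to keep log volume (and cost) down, without changing code.
    logger.setLevel(os.environ.get("LOG_LEVEL", "INFO").upper())
    use_new_rules = flag_enabled("new_discount")
    logger.debug("input: %s", json.dumps(event))  # full payload only at DEBUG
    response = {
        "total": apply_discount(float(event.get("total", 0)), use_new_rules),
        "new_discount": use_new_rules,
    }
    logger.debug("output: %s", json.dumps(response))
    logger.info("request handled (new_discount=%s)", use_new_rules)
    return response
```

An environment variable flips the flag for every invocation of that function; testing a fix on only part of the user base usually needs per-user or percentage targeting, which is what dedicated flag services add.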

Implementing Data Fabric: 7 Key Steps

As businesses generate and collect vast amounts of data from diverse sources, including cloud services, mobile applications, and IoT devices, managing, processing, and leveraging this data efficiently becomes increasingly critical. Data fabric has emerged as a holistic approach to these challenges, providing a unified architecture that integrates data management processes across environments. The framework enables seamless data access, sharing, and analysis across the organization regardless of where the data resides, whether on-premises or in multi-cloud environments. The significance of data fabric lies in its ability to break down silos and foster a collaborative environment where information is easily accessible and actionable insights can be derived. By implementing a robust data fabric strategy, businesses can enhance their operational efficiency, drive innovation, and create personalized customer experiences. Implementing a data fabric strategy involves a comprehensive approach that integrates various data management and processing disciplines across an organization.
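
One building block behind "access irrespective of where the data resides" is a catalog that maps logical dataset names to readers that query each source in place. The sketch below is a deliberately small, hypothetical illustration of that idea only: `DataCatalog`, the location labels and the in-memory lambdas (standing in for real connectors to, say, an on-premises database or a cloud store) are assumptions, not part of any product.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Iterable, List, Tuple

Row = Dict[str, Any]
Reader = Callable[[], Iterable[Row]]


@dataclass
class DataCatalog:
    """Hypothetical unified access layer: logical names -> (location, reader).
    Readers fetch from the source where it lives instead of copying it centrally."""
    _sources: Dict[str, Tuple[str, Reader]] = field(default_factory=dict)

    def register(self, name: str, location: str, reader: Reader) -> None:
        self._sources[name] = (location, reader)

    def location_of(self, name: str) -> str:
        return self._sources[name][0]

    def read(self, name: str, where: Callable[[Row], bool] = lambda row: True) -> List[Row]:
        _, reader = self._sources[name]
        return [row for row in reader() if where(row)]


# In-memory stand-ins for real connectors.
catalog = DataCatalog()
catalog.register("orders", "on-prem", lambda: [{"id": 1, "amount": 40}, {"id": 2, "amount": 75}])
catalog.register("customers", "cloud-b", lambda: [{"id": 7, "region": "EU"}])

print(catalog.read("orders", where=lambda row: row["amount"] > 50))  # [{'id': 2, 'amount': 75}]
print(catalog.location_of("customers"))  # cloud-b
```

Consumers ask for `"orders"` by name and never need to know which environment serves it, which is the silo-breaking property the paragraph describes; a real fabric would add metadata, governance and query push-down on top.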

Empowering Self-Service Users in the Digital Age

Ultimately, portals must strike a balance between freedom and control, which can be achieved through flexible role-based access control. Granting end users the freedom to deploy within a secure framework of predefined permissions creates an environment that is ripe for innovation yet robustly protected. This means users can explore, experiment and innovate without worrying about security boundaries or unnecessary hurdles. But of course, as with any project, organizations can’t afford to build something and consider the job done. Measuring success is an ongoing effort. Portal usage should be tracked: metrics such as how often the portal is accessed, who uses what and which service catalogs are used, along with other relevant data, will help pinpoint areas that need improvement. It is also important to remember that this is a collaborative effort between the platform team and end users. And in technology, there is always room for improvement. For instance, recent advances in AI/ML could soon be leveraged to analyze previously inaccessible datasets and enable smarter, faster decision-making.
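
Role-based access control and usage tracking fit together naturally: every deployment request is checked against predefined permissions and recorded for later review. A minimal sketch, assuming a simple in-memory role-to-permission map; the role names, the `deploy:<env>` permission format and the `Portal` class are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Dict, Set

# Hypothetical role -> permission mapping maintained by the platform team.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "viewer": {"catalog:browse"},
    "developer": {"catalog:browse", "deploy:dev"},
    "release-manager": {"catalog:browse", "deploy:dev", "deploy:prod"},
}


@dataclass
class Portal:
    user_roles: Dict[str, Set[str]]
    usage: Counter = field(default_factory=Counter)

    def is_allowed(self, user: str, permission: str) -> bool:
        roles = self.user_roles.get(user, set())
        return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)

    def deploy(self, user: str, item: str, env: str) -> bool:
        allowed = self.is_allowed(user, f"deploy:{env}")
        # Record every request so usage (who uses what, which catalog items) can be reviewed.
        self.usage[(user, item, "allowed" if allowed else "denied")] += 1
        return allowed


portal = Portal({"alice": {"developer"}, "bob": {"release-manager"}})
print(portal.deploy("alice", "postgres", "dev"))   # True
print(portal.deploy("alice", "postgres", "prod"))  # False
print(portal.deploy("bob", "postgres", "prod"))    # True
print(portal.usage[("alice", "postgres", "denied")])  # 1
```

Users deploy freely within their permissions, while denied requests show up in the same usage counter, which is one signal of where roles or the catalog may need adjusting.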

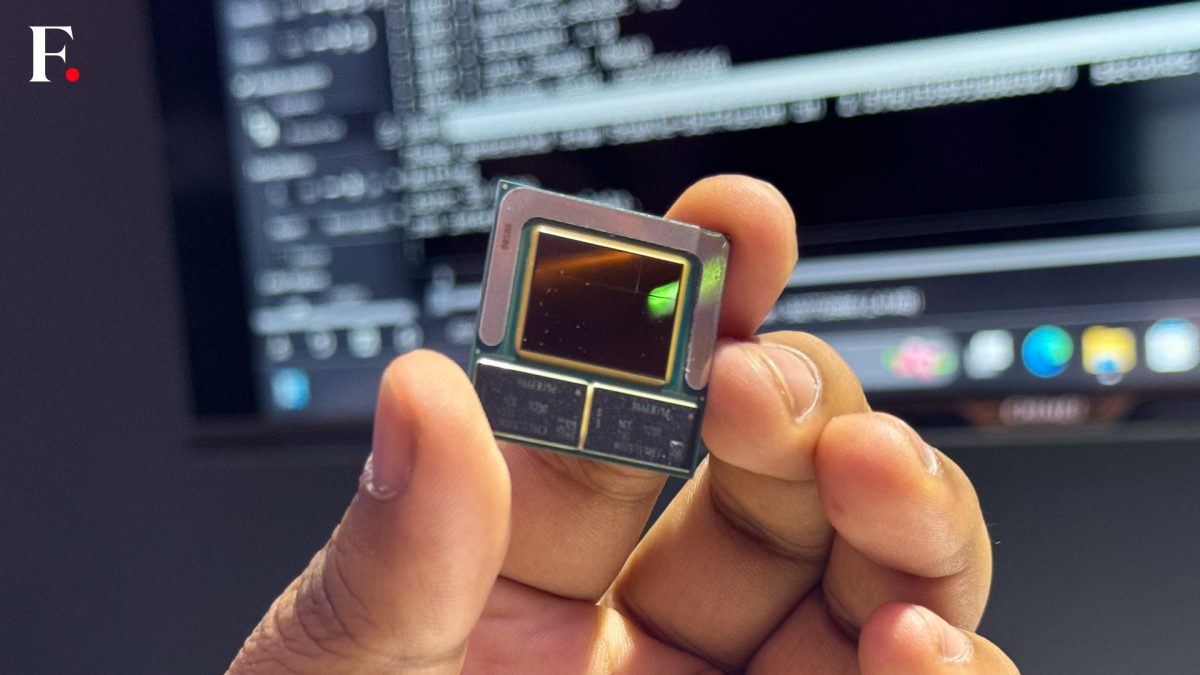

Desperate for power, AI hosts turn to nuclear industry

Rather than adding new green energy to meet AI’s power demands, tech companies are seeking power from existing electricity resources. That could raise prices for other customers and hold back emission-cutting goals, according to The Wall Street Journal and other sources. Sources cited by the WSJ say the owners of about one-third of US nuclear power plants are in talks with tech companies to provide electricity to new data centers needed to meet the demands of an artificial-intelligence boom. ... “The power companies are having a real problem meeting the demands now,” Gold said. “To build new plants, you’ve got to go through all kinds of hoops. That’s why there’s a power plant shortage now in the country. When we get a really hot day in this country, you see brownouts.” The available energy could go to the highest bidder. Ironically, though, the bill for that power will be borne by AI users, not its creators and providers. “Yeah, [AWS] is paying a billion dollars a year in electrical bills, but their customers are paying them $2 billion a year. That’s how commerce works,” Gold said.

Fake network traffic is on the rise — here’s how to counter it

“Attempting to homogenize the bot world and the potential threat it poses is a dangerous prospect. The fact is, it is not that simple, and cyber professionals must understand the issue in the context of their own goals...” ... “Cyber professionals need to understand the bot ecosystem and the resulting threats in order to protect their organizations from direct network exploitation, indirect threat to the product through algorithm manipulation, and a poor user experience, and the threat of users being targeted on their platform,” Cooke says. “As well as [understanding] direct security threats from malicious actors, cyber professionals need to understand the impact on day-to-day issues like advertising and network management from bot profiles as a whole,” she adds. “So cyber professionals must ensure that the problem is tackled holistically, protecting their networks, data and their users from this increasingly sophisticated threat. Measures to detect and prevent malicious bot activity must be built into new releases, and cyber professionals should act as educational evangelists for users to help them help themselves with a strong awareness of the trademarks of fake traffic and malicious profiles.”

Researchers reveal flaws in AI agent benchmarking

Because calling the models underlying most AI agents repeatedly can increase accuracy, researchers can be tempted to build extremely expensive agents so they can claim the top spot in accuracy. But the paper describes three simple baseline agents, developed by the authors, that outperform many of the complex architectures at much lower cost. ... Two factors determine the total cost of running an agent: the one-time costs involved in optimizing the agent for a task, and the variable costs incurred each time it is run. ... Researchers and model developers have different benchmarking needs from downstream developers who are choosing an AI to use in their applications. Model developers and researchers don’t usually consider cost during their evaluations, whereas for downstream developers, cost is a key factor. “There are several hurdles to cost evaluation,” the paper noted. “Different providers can charge different amounts for the same model, the cost of an API call might change overnight, and cost might vary based on model developer decisions, such as whether bulk API calls are charged differently.”
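
The two cost factors suggest writing total cost as the one-time optimization cost plus the per-run cost times the number of runs. A minimal sketch under that assumption, using integer cents to avoid floating-point rounding; the agents and prices are hypothetical, and the model ignores the provider-to-provider and overnight price changes the paper lists as hurdles.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentCost:
    name: str
    fixed_cents: int    # one-time cost of optimizing the agent for the task
    per_run_cents: int  # variable cost incurred on every run

    def total(self, runs: int) -> int:
        return self.fixed_cents + self.per_run_cents * runs


def break_even_runs(a: AgentCost, b: AgentCost) -> Optional[int]:
    """Smallest number of runs at which agent `a` costs no more than agent `b`,
    or None if `a` never catches up."""
    fixed_gap = a.fixed_cents - b.fixed_cents
    if fixed_gap <= 0:
        return 0  # a is already no more expensive before any run
    saving_per_run = b.per_run_cents - a.per_run_cents
    if saving_per_run <= 0:
        return None  # a costs more up front and no less per run
    return -(-fixed_gap // saving_per_run)  # integer ceiling division


optimized = AgentCost("optimized", fixed_cents=5000, per_run_cents=1)  # hypothetical
baseline = AgentCost("baseline", fixed_cents=0, per_run_cents=5)       # hypothetical
print(optimized.total(100), baseline.total(100))  # 5100 500
print(break_even_runs(optimized, baseline))       # 1250
```

An expensive one-time optimization only pays off for a downstream developer who expects to run the agent more than the break-even number of times, which is why the same agent can rank differently for researchers and for application builders.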

10 ways to prevent shadow AI disaster

Shadow AI is practically inevitable, says Arun Chandrasekaran, a distinguished vice president analyst at research firm Gartner. Workers are curious about AI tools, seeing them as a way to offload busywork and boost productivity. Some want to master their use, seeing that as a way to avoid being displaced by the technology. Others became comfortable with AI for personal tasks and now want the technology on the job. ... Shadow AI could cause disruption within the workforce, he says, as workers who are surreptitiously using AI could gain an unfair advantage over employees who have not brought in such tools. “It is not a dominant trend yet, but it is a concern we hear in our discussions [with organizational leaders],” Chandrasekaran says. Shadow AI could introduce legal issues, too. ... “There has to be more awareness across the organization about the risks of AI, and CIOs need to be more proactive about explaining the risks and spreading awareness about them across the organization,” says Sreekanth Menon, global leader for AI/ML services at Genpact, a global professional services and solutions firm.

Quote for the day:

“In matters of principle, stand like a rock; in matters of taste, swim with the current.” -- Thomas Jefferson
