Data is choking AI. Here’s how to break free.

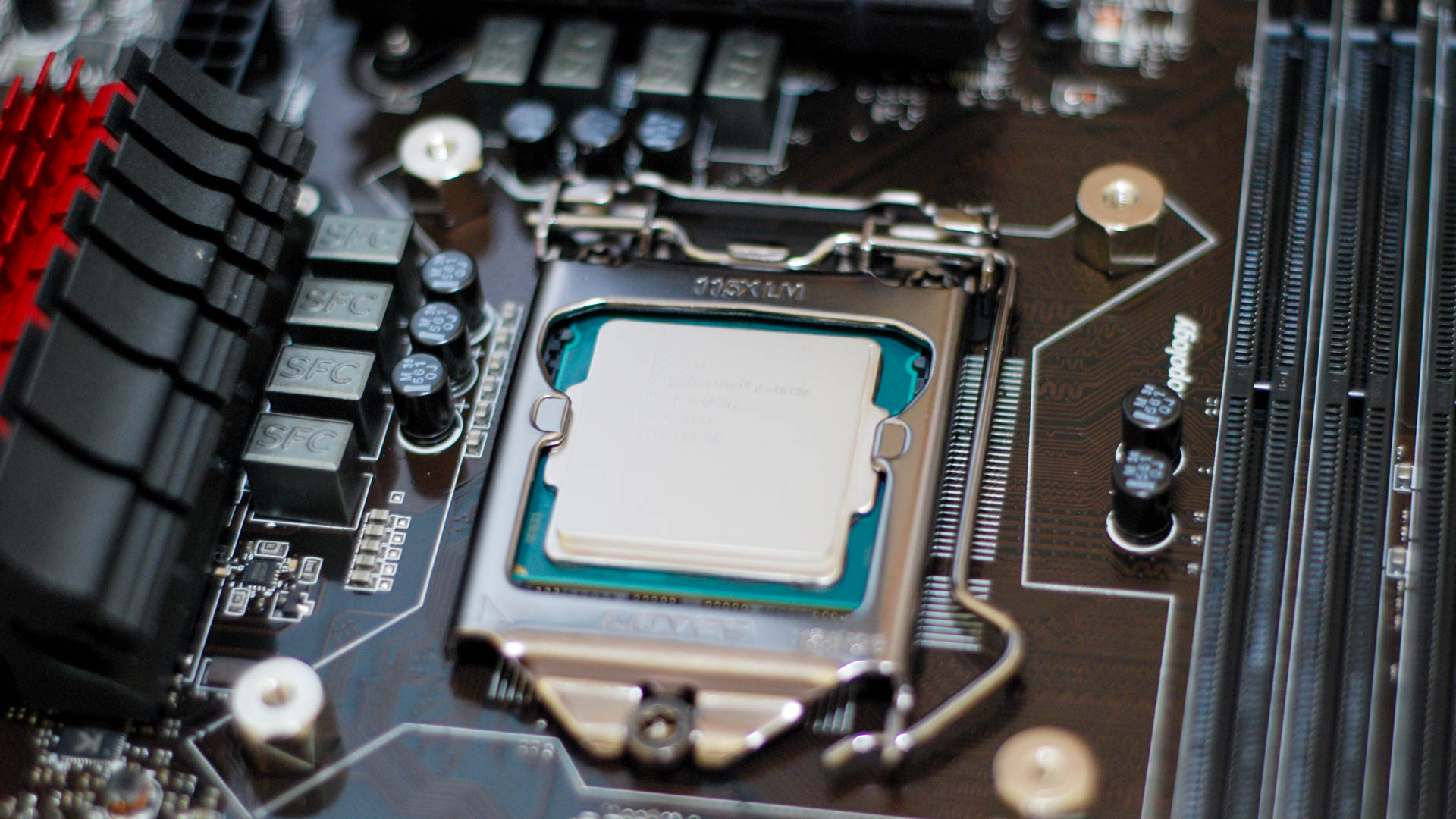

As enterprises deepen their embrace of AI and other data-driven, high-performance computing, it’s critical to ensure that performance and value are not starved by underperforming processing, storage and networking. Here are key considerations to keep in mind. Compute: when developing and deploying AI, it’s crucial to look at computational requirements for the entire data lifecycle, starting with data prep and processing (getting the data ready for AI training) and continuing through AI model building, training, and inference. Selecting the right compute infrastructure (or platform) for the end-to-end lifecycle and optimizing for performance has a direct impact on the TCO and hence ROI for AI projects. End-to-end data science workflows on GPUs can be up to 50x faster than on CPUs. To keep GPUs busy, data must be moved into processor memory as quickly as possible. Depending on the workload, optimizing an application to run on a GPU, with I/O accelerated in and out of memory, helps achieve top speeds and maximize processor utilization.

New leadership for a new era of thriving organizations

Leading companies today seek to become learning organizations that are continually evolving, exploring, ideating, experimenting, scaling up, executing, scaling down, and exiting across many different activities in parallel. By accelerating change and allowing for positive surprises and innovations to flourish, they consistently outperform those companies that focus instead on always trying to deliver the “perfect” plan. We are in the midst of a profound shift in how work gets done, one that asks leaders to go beyond being controllers with a mindset of certainty to becoming coaches who operate with a mindset of discovery and foster continual rapid exploration, execution, and learning. Leaders and leadership teams can learn how to set and work toward outcomes rather than traditional key performance indicators; to foster rapid experimentation and learn from both successes and setbacks; and to manage risk differently, through testing, learning, and fast adaptation. The leadership practices enabling this shift include the following: operating in short cycles of decision, action, and learning.

The Fourth Industrial Revolution is here. Here’s what it means for the way we work

Herein lies the double-edged sword of the Fourth Industrial Revolution. Although smart machines and artificial intelligence are predicted to bring unimaginable efficiencies, they will do so by increasingly replacing a wide swath of existing human jobs. While historically jobs have always been around for human beings through technological revolutions, we have never had a technological revolution that has been capable of displacing so many human beings and so much human brain power as the one we are transitioning through now. According to a report from Oxford Economics, a global forecasting and quantitative analysis firm, smart machines are expected to displace about 20 million manufacturing jobs across the world over the next decade, including more than 1.5 million in the U.S. Other studies predict that smart machines, robotics, artificial intelligence, blockchain technology, 3D printing, and automation will put 20% to 40% of existing jobs at risk over the next decades. And a report from the Brookings Institution finds that 25% of U.S. workers will face “high exposure” and risk being displaced over the upcoming few decades.

Even Amazon can't make sense of serverless or microservices

Beyond celebrating their good sense, I think there's a bigger point here that applies to our entire industry. Here's the telling bit: "We designed our initial solution as a distributed system using serverless components... In theory, this would allow us to scale each service component independently. However, the way we used some components caused us to hit a hard scaling limit at around 5% of the expected load." That really sums up so much of the microservices craze that was tearing through the tech industry for a while: IN THEORY. Now the real-world results of all this theory are finally in, and it's clear that in practice, microservices pose perhaps the biggest siren song for needlessly complicating your system. And serverless only makes it worse. What makes this story unique is that Amazon was the original poster child for service-oriented architectures. The far more reasonable prior to microservices. An organizational pattern for dealing with intra-company communication at crazy scale when API calls beat scheduling coordination meetings. SOA makes perfect sense at the scale of Amazon.

The impact of ChatGPT on multi-factor authentication

As adoption of AI/ML-backed tools continues to grow, it will be important to focus on key ways to mitigate the risks associated with their use. When the efficacy of identity measures that companies have trusted for decades, such as voice verification and video verification, erodes, strongly linked electronic identity becomes even more important. Phishing-resistant credential solutions such as security keys, which are hardware-backed and purpose-built around cryptographic principles, excel in these scenarios. Security keys that support FIDO2 also ensure that these credentials are tied to a specific relying party. This binding prevents attackers from preying on simple human error. With security keys, credentials are securely stored in hardware, which prevents those credentials from being transferred to another system without the user’s knowledge or by accident. The use of FIDO2 authenticators also greatly reduces the efficacy of social engineering through phishing, as users cannot be tricked into handing a one-time password to an attacker, or have SMS authentication codes stolen directly through a SIM swapping attack.
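To make the relying-party binding described above concrete, here is a minimal Python sketch. It is a toy model, not the FIDO2/WebAuthn protocol: real authenticators use an asymmetric key pair whose private half never leaves the hardware, while this sketch substitutes an HMAC shared secret because the Python standard library has no asymmetric signatures. The class `ToySecurityKey`, the function `server_verify`, and the domain names are all hypothetical and exist only for illustration.

```python
import hashlib
import hmac
import os
from typing import Optional


class ToySecurityKey:
    """Toy authenticator that keeps one secret per relying party ID (rp_id)."""

    def __init__(self) -> None:
        self._secrets = {}  # rp_id -> secret bytes, standing in for hardware storage

    def register(self, rp_id: str) -> bytes:
        """Create a credential bound to rp_id.

        ASSUMPTION: returning the secret is a stand-in for returning a public
        key; a real security key would never export private material.
        """
        secret = os.urandom(32)
        self._secrets[rp_id] = secret
        return secret

    def sign(self, rp_id: str, challenge: bytes) -> Optional[bytes]:
        """Answer a challenge only if a credential exists for this exact rp_id."""
        secret = self._secrets.get(rp_id)
        if secret is None:
            return None  # nothing to offer a site the key was never registered with
        message = hashlib.sha256(rp_id.encode()).digest() + challenge
        return hmac.new(secret, message, hashlib.sha256).digest()


def server_verify(secret: bytes, rp_id: str, challenge: bytes,
                  assertion: Optional[bytes]) -> bool:
    """Server-side check that the assertion covers its own rp_id and challenge."""
    if assertion is None:
        return False
    message = hashlib.sha256(rp_id.encode()).digest() + challenge
    expected = hmac.new(secret, message, hashlib.sha256).digest()
    return hmac.compare_digest(expected, assertion)


key = ToySecurityKey()
server_secret = key.register("bank.example")
challenge = os.urandom(16)

# Legitimate login: the browser reports the real site's rp_id to the key.
legit = key.sign("bank.example", challenge)

# Phishing page: the browser reports the attacker's domain, so the key has
# no credential to use and the user has nothing to hand over.
phish = key.sign("bank-login.example", challenge)

# Even a credential the user did register with another site cannot be
# replayed against the bank, because the signed message includes the rp_id.
key.register("evil.example")
replayed = key.sign("evil.example", challenge)

print(server_verify(server_secret, "bank.example", challenge, legit))
print(server_verify(server_secret, "bank.example", challenge, phish))
print(server_verify(server_secret, "bank.example", challenge, replayed))
```

The point of the sketch is that the decision is made by the key and the browser-reported site identity, not by the user's judgment, which is why a convincing look-alike page gains nothing.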

Three Powerful Tactics Entrepreneurs Use For Instant Confidence

Tried and tested by entrepreneurs who have faced nerves and self-doubt, reminding yourself of what you have already achieved can give your confidence levels the boost they need. Create a metaphorical cookie jar of all your business and life wins and dip in for instant assurance. Samantha from ICI CARE keeps a list of her past wins and her big picture vision on the wall where she works, ensuring they are at eye level. “By having that reminder, I win over my brain before it spirals down,” she said. “Self-doubt is normal but I keep my focus and energy on achievement.” ... Confidence is a state of mind, which means it’s also a choice. Dr Amanda Foo-Ryland, founder of Your Life Live It, knows this well, explaining that it’s also, “about how you choose to see a new situation.” She knows, “I can either be confident or choose not to be.” Like Sarceno, she incorporates visualisation into the way ahead. “If I choose to be confident, I imagine the event and see myself in it being confident, being the person I want to be. I observe myself in the movie in my head.”

White House unveils AI rules to address safety and privacy

This new effort builds on previous attempts by the Biden administration to promote some form of responsible innovation, but to date Congress has not advanced any laws that would rein in AI. In October, the administration unveiled a blueprint for a so-called “AI Bill of Rights” as well as an AI Risk Management Framework; more recently, it has pushed for a roadmap for standing up a National AI Research Resource. The measures don’t have any legal teeth; they are just more guidance, studies and research “and they’re not what we need now,” according to Avivah Litan, a vice president and distinguished analyst at Gartner Research. “We need clear guidelines on development of safe, fair and responsible AI from the US regulators,” she said. “We need meaningful regulations such as we see being developed in the EU with the AI Act. ... US regulators need to step up their game and pace.” In March, Senate Majority Leader Chuck Schumer, D-NY, announced plans for rules around generative AI as ChatGPT surged in popularity. Schumer called for increased transparency and accountability involving AI technologies.

Court Dismisses FTC Complaint Against Data Broker Kochava

The FTC in its lawsuit filed last August against Idaho-based Kochava said the company invades consumers' privacy by selling advertisers geolocation data sets of mobile phone holders tied to a unique ID. That information could be used to identify individuals who have visited abortion clinics, mental health providers and other sensitive locations, the agency said. Kochava filed its own lawsuit in the same Idaho federal court weeks before the FTC's action, as a bid to preemptively counter the federal agency. The company also filed a motion last October to dismiss the FTC's lawsuit. Winmill wrote in his Thursday ruling that nothing prevents the FTC from asserting that an invasion of privacy by itself can constitute a legitimate cause for suing. The agency failed, he said, by not establishing that Kochava's business practices constitute substantial injury to consumers. "The privacy concerns raised by the FTC are certainly legitimate. Disclosing where a person has been every fifteen-minutes over a seven-day period could undoubtedly reveal information that the person would consider private, such as their travel habits, medical conditions, and social or religious affiliations," he wrote.

The Merck appeal: cyber insurance and the definition of war

The war exclusion was found to be not applicable, and the court used the insurer’s own words to detail the “why” behind the denial. When read by a layman such as me, it appears the judges believed the insurers had ample time to adjust their policy dynamics and didn’t get around to it. ... That said, when a nation’s intelligence entities run covert operations, which Russia does on a regular basis, the goal of the government at hand is to always maintain plausible deniability of any illegal acts. Could the NotPetya attack have been sponsored by the Russian Federation? Absolutely, and indeed, Kroll Cyber Security, the cyber consultant for the insurers, opined before the court “with high confidence” that the attack was “orchestrated by actors working for or on behalf of the Russian Federation.” Yet, one should note that when the US Department of Justice had the opportunity to pin the tail on that same donkey, they demurred. Thus, if a national government is not going to attribute nation-state sponsorship to an attack, then it will be most difficult for an insurance entity to successfully do so within the courts without explicit verbiage in the cybersecurity exclusions.

How the influence of data and the metaverse will revolutionize businesses and industries

From machine and building performance to energy and emissions, data is the crucial link between the physical and digital worlds. It’s also the key to solving efficiency and sustainability challenges that are now more urgent than ever. If the metaverse is meant to transform business and industries, it must be built on solid data foundations. ... Digital transformation started with connecting physical assets via IoT and edge controls. Its disruptive potential has proven to carry operational and energy efficiency across all levels of an enterprise. When we introduce powerful software capabilities and start leveraging the generated data, we can create virtual representations of the real world by combining simulation, augmented reality (AR), data sharing, and visualization all at once. ... It seems that all these and many more possible applications have something in common: they are all about bringing together technologies to address challenges of the physical world, by giving real people the means to learn, collaborate, act, and essentially create value through a virtual, digitally augmented space.

Quote for the day:

"You always believe in other people. But that's easy. Sooner or later you have to believe in yourself." -- Gary, The Muppets
