Ripe For Disruption: Artificial Intelligence Advances Deeper Into Healthcare

The challenges and changes needed to advance AI go well beyond technology considerations. “With data and AI entering in healthcare, we are dealing with an in-depth cultural change, that will not happen overnight,” according to Pierron-Perlès and her co-authors. “Many organizations are developing their own acculturation initiatives to develop the data and AI literacy of their resources in formats that are appealing. AI goes far beyond technical considerations.”

There has been great concern that too much AI will dehumanize healthcare. But if it is carefully considered and planned, AI may prove to augment human care. “People, including providers, imagine AI will be cold and calculating without consideration for patients,” says Garg. “Actually, AI-powered automation for healthcare operations frees clinicians and others from the menial, manual tasks that prevent them from focusing all their attention on patient care. While other AI-based products can predict events, the most impactful are incorporated into workflows in order to resolve issues and drive action by frontline users.”

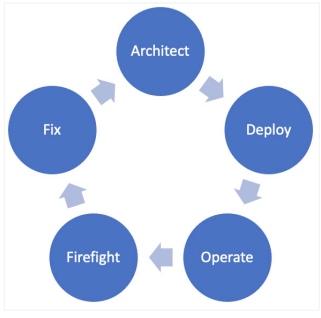

Extinguishing IT Team Burnout Through Mindfulness and Unstructured Time

Mindfulness is fundamentally about awareness. To develop it, begin by observing your state of mind, especially when you find yourself in a stressful situation. Instead of fighting negative emotions, observe your mental state as they arise. Think about how you’d conduct a deep root cause analysis on an incident and apply that same rigor to yourself. The key to mindfulness is paying attention to your reaction to events without judgment. This can unlock a new way of thinking because it accepts your reaction while still enabling you to do what the job requires. This contrasts with being stuck behind frustration or avoiding new work as it rolls in. ... Mindfulness is an individual pursuit, while creativity is an enterprise pursuit. Providing space for employees to be creative is another key to preventing burnout, and it brings other benefits as well: there is a direct correlation between creativity and productivity. Teams that spend all their time working on specific processes and problems struggle to develop creative solutions that could move a company forward.

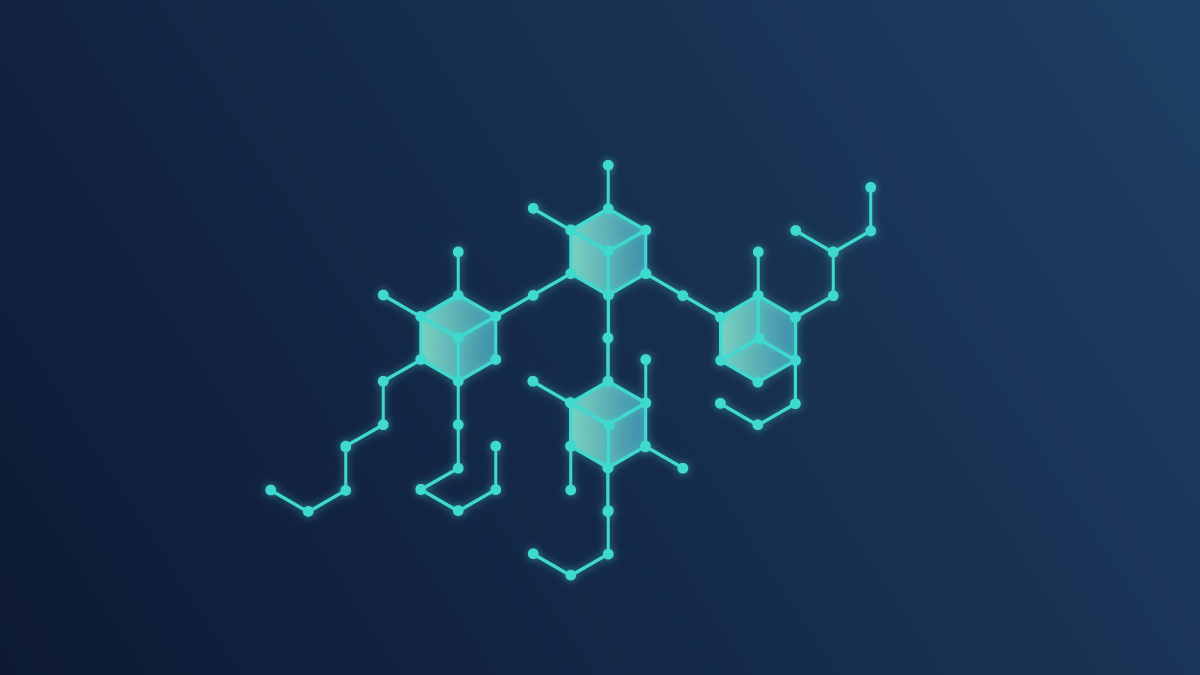

Overcoming the Four Biggest Barriers to Machine Learning Adoption

Some businesses hit their first hurdles in adopting AI and ML before they even begin. Machine learning is a vast field that pervades most aspects of AI. It paves the way for a wide range of potential applications, from advanced data analytics and computer vision to Natural Language Processing (NLP) and Intelligent Process Automation (IPA). A general rule of thumb for selecting a suitable ML use case is to “follow the money,” in addition to the usual recommendations on framing business goals: what companies expect machine learning to do for their business, such as improving products or services, improving operational efficiency, and mitigating risk. ... The biggest obstacle to deploying AI-related technologies is corporate culture. Top management is often reluctant to take investment risks, and employees worry about losing their jobs. To secure stakeholder and employee buy-in, businesses must start with small-scale ML use cases that demand realistic investments, so they can achieve quick wins and persuade executives. By providing workshops, corporate training, and other incentives, they can promote innovation and digital literacy.

Fixing Metadata’s Bad Definition

A bad definition has practical implications. It makes misunderstandings much more likely, which can infect important processes such as data governance and data modeling. Thinking about this became an annoying itch that I couldn’t scratch. What follows is my thought process working toward a better understanding of metadata and its role in today’s data landscape. The problem starts with language. Our lexicon hasn’t kept up with modern data’s complexity and nuance. There are three main issues with our current discourse about metadata:

- Vague language: We talk about data in terms of “data” or “metadata”. But one category encompasses the other, which makes it very difficult to differentiate between them. These broad, self-referencing terms leave the door open to being interpreted differently by different people.
- A gap in data taxonomy: We don’t have a name for the category of data that metadata describes, which creates a gap at the top of our data taxonomy. We need to fill it with a name for the data that metadata refers to.
- Metadata is contextual: The same data set can be both metadata and not metadata depending on the context. So we need to treat metadata as a role that data can play rather than a fixed category.
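To make the idea of metadata as a role concrete, here is a minimal Python sketch, assuming a simple catalog that records which data set describes which. The `Dataset` and `Catalog` classes, the example data set names, and the placeholder label "described data" are all hypothetical; the author deliberately leaves the name for that category open. The point is that "is this metadata?" is answered per relationship rather than stored as a fixed type on a data set.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Dataset:
    """A data set identified only by name; it carries no fixed 'metadata' type."""
    name: str


@dataclass
class Catalog:
    """Hypothetical catalog of 'describes' relationships between data sets."""
    # Each pair (describer, described) means the first data set describes the second.
    describes: set[tuple[str, str]] = field(default_factory=set)

    def add_description(self, describer: Dataset, described: Dataset) -> None:
        self.describes.add((describer.name, described.name))

    def is_metadata_for(self, candidate: Dataset, subject: Dataset) -> bool:
        # Metadata is a role relative to a subject, not a property of the candidate alone.
        return (candidate.name, subject.name) in self.describes

    def roles(self, ds: Dataset) -> set[str]:
        # Collect every role this data set plays across all recorded relationships.
        found: set[str] = set()
        for describer, described in self.describes:
            if describer == ds.name:
                found.add("metadata")
            if described == ds.name:
                found.add("described data")  # placeholder label, not an established term
        return found


orders = Dataset("orders")
column_stats = Dataset("orders_column_stats")
quality_report = Dataset("stats_quality_report")

catalog = Catalog()
catalog.add_description(column_stats, orders)          # the stats describe the orders table
catalog.add_description(quality_report, column_stats)  # a report describes the stats

print(catalog.is_metadata_for(column_stats, orders))  # True
print(catalog.is_metadata_for(orders, column_stats))  # False
print(sorted(catalog.roles(column_stats)))            # ['described data', 'metadata']
```

Here `orders_column_stats` is metadata relative to `orders` but is itself the subject of the quality report, which is the contextual behavior the third point describes.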

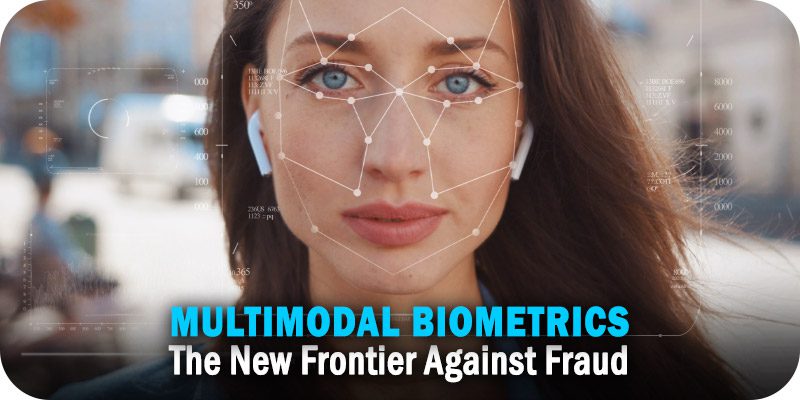

Addressing Privacy Challenges in Retail Media Networks

The top reason consumers cite for mistrusting how companies handle their data is a lack of transparency. Customers know at this point that companies are collecting their data. And many of these customers won’t mind that you’re doing it, as long as you’re upfront about your intentions and give them a clear choice about whether they consent to have their data collected and shared. What’s more, recent privacy laws have increased the need for companies to shore up data security or face the consequences. In the European Union, there’s the General Data Protection Regulation (GDPR). In the U.S., laws vary by state, but California currently has the most restrictive policies thanks to the California Consumer Privacy Act (CCPA). Companies that have run afoul of these laws have incurred fines as large as $800 million. Clearly, online retailers that already have, or are considering implementing, a retail media network should take notice and reduce their reliance on third-party data sources that may cause trouble from a compliance standpoint.

For Gaming Companies, Cybersecurity Has Become a Major Value Proposition

Like companies in any other vertical industry, gaming companies are tasked with protecting their organizations from all manner of cybersecurity threats to their business. Many of them are large enterprises with the same concerns for the protection of internal systems, financial platforms, and employee endpoints as any other firm. "Gaming companies have the same responsibility as any other organization to protect customer privacy and preserve shareholder value. While not specifically regulated like hospitals or critical infrastructure, they must comply with laws like GDPR and CaCPA," explains Craig Burland, CISO for Inversion6, a managed security service provider and fractional CISO firm. "Threats to gaming companies also follow similar trends seen in other segments of the economy: intellectual property (IP) theft, credential theft, and ransomware." IP issues are heightened for these firms, as for many in the broader entertainment category, because content leaks for highly anticipated new games or updates can, at best, give a brand a black eye and, at worst, hit its finances directly.

Driving value from data lake and warehouse modernisation

To achieve this, data lakes and data warehouses need to grow alongside business requirements so that they stay efficient and up to date. Go Reply is a leading Google Cloud Platform service integrator (SI) that is helping companies across multiple sectors on this vital journey. Part of the Reply Group, Go Reply is a Google Cloud Premier Partner focussing on areas including Cloud Strategy and Migration, Big Data, Machine Learning, and Compliance. With data modernisation capabilities in the GCP environment constantly evolving, businesses can become overwhelmed and unsure not only of the next steps in general but, more importantly, of the right next steps for them, particularly if they don’t have in-house Google expertise. Companies often need to utilise data lakes and data warehouses simultaneously, so guidance on how to do this, and on how to drive value from both kinds of storage, is vital. According to the Go Reply leadership team, Google Cloud Platform, as the hyperscale cloud of choice for these workloads, brings data lake and data warehouse efficiency along with security superior to other market offerings.

Three tech trends on the verge of a breakthrough in 2023

The second big trend is around virtual reality, augmented reality and the metaverse. Big tech has been spending big here, and there are some suggestions that the basic technology is reaching a tipping point, even if the broader metaverse business models are, at best, still in flux. Headset technologies are starting to coalesce and the software is getting easier to use. But the biggest issue is that consumer interest and trust are still low, if only because science fiction writers got there long ago with their dystopian view of a headset future. Building that consumer trust and explaining why people might want to engage is just as high a priority as the technology itself. One technology trend that's perhaps closer, even though we can't see it, is ambient computing. The concept has been around for decades: the idea is that we don't need to carry tech with us because the intelligence is built into the world around us, from smart speakers to smart homes. Ambient computing is designed to vanish into the environment around us, which is perhaps why it's a trend that has remained invisible to many, at least until now.

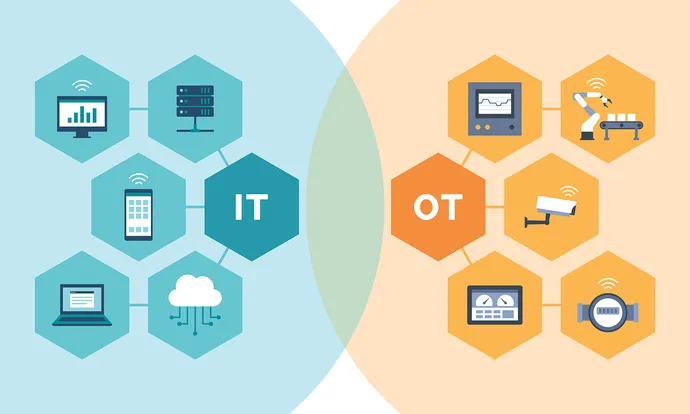

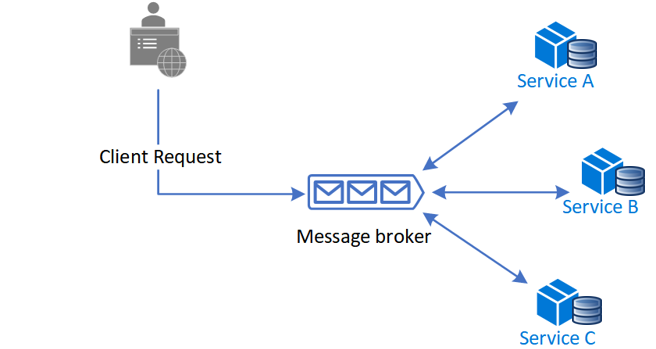

CIOs beware: IT teams are changing

The role of IT is shifting to be more strategy-oriented, innovative, and proactive. No longer can days be spent responding to issues; instead, issues must be addressed before they impact employees, and solutions should be developed to ensure they don’t return. What does this look like? Rather than waiting for an employee to flag an issue within their system, such as recurring connectivity problems or slow computer start times, IT can identify potential threats to workflows before they cause disruption. They plug the holes, then establish a strategy and framework to avoid the problem entirely in the future. In short, IT plays a critical role in keeping the workplace running smoothly, both proactively and reactively. For those looking to start a career in IT, the onus falls on them to make suggestions and changes that look holistically at the organization and how employees interact within it. IT teams are making themselves strategic assets by thinking through how to make things more efficient and cost-effective in the long term.
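As one illustration of catching a recurring issue before an employee reports it, the following Python sketch flags devices whose start-up time has been slow several times within a recent window of telemetry samples. The telemetry format, the device names, and the thresholds (90 seconds, 3 of the last 5 boots) are hypothetical assumptions for illustration, not values from the article; real monitoring tools would supply their own data and tuning.

```python
from collections import defaultdict, deque
from typing import Iterable, List, Tuple

# Hypothetical thresholds; tune them to your own fleet.
SLOW_BOOT_SECONDS = 90.0  # a boot slower than this counts as a "slow" event
WINDOW = 5                # how many recent boots to consider per device
MIN_SLOW_EVENTS = 3       # slow boots within the window that make the issue "recurring"


def flag_recurring_slow_boots(
    samples: Iterable[Tuple[str, float]],
    threshold: float = SLOW_BOOT_SECONDS,
    window: int = WINDOW,
    min_events: int = MIN_SLOW_EVENTS,
) -> List[str]:
    """Return devices with at least `min_events` slow boots among their last `window` boots.

    `samples` is an iterable of (device_id, boot_seconds) pairs in chronological order.
    """
    # A bounded deque per device keeps only its most recent `window` boot times.
    recent = defaultdict(lambda: deque(maxlen=window))
    for device, seconds in samples:
        recent[device].append(seconds)

    flagged = [
        device
        for device, history in recent.items()
        if sum(1 for s in history if s > threshold) >= min_events
    ]
    return sorted(flagged)


telemetry = [
    ("laptop-17", 45), ("laptop-42", 120), ("laptop-17", 50),
    ("laptop-42", 130), ("laptop-42", 40), ("laptop-42", 125),
]
print(flag_recurring_slow_boots(telemetry))  # ['laptop-42']
```

Requiring several slow boots within a sliding window, rather than reacting to a single slow start, is a design choice meant to separate recurring problems (the kind worth a permanent fix) from one-off noise.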

A Comprehensive List of Agile Methodologies and How They Work

Extreme Programming (XP) offers some of the best buffers against unexpected changes or late-stage customer demands. Feedback is gathered from the start of business process development and within every sprint, and it’s this feedback that informs everything. As a result, the entire team becomes accustomed to a culture of pivoting on real-world client demands and outcomes that would otherwise threaten to derail a project and seriously extend lead times. Any organization with a client-based focus will understand the tightrope that can exist between external demands and internal resources. Continuously orienting those resources toward external demands as they appear is the single most efficient way to achieve harmony, and this is something XP does organically once integrated into your development culture. ... Lean Development is all about trimming the fat from the development process. If something doesn’t add immediate value, or tasks within tasks seem to be piling up, its laser focus steps in.

Quote for the day:

"Confident and courageous leaders have no problems pointing out their own weaknesses and ignorance." -- Thom S. Rainer
