Magnanimous machines: Why AI should work for people and not the other way around

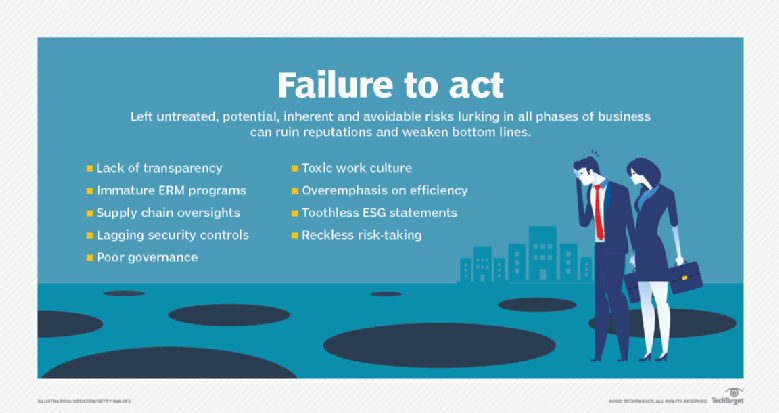

The consolidation of power amongst a Big Tech elite fused with state

intelligence grows ever stronger. These entities can know everything about us,

yet carefully hide their own clandestine obfuscated activities. The best defence

to this asymmetry is radical, mandated, and cryptographically secure

transparency. To illustrate, in commercial aircraft, we have two essential data

recorders (‘black boxes’). One monitors the aircraft itself, and the other

monitors cockpit chatter. Both recorders are necessary to understand why an

incident has occurred. For the same reasons, we need a similar approach to

humane technology. Transparency is the foundation upon which every other aspect

of ethical technology rests. It is essential to understand a system, its

functions, as well as attributes of the organisation, and not to forget, those

who steer it. Through transparency, we can understand that the incentive

structures within organisations are aligned towards producing honest good faith

outcomes. We can understand what may have gone wrong and how to fix it in the

future.

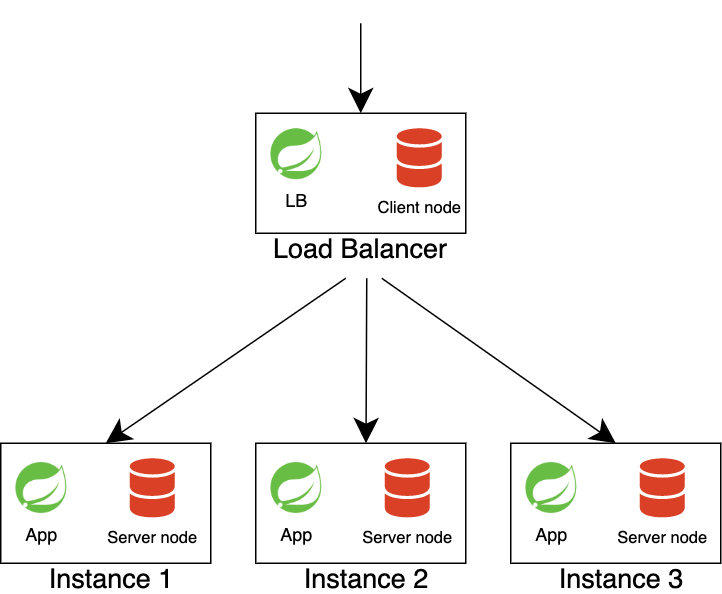

8 Keys to Failproof App Modernization

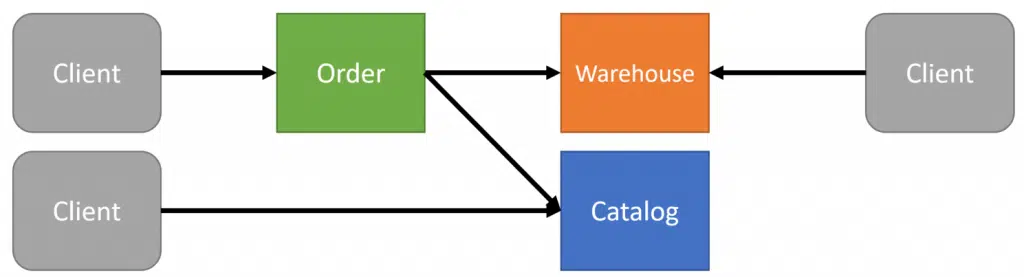

Typically, modernization initiatives are strategized or rolled out before major

events or milestones like data center contracts and vendor contracts coming up

for renewal, software and hardware platforms going End of Service and Support

Life, government-imposed deadlines to implement regulatory and compliance

requirements, or an ageing workforce and the risk of a skills shortage. In all such

scenarios, since the accumulated technical debt is so high, these become

multi-year, multi-million modernization programs. Risks are equally high in

such large programs. And to optimize costs and minimize risks, the temptation

sometimes is to somehow get these workloads to the target platform [containerize

or rehost without really changing the underlying architecture]. This will result

in more technical debt and will necessitate another modernization initiative in

a few years and so it goes. The chances of success are much higher if the

initiatives are incremental in nature and time-bound, say 3-6 months. In fact, it

is a recommended practice in agile development to pay down technical

debt regularly every single sprint.

Three key issues to tackle before smart cities become a reality

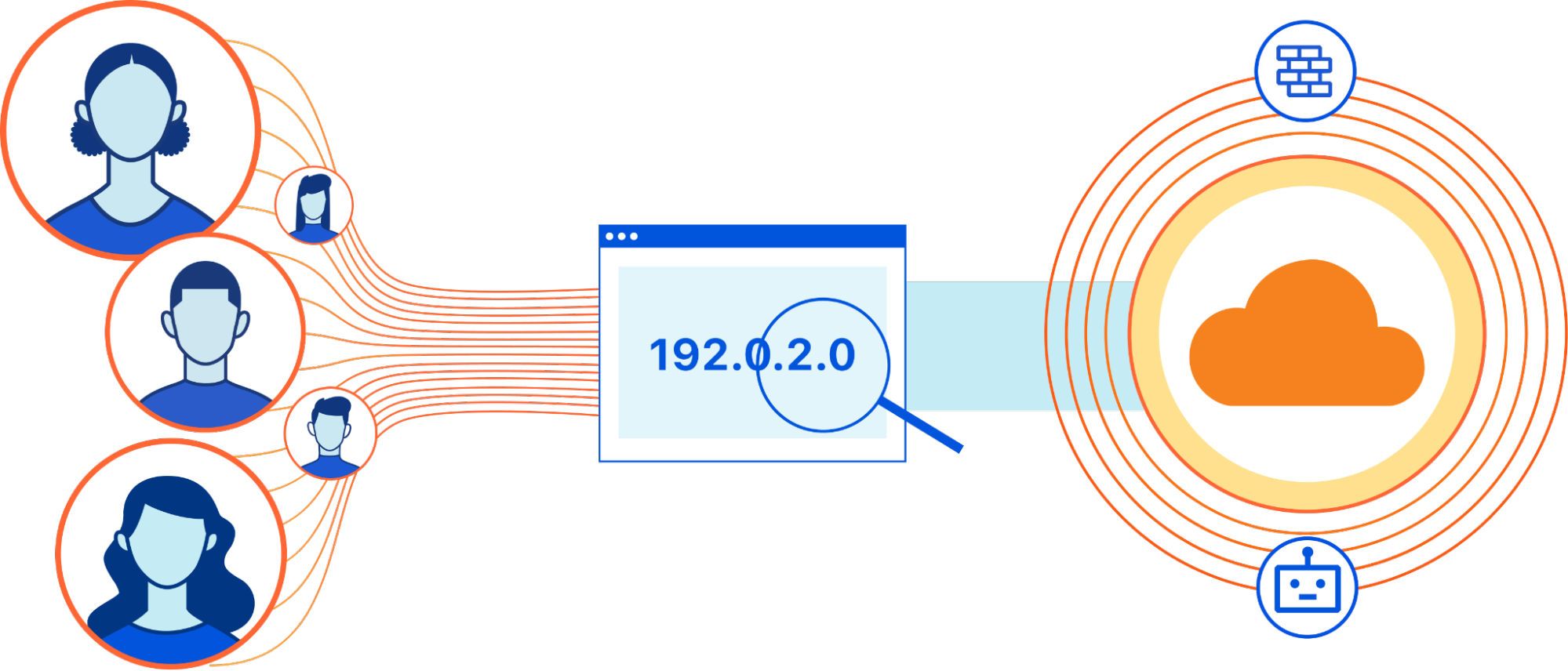

Many smart systems require data to be validated and assimilated in real time

for it to be relevant. This poses a problem, in that it requires every citizen

to agree to their data being collected and shared, which in turn requires

trust. That means the collection of data and its use to influence critical

decisions in smart cities needs careful consideration. However, citizens

often worry about being ‘tracked’ – a difficult perception to eradicate in a

world where privacy and security are among the biggest challenges each of us

faces. Overcoming it requires us to build a comprehensive data privacy and

security strategy into any smart city development, with local governments then

responsible for educating individuals and society on how their data will be

stored, who has access, and how it can be used. Such strategies require

careful consideration, as any mistakes that harm public trust could impact the

success of smart cities. The NHS COVID-19 app is a good example of this – once

people lacked trust in the application, it took only a matter of days for

thousands of people to delete it.

Engineering Digital Transformation for Continuous Improvement

Getting organizations to invest in improvements and embrace new ways of

working is a challenge. They don’t just need the right technical solutions;

they also need to address the organizational change management challenges that

are creating resistance to new ways of working. Organizations frequently have

champions who have ideas for improvement and are trying to influence change

without a lot of success. These champions find that the harder they push for

change, the more people resist. We can have all the best approaches in the

world, but if we can’t figure out how to overcome this resistance,

organizations will never adopt them and realize the benefits. While pushing

for change is the natural approach, research by organizational change

management experts, like Professor Jonah Berger in his book “The Catalyst,”

suggests this is the wrong approach. His research shows that the harder you

push for change, the more people resist. Whenever people feel that someone is

trying to influence them, their anti-persuasion radar kicks in and instead they

start shooting down ideas and resisting the change being offered.

A transactional approach to power

As Battilana and Casciaro tell it, it’s not your personal or positional power

that determines your effectiveness in any given situation. It is your ability

to understand what resources the involved parties want and how the resources

are distributed—that is, the balance of power. “We find this extremely

compelling,” explains Casciaro, “because it brings power relationships—whether

they are interpersonal, intergroup, interorganizational, or international—down

to four simple factors.” Taking this a step further, the ability to shift the

balance of power within a situation determines your success at exercising

power. Battilana and Casciaro find there are several key strategies that

support this ability to rebalance power. If you have resources the other party

values, attraction is a key strategy. You try to increase the value of those

resources for the other party. Personal and corporate brand-building are

organized around this strategy. If the other party has too many paths to

access your resources, consolidation is a key strategy. You try to eliminate

or otherwise lessen the alternatives. Employees join unions to limit the

alternatives of employers and increase their power.

Treasury Dept. to Crypto Companies: Comply with Sanctions

The announcement is the latest in a series of moves from the Biden

administration to combat ransomware, following high-profile attacks this year

that have disrupted the East Coast's fuel supply during the Colonial Pipeline

incident; jeopardized the nation's meat supply by attacking JBS USA; and

knocked some 1,500 downstream organizations offline by zeroing in on managed

service provider, Kaseya, over the July Fourth holiday. Last month, the

Treasury Department blacklisted Russia-based cryptocurrency exchange, Suex,

for allegedly laundering tens of millions of dollars for ransomware operators,

scammers and darknet markets. In its latest issuance, the department alleges

that over 40% of Suex’s transaction history had been associated with illicit

actors, involving the proceeds from at least eight ransomware variants.

Similarly, this week, the White House National Security Council facilitated a

30-nation, two-day "counter-ransomware" event, which found senior officials

strategizing on ways to improve network resiliency, address illicit

cryptocurrency usage, and heighten law enforcement collaboration and

diplomacy.

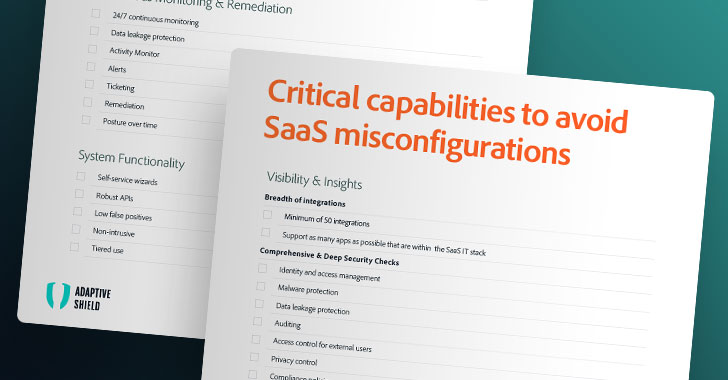

DevSecOps: 11 questions to ask about your security strategy now

Where does friction exist between security and business goals? The

question is relatively self-explanatory: DevSecOps exists in part to remove

friction and bottlenecks that have historically introduced risks rather than

reduce them. The question also has a subtext: What are we doing about it? This

friction often goes unaddressed because, well, it’s unaddressed – as in,

people avoid pointing it out or talking about it, whether because of poor

relationships, fear factors, cultural acceptance, or other reasons. Leaders

need to take an active role here by showing their willingness to talk about

it, without finger-pointing or other toxic behaviors. “Leaders should

constantly be probing and trying to understand the friction points between the

business and DevSecOps,” says Jerry Gamblin, director of security research at

Kenna Security, now part of Cisco. “These often uncomfortable conversations

will help you refocus your team’s goals on the company’s goals.” A willingness

to have those uncomfortable conversations as a pathway to positive long-term

change is a key characteristic of a healthy culture.

The importance of crisis management in the age of ransomware

How to prepare for ransomware attacks is an often-asked question. From my

point of view, the best action is to go through the checklist of security

controls that prevent hackers from taking control of your network.

Organizations like Servadus offer a Ransomware Readiness Assessment which

helps organizational leadership identify current risks to the corporation. Of

course, having up-to-date incident response and business continuity plans is

part of that assessment. Beyond that, the real value comes from remediating weak

cybersecurity controls. Additionally, organizations implement a framework to

shore up security control implementation and sustainability. Many organizations

try to maintain compliance and security controls but are vulnerable to attacks

3 to 6 months after validating the security controls in place. The long-term

strategy is about validating sustainable security controls. The service

framework also allows organizations to evaluate threats to the organization

and vulnerabilities of the system software in use.

The importance of staff diversity when it comes to information security

The information security team’s decision-making is diversified, which

contributes to the organisation’s overall strength. The fact that each

employee provides a unique perspective to the problem makes it simpler to

recognise and address hidden vulnerabilities in the security operations, as

well as to identify and correct the deficiencies of other employees, helping

them to grow in their own areas of expertise. Consider the likelihood of a

breach, as well as the “red team” that will be assigned to deal with the

situation. A SOC analyst looks through the logs, a security engineer looks for the

vulnerability, and other team members look for a defensive approach to

defend against the vulnerability. As a result, in order to maintain a strong

information security team, it is critical to have a diverse workforce. It

facilitates task efficiency while encouraging alternative viewpoints.

... An organisation’s security cannot be handled by a single product, and

in order to maintain and handle those security products, companies require a

large number of employees. So, in order to work efficiently and effectively

without being reliant on a single person or product, this should be common

knowledge.

Is it right or productive to watch workers?

The recent rise in employee surveillance accelerated during the pandemic,

largely because it had to, but the bottom line is that we are now more than

ever accustomed to being watched. We accept the intrusion of cameras in novel

spaces under the promise of increased safety; doorbell cameras spring to mind,

but so too do webcams and smartphones; we accept data tracking to prove we’re

“not a robot” on websites; we accept that our information, our clicks, and our

preferences are observed and noted. We seem to be primed now to accept that

companies have a reasonable expectation to protect their own safety, so to

speak, by monitoring us. One recent survey by media researcher Clutch of 400

US workers found that only 22% of 18- to 34-year-old employees were concerned

about their employers having access to their personal information and activity

from their work computers. Meanwhile, in a pre-pandemic survey of US workers

by US media group Axios from August 2019, 62% of respondents agreed that

employers should be able to use technology to monitor employees.

Quote for the day:

"Leaders are more powerful role models

when they learn than when they teach." -- Rosabeth Moss Kanter