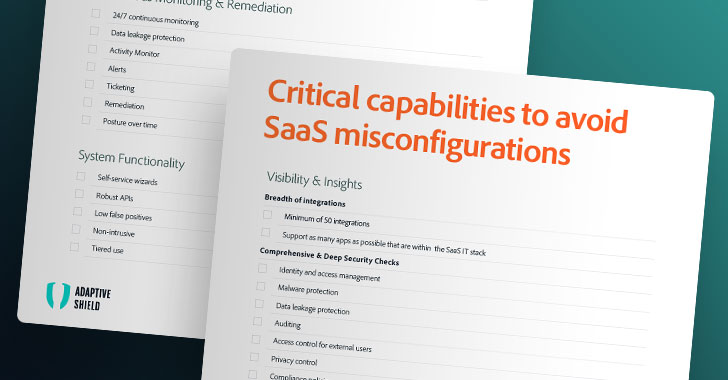

Is SASE right for your organization? 5 key questions to ask

Many analysts say that SASE is particularly beneficial for mid-market companies

because it replaces multiple, and often on-premises, tools with a unified cloud

service. Many large enterprises, on the other hand, will not only have legacy

constraints to consider, but they may also prefer to take a layered security

approach with best-of-breed security tools. Another factor to consider is that

the SASE offering might be presented as a consolidated solution, but if you dig

a little deeper it might actually be a collection of different tools from

various partnering vendors, or features obtained through acquisition that have

not been fully integrated. Depending on the service provider, SASE offers a

unified suite of security services, including but not limited to encryption,

multifactor authentication, threat protection, Data Leak Prevention (DLP), DNS,

and traditional firewall services. ... With incumbents such as Cisco, VMware,

and HPE all rolling out SASE services, enterprises with existing vendor

relationships may be able to adopt SASE without needing to worry much about

protecting previous investments.

How gamifying cyber training can improve your defences

Gamification is an attempt to enhance systems and activities by creating similar

experiences to those in games, in order to motivate and engage users, while

building their confidence. This is typically done through the application of

game-design elements and game principles (dynamics and mechanics) in non-game

contexts. Research into gamification has shown that it has positive effects.

... Gamification has been dismissed by some as a fad, but the application of

elements found within game playing, such as competing or collaborating with

others and scoring points, can effectively translate into staff training and

improve engagement and interest. “The way that cyber security training sessions

are happening is changing and it’s for the better,” says Helen McCullagh, a

cyber risk specialist for an end-user organisation. “If you look at the

engagement of sitting people down and them doing a one-hour course every year,

then it is merely a box-ticking exercise. Organisations are trying to get 100%

compliance, but what you have are people sitting there doing their shopping

list.”

The 3 Phases Of The Metaverse

There are several misconceptions about the metaverse today. In simple terms, the

metaverse is the convergence of physical and digital on a digital plane. In its

ideal phase, you can access the metaverse from anywhere, just like the internet.

Early metaverse apps were focused on creating games with tokenized incentives

(play-to-earn) and hadn’t initially been thought of as contributing to the next

phase of the internet. One of the most prominent examples is the online game

Second Life, which is regarded as the earliest web2-based metaverse platform.

Users have an identity projected through an avatar and participate in

activities—very much a limited “second” life. ... Unlike the previous phase,

Phase 2 is all about creating utilities. Brands, IP holders and companies

investing in innovation have been collaborating with gaming metaverse dApps to

understand consumer behaviors and economic dynamics. No-coding tools, as well as

software development kits, in this phase, are empowering the end user to

co-create alongside developers, designers, brands and retail investors. Still,

interoperability—the import and export of digital assets—is only possible on a

single chain, and the user experience is still seen as gaming in 2-D or 3-D

environments.

Why the Agile approach might not be working for your projects

Although Scrum is a well-described methodology, when applied in practice it is

often tailored to the specific circumstances of the organisation. These

adaptations are often called ScrumBut (“we use Scrum, but …”). Some deviations

from the fundamental principles of Scrum, however, may be problematic. These

undesirable deviations are called anti-patterns — bad habits formed and

influenced by the human factor. What exactly can we consider an anti-pattern? It

can be a disagreement on whether or not the task is completed, a disruption

caused by the customer, unclear items in the backlog, the indecisiveness of

stakeholders (customers, management, etc.), and lack of authority or poor

technical knowledge on the part of the Scrum master. We collected detailed

information in three Scrum teams using a variety of data collection procedures

over a sustained period of time (including observation, surveys, secondary

data, and semi-structured interviews) to get a detailed understanding of

anti-patterns, and their causes and consequences.

Rise of Data and Asynchronization Hyped Up at AWS re:Invent

Because it was believed that asynchronous programming was difficult, Vogels said,

operating systems tended to have restrained interfaces. “If you wanted to

write to the disk, you got blocked until the block was written,” Vogels said.

Change began to emerge in the 1990s, he said, with operating systems designed

from the ground up to expose asynchrony to the world. “Windows NT was probably

the first one to have asynchronous communication or interaction with devices

as a first principle in the kernel.” Linux, Vogels said, did not pick up

asynchrony until the early 2000s. The benefit of asynchrony, he said, is that it is

natural compared with the illusion of synchrony. When compute systems are

tightly coupled together, it could lead to widespread failure if something

goes wrong, Vogels said. With asynchronous systems, everything is decoupled.

“The most important thing is that this is an architecture that can evolve very

easily without having to change any of the other components,” he said. “It is a

natural way of isolating failures. If any of the components fails, the whole

system continues to work.”
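
The failure-isolation point can be illustrated with a minimal Python sketch. It is not taken from the talk: the `component` coroutine and the names "billing", "email", and "search" are hypothetical stand-ins for independent parts of a system, and `asyncio.gather` is used only as one convenient way to run them concurrently.

```python
import asyncio


async def component(name: str, should_fail: bool = False) -> str:
    """Hypothetical stand-in for one independent part of a system."""
    await asyncio.sleep(0.01)  # simulate non-blocking I/O
    if should_fail:
        raise RuntimeError(f"{name} failed")
    return f"{name} ok"


async def run_system() -> list:
    # return_exceptions=True collects a failure as a value in the result
    # list rather than raising it to the caller, so the results of the
    # healthy components are still returned.
    return await asyncio.gather(
        component("billing"),
        component("email", should_fail=True),
        component("search"),
        return_exceptions=True,
    )


if __name__ == "__main__":
    for outcome in asyncio.run(run_system()):
        print(repr(outcome))
```

In this sketch the "email" component raises, yet "billing" and "search" still complete and report their results, which is a small-scale version of the isolation Vogels describes.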

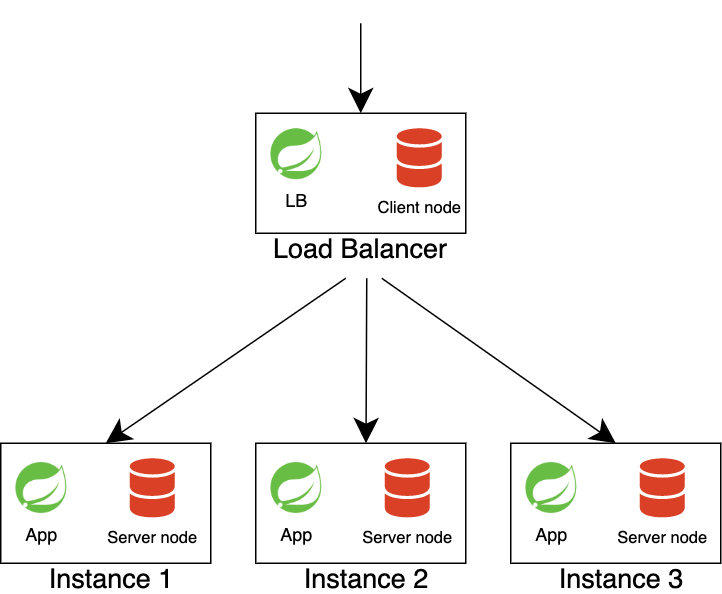

Entity Framework Fundamentals

EF has two ways of managing your database. In this tutorial, I will explain

only one of them: code first. The other is database first. There is a

big difference between them, but code first is the more widely used. Before we

dive in, I want to explain both approaches. Database first is used when there

is already a database present and the database will not be managed by code.

Code first is used when there is no current database, but you want to create

one. I like code first much more because I can write entities (these are

basically classes with properties) and let EF update the database accordingly.

It's just C# and I don't have to worry about the database much. I can create a

class, tell EF it's an entity, update the database, and all is done! Database

first is the other way around. You let the database 'decide' what kind of

entities you get. You create the database first and create your code

accordingly. ... With Entity Framework, it all starts with a context. It

associates entities and relationships with an actual database. Entity

Framework comes with DbContext, which is the context that we will be using in

our code.

How Executive Coaching Can Help You Level Up Your Organization

As we all know, the desire for personal growth is extremely valuable. However,

as employee demands from the workplace have shifted, leadership skills have

not. As employees climb the ranks, they find their way into leadership without

necessarily learning the skills and techniques required to lead. Many new

leaders turn to a trusted mentor who can provide information based only on

lived experience. On the other hand, executive coaches are tasked with

improving performance and capabilities as their day job. But there is a

misconception that executive coaches are for leaders who have done something

wrong. While it's true that an executive coach could help a difficult

employee become a better teammate, they can also be guides for leaders to

pursue their desired career paths. Leadership coaching explains that the main

drivers of innovation in an organization are the people and the corporate

culture, and it can provide leaders with the tools to master these levers. An

executive coaching professional can guide leaders through the steps that allow

them to set the foundations of an innovative and competitive company.

Ransomware: Is there hope beyond the overhyped?

The old way of thinking about cyber security was imagining it like a castle.

You had the vast perimeter – the castle walls – and inside was the keep,

where employees and data would live. But now organisations are operating in

various locations. They’ve got their cloud estate in one or more providers,

source code residing in another location, and vast numbers of work devices

that are now no longer behind the castle walls, but at employees’ homes – the

list could go on for ever. These are all areas that could potentially be

breached and used to gain intelligence on the business. The attack surface is

growing, and the castle wall can no longer circle around all these places to

protect them. Attack surface management will play a big part in tackling

this issue. It allows security and IT teams to almost visualise the external

parts of the business and identify targets and assess risks based on the

opportunities they present to a malicious attacker. In the face of a

constantly growing attack surface, this can enable businesses to establish a

proactive security approach and adopt principles such as assume breach and

cyber resilience.

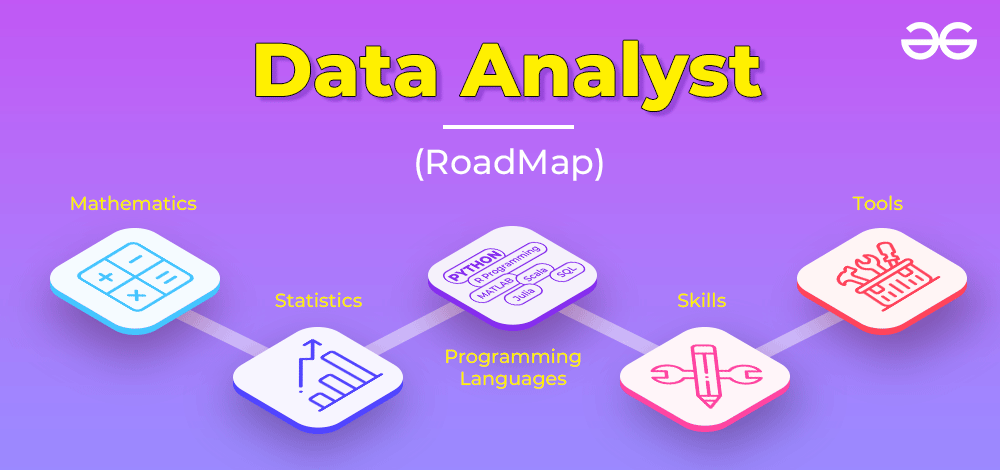

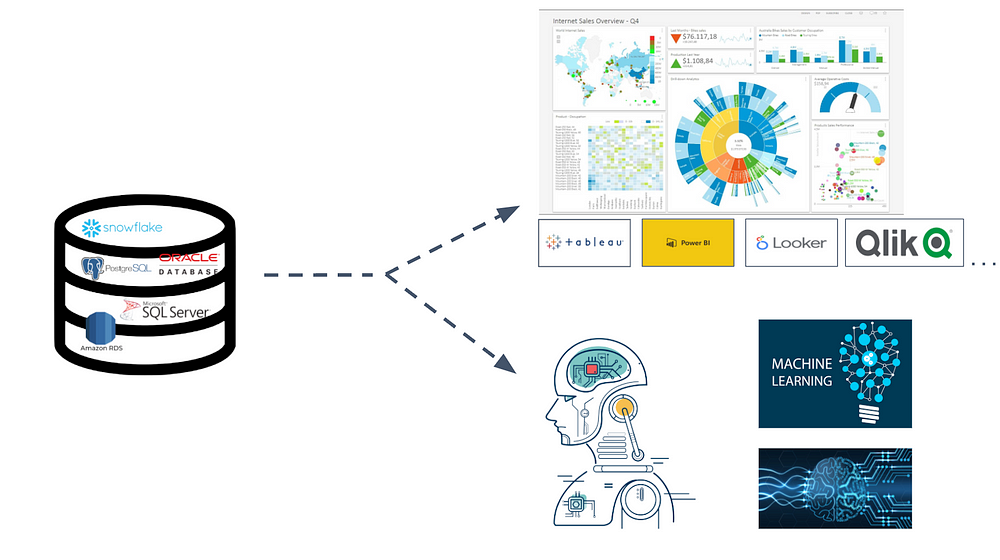

How data analysts can help CIOs bridge the tech talent shortfall

Business analytics are only as good as the data they’re using. Given the

wealth and complexity of data, it’s easy to understand why leaders are often

overwhelmed in their attempts to access better analytics and insights. This is

where data professionals can help. Data scientists and analysts are experts in

statistics, math, databases, and systems. They are especially adept at

looking at historical metrics, recognizing patterns, pulling in market

insights, and identifying outlier data to ensure the best points are utilized.

They’re also able to organize vast amounts of unstructured data, which is

often very valuable but difficult to analyze, by leveraging conventional

databases and other tools to make the data more actionable. ... It’s also

important to look at the attributes of the data scientists and analysts

themselves. In addition to having technical skills, data professionals with a

background in programming, data visualization, and machine learning are also

highly valuable. On the non-technical side, they should have strong

interpersonal and communication skills to relay their findings to the tech

team and those without a tech or math background.

What Does Technical Debt Tell You?

Making most architectural decisions at the beginning of a project, often

before the quality attribute requirements (QARs) are precisely defined, results in an upfront architecture that

may not be easy to evolve and will probably need to be significantly

refactored when the QARs are better defined. In contrast, having a

continuous flow of architectural decisions as part of each Sprint results in

an agile architecture that can better respond to QAR changes. Almost every

architectural decision is a trade-off between at least two QARs. For example,

consider security vs. usability. Regardless of the decision being made, it is

likely to increase technical debt, either by making the system more vulnerable

by giving priority to usability or making it less usable by giving priority to

security. Either way, this will need to be addressed at some point in the

future, as the user population increases, and the initial decision to

prioritize one QAR over the other may need to be reversed to keep the

technical debt manageable. Other examples include scalability vs.

modifiability, and scalability vs. time to market. These decisions are often

characterized as "satisficing", i.e., "good enough".

Quote for the day:

"The ability to summon positive

emotions during periods of intense stress lies at the heart of effective

leadership." -- Jim Loehr