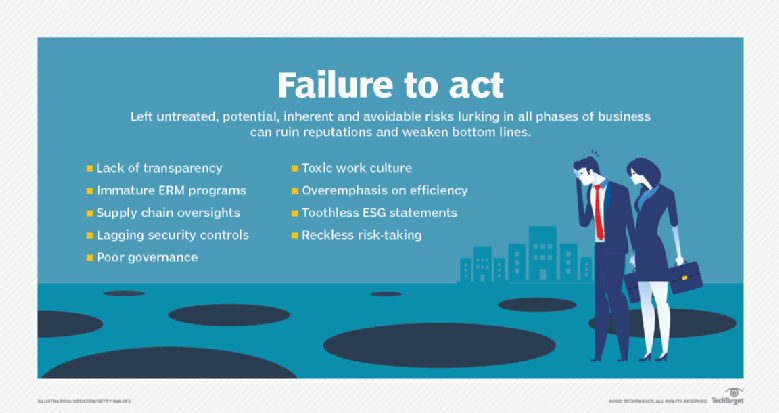

9 common risk management failures and how to avoid them

Known for decades as the hub of technical innovation, Silicon Valley has evolved into a bastion of toxic "bro culture," according to Alla Valente, senior analyst at Forrester Research. She also cited other forms of toxic work culture that emerge when companies fail to mitigate risks that can alienate employees and customers. Facebook's lukewarm response to the Cambridge Analytica scandal, Valente argued, has significantly eroded its trustworthiness and market potential. Wells Fargo's executives turning a blind eye to the warning signs of the bank's predatory selling practices with their customers "was a strategic decision," Valente said. "It could have been fixed, but fixing culture is never easy." ... Efficiency and resiliency sit at opposite ends of the spectrum, Matlock said. Greater efficiency can lead to greater profits when things go well. The auto industry realized significant savings by creating a supply chain of thousands of third-party suppliers spread across multiple tiers. But during the pandemic, there were massive disruptions in supply chains that lacked resiliency.

Mandating a Zero-Trust Approach for Software Supply Chains

SBOMs are a great first step towards supply-chain transparency, but there is more that needs to be done. As an analogy, many equate the SBOM to the ingredient labels on food. With that perspective, we can see parallels between our software supply chain and the food supply chain. Consequently, the need for end-to-end provenance and resistance against tampering should be clear. For this reason, I am encouraged by Google’s proposed Supply-Chain Levels for Software Artifacts (SLSA) framework, which moves us towards a common language that increases the transparency and integrity of our software supply chain. However, for some software that performs critical functions (e.g., security), food is an inadequate comparison. It may be more apt to compare this type of software to medicine. This analogy brings forth additional considerations. For example, the drug-facts label on medicines includes not just the ingredients, but also usage guidelines and contraindications (i.e., what to look for in case something goes wrong). Furthermore, as we’ve all seen with the COVID-19 vaccine, medicines must undergo intensive review and testing before they are approved for use.

SBOMs are a great first step towards supply-chain transparency, but there is

more that needs to be done. As an analogy, many equate the SBOM to the

ingredient labels on food. With that perspective, we can see parallels between

our software supply chain and the food supply chain. Subsequently, the need for

end-to-end provenance and resistance against tampering should be clear. For this

reason, I am encouraged by Google’s proposed Supply-Chain Levels for Software

Artifacts (SLSA) framework that moves us towards a common language that

increases the transparency and integrity of our software supply chain. However,

for some software that performs critical functions (e.g., security), food is an

inadequate comparison. It may be more apt to compare this type of software to

medicine. This analogy brings forth additional considerations. For example, the

drug-facts label on medicines includes not just the ingredients, but also usage

guidelines and contraindications (i.e., what to look for in case something goes

wrong.) Furthermore, as we’ve all seen with the COVID-19 vaccine, medicines must

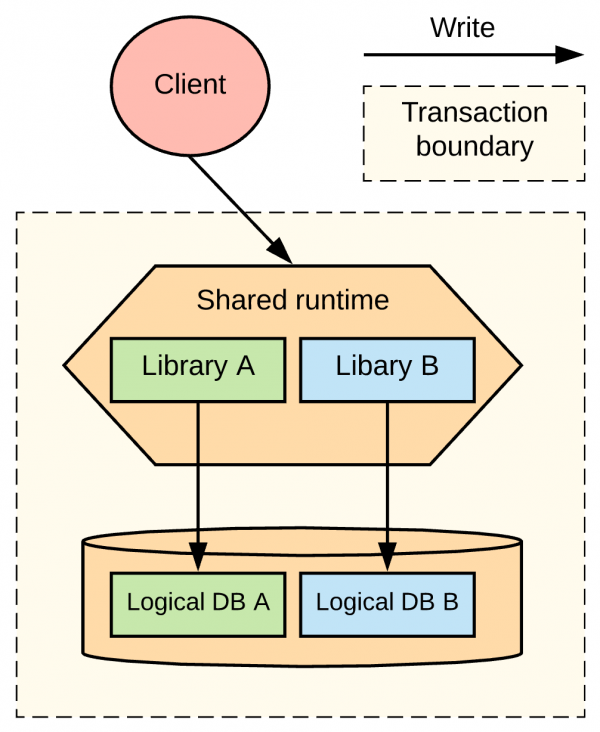

undergo intensive review and testing before it is approved for use. Data Consistency Between Microservices

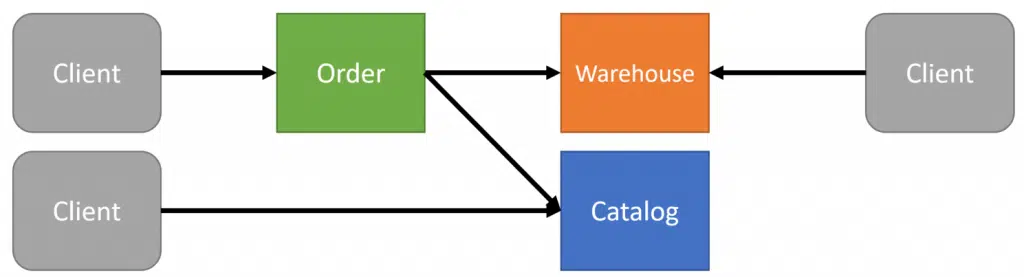

The root of the problem is querying data from other boundaries that will be immediately inconsistent the moment it’s returned, just as in my first example without a serializable transaction. If you’re making HTTP or gRPC calls to other services to retrieve data that you require to perform business logic, you’re dealing with inconsistent data. If you store a local cache copy that’s eventually consistent, you’re dealing with inconsistent data. Is having inconsistent data an issue? Go ask the business. If it is, then you need to get all the relevant data you require within the same boundary. There are two pieces of data we ultimately needed. We required the Quantity on Hand from the warehouse. In reality, in the distribution/warehouse domain, you don’t rely on the “Quantity on Hand”. When dealing with physical goods, the point of truth is what is actually in the warehouse, not what the software/database states. ... The system is eventually consistent with the real world. Because of this, Sales has the concept of Available to Promise (ATP), which is a business function for customer order promising based on what’s been ordered but not yet shipped, purchased but not yet received, etc.
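
As a rough illustration of the ATP idea described above, here is a minimal Python sketch. The formula (on-hand count, plus purchased-but-not-received, minus ordered-but-not-shipped) is a simplifying assumption for illustration; the names `StockPosition`, `available_to_promise`, and `can_promise` are hypothetical and do not come from the original article, and a real ATP calculation would typically also account for dates and other factors.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StockPosition:
    """Hypothetical snapshot of the figures Sales keeps inside its own boundary."""
    quantity_on_hand: int        # last recorded warehouse count (may lag reality)
    ordered_not_shipped: int     # customer orders already promised
    purchased_not_received: int  # inbound purchase orders not yet received


def available_to_promise(position: StockPosition) -> int:
    """Simplified ATP: what we have or expect, minus what is already promised."""
    atp = (
        position.quantity_on_hand
        + position.purchased_not_received
        - position.ordered_not_shipped
    )
    # Never report a negative quantity as promisable.
    return max(atp, 0)


def can_promise(position: StockPosition, requested: int) -> bool:
    """Decide whether a new order line can be promised from local data alone."""
    return requested <= available_to_promise(position)


if __name__ == "__main__":
    position = StockPosition(
        quantity_on_hand=10,
        ordered_not_shipped=4,
        purchased_not_received=6,
    )
    print(available_to_promise(position))  # 12
    print(can_promise(position, 13))       # False
```

The point of the sketch is that the decision uses only data owned by the Sales boundary, so no synchronous call to the warehouse service is needed at order time.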

5 ways CIOs are redefining teamwork for a hybrid world

Most CIOs face similar grand experiments as hybrid work environments are becoming permanent. They are evaluating which team structures have been successful remotely and are looking to replicate them, while balancing innovation, collaboration, mentorship, and culture transfer, which have traditionally been done in person. Some 30% of IT leaders surveyed by IDC say they prefer an “online-first” policy for collaboration, and practices that started during the pandemic will likely continue indefinitely. While many workers say they have been more productive working remotely, that doesn’t always equate to better teamwork. “We’ve squeezed a lot of innovation out of necessity, but some of that serendipitous innovation that occurs through creative collision has been less,” says Aaron De Smet, senior partner at McKinsey and Co., who spoke at the IDG Future of Work Summit in October. “Companies have started to get their heads around a hybrid workforce, but I don’t think they’ve cracked what hybrid interactions look like. More of the work people do is now part of a cross-functional team. It’s part of a collaborative effort …” De Smet says.

3 Signs You’re Ready For A Machine Learning Job When You’ve Come From Another Field

Google Opens Up Spanner Database With PostgreSQL Interface

The integration of PostgreSQL into Cloud Spanner is deep; it is not just some conversion overlay. At the database schema level, the PostgreSQL interface for Cloud Spanner supports native PostgreSQL data types and its data description language (DDL), which is a syntax for creating users, tables, and indexes for databases. The upshot is that a schema written for the PostgreSQL interface for Cloud Spanner will port to and run on any real PostgreSQL database, which means customers are not trapped on the Google Cloud if they use this service in production and want to switch. But customers do have to be careful. Spanner functions, like table interleaving, have been added to the PostgreSQL layer because they are important features in Spanner. You can get stuck because of these, since stock PostgreSQL does not offer them. ... The PostgreSQL interface for Cloud Spanner compiles PostgreSQL queries down to Spanner’s native distributed query processing and storage primitives and does not just support the PostgreSQL wire protocol, which allows for clients and myriad third-party analytics tools to interact with the PostgreSQL database.

The pursuit of transformation: Opportunities and pitfalls

Some transformations fail when there is a lack of alignment between the company’s strategy and its employees, customers and partners. There is a famous fable of an ant trying its hardest to change its trajectory but not realising that it is sitting on an elephant that’s going in the opposite direction. No matter how hard the little ant tries, it will not reach its destination as long as the elephant is not in alignment. All organizations have a culture and an emotional ethos, which if left unaddressed can sabotage the move to change. When Satya Nadella took over Microsoft in 2014, he had to first restructure the company to eliminate destructive internal competition so that all departments could focus on a common services goal. The result is a two-and-a-half-fold growth in the stock price over five years. On the other hand, when GE decided to launch GE Digital as a transformation vehicle, it did not release the subsidiary from the obligation of quarterly revenue and profitability targets. In addition, the subsidiary had to continue to meet GE’s software needs across business units, leaving it without the bandwidth to focus on true innovation and transformation.

How Machine Learning can be used with Blockchain Technology?

Machine learning algorithms have powerful learning capabilities, which can be applied to a blockchain to make the chain smarter than before. This integration can help improve the security of the blockchain’s distributed ledger. The computation power of ML can also be used to reduce the time taken to find the golden nonce and to improve data-sharing routes. Further, we can build better machine learning models using the decentralized data architecture of blockchain technology. Machine learning models can use the data stored in the blockchain network for prediction or for data analysis. Let’s take the example of a smart blockchain technology (BT)-based application in which data is collected from different sources such as sensors, smart devices, and IoT devices. The blockchain works as an integral part of this application, and a machine learning model can be applied to its data for real-time analytics or predictions.
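
For readers unfamiliar with the "golden nonce" mentioned above, the following is a minimal Python sketch of the plain brute-force search that a proof-of-work miner performs, which is the baseline any ML-assisted approach would be trying to shorten. It is a simplified assumption-laden illustration: it uses a single SHA-256 hash and a "leading zero hex digits" difficulty rule rather than any real network's exact rules, it contains no machine learning, and the function name `find_golden_nonce` and its parameters are hypothetical.

```python
import hashlib
from typing import Optional, Tuple


def find_golden_nonce(
    block_data: bytes,
    difficulty: int,
    max_nonce: int = 2_000_000,
) -> Optional[Tuple[int, str]]:
    """Try nonces in order until the hash starts with `difficulty` zero hex digits.

    Returns (nonce, hex_digest) on success, or None if no nonce below
    `max_nonce` satisfies the (simplified) difficulty rule.
    """
    required_prefix = "0" * difficulty
    for nonce in range(max_nonce):
        # Hash the block contents together with the candidate nonce.
        digest = hashlib.sha256(block_data + str(nonce).encode()).hexdigest()
        if digest.startswith(required_prefix):
            return nonce, digest
    return None


if __name__ == "__main__":
    result = find_golden_nonce(b"example block", difficulty=3)
    if result is not None:
        nonce, digest = result
        print(f"nonce={nonce} digest={digest}")
```

Each extra zero digit of difficulty multiplies the expected number of attempts by 16, which is why the search time matters.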

The tech recruiter – an unsung hero

The idiom ‘your first impression is your last impression’ holds true for recruiters. They have one opportunity to deliver that perfect elevator pitch to the candidate – convince them why your company provides the best opportunity for them – in the time it takes to ride an elevator. Landing the right impression will determine the candidate’s unalterable opinion and employment decision. To understand this better, let’s take a quick look at the talent landscape today. With the digitalization megatrend sweeping across Tech Inc., organizations are scurrying to bolster their workforce across technology skill sets. Economic Times reported that Indian IT firms plan to hire over 150,000 freshers in FY22, and NASSCOM remarked that India’s five largest companies are likely to hire 96,000 employees this year. Although this will be a huge boost for the $150 billion industry, the demand-supply technology talent gap is only widening. Today, it is the candidates who hold the power and have the pleasure of the last word, as prolonged notice periods allow them time to hedge their bets with the four or five job offers they have on hand. And the more skilled they are, the more offers they juggle.

Better Scrum Through Essence

First, an anecdote from Jeff Sutherland – ‘The VP of one of the biggest banks in the country [USA] said recently: “I have 300 product owners and only three were delivering. The other 297 were not delivering”. And, he said, “I checked on where the three that were delivering, where they got the right way of working. They went to your class. So, you need to tell me what you are doing differently.” I said, “What we are doing differently is using Ivar’s work with Essence to really clarify to people what is working, what is not working, what you need to do next to improve things.”’ By using Essence on many Scrum Master courses we (Jeff, I and others) have also observed that of the 21 components of the original Scrum Essentials, the average team implements 1/3 of them well, 1/3 of them poorly and 1/3 of them not at all. With that level and quality of implementation it is not surprising that we are not always seeing the full potential that Scrum offers. At the heart of getting better Scrum through Essence is the use of the Scrum Foundation, the Scrum Essentials and the Scrum Accelerator practices to play games, facilitate events and drive team improvements.

Quote for the day:

"Leaders know the importance of having someone in their lives who will unfailingly and fearlessly tell them the truth." -- Warren G. Bennis
