ThreatList: Pharma Mobile Phishing Attacks Turn to Malware

“The reason that mobile devices have become a primary target is because a

well-crafted attack can be close to impossible to spot,” said Schless. “Mobile

devices have smaller screens, simplified user interfaces, and people generally

exercise less caution on them than they do on computers.” Meanwhile, whereas

previously cybercriminals were relying on phishing attacks that attempted to

carry out credential harvesting, in 2020, the aim shifted to malware delivery.

For instance, in the fourth quarter of 2019, 83 percent of attacks aimed to

launch credential harvesting while 50 percent aimed to deliver malware.

However, in the first quarter of 2020, only 40 percent of attacks targeted

credentials, while 78 percent aimed to deliver malware. And, in the third

quarter of 2020, 27 percent targeted credentials, and 81 percent looked to

load malware. Researchers believe that this shift signifies that attackers are

investing more in malware when targeting pharmaceutical companies. For one, successful

delivery of spyware or surveillanceware to a device could result in

longer-term success for the attacker. Furthermore, said researchers, attackers

want to be able to observe everything the user is doing and look into the

files their device accesses and stores. ...”

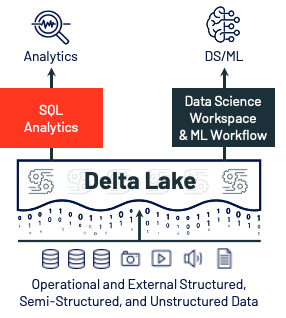

Don't put data science notebooks into production

Putting a notebook into a production pipeline effectively puts all the

experimental code into the production code base. Much of that code isn't

relevant to the production behavior, and thus will confuse people making

modifications in the future. A notebook is also a fully powered shell, which

is dangerous to include inside a production system. Safe operations require

reproducibility and auditability and generally eschew manual tinkering in the

production environment. Even well-intentioned people can make a mistake and

cause unintended harm. What we need to put into production is the concluding

domain logic and (sometimes) visualizations. In most cases, this isn't

difficult since most notebooks aren't that complex. They only encourage linear

scripting, which is usually small and easy to extract and put into a full

codebase. If it's more complex, how do we even know that it works? These

scripts are fine for a few lines of code but not for dozens. You’ll generally

want to break that up into smaller, modular and testable pieces so that you

can be sure that it actually works and, perhaps later, reuse code for other

purposes without duplication. So we’ve argued that having notebooks running

directly in production usually isn’t that helpful or safe. It’s also not hard

to incorporate the notebook’s logic into a structured code base.
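
As a rough illustration of the extraction described above, the sketch below shows how a few lines of linear notebook scripting might be split into small functions that can be tested and reused. The function names (`clean_prices`, `average_price`) and the cleaning rule are hypothetical, chosen only for this example; they do not come from the original article.

```python
from statistics import mean


def clean_prices(raw_prices):
    """Drop missing or non-positive values (a hypothetical cleaning rule)."""
    return [p for p in raw_prices if p is not None and p > 0]


def average_price(raw_prices):
    """Domain logic pulled out of the notebook: mean of the cleaned prices."""
    cleaned = clean_prices(raw_prices)
    if not cleaned:
        raise ValueError("no valid prices to average")
    return mean(cleaned)


if __name__ == "__main__":
    # In the notebook this was one inline cell; here it is a callable unit.
    print(average_price([10.0, None, -3.0, 20.0]))
```

Because each step is a plain function, it can be covered by unit tests and imported by a production pipeline without bringing the exploratory cells along.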

Why the CMO and CIO are no longer strange bedfellows

The CIO’s mandate is all systems, both customer-facing and internal. We know

that more and more this involves capturing and interpreting market and

customer data through artificial intelligence derived from data sensors. In

turn, IT leaders supply the capabilities needed to meet Line of Business

demands for agility and speed. The CMO’s mandate is to apply the derived

customer intelligence, needs, and habits, and profile customers down to the

individual level, to create an experience that meets the customer wherever,

whenever, and on any device. Understanding the customer is therefore central

to both mandates. The CIO needs to connect technology capabilities all the way

from the customer interaction back to the workload related to the customer,

sitting on the chosen infrastructure platform. The CMO needs an entire profile

of the customer, and the CIO builds the systems in order to create the

profile. In the current climate, businesses that fail to understand the

importance of the digital customer experience will undoubtedly fall behind.

Embracing the customer as a digital experience is essential for business

competitiveness and even survival.

Understanding Microsoft .NET 5

Technically this new release should be .NET Core 4, but Microsoft is

skipping a version number to avoid confusion with the current release of the

.NET Framework. At the same time, moving to a higher version number and

dropping Core from the name indicates that this is the next step for all

.NET development. Two projects still retain the Core name: ASP.NET Core 5.0

and Entity Framework Core 5, since legacy projects with the same version

numbers still exist. It’s an important milestone, marking the point where

you need to consider starting all new projects in .NET 5 and moving any

existing code from the .NET Framework. Although Microsoft isn’t removing

support from the .NET Framework, it’s in maintenance mode and won’t get any

new features in future point releases. All new APIs and community

development will be in .NET 5 (and 2021’s long-term support .NET 6). Some

familiar technologies such as Web Forms and the Windows Communication

Foundation are being deprecated in .NET 5. If you’re still using them, it’s

best to remain on .NET Framework 4 for now and plan a migration to newer,

supported technologies, such as ASP.NET’s Razor Pages or gRPC. There are

plans for community support for alternative frameworks that will offer

similar APIs.

Top 8 trends shaping digital transformation in 2021

Consumers want consistent engagement with brands across their preferred

channels. Seventy-three percent of shoppers use more than one channel during

their shopping journey. Per Deloitte, seventy-five percent of consumers

expect consistent interactions across all departments of a company.

Eighty-six percent of consumers say they want the ability to move between

channels when talking to a brand. Ninety-two percent of customers are

satisfied using live chat services -- making it the support channel that

leads to the highest customer satisfaction. And 78% of consumers use mobile

devices to connect with brands for customer service -- the number jumps to

90% of Millennials. Organizations need to invest in new digital methods of

customer service. ... Research shows that lines of business (LoBs) are

participating in digital transformation, with 68% of LoB users believing that IT and

LoBs should jointly drive digital transformation. In addition, 51% of LoB

users are frustrated with the speed at which their organization's IT department can

deliver digital projects. Outside of IT, the top three business roles with

integration needs include business analysts, data scientists, and customer

support.

Q&A on the Book Virtual Teams Across Cultures

Firstly, it is important to understand the meaning of culture. In the book,

I go into more detail, but for now we can say that culture is the meaning

that a group of people give to understand life and interpret their

experience. Culture is a social construct, meaning that it develops through

the interaction of people. As humans, we are influenced by many cultures,

such as company culture. The book focuses on country or location

culture. When we work with people from the same culture, things tend to

go smoothly. In general, we understand each other’s communication style,

work approach, reactions and ideas. It all makes sense because the

assumptions that drive us are similar. However, when we meet someone from a

different culture, we may not understand or we may be surprised by their

communication style, work approach, reactions and ideas. The assumptions

that drive their behavior are fundamentally different. This is what we call

culture shock – that feeling of confusion because the other person does not

make sense to us. People who work internationally have most likely

experienced culture shock. The critical aspect is how we respond to

it.

Can Low Code Measure Up to Tomorrow’s Programming Demands?

There is some disagreement on whether AI and machine learning will be able

to write code, says Forrester’s Jeffrey Hammond, vice president and

principal analyst serving CIO professionals. “One camp is saying, ‘In the

future, AI is going to write a lot of the code that developers might write

today,’” he says. That could lead to less demand for developers, with fewer

positions to be filled. The counter view, Hammond says, is that software

development is a creative process and profession. For all its capabilities,

AI has limits that might not match the novel thinking of developers, he

says. “Some of the most valuable code that’s written is also the most

creative code.” Today AI is used successfully in testing, Hammond says,

an area where many developers might be loath to write test cases. He sees

market adjacencies to that in development tools such as Microsoft Visual

Studio, which has a feature that can predict what a developer may type next

and then make that available for the developer to click. “You’ve got examples of

where these tools are augmenting developers’ working habits and making them

more productive,” Hammond says. In the creative space, Adobe Sensei

technology can help designers automate tedious tasks, he says, such as

stitching together photos or removing undesired artifacts from content.

Vulnerability Prioritization Tops Security Pros' Challenges

This should come as no surprise to anyone working in software development.

Software development organizations are using more application security tools

than ever before and from the earliest stages of development. Most are on top

of detection, but that's only the first step. Next comes prioritization: Once

you've detected the security issues, how can you make sure you are addressing

the most critical issues first? While prioritization is essential for

organizations that want to get ahead of their backlog, they are still

struggling to formulate a standardized prioritization process. Even though

vulnerability prioritization rated very high on application security

professionals' list of top challenges, the WhiteSource survey found that most

security and development teams don't follow a shared process for

prioritization. The survey asked to what extent the security and development

teams in their organization agree on which vulnerabilities need to be fixed,

and the results were concerning: 58% of respondents said they sometimes agree,

but each team follows ad hoc practices and separate guidelines. Only 31% of

respondents said they have an agreed-upon process to determine priorities.

Fast-Tracking AI Ethics Is Dicey And Shortsighted, Especially For Self-Driving Cars

Somehow, there needs to be a balance found that can appropriately make use of

the AI Ethics precepts and yet allow for flexibility when there is a real and

fully tangible basis to partially cut corners, as it were. Of course, some

would likely abuse the possibility of a slimmer version and always go that

route, regardless of any truly needed urgency of timing. Thus, there is a

chance of opening a Pandora’s box whereby a less-than-full AI Ethics protocol

becomes the default norm, rather than serving as a break-glass exception when

rarely so needed. It can be hard to put the Genie back into the bottle. In any

case, there are already some attempts at trying to craft a fast-track variant

of AI Ethics principles. We can perhaps temper those that leverage the urgent

version with both a stick and a carrot. The carrot is obviously that they are

seemingly able to get their AI completed sooner, while the stick is that they

will be held wholly accountable for not having taken the full nine yards on

the use of the AI Ethics. This is a crucial point that might be used against

those taking such a route and be a means to extract penalties via a court of

law, along with penalties in the court of public opinion.

How to boost your enterprise's immunity with cyber resilience

Cyber security and cyber resilience are often used interchangeably. While they

are related concepts, they're far from being synonyms, and it's crucial for

everyone to understand the difference. Security is like wearing a mask or

using other forms of personal protective equipment to reduce your risk of

being infected with a virus. Resiliency is, after having been infected,

fighting through the illness and giving your body a chance to return to good

health. This means that cyber security is the protection and restoration of IT

assets—hardware and software, in the cloud and on premises—and the data they

contain, to ensure their availability and integrity. Resiliency, on the other

hand, focuses on the ability of the business to withstand and recover from

these breaches. The scope extends beyond IT and information to business

operations and processes. The U.S. National Institute of Standards and

Technology (NIST) defines cyber resilience as "the ability of an information

system to continue to operate under adverse conditions or stress, even if in a

degraded or debilitated state, while maintaining essential operational

capabilities; and to recover to an effective operational posture in a time

frame consistent with mission needs."

Quote for the day:

"Limitations live only in our minds. But if we use our imaginations, our possibilities become limitless." -- Jamie Paolinetti
