If we put computers in our brains, strange things might happen to our minds

The difference between having a tool in your hand and having a brain-computer

interface -- essentially just another tool, albeit an advanced one -- is that

the BCI goes directly to the neurons that are helping you interact with the

world, says Justin Sanchez, a tech fellow at the Battelle Memorial Institute.

"So the potential for those neurons to be directly adapted for the brain

computer interface is that much higher [than with other tools]… there is

adaptation or plasticity of your neurons when you use a brain interface and

that plasticity can change in a wide variety of ways depending upon who you

are," he says. Research published last year found that even the use of a

non-invasive BCI (where brain signals are read by sensors worn on, rather than

in, the head) for a short time can induce brain plasticity. The study, which

asked people to imagine particular movements, found changes after just one

hour of use. The brain's ability to rewire itself in this way can come

in particularly handy in people who've had damage to their nervous systems --

for example, in people who've had strokes or spinal cord injuries. That

plasticity is particularly pertinent for BCIs, as researchers are hoping to

use the systems to help people with brain and spinal cord injuries to overcome

paralysis of their limbs or a lost sense of touch in parts of their body.

Zerologon explained: Why you should patch this critical Windows Server flaw now

Zerologon, tracked as CVE-2020-1472, is an authentication bypass vulnerability

in the Netlogon Remote Protocol (MS-NRPC), a remote procedure call (RPC)

interface that Windows uses to authenticate users and computers on

domain-based networks. It was designed for specific tasks such as maintaining

relationships between members of domains and the domain controller (DC), or

between multiple domain controllers across one or multiple domains and

replicating the domain controller database. One of Netlogon's features is that

it allows computers to authenticate to the domain controller and update their

password in the Active Directory, and it's this particular feature that makes

the Zerologon flaw dangerous. In particular, the vulnerability allows an

attacker to impersonate any computer to the domain controller and change its

password, including the password of the domain controller itself. This results

in the attacker gaining administrative access and taking full control of the

domain controller and therefore the network. Zerologon is a privilege

escalation vulnerability and is rated as critical by Microsoft even though the

company said in the original advisory that exploitation was less likely.

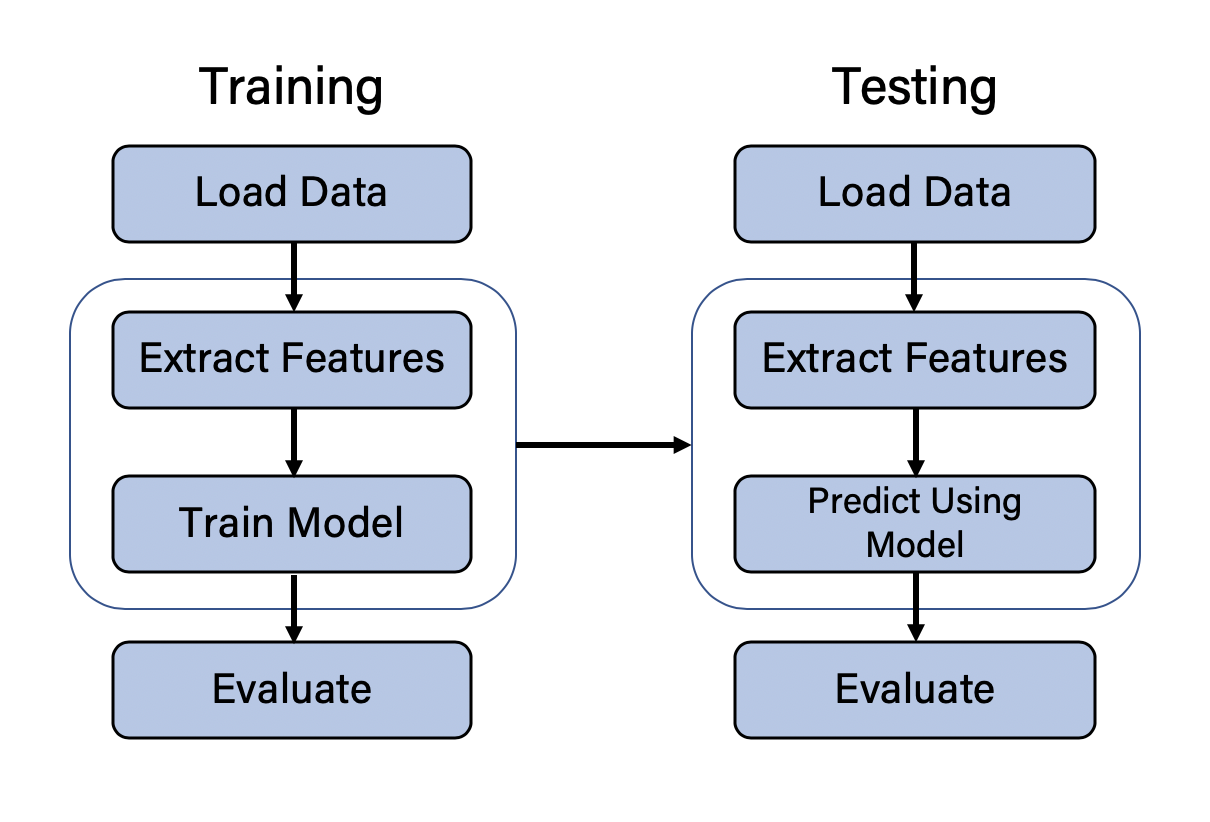

14 open source tools to make the most of machine learning

Apache Mahout provides a way to build environments for hosting machine

learning applications that can be scaled quickly and efficiently to meet

demand. Mahout works mainly with another well-known Apache project, Spark, and

was originally devised to work with Hadoop for the sake of running distributed

applications, but has been extended to work with other distributed back ends

like Flink and H2O. ... Apple’s Core ML framework lets you integrate machine

learning models into apps, but uses its own distinct learning model format.

The good news is you don’t have to pretrain models in the Core ML format to

use them; you can convert models from just about every commonly used machine

learning framework into Core ML with Core ML Tools. Core ML Tools runs as a

Python package, so it integrates with the wealth of Python machine learning

libraries and tools. Models from TensorFlow, PyTorch, Keras, Caffe, ONNX,

Scikit-learn, LibSVM, and XGBoost can all be converted. Neural network models

can also be optimized for size by using post-training quantization (e.g., to a

small bit depth that’s still accurate).
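
To make the idea of post-training quantization concrete, here is a minimal sketch in plain Python. It does not use the Core ML Tools API; the function names (`quantize`, `dequantize`) and the sample weights are hypothetical, and linear quantization is only one of several possible schemes. It shows how floating-point weights can be stored as small integer codes and recovered with a bounded error.

```python
def quantize(weights, bits=8):
    """Map floats onto 2**bits evenly spaced integer levels (linear quantization)."""
    levels = 2 ** bits - 1
    lo, hi = min(weights), max(weights)
    # Guard against constant weights, where hi == lo would give a zero scale.
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((w - lo) / scale) for w in weights]
    return codes, scale, lo


def dequantize(codes, scale, lo):
    """Recover approximate floats from the integer codes."""
    return [c * scale + lo for c in codes]


# Hypothetical weights, chosen only for illustration.
weights = [-0.82, -0.11, 0.0, 0.37, 0.95]
codes, scale, lo = quantize(weights, bits=8)
restored = dequantize(codes, scale, lo)

# The worst-case rounding error is about half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(codes)
print(max_error)
```

Each weight now fits in one byte instead of four, at the cost of an error no larger than roughly half a quantization step. Lowering `bits` shrinks the model further but widens that step, which is the size-versus-accuracy trade-off the excerpt alludes to.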

Easing the pressures of new technologies on the Internet

One constant we have witnessed over the history of the Internet is that when

underlying technologies improve, the new experiences they enable quickly

follow, taking full advantage of the new technology and pushing it to its

limits. As more and more devices are able to connect to the Internet at ever

higher speeds, including through 5G connectivity, the demand for online

content will grow dramatically. Much of this traffic will be video-heavy and

delivered in high definition. For example, Analysys Mason predicts that 5G

will be a significant enabler of cloud gaming due to the lower latencies and

higher speeds it offers. Video delivered at faster frame rates and the need

for 360-degree content for the growing use of AR and VR is likely to result in

around four times as much traffic as typical video. Another example is

streaming of live sports events. The 2019 VIVO Indian Premier League cricket

tournament set records for reported online viewership, exceeding the total

2018 viewership within the first three weeks of the 2019 tournament. In fact,

the final saw 18.6 million concurrent viewers, an increase of 80% over the

previous year – and with 91% watching via mobile.

How Automation is changing the landscape of Enterprises?

Convenience is a great category for this. However, in larger retail

environments or when the packaging is less structured, other experiences have

limited friction enabled by much less costly technology. For example, in

Europe, it is common to see retailers that provide mobile self-scanning

solutions or banks of modular self-checkout stands, which allow customers to

eliminate the wait time they typically encounter at a traditional checkout.

Technology improvements in computer vision have also helped start-ups develop

shopping carts that can automatically identify products as they are placed

within the carts, creating yet another option. One truth that will remain

constant in retail is that customer convenience is a core value proposition,

so limiting friction in the buying experience will always have a place in the

market. ... The next evolution is to leverage artificial intelligence

technologies like machine learning, computer vision, natural language

processing, prescriptive analytics, and others to further eliminate the

cognitive load on process execution. In the short term, roles focused on

repetitive tasks, especially in what have typically been termed back-office

functions that do not directly interact with shoppers, patients, and customers

will be the most impacted by RPA.

What is Intelligent Automation

Just as machines have replaced humans in industry, Intelligent Automation

solutions have started replacing humans in every industry, freeing their time

for more creative and innovative tasks. Areas including Marketing & Sales,

Human Resources, Customer support, Finance, IT support, Business Process

Management and Operations Excellence are using Intelligent Automation to drive

more value. In recent years these emerging technologies have gained

substantial momentum. This, in turn, has increased the number of technology firms

and venture investors shifting their attention towards implementing

intelligent automation solutions. Major automakers like Audi, BMW, Mercedes

Benz, Volvo and Nissan are planning to introduce autonomous vehicles that use

IA. IBM’s Watson processes huge amounts of textual information in order to

respond quickly to complex requests for medical treatment plans. IA is

used in commercial processes such as marketing systems that tailor offers to

customers based on their preferences and credit card processing systems that

help detect fraudulent activity.

Microsoft announces Power BI Teams integration, NLP and per-user Premium subscription

While Microsoft is playing catch-up here with other BI products that already

offered narrative summarizations, it has worked hard to integrate its own

implementation fully into the Power BI paradigm. The feature is surfaced

through a drag-and-drop visual that is contextually updated when the

underlying data changes through a filter, a slicer or the cross-filtering that

takes place when a data element in another visual is selected. This makes the

learning curve negligible for existing Power BI users. And combining smart

narratives with "Q&A" natural language query capabilities will make Power

BI a strong contender in the augmented analytics arena. Another major area

of enhancement to Power BI's usability comes in the form of a dedicated Power

BI add-in application for Microsoft's Teams collaboration platform, released

as a preview. The Teams integration includes the ability to browse reports,

dashboards and workspaces and directly embed links to them in Teams channel

chats. It's not just about linking though, as Teams users can also browse

Power BI datasets, either through an alphabetical listing or by

reviewing a palette of recommended ones. In both cases, datasets previously

marked as Certified or Promoted will be identified as such, and Teams users

will have the ability to view their lineage, generate template-based reports on

them, or just analyze their data in Excel.

Adopting interaction analytics to improve contact centre performance

Interaction analytics allows organisations to analyse 100% of calls or

text-based conversations that come into the contact centre, automatically. By

adopting this technology, with the right partner, organisations are moving

away from relying on an inconsistent and subjective sample to a

holistic, consistent and objective view. “Analytics technologies allow

organisations to take away the manual effort of monitoring contact centre

performance and let technology guide everything, from the calls of interest,

to the issues of interest, to the opportunities, to the challenges and the

complaints. Interaction analytics provides a holistic view of what’s really

going on with contact centre interactions,” continued Sherlock. The return on

investment is also multifaceted, both in terms of cost savings (there’s no need

for people to manage or monitor every call, as interaction analytics automates

the experience) and in identifying new revenue opportunities. “Analytics can

help produce sales opportunities and allow organisations to collect more

revenue by upselling to the customer base — a holistic view of interactions

will allow those in sales to see where they’re getting consistent issues being

spoken about a particular product or service and then go back to source to

modify that product/service,” explained Sherlock.

What Does an Enterprise Architect Do Exactly?

Enterprise architects are responsible for planning how to use and manage all

the IT functions of an organization. They must find a way to make them

as affordable and efficient as possible. It’s up to the enterprise architect to

develop the plan. They have a great deal of freedom in deciding what is best.

They must balance this against the needs of the organization they work for and

its customers. The plan must be the most effective utilization of enterprise

architecture possible. It must address any issues the organization currently

faces. If there’s a better way to use available resources, it should be

included. Each plan an enterprise architect comes up with must align with the

goals of the business they work for. Perhaps they want to decrease the time it

takes to send and receive information. Switching to a faster server may be an

effective strategy. Enterprise architects must also be able to communicate

their plans to everyone else. Anyone who doesn’t understand all the steps

won’t be able to implement them in their work. Creating a strategy for how to

manage an organization’s IT is only the first step. After that, it’s the

enterprise architect’s responsibility to implement it.

Enterprise architecture strategy experts offer pandemic tips

Right now, the information needs to be sharper, and it needs to be opinionated.

Don't reinvent the wheel. Use the existing artifacts you have. Insert yourself

as the epicenter of actionable information and sharpen the insights. You really

want to drive the needle and come to the table opinionated. Don't overwhelm your

stakeholders with options. Your business model canvases and capability maps are

great in EA but far too detailed for the distracted executive of today. So,

we're pivoting into executive onboarding dossiers. When new executives come on

board, we give them almost a CliffsNotes version, and it saves them hours. Many

other examples of your application portfolios can be turned into run books,

succession plans and flex workforce plans. The key takeaway is we want to keep

EA relevant. It's about adapting to the times, sharpening your narrative with

the business and not being afraid to step on some toes. ... Lots of

organizations are realizing that business capability models are the most

powerful areas they can attack as they struggle with COVID. They're identifying

and focusing on the most important capabilities to help them survive through the

pandemic and then throwing in a couple of capabilities that differentiate the

organization when we come to the other side of COVID.

Quote for the day:

"Integrity is the soul of leadership! Trust is the engine of leadership!" -- Amine A. Ayad