Why we need XAI, not just responsible AI

There are many techniques organisations can use to develop XAI. As well as continually teaching their system new things, they need to ensure that it is learning correct information and does not use one mistake or piece of biased information as the basis for all future analysis. Multilingual semantic searches are vital, particularly for unstructured information: they can filter out the white noise and minimise the risk of seeing the same risk or opportunity multiple times. Organisations should also add a human element to their AI, particularly if building a watch list. If a system automatically red-flags criminal convictions without scoring them for severity, a person with a speeding fine could be treated in the same way as one serving a long prison sentence. For XAI, systems should always err on the side of the positive: if a red flag is raised, the AI system should not give a flat ‘no’ but should raise an alert for checking by a human. Finally, even the best AI system should generate a few mistakes. Performance should be an eight out of ten, never a ten, or it becomes impossible to trust that the system is working properly. Mistakes can be addressed, and performance continually tweaked, but there will never be perfection.
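
A minimal Python sketch of the severity-scoring and human-review idea above. The offence names, the `SEVERITY` weights and the `REVIEW_THRESHOLD` cut-off are all hypothetical. The point is only that convictions are scored rather than treated alike, and that the system never returns a flat 'no': a record is either cleared or escalated to a person.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    CLEAR = "clear"
    HUMAN_REVIEW = "human_review"  # escalate to a person; never an automatic rejection


# Hypothetical severity weights (0-10); a real system needs domain-specific scoring.
SEVERITY = {
    "speeding_fine": 1,
    "petty_theft": 4,
    "fraud": 8,
    "violent_offence_long_sentence": 10,
}

REVIEW_THRESHOLD = 5  # hypothetical cut-off for sending a record to a human


@dataclass
class Record:
    name: str
    offences: list[str]


def screen(record: Record) -> tuple[Decision, int]:
    """Score a record by its most severe offence and either clear or escalate it."""
    # Unknown offences default to the review threshold so a person checks them.
    score = max(
        (SEVERITY.get(o, REVIEW_THRESHOLD) for o in record.offences), default=0
    )
    if score >= REVIEW_THRESHOLD:
        return Decision.HUMAN_REVIEW, score
    return Decision.CLEAR, score


print(screen(Record("driver", ["speeding_fine"])))  # (<Decision.CLEAR: 'clear'>, 1)
```

Defaulting unrecognised offences to the review threshold is a deliberate choice in this sketch: when the system is unsure, it hands the case to a human rather than deciding on its own.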

What classic software developers need to know about quantum computing

There are many different parts of quantum that are exciting to study. One is quantum computing, using quantum to do any sort of information processing; the other is communication itself. And maybe the third part, which doesn't get as much media attention but should, is sensing: using quantum systems to sense things much more sensitively than you could classically. Think about sensing very small magnetic fields, for example. The communication aspect is just as important because, at the end of the day, it's important to have secure communication between your quantum computers as well. So this is something exciting to look forward to. ... So the first tool that you need, and one of the most important tools, is the one that gives you access to the quantum computers. If you go to quantum-computing.ibm.com and create an account there, we give you immediate access to several quantum computers, which, every time I say it, just blows my mind, because four years ago this wasn't a thing. You couldn't go online and access a quantum computer. I went to grad school because I wanted to do quantum research and needed access to a lab to do this work.

Why Darknet Markets Persist

"There are two main reasons here: the lack of alternatives and the ease of use of marketplaces," researchers at the Photon Research Team at digital risk protection firm Digital Shadows tell Information Security Media Group. At least for English-speaking users, such considerations often appear to trump other options, which include encrypted messaging apps as well as forums devoted to cybercrime or hacking. And many users continue to rely on markets despite the threat of exit scams, getting scammed by sellers or getting identified and arrested by police. Another option is Russian-language cybercrime forums, which continue to thrive, with many hosting high-value items. But researchers say that, even when armed with translation software, English speakers often have difficulty coping with Russian cybercrime argot. Many Russian speakers also refuse to do business with anyone from the West. ... Demand for new English-language cybercrime markets continues to be high because so many existing markets get disrupted by law enforcement agencies or have administrators who run an exit scam. Before Empire, other markets that closed after their admins "exit scammed" have included BitBazaar in August, Apollon in March and Nightmare in August 2019.

Open Data Institute explores diverse range of data governance structures

The involvement of different kinds of stakeholders in any particular institution also has an effect on what kinds of governance structures would be appropriate, as different incentives are needed to motivate different actors to behave as responsible and ethical stewards of the data. In the context of the private sector, for example, enterprises that would normally adopt a cut-throat, competitive mindset need to be incentivised to collaborate. Meanwhile, cash-strapped third-sector organisations, such as charities and non-governmental organisations (NGOs), need more financial backing to realise the potential benefits of data institutions. “Many [private sector] organisations are well-versed in stewarding data for their own benefit, so part of the challenge here is for existing data institutions in the private sector to steward it in ways that unlock value for other actors, whether that’s economic value from, say, a competition point of view, but then also from a societal point of view,” said Hardinges. “Getting organisations to consider themselves data institutions, and in ways that unlock public value from private data, is a really important part of it.”

5 supply chain cybersecurity risks and best practices

Falling prey to the "it couldn't happen to us" mentality is a big mistake. But despite clear evidence that supply chain cyber attacks are on the rise, some leaders aren't facing that reality, even if they do understand techniques to build supply chain resilience more broadly. One of the biggest supply chain challenges is leaders thinking they're not going to be hacked, said Jorge Rey, the principal in charge of information security and compliance for services at Kaufman Rossin, a CPA and advisory firm in Miami. To fully address supply chain cybersecurity, supply chain leaders must face the risk reality. The supply chain is a veritable smorgasbord of exploit opportunities: there are so many information and product handoffs in even a simple one, and each handoff represents risks, especially where digital technology is involved but easily overlooked. ... Supply chain cyber attacks are carried out with different goals in mind, from ransom to sabotage to theft of intellectual property, Atwood said. These cyberattacks can also take many forms, such as hijacking software updates and injecting malicious code into legitimate software, as well as targeting IT and operational technology and hitting every domain and any node, Atwood said.

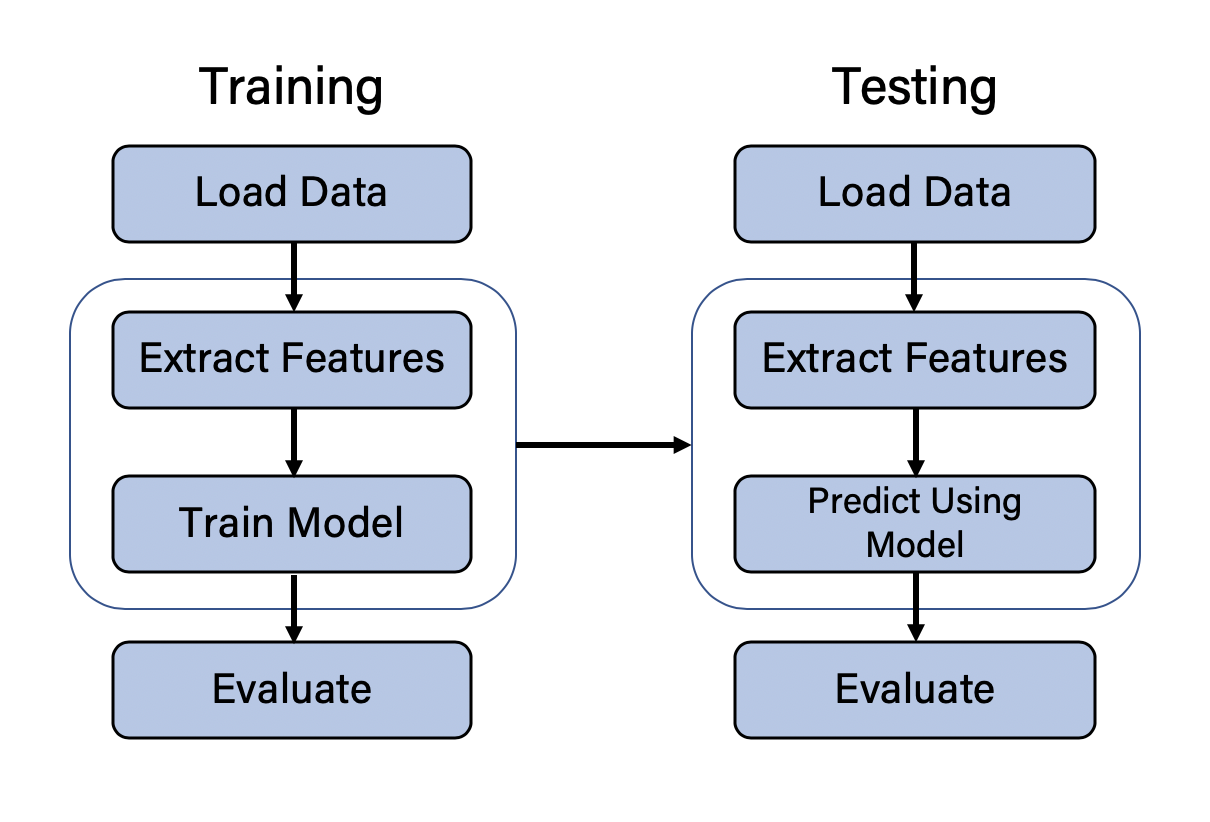

Moving Toward Smarter Data: Graph Databases and Machine Learning

Data plays a significant role in machine learning, and formatting it in ways that a machine learning algorithm can train on is imperative. Data pipelines were created to address this. A data pipeline is a process through which raw data is extracted from the database (or other data sources), transformed, and then loaded into a form that a machine learning algorithm can train and test on.

Connected features are those features that are inherent in the topology of the graph. For example, how many edges (i.e., relationships) to other nodes does a specific node have? If many nodes are close together in the graph, a community of nodes may exist there. Some nodes will be part of that community while others may not. If a specific node has many outgoing relationships, that node’s influence on other nodes could be higher, given the right domain and context. Like other features extracted from the data and used for training and testing, connected features can be extracted with a custom query based on an understanding of the problem space. However, given that these patterns can be generalized for all graphs, unsupervised algorithms have been created that extract key information about the topology of your graph data, and their output can be used as features for training your model.
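
As a sketch of what connected features can look like, the Python below derives per-node features from a plain edge list: out-degree (outgoing relationships), in-degree, total degree, and the size of the node's weakly connected component. Component size is only a crude stand-in for community membership; graph platforms typically use dedicated community-detection algorithms (for example label propagation or Louvain) instead. The edge list, the function name `connected_features` and the feature names are illustrative assumptions, not any particular product's API.

```python
from collections import defaultdict


def connected_features(edges: list[tuple[str, str]]) -> dict[str, dict[str, int]]:
    """Compute simple topology-based features for every node in a directed edge list."""
    out_deg: dict[str, int] = defaultdict(int)
    in_deg: dict[str, int] = defaultdict(int)
    adj: dict[str, set[str]] = defaultdict(set)  # undirected view for component search
    nodes: set[str] = set()
    for src, dst in edges:
        out_deg[src] += 1
        in_deg[dst] += 1
        adj[src].add(dst)
        adj[dst].add(src)
        nodes.update((src, dst))

    # Label weakly connected components with an iterative depth-first search.
    component: dict[str, int] = {}
    next_id = 0
    for start in sorted(nodes):
        if start in component:
            continue
        component[start] = next_id
        stack = [start]
        while stack:
            node = stack.pop()
            for neighbour in adj[node]:
                if neighbour not in component:
                    component[neighbour] = next_id
                    stack.append(neighbour)
        next_id += 1

    sizes: dict[int, int] = defaultdict(int)
    for cid in component.values():
        sizes[cid] += 1

    # One feature row per node, ready to join with other tabular features.
    return {
        n: {
            "out_degree": out_deg[n],
            "in_degree": in_deg[n],
            "degree": out_deg[n] + in_deg[n],
            "component_size": sizes[component[n]],
        }
        for n in sorted(nodes)
    }


features = connected_features([("a", "b"), ("a", "c"), ("b", "c"), ("d", "e")])
print(features["a"])  # {'out_degree': 2, 'in_degree': 0, 'degree': 2, 'component_size': 3}
```

In a pipeline, rows like these would be produced in the transform step and loaded alongside the other features the model trains and tests on.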

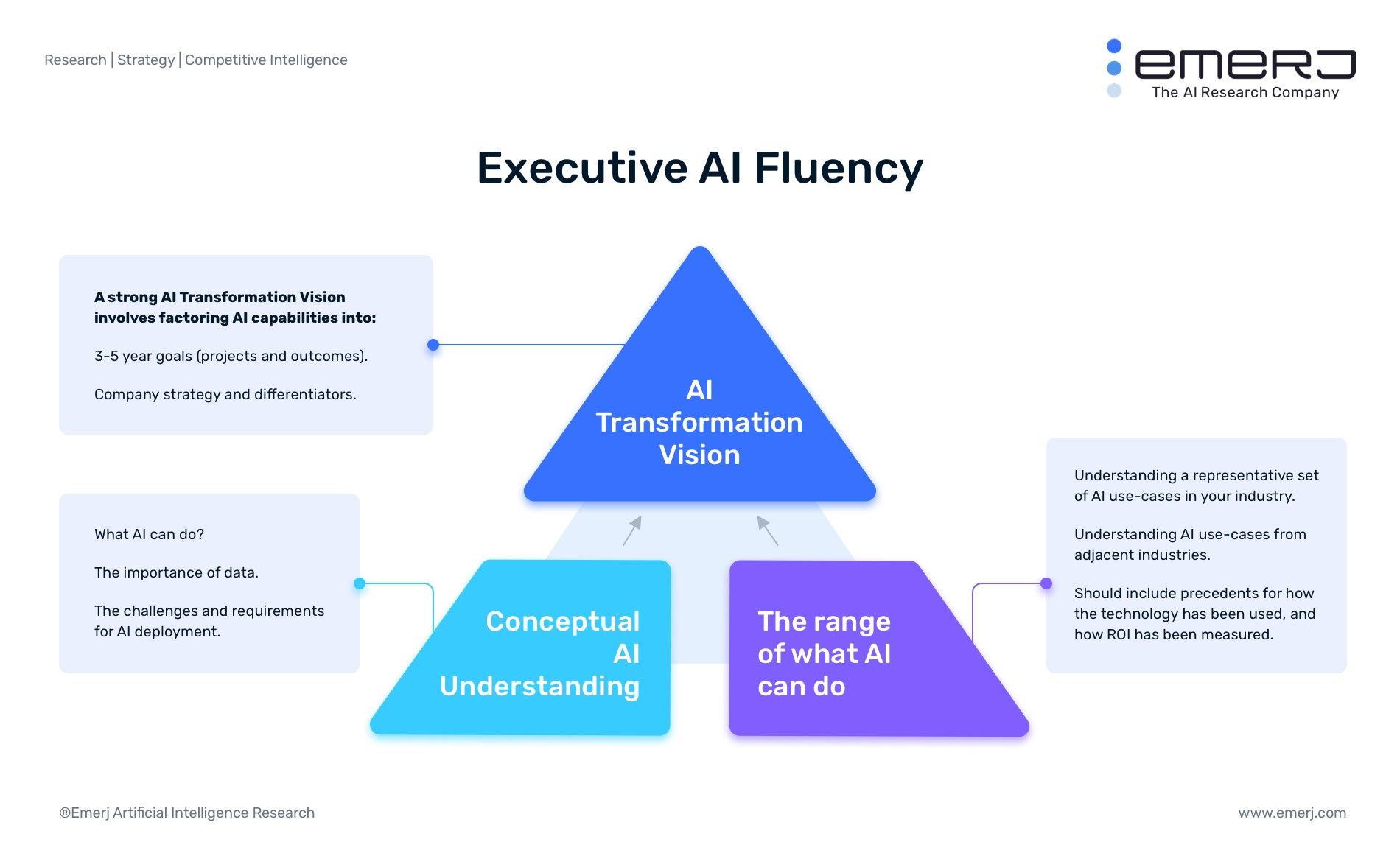

Dark Side of AI: How to Make Artificial Intelligence Trustworthy

Malicious inputs to AI models can come in the form of adversarial AI, manipulated digital inputs or malicious physical inputs. Adversarial AI may come in the form of socially engineering humans using an AI-generated voice, which can be used for any type of crime and is considered a “new” form of phishing. For example, in March of last year, criminals used an AI-synthesised voice to impersonate a CEO and demand a fraudulent transfer of $243,000 to their own accounts. Query attacks involve criminals sending queries to organizations’ AI models to figure out how they work, and may come in black box or white box form. Specifically, a black box query attack determines the uncommon, perturbed inputs to use for a desired output, such as financial gain or avoiding detection. Some academics have been able to fool leading translation models by manipulating the input, resulting in an incorrect translation. A white box query attack regenerates a training dataset to reproduce a similar model, which might result in valuable data being stolen. One example was a voice recognition vendor that fell victim to a new, foreign vendor counterfeiting its technology and then selling it, which allowed the foreign vendor to capture market share based on stolen IP.
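
To make the black box query idea concrete, here is a toy Python sketch. `hidden_model` is a hypothetical linear classifier standing in for a deployed model. The attacker only calls it and sees a yes/no answer, then randomly perturbs an input (each feature by at most `step`) until the answer flips. Real attacks are far more query-efficient, so treat this as an illustration of the principle, not a realistic tool.

```python
import random
from typing import Callable, Optional, Sequence


def hidden_model(features: Sequence[float]) -> bool:
    """Hypothetical deployed classifier (True = flagged); the attacker never sees the weights."""
    weights = (0.6, 0.3, 0.1)
    return sum(w * x for w, x in zip(weights, features)) > 0.5


def black_box_query_attack(
    query: Callable[[Sequence[float]], bool],
    x: Sequence[float],
    step: float = 0.2,
    max_queries: int = 1000,
    seed: int = 0,
) -> tuple[Optional[list[float]], int]:
    """Random search for a perturbed copy of x whose output differs from x's.

    Uses only query access to the model. Returns the adversarial input (or None)
    and the number of perturbed queries made.
    """
    rng = random.Random(seed)
    target = not query(x)  # the output the attacker wants, e.g. "not flagged"
    for n in range(1, max_queries + 1):
        candidate = [xi + rng.uniform(-step, step) for xi in x]
        if query(candidate) == target:
            return candidate, n
    return None, max_queries


x = [0.6, 0.5, 0.5]
adversarial, queries_used = black_box_query_attack(hidden_model, x)
# The original input is flagged; the perturbed one, found purely by querying, is not.
print(hidden_model(x), hidden_model(adversarial), queries_used)
```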

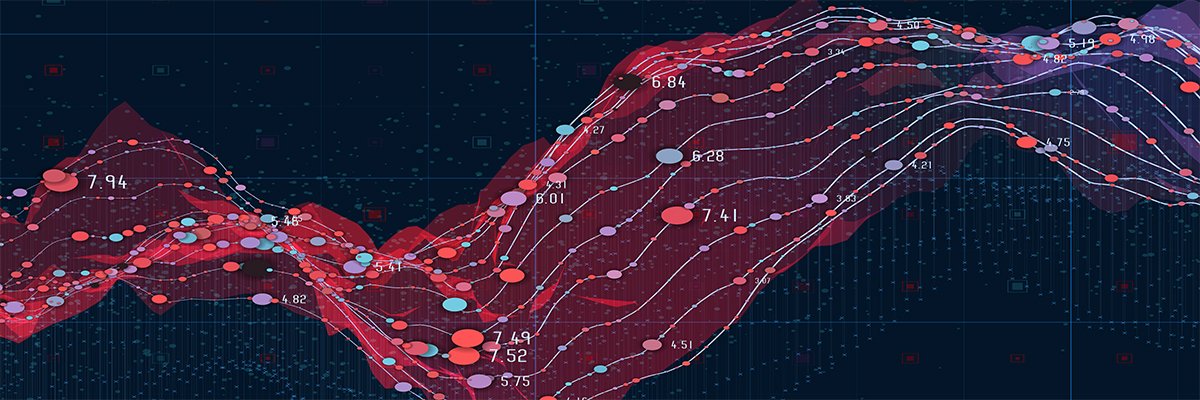

DDoS attacks rise in intensity, sophistication and volume

The total number of attacks increased by more than two and a half times during January through June of 2020 compared to the same period in 2019. The increase was felt across all size categories, with the biggest growth happening at opposite ends of the scale: the number of attacks sized 100 Gbps and above grew a whopping 275%, and the number of very small attacks, sized 5 Gbps and below, increased by more than 200%. Overall, small attacks sized 5 Gbps and below represented 70% of all attacks mitigated between January and June of 2020. “While large volumetric attacks capture attention and headlines, bad actors increasingly recognise the value of striking at low enough volume to bypass the traffic thresholds that would trigger mitigation, to degrade performance or precision-target vulnerable infrastructure like a VPN,” said Michael Kaczmarek, Neustar VP of Security Products. “These shifts put every organization with an internet presence at risk of a DDoS attack, a threat that is particularly critical with global workforces reliant on VPNs for remote login. VPN servers are often left vulnerable, making it simple for cybercriminals to take an entire workforce offline with a targeted DDoS attack.”
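
The point about sub-threshold attacks can be illustrated with a short Python sketch. The 5 Gbps global trigger, the VPN gateway's 2 Gbps capacity and the 80% headroom factor are hypothetical numbers. The sketch shows how an attack that is "very small" by volume can sit below a static mitigation threshold while still exceeding what a specific service can absorb, along with one possible capacity-aware alternative.

```python
from dataclasses import dataclass

# Hypothetical global trigger: only traffic above this volume is scrubbed.
GLOBAL_THRESHOLD_GBPS = 5.0


@dataclass
class Target:
    name: str
    capacity_gbps: float  # what the service (e.g. a VPN gateway) can actually absorb


def mitigated(traffic_gbps: float, threshold: float = GLOBAL_THRESHOLD_GBPS) -> bool:
    """Static volumetric trigger: fires only when traffic exceeds the global threshold."""
    return traffic_gbps > threshold


def saturated(target: Target, traffic_gbps: float) -> bool:
    """The service degrades once traffic exceeds what it can handle."""
    return traffic_gbps > target.capacity_gbps


def capacity_aware_alert(target: Target, traffic_gbps: float, headroom: float = 0.8) -> bool:
    """Hypothetical refinement: alert relative to the target's own capacity."""
    return traffic_gbps > headroom * target.capacity_gbps


vpn = Target("vpn-gateway", capacity_gbps=2.0)
attack_gbps = 3.0  # "very small" by the 5 Gbps cut-off used in the report

print(mitigated(attack_gbps))                  # False: below the global trigger
print(saturated(vpn, attack_gbps))             # True: enough to take the VPN offline
print(capacity_aware_alert(vpn, attack_gbps))  # True: a per-target check catches it
```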

Group Privacy and Data Trusts: A New Frontier for Data Governance?

The concept of collective privacy shifts the focus from an individual controlling their privacy rights to a group or a community having data rights as a whole. In the age of Big Data analytics, the NPD Report does well to discuss the risks of collective privacy harms to groups of people or communities. It is essential to look beyond traditional notions of privacy centered around an individual, as Big Data analytical tools rarely focus on individuals, but rather on drawing insights at the group level, or on “the crowd” of technology users. In a revealing example from 2013, data processors who accessed New York City’s taxi trip data (including trip dates and times) were able to infer with a degree of accuracy whether a taxi driver was a devout Muslim or not, even though data on the taxi licenses and medallion numbers had been anonymised. The data processors linked pauses in taxi trips to regular prayer times to arrive at their conclusion. Such findings and classifications may result in heightened surveillance or discrimination for such groups or communities as a whole. ... It might be in the interest of such a community to keep details about their ailment and residence private, as even anonymised data pointing to their general whereabouts could lead to harassment and the violation of their privacy.

Analysis: Online Attacks Hit Education Sector Worldwide

The U.S. faces a rise in distributed denial-of-service attacks, while Europe is seeing an increase in information disclosure attempts, many of them resulting from ransomware incidents, the researchers say. Meanwhile, in Asia, cybercriminals are taking advantage of vulnerabilities in the IT systems that support schools and universities to wage a variety of attacks. DDoS and other attacks are surging because threat actors see an opportunity to disrupt schools resuming online education and potentially earn a ransom for ending an attack, according to Check Point and other security researchers. "Distributed denial-of-service attacks are on the rise and a major cause of network downtime," the new Check Point report notes. "Whether executed by hacktivists to draw attention to a cause, fraudsters trying to illegally obtain data or funds, or as a result of geopolitical events, DDoS attacks are a destructive cyber weapon. Beyond education and research, organizations from across all sectors face such attacks daily." In the U.S., the Cybersecurity and Infrastructure Security Agency has warned of an increase in targeted DDoS attacks against financial organizations and government agencies.

Quote for the day:

"One of the most sincere forms of respect is actually listening to what another has to say." -- Bryant H. McGill
