Crypto-Friendly Banking Platform Cashaa Expanding in India, US, Africa

India’s cryptocurrency market has been growing rapidly ever since the

country’s Supreme Court quashed the RBI circular that banned financial

institutions from providing services to crypto businesses. India currently

does not have any direct crypto regulations, but there are rumors of the

government discussing the bill submitted by the inter-ministerial committee

headed by former Finance Secretary Subhash Chandra Garg, which seeks to ban

cryptocurrencies like bitcoin. However, the Indian crypto industry firmly

believes that this bill is outdated and will not be the one the government

introduces. “The Indian government is currently engaging with various

stakeholders and trying to work out a solution. India today stands at a

juncture, where it can actually embrace the digital currency ecosystem as it

is pushing for the digital revolution and is leading the way in the fintech

segment,” Gaurav opined. Cashaa will also focus on the U.S. next year, the CEO

explained. “We have already started issuing USD accounts regulated by the

Banking Division of Colorado to our existing business customers as beta

users,” he further shared with news.Bitcoin.com, adding that some crypto

clients already using Cashaa’s USD accounts include Nexo, CoinDCX, and

Unocoin.

Surging CMS attacks keep SQL injections on the radar during the next normal

Sending malicious commands to a web application can result in disclosure of

users’ private data, and the attacker can gain access to a user’s computer.

This method of injecting code within the same local execution infrastructure

is relatively easy when compared to remote injection, which requires more

specialized tools and skills. Here, the remote hacker only needs a security

flaw that offers a small window to send commands to the remote execution

environment, enabling the malicious code to run without any evaluation. As a

result, attackers can create a remote entrance to reach the target

environment, and oftentimes the administrator has no knowledge of the system

being compromised. Most of the time, attackers make use of remote code

execution security flaws that are on the web surface or within different

narrow-use and specific ports and protocols. When a CMS is attacked, the

remote code execution flaw often results from a connected platform such as the

.NET environment, PHP scripting language, or file-sharing service or database

that has remote code execution vulnerabilities.
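
To make the SQL injection risk named in the headline concrete, here is a minimal Python sketch using the standard-library `sqlite3` module. The `users` table, the function names, and the payload are illustrative assumptions rather than anything taken from the article, and a real CMS would use its own database layer. The sketch only contrasts building a query by string concatenation with passing the value as a bound parameter.

```python
import sqlite3


def make_demo_db():
    """Create an in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn, name):
    # Vulnerable: the input becomes part of the SQL text itself,
    # so quote characters in it can change the meaning of the query.
    query = "SELECT id, name FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameterized: the value is bound separately from the SQL text,
    # so it is always treated as data and never as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_demo_db()
    payload = "x' OR '1'='1"  # hypothetical attacker-supplied input
    print("unsafe:", find_user_unsafe(conn, payload))
    print("safe:  ", find_user_safe(conn, payload))
```

With the payload shown, the concatenated query matches every row in the table, while the parameterized query matches none, because no user is literally named `x' OR '1'='1`.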

Malware gang uses .NET library to generate Excel docs that bypass security checks

NVISO says the Epic Manchego gang appears to have used EPPlus to generate

spreadsheet files in the Office Open XML (OOXML) format. The OOXML spreadsheet

files generated by Epic Manchego lacked a section of compiled VBA code,

specific to Excel documents compiled in Microsoft's proprietary Office

software. Some antivirus products and email scanners specifically look for

this portion of VBA code to search for possible signs of malicious Excel docs,

which would explain why spreadsheets generated by the Epic Manchego gang had

lower detection rates than other malicious Excel files. This blob of compiled

VBA code is usually where an attacker's malicious code would be stored.

However, this doesn't mean the files were clean. NVISO says that the Epic

Manchego gang simply stored their malicious code in a custom VBA code format, which

was also password-protected to prevent security systems and researchers from

analyzing its content. But despite using a different method to generate their

malicious Excel documents, the EPPlus-based spreadsheet files still worked

like any other Excel document.
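
Because OOXML files are ZIP packages, a scanner can list the parts inside one without opening Excel. The Python sketch below is only an illustration of that idea: it checks whether a package contains a part at `xl/vbaProject.bin`, the conventional location of a workbook's VBA project, which is an assumption here and not a detail from the article. It does not reproduce NVISO's analysis or the logic of any antivirus product, and it does not inspect the compiled code inside the part, which is where the excerpt says the EPPlus-generated files differed.

```python
import io
import zipfile

# Conventional part name for the VBA project in a macro-enabled workbook.
# Treated as an assumption: a package can declare a different location.
VBA_PART = "xl/vbaProject.bin"


def has_vba_project(data: bytes) -> bool:
    """Return True if the OOXML package bytes contain the VBA project part."""
    with zipfile.ZipFile(io.BytesIO(data)) as package:
        return VBA_PART in package.namelist()


def build_package(parts: dict) -> bytes:
    """Build a tiny ZIP in memory for demonstration (not a valid workbook)."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as package:
        for name, content in parts.items():
            package.writestr(name, content)
    return buffer.getvalue()


if __name__ == "__main__":
    plain = build_package({"xl/workbook.xml": "<workbook/>"})
    macro = build_package({"xl/workbook.xml": "<workbook/>", VBA_PART: b"\x00"})
    print("plain workbook has VBA part:", has_vba_project(plain))
    print("macro workbook has VBA part:", has_vba_project(macro))
```

A presence check like this is deliberately shallow. The excerpt's point is that detection keyed to one expected structure can be sidestepped when a file is generated by a different toolchain.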

American Express Establishes Data Analytics, Risk & Technology Lab (DART) In IIT Madras

The company hopes to apply these technologies across dimensions such as

employee engagement and attention, evaluating and enhancing the quality of

education and learning in school. The Lab at IIT Madras will explore a range

of verticals with key emphasis on manufacturing, finance, healthcare,

operations management and smart cities. “Our collaboration with IIT Madras

reiterates our commitment to support and invest in interventions for public

good in the country. The technologies and applied sciences R&D in the Lab

will be beneficial for creating an overall societal impact through advancement

in financial services, healthcare and safety standards,” said Bharathram

Thothadri, EVP and Chief Credit Officer, American Express. It also plans to

build talent for industry by partnering with academia while promoting talent

and diversity in technology. It has also announced annual scholarships for

economically-disadvantaged and meritorious students, including ‘Ambition

Awards’ for deserving women students at IIT Madras.

Observability Strategies for Distributed Systems - Lessons Learned

All the panelists said some variation of, "make the easiest path the correct

path," with Fong-Jones observing that, "teams are super lazy." Because most

teams are focused on developing their service, find ways to create automatic

dashboards and update runbooks. Spoons emphasized the need to create

machine-readable central documentation. Similarly, using structured logging

makes information digestible. That can greatly aid looking for patterns. One

of the behaviors to encourage is being able to form and test hypotheses.

Having all the data from across a distributed system can become overwhelming,

so you need ways to narrow your focus. The practice of site-reliability

engineering requires a different mindset than "ordinary" software engineering.

Although DevOps has been an attempt to apply software engineering to IT

operations, SRE takes an opposite approach when thinking about failure. This

can be thought of as the duality between monitoring, which is looking for what

is anticipated, and observing, where the focus is on what is unexpected. Each

of the panelists had a few pitfalls that they've seen, and hope people will

avoid.
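
The point above about structured logging can be sketched with Python's standard `logging` module. This is a minimal example under stated assumptions: the `JsonFormatter` class, the `build_logger` helper, the `fields` key, and the `checkout` service name are all hypothetical and were not mentioned by the panelists. Each log record is emitted as one JSON object, so fields can be filtered and aggregated by a machine instead of being parsed out of free text.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object per line."""

    def format(self, record):
        entry = {
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Extra key/value pairs are passed via extra={"fields": {...}}.
        entry.update(getattr(record, "fields", {}))
        return json.dumps(entry, sort_keys=True)


def build_logger(name, stream):
    """Create a logger that writes JSON lines to the given stream."""
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger = logging.getLogger(name)
    logger.handlers.clear()
    logger.setLevel(logging.INFO)
    logger.addHandler(handler)
    logger.propagate = False  # avoid duplicate output via the root logger
    return logger


if __name__ == "__main__":
    log = build_logger("checkout", sys.stdout)
    log.info(
        "payment failed",
        extra={"fields": {"service": "checkout", "latency_ms": 412}},
    )
```

Once every service emits the same field names, narrowing the focus to one service or one latency range becomes a query rather than a text search, which supports the hypothesis-testing behavior described above.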

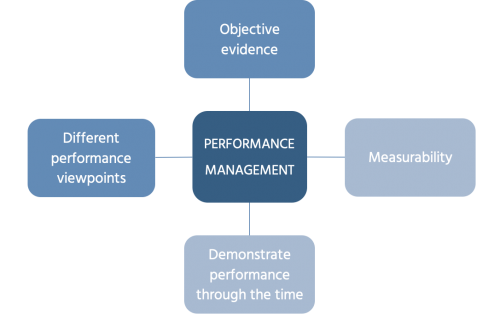

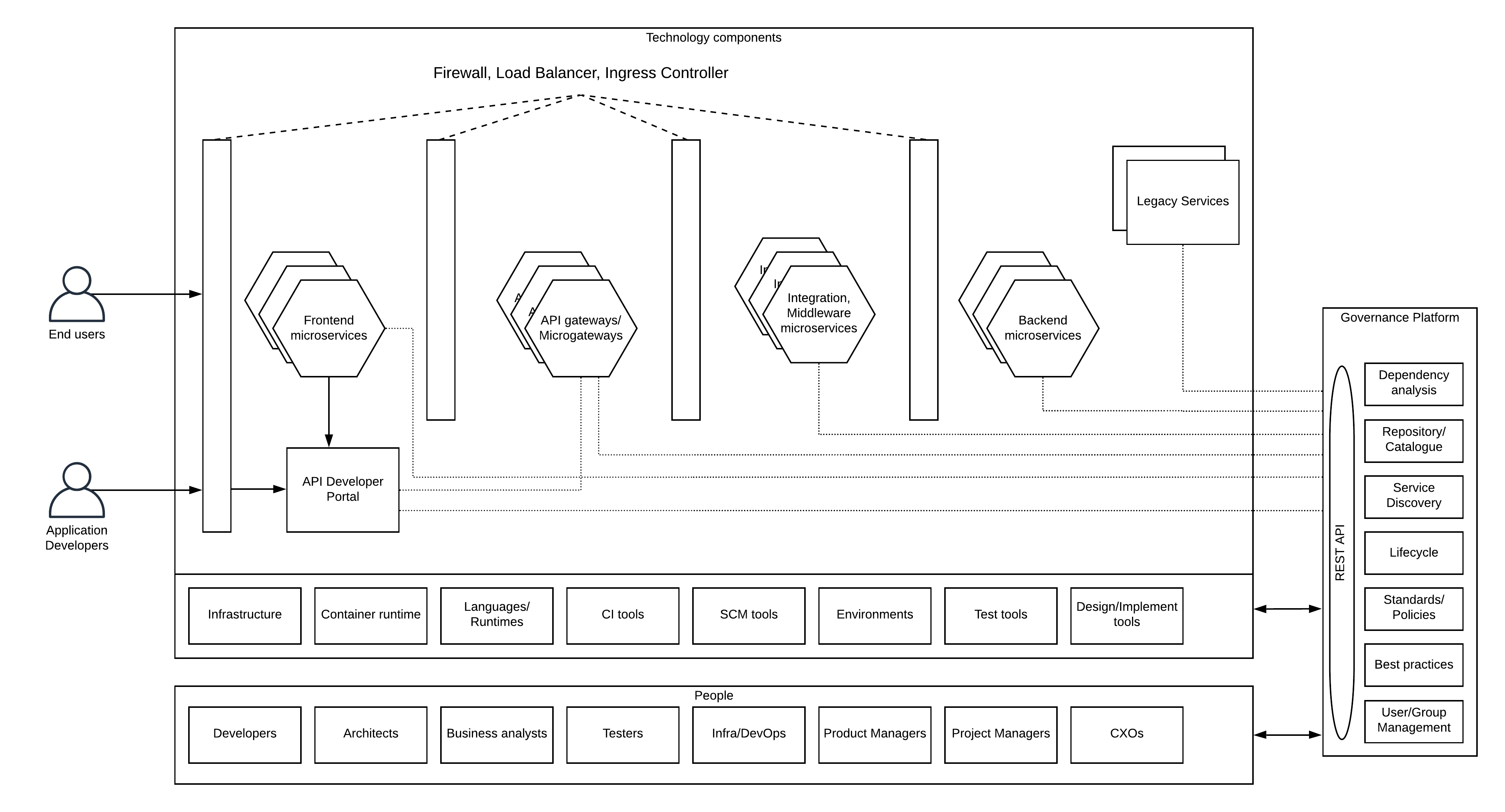

Traditional Banking is an Endangered Species

For banks to survive in a post-COVID-19 world they must review their risk

modelling strategies to accommodate the pandemics of the future, rather than

falling back to what they know once COVID-19 has been contained. Banks need to

ensure that remote working can be provisioned for effectively, in the event of

another pandemic, and need to abandon paper processes altogether. All

of this is easier said than done and banks must spend time on ensuring they

are effectively communicating across the entire workforce. For years, banks

have been grappling with siloed data and now they must ensure they do not have

siloed communications – where time and money could be lost if the workforce

are not kept in the loop across the front end e.g. products, solutions and

services, and the back end e.g. banking architecture. By harnessing the

payments ecosystem, banks can collaborate with technology specialists, to keep

up with the pace of demand for international, online payments. ‘Open Banking’

will enable banks to access the right technological expertise to solve the

challenges they are facing on a daily basis, and provision fully for the needs

of their new, existing and prospective customers.

Cybersecurity Pros Face a Huge Staffing Shortage As Attacks Surge During The Pandemic

Shearer said to fill the talent gap, more outreach needs to be done to recruit

younger workers into the aging workforce, as well as more diverse

cybersecurity workers. “Diversity is a big part of it — women are

underrepresented, it’s improving. We also here in the United States need to

look at other underrepresented minority groups and get them into the fold

because it’s going to take everyone we can find to be interested in cyber,” he

said. “As people start to retire, it’s only going to exacerbate the fact that

it’s an undersized cyber workforce.” Jobs can be lucrative in the field as

well: (ISC)²’s data finds the average North American salary for cybersecurity

professionals is $90,000 a year and those who hold security certifications can

make more. ... Hiring has become somewhat easier in recent months, Wysopal

says, a silver lining in the face of a broader skilled talent shortage in the

industry. As the pandemic forced closures and layoffs in all sectors of the

economy, more cyber workers have become available and due to the nature of

remote work, candidates that are outside of the area have become more

appealing.

SASE vs SD-WAN: A Comparison

SASE’s focus is on providing secure access to distributed resources for the

network and its users. The resources can be distributed in private data

centers, colocation facilities, and the cloud. As such, security and

networking decision-making are baked into the same security tools. SASE

products have security tools that reside in a user’s device as a security

agent, as well as in the cloud as a cloud-native software stack. For example,

the security agent can contain a secure web gateway and a vendor’s cloud can

contain a firewall-as-a-service. In a branch office or other location with a

collection of people, a SASE appliance is common in order to secure agentless

devices like printers. SD-WAN technology was not designed with a focus on

security. SD-WAN security is often delivered via secondary features or by

third-party vendors. While some SD-WAN solutions do have baked-in security,

these are in the minority. SD-WAN’s central goal is to connect

geographically separate offices to each other and to a central headquarters,

with flexibility and adaptability to different network conditions. In an

SD-WAN, security tools are usually located at offices in CPE rather than on

devices themselves.

3 Predictions For The Role Of Artificial Intelligence In Art And Design

Until we can fully understand the brain’s creative thought processes, it’s

unlikely machines will learn to replicate them. As yet, there’s still much we

don’t understand about human creativity. Those inspired ideas that pop into

our brain seemingly out of nowhere. The “eureka!” moments of clarity that stop

us in our tracks. Much of that thought process remains a mystery, which makes

it difficult to replicate the same creative spark in machines. Typically,

then, machines have to be “told” what to create before they can produce the

desired end result. The AI painting that sold at auction? It was created by an

algorithm that had been trained on 15,000 pre-20th century portraits, and was

programmed to compare its own work with those paintings. ... Intelligent

machines have no problem coming up with infinite possible solutions and

permutations, and then narrowing the field down to the most suitable options –

the ones that best fit the human creative’s “vision”. In this way, machines

could help us come up with new creative solutions that we couldn’t possibly

have come up with on our own.

Eight case studies on regulating biometric technology show us a path forward

The clearest one was the chapter on India by Nayantara Ranganathan, and the

chapter on the Australian facial recognition database by Monique Mann and Jake

Goldenfein. Both of these are massive centralized state architectures where

the whole point is to remove the technical silos between different state and

other kinds of databases, and to make sure that these databases are centrally

linked. So you’re creating this monster centralized, centrally linked

biometric data architecture. ... The second—and this is a lesson that we keep

repeating—consent as a legal tool is very much broken, and it’s definitely

broken in the context of biometric data. But that doesn’t mean that it’s

useless. Woody Hartzog’s chapter on Illinois’s BIPA [Biometric Information

Privacy Act] says: Look, it’s great that we’ve had several successful lawsuits

against companies using BIPA, most recently with Clearview AI. But we can’t

keep expecting “the consent model” to bring about structural change. Our

solution can’t be: The user knows best; the user will tell Facebook that they

don’t want their face data collected.

Quote for the day:

"The gem cannot be polished without friction, nor people perfected without trials." -- Confucius
