Most scientists would probably agree that prediction and understanding are not the same thing. The reason lies in the origin myth of physics—and arguably, that of modern science as a whole. For more than a millennium, the story goes, people used methods handed down by the Greco-Roman mathematician Ptolemy to predict how the planets moved across the sky. Ptolemy didn’t know anything about the theory of gravity or even that the sun was at the centre of the solar system. His methods involved arcane computations using circles within circles within circles. While they predicted planetary motion rather well, there was no understanding of why these methods worked, and why planets ought to follow such complicated rules. Then came Copernicus, Galileo, Kepler and Newton. Newton discovered the fundamental differential equations that govern the motion of every planet. The same differential equations could be used to describe every planet in the solar system. This was clearly good, because now we understood why planets move.

3 Trends in Organization Design Presenting Opportunities for Leaders

Today, nearly every business has digitized to some extent. Some companies, for example Uber and Amazon, have used digital solutions to create business models that would have been unimaginable in the 1980s. While not every business needs to be as digitized as Uber, nearly every business can benefit from exploring the use of artificial intelligence, data and analytics, and other technology to improve capabilities and results not just incrementally but exponentially. Capitalizing on this potential, however, requires strong leadership and a willingness to change and adapt. You can’t just plug a new technology into an old framework without affecting other aspects of the organization, such as how work is done, how the structure is designed, how metrics are used to drive performance, what skills and talent are needed, and how culture will reinforce strategy. ... Agile is another organization design trend that has its roots in the digital world. It is a way of working that enables a company to respond more quickly to changes in the marketplace, and it can result in a more nimble, resilient organization.

Are You Spending Way Too Much on Software?

Companies are allowing their data to get too complex by independently acquiring or building applications. Each of these applications has thousands to hundreds of thousands of distinctions built into it. For example, every table, column, and other element is another distinction that somebody writing code, looking at screens, or reading reports has to know. In a big company, this can add up to millions of distinctions. But in every company I’ve ever studied, there are only a few hundred key concepts and relationships that the entire business runs on. Once you understand that, you realize all of these millions of distinctions are just slight variations of those few hundred important things. In fact, you discover that many of the slight variations aren’t variations at all. They’re really the same things with different names, different structures, or different labels. So it’s desirable to describe those few hundred concepts and relationships in the form of a declarative model that small amounts of code refer to again and again.

How do data companies get our data?

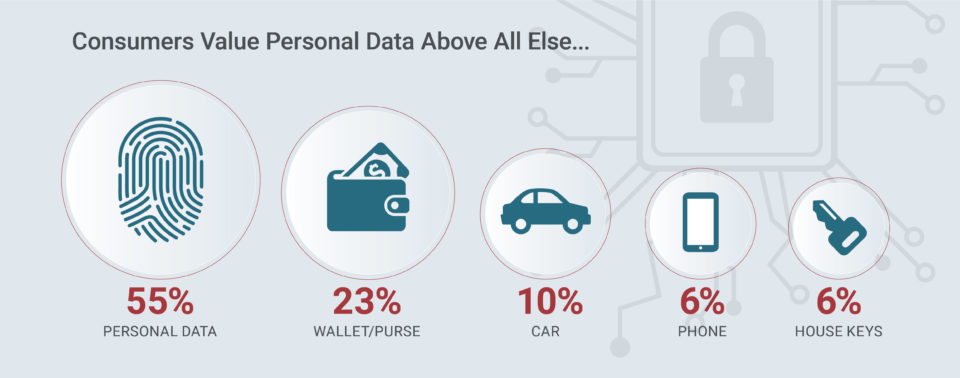

Research has shown that more than three in four Android apps contain at least one third-party tracker. Third-party app analytics companies play a crucial role for advertisers and app developers. Though some trackers are used to better understand how people use apps, the vast majority are used for targeted advertising, behavioural analytics, and location tracking. The problem is that there is no real way to opt out of such third-party tracking. In addition to the third-party trackers embedded in apps, apps themselves frequently access users’ entire address books, location data, photos and more, sometimes even when you have explicitly turned off access to such data. ... Another major source of data for data companies is surveys, which were at the heart of the 2018 Cambridge Analytica scandal. These include personality quizzes, online games and tests, and more. When a company asks you to rate a product, your opinion may benefit many other companies. The data company Epsilon, for instance, has created a database called Shopper’s Voice boasting “unique insights you won’t find anywhere else, directly from tens of millions of consumers.”

Banking Giant ING Is Quietly Becoming a Serious Blockchain Innovator

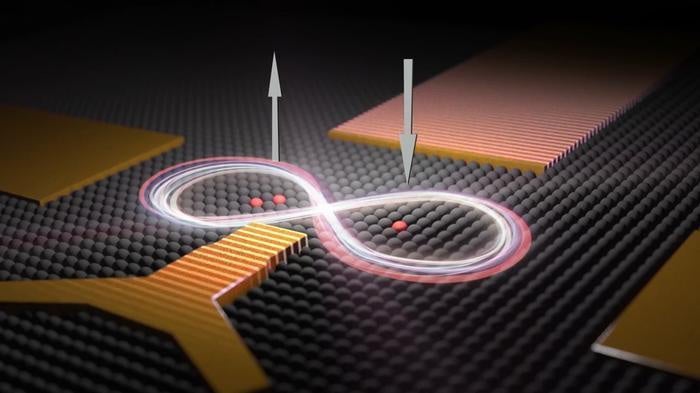

ING is out to prove that startups aren't the only ones that can advance blockchain cryptography. Rather than waiting on the sidelines for innovation to arrive, the Netherlands-based bank is diving headlong into a problem that, it turns out, worries financial institutions as much as average cryptocurrency users: privacy. The bank first made a splash in November of last year by modifying a cryptographic technique known as zero-knowledge proofs. Simply put, a zero-knowledge proof allows someone to prove that they know a secret without revealing the secret itself. On their own, zero-knowledge proofs were a promising tool for financial institutions that were intrigued by the benefits of shared ledgers but wary of revealing too much data to their competitors. The technique, previously applied in the cryptocurrency world by zcash, offered banks a way to transfer assets on these networks without tipping their hands or compromising client confidentiality. But ING has come up with a modified version called "zero-knowledge range proofs," which can prove that a number lies within a certain range without revealing exactly what that number is.
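The following minimal Python sketch makes the core idea concrete. It shows a classic zero-knowledge proof of knowledge: the Schnorr protocol, made non-interactive with the Fiat-Shamir heuristic. It is not ING's range-proof construction. The tiny group parameters (`P = 2039`, `Q = 1019`, `G = 4`) are illustrative assumptions that offer no real security.

The prover convinces the verifier that it knows the secret exponent `x` behind a public value `y = G^x mod P`. It sends only a commitment `t` and a response `s`. Because `s` is computed with a fresh random nonce `r`, it does not reveal `x`.

```python
import hashlib
import secrets

# Toy parameters (INSECURE, illustration only): P = 2Q + 1 is a safe prime,
# and G = 4 generates the subgroup of prime order Q.
P = 2039
Q = 1019
G = 4


def challenge(*values: int) -> int:
    """Fiat-Shamir challenge: hash the public transcript to an integer mod Q."""
    data = b"|".join(str(v).encode() for v in values)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def keygen() -> tuple[int, int]:
    """Return (secret x, public y = G^x mod P)."""
    x = secrets.randbelow(Q - 1) + 1
    return x, pow(G, x, P)


def prove(x: int, y: int) -> tuple[int, int]:
    """Prove knowledge of x such that y = G^x mod P, without revealing x."""
    r = secrets.randbelow(Q - 1) + 1  # fresh random nonce for every proof
    t = pow(G, r, P)                  # commitment
    c = challenge(G, y, t)            # challenge derived from the transcript
    s = (r + c * x) % Q               # response; the nonce r masks x
    return t, s


def verify(y: int, proof: tuple[int, int]) -> bool:
    """Accept iff G^s == t * y^c (mod P), with c recomputed from the transcript."""
    t, s = proof
    if not (0 < t < P and 0 <= s < Q):
        return False
    c = challenge(G, y, t)
    return pow(G, s, P) == (t * pow(y, c, P)) % P
```

With this sketch, `verify(y, prove(x, y))` returns `True`, and altering the response `s` makes verification fail. A real deployment would use a large standardized group or elliptic curve and an audited library rather than hand-rolled code like this.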

What is data wrangling and how can you leverage it for your business?

Regardless of how unexciting the process of data wrangling might be, it’s still critical because it makes your data useful. Once data has been conformed to the target form, it can provide value through analysis or be fed into a collaboration and workflow tool to drive downstream action. Conformance, or transforming disparate data elements into the same format, also addresses the problem of siloed data. Siloed data assets cannot “talk” to each other without translating data elements between their different formats, which is often time- or cost-prohibitive. Another benefit of data wrangling is that it can be organized into a standardized, repeatable process that moves and transforms data sources into a common format and can be reused many times. Once your data has been conformed to a standard format, you’re in a position to do some very valuable cross-data-set analytics. Conformance is even more valuable when multiple data sources are wrangled into the same format.
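The minimal Python sketch below illustrates conformance. It maps two differently shaped exports into one shared record type, then runs a simple cross-source count. The source names (`crm`, `billing`), their field names and date formats, and the `Customer` schema are all hypothetical assumptions chosen for the example. Registering one small conformer function per source is what makes the process repeatable: every new batch from a known source goes through the same function.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, datetime


@dataclass(frozen=True)
class Customer:
    """Hypothetical target format shared by every wrangled source."""
    customer_id: str
    name: str
    signup_date: date


def from_crm(row: dict) -> Customer:
    # Hypothetical CRM export: {"id": 17, "full_name": "...", "created": "YYYY-MM-DD"}
    return Customer(str(row["id"]), row["full_name"].strip(),
                    date.fromisoformat(row["created"]))


def from_billing(row: dict) -> Customer:
    # Hypothetical billing export with day-first dates: {"cust_no", "first", "last", "since": "DD/MM/YYYY"}
    return Customer(row["cust_no"], f'{row["first"]} {row["last"]}'.strip(),
                    datetime.strptime(row["since"], "%d/%m/%Y").date())


# One conformer per source, reused on every load.
CONFORMERS = {"crm": from_crm, "billing": from_billing}


def wrangle(batches: dict[str, list[dict]]) -> list[Customer]:
    """Conform every row from every source into the shared Customer format."""
    return [CONFORMERS[source](row) for source, rows in batches.items() for row in rows]


def signups_by_year(customers: list[Customer]) -> Counter:
    """A cross-data-set query that only works once the sources share one format."""
    return Counter(c.signup_date.year for c in customers)
```

For example, a CRM row with `"created": "2021-03-04"` and a billing row with `"since": "04/03/2021"` both end up with `signup_date == date(2021, 3, 4)`. That lets `signups_by_year` count them together.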

Digital transformation and the law of small numbers

Across industries, there is more downbeat news on digital transformation. A recent study by consulting firm Capgemini and the MIT Center for Digital Business concludes that organizations are struggling to convert their digital investments into business success. The reasons are many and illuminating: a lack of digital leadership skills and a lack of alignment between IT and the business, to name just two. The study goes on to suggest that companies have underestimated the challenge of digital transformation and have done a poor job of engaging employees across the enterprise in the transformation journey. These findings may surprise technology vendors, all of whom have gone “digital” in anticipation of big rewards from the digital bonanza (at least one global consulting firm has gone so far as to tie senior executive compensation to “digital” revenues). Anecdotally, “digital” revenues are still under 30 percent of total revenues for most technology firms, which further corroborates the market studies on the state of digital transformation.

Containers Are Eating the World

The container delivery workflow is fundamentally different. Dev and ops collaborate to create a single container image composed of different layers. These layers start with the OS, then add dependencies (each in its own layer), and finally the application artifacts. More importantly, the software delivery process treats container images as immutable: any change to the underlying software requires rebuilding the container image. Container technology and Docker images have made this far more practical than earlier approaches such as VM image construction. They use union file systems to compose a base OS image with the application and its dependencies, so a change to one layer only requires rebuilding that layer and the layers stacked on top of it. This makes each container image rebuild far cheaper than recreating a full VM image. In addition, well-architected containers run only one foreground process, which dovetails well with the practice of decomposing an application into well-factored pieces, often referred to as microservices.

How to build a layered approach to security in microservices

Microservices that must be addressable from multiple applications make address-based security more complicated. For a different approach, you can group applications that share microservices into a common cluster on a shared private IP address space. With this approach, all the components within the cluster can address each other, but you will still need to expose them for communication outside that private network. If a microservice is broadly used across many applications, you should host it in its own cluster and expose its address to the enterprise virtual private network or the internet, depending on its scope. Network-based security reduces the chances of an intruder accessing a microservice API, but it won't protect against intrusions launched from within the private network. A Trojan or other compromised application could still gain access at the network level, so you may need to add another level of security in microservices: the access control level. Access control relies on the microservice recognizing that a request comes from an authentic source.
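One common way for a microservice to recognize an authentic source is to require callers to sign each request with a shared secret. The minimal Python sketch below uses HMAC-SHA256 from the standard library. The caller name `orders-service`, the key table, the signed-message layout and the 300-second freshness window are hypothetical assumptions. Mutual TLS and signed tokens such as JWTs are common alternatives. Because the check runs inside the service itself, a request from a compromised host on the private network is still rejected unless it carries a valid signature.

```python
import hashlib
import hmac
import time
from typing import Optional

# Hypothetical per-caller secrets; in practice these would come from a secrets manager.
CALLER_KEYS = {"orders-service": b"demo-secret-do-not-use"}
MAX_SKEW_SECONDS = 300  # hypothetical freshness window that limits replay of old requests


def sign(caller: str, method: str, path: str, body: bytes, ts: int) -> str:
    """Compute the HMAC-SHA256 signature a caller attaches to its request."""
    message = f"{caller}\n{method}\n{path}\n{ts}\n".encode() + body
    return hmac.new(CALLER_KEYS[caller], message, hashlib.sha256).hexdigest()


def is_authentic(caller: str, method: str, path: str, body: bytes,
                 ts: int, signature: str, now: Optional[float] = None) -> bool:
    """Access-control check the microservice runs before handling a request."""
    if caller not in CALLER_KEYS:
        return False  # unknown caller, even if it sits inside the private network
    now = time.time() if now is None else now
    if abs(now - ts) > MAX_SKEW_SECONDS:
        return False  # stale or future-dated request
    expected = sign(caller, method, path, body, ts)
    return hmac.compare_digest(expected, signature)  # constant-time comparison
```

In this sketch, the caller would send its name, the timestamp and the signature alongside the request (for example, in headers). The service then recomputes the signature over what it actually received, so a tampered body or path fails the check.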

WhiteSource Launches Free Open Source Vulnerability Checking

After completing a scan of the user's requested libraries, the Vulnerability Checker shows all vulnerabilities detected in the software along with their path, indicating which library includes which vulnerability. We also show the CVSS 3.0 score, provide links to references and even supply the suggested fix from the open source community. In the full WhiteSource platform, we also show whether you are actually making calls to the vulnerable functionality, and we provide a full trace analysis for faster remediation of all known vulnerabilities (not just the top fifty from the previous month). WhiteSource automates the entire process of open source component management, from selection and approval through finding and fixing vulnerabilities in real time. It is a SaaS offering priced annually per contributing developer, meaning the number of developers working on the relevant applications. We offer our full platform services free of charge for open source projects.

Quote for the day:

"Your excuses are nothing more than the lies your fears have sold you." -- Robin Sharma