Quote for the day:

"We are moving from a world where we have to understand computers to a world where they will understand us." -- Jensen Huang

When clean UI becomes cold UI

The article "When Clean UI Becomes Cold UI" explores the pitfalls of

over-minimalism in modern digital interface design, arguing that a "clean"

aesthetic can easily shift from elegant to emotionally distant. This "cold UI"

occurs when essential guidance—such as text labels, instructions, and

reassuring feedback—is stripped away in favor of a sleek, portfolio-worthy

appearance. While such designs may impress other designers, they often fail

real-world users by forcing them to rely on assumptions, which increases

cognitive friction and erodes the human connection. The central premise is

that designers must shift their focus from "clean" design to "clear" design.

Every element removed for the sake of aesthetics involves a trade-off that

often sacrifices functional clarity for visual simplicity. To avoid creating a

"ghost town" interface, the author encourages prioritizing meaning over

layout, ensuring icons are paired with labels and that the design supports

users during moments of uncertainty. Ultimately, a truly successful interface

is not one that is simply empty, but one that knows when to provide direction

and when to step back, balancing aesthetic minimalism with the transparency

required for a user to feel genuinely supported and understood.

5 Practical Techniques to Detect and Mitigate LLM Hallucinations Beyond Prompt Engineering

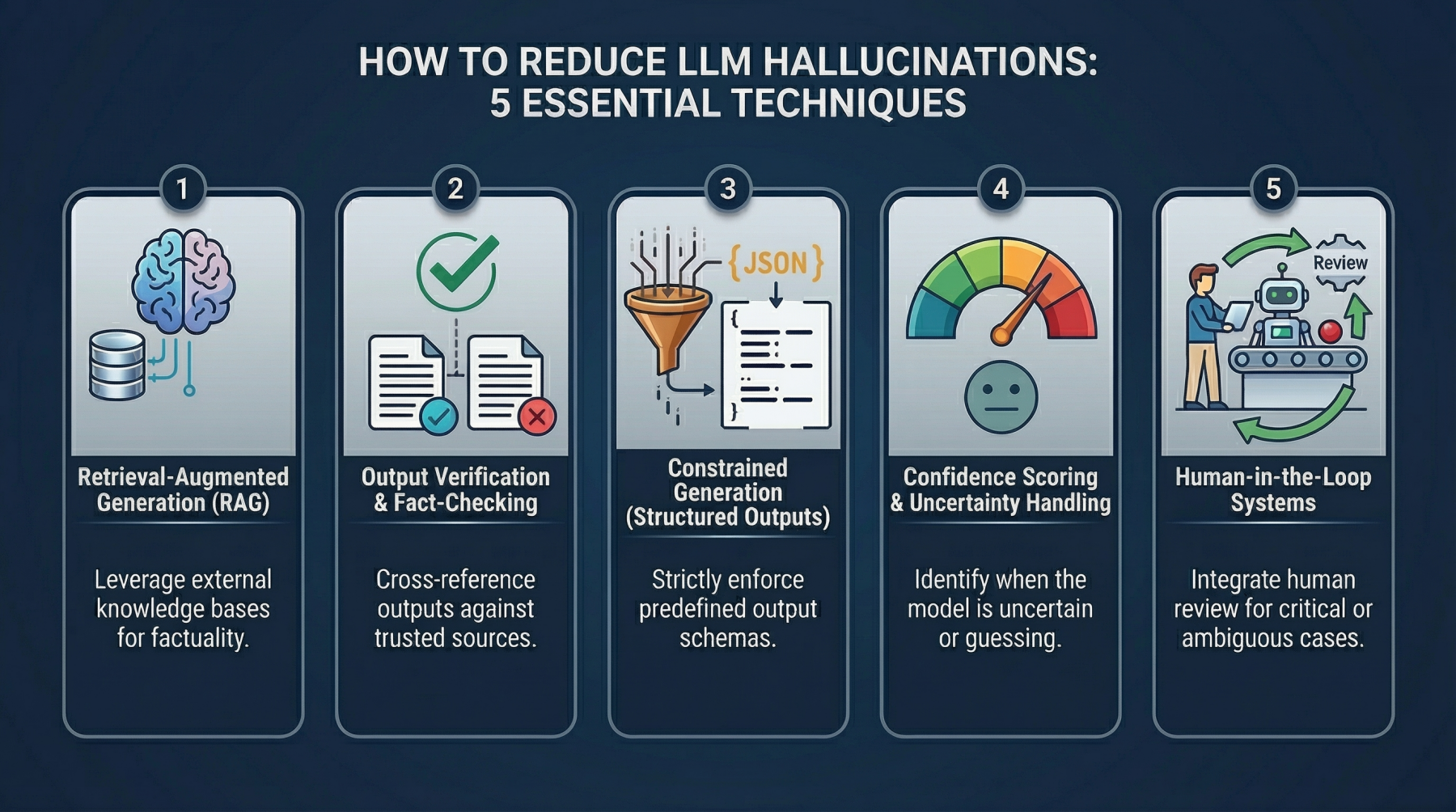

The article "5 Practical Techniques to Detect and Mitigate LLM Hallucinations

Beyond Prompt Engineering" from Machine Learning Mastery explores advanced

system-level strategies to ensure AI reliability. While basic prompting can

improve performance, it often fails in production settings where strict

accuracy is critical. The first technique, Retrieval-Augmented Generation

(RAG), anchors model responses in verified external data retrieved at query time, moving

away from reliance on static, often outdated training memory. Second, the

article advocates for Output Verification Layers, where a secondary model or

automated cross-referencing system validates initial drafts before they reach

the user. Third, Constrained Generation utilizes structured formats like JSON

or XML to limit speculative or tangential output, ensuring machine-readable

consistency. Fourth, Confidence Scoring and Uncertainty Handling encourage

models to quantify their own reliability or admit ignorance through "I don’t

know" responses rather than guessing. Finally, Human-in-the-Loop Systems

integrate human oversight to refine results, provide feedback, and build

essential user trust. Collectively, these methods transition LLM applications

from experimental prototypes to robust, factual tools. By implementing these

architectural patterns, developers can move beyond trial-and-error prompting

to create production-ready systems capable of handling high-stakes tasks where

the cost of a hallucination is high.
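
As a rough illustration of the third and fourth techniques above (constrained generation and uncertainty handling), the sketch below accepts a model response only if it parses as JSON, matches an expected shape, cites at least one source, and clears a confidence threshold. The field names, the 0.7 threshold, and the idea that the model reports its own confidence are all assumptions made for this example; they do not come from the article, and self-reported confidence is not guaranteed to be well calibrated.

```python
import json

# Hypothetical response contract: the model is asked to return JSON with
# exactly these fields. The names are illustrative, not from the article.
REQUIRED_FIELDS = {"answer": str, "confidence": float, "sources": list}
FALLBACK = "I don't know"


def guard_response(raw_output: str, min_confidence: float = 0.7) -> str:
    """Return the model's answer only if it passes structure and confidence checks."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return FALLBACK  # not valid JSON: reject rather than guess

    if not isinstance(data, dict):
        return FALLBACK

    for field, expected_type in REQUIRED_FIELDS.items():
        value = data.get(field)
        # Accept integers for the float field (for example a confidence of 1),
        # but never booleans, which Python treats as a subclass of int.
        if expected_type is float and isinstance(value, int) and not isinstance(value, bool):
            value = float(value)
        if not isinstance(value, expected_type):
            return FALLBACK

    confidence = float(data["confidence"])
    if not 0.0 <= confidence <= 1.0 or confidence < min_confidence:
        return FALLBACK  # low or out-of-range confidence: abstain
    if not data["sources"]:
        return FALLBACK  # no supporting evidence cited

    return data["answer"]
```

A check like this would sit between the model and the user, so a malformed or low-confidence draft turns into an explicit "I don't know" instead of a confident guess.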

Agentic GRC: Teams Get the Tech. The Mindset Shift Is What's Missing

In "Agentic GRC: Teams Get the Tech, the Mindset Shift Is What's Missing,"

Yair Kuznitsov explores the transformative impact of AI agents on Governance,

Risk, and Compliance. Traditionally, GRC professionals derived value from

operational competence, specifically manual evidence collection and audit

management. However, agentic AI now automates these workflows, creating an

identity crisis for those whose roles were defined by execution. The author

argues that while technology is ready, many teams remain reluctant because

they struggle to redefine their professional purpose beyond operational tasks.

Crucially, GRC was intended as a strategic risk management function, but it

became consumed by scaling inefficiencies. Agentic GRC offers a return to

these roots, transitioning practitioners toward "GRC Engineering" where

controls are managed as code via Git and CI/CD pipelines. This essential shift

requires moving from a "checkbox" mentality to strategic risk leadership.

Humans must provide critical judgment, define risk appetite, and translate

business context into compliance logic—capabilities AI cannot replicate.

Ultimately, successful organizations will empower their GRC teams to stop

merely managing operational machines and start leading proactive, risk-based

initiatives. This evolution represents an opportunity for professionals to

finally perform the high-level work they were originally trained to do.
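
To make the "controls as code" idea concrete, here is a minimal sketch of compliance controls expressed as versionable Python objects that a CI/CD pipeline could evaluate on every commit. The control IDs, the resource fields, and the `evaluate` helper are hypothetical names invented for this example; the article does not prescribe a specific format or tool.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Control:
    """One compliance control: an ID, a human-readable rule, and a check."""
    control_id: str
    description: str
    check: Callable[[dict], bool]  # returns True when the resource complies


# Hypothetical controls; a real catalogue would be reviewed and versioned in Git.
CONTROLS = [
    Control(
        "ENC-01",
        "Storage buckets must be encrypted at rest",
        lambda r: r.get("type") != "bucket" or r.get("encrypted") is True,
    ),
    Control(
        "LOG-01",
        "Databases must have audit logging enabled",
        lambda r: r.get("type") != "database" or r.get("audit_logging") is True,
    ),
]


def evaluate(resources: list[dict]) -> list[str]:
    """Run every control against every resource and collect the failures."""
    findings = []
    for resource in resources:
        for control in CONTROLS:
            if not control.check(resource):
                findings.append(
                    f"{control.control_id}: {resource['name']} fails "
                    f"({control.description})"
                )
    return findings
```

In a pipeline, a non-empty findings list would typically fail the build. Deciding which controls exist and how strict they are remains the human judgment the article describes.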

The Missing Layer in Agentic AI

The article "The Missing Layer in Agentic AI" argues that while current AI

development focuses heavily on large language models and reasoning

capabilities, a critical "middleware" layer is absent. This missing

component, referred to as an agentic orchestration layer, is essential for

transforming static models into truly autonomous systems capable of executing

complex, multi-step tasks in dynamic environments. The author explains that

for AI agents to be effective, they require more than just raw intelligence;

they need robust frameworks for memory management, tool integration, and state

persistence. This layer acts as the glue that connects high-level planning

with low-level execution, ensuring that agents can maintain context and

recover from errors during long-running processes. Furthermore, the piece

highlights that without this specialized infrastructure, developers are forced

to build bespoke, brittle solutions that do not scale. By establishing a

standardized orchestration layer, the industry can move toward more reliable,

observable, and interoperable agentic workflows. Ultimately, the article

suggests that the next frontier of AI progress lies not just in better models,

but in the sophisticated software engineering required to manage how those

models interact with the world and each other.
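
The responsibilities the article assigns to an orchestration layer (tool integration, memory, state persistence, and error recovery) can be sketched in a few dozen lines. The `Orchestrator` class below is a toy written for this digest, not an API from the article or from any agent framework: tools are plain Python callables, memory is a dictionary, and state is checkpointed to a JSON file so an interrupted run can resume.

```python
import json
from pathlib import Path
from typing import Callable


class Orchestrator:
    """Toy orchestration layer: runs named tools in order, keeps shared memory,
    checkpoints progress to disk, and retries failed steps."""

    def __init__(self, state_path: Path,
                 tools: dict[str, Callable[[dict], dict]],
                 max_retries: int = 2):
        self.state_path = state_path
        self.tools = tools              # tool integration: step name -> callable
        self.max_retries = max_retries
        self.state = self._load()

    def _load(self) -> dict:
        # State persistence: resume from the last checkpoint if one exists.
        if self.state_path.exists():
            return json.loads(self.state_path.read_text())
        return {"completed": [], "memory": {}}

    def _save(self) -> None:
        self.state_path.write_text(json.dumps(self.state))

    def run(self, plan: list[str]) -> dict:
        for step in plan:
            if step in self.state["completed"]:
                continue  # finished in an earlier run, skip it
            for attempt in range(self.max_retries + 1):
                try:
                    result = self.tools[step](self.state["memory"])
                    break
                except Exception:
                    if attempt == self.max_retries:
                        self._save()  # keep the progress made so far
                        raise
            self.state["memory"].update(result)  # memory management
            self.state["completed"].append(step)
            self._save()
        return self.state["memory"]
```

Each tool receives the shared memory and returns a JSON-serializable dictionary to merge back in. A production layer would also need observability, timeouts, and concurrency control, which this sketch leaves out.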

Edge clouds and local data centers reshape IT

For over a decade, enterprise cloud strategy prioritized centralization on

hyperscale platforms to achieve economies of scale and reduce infrastructure

sprawl. However, the rise of edge clouds and local data centers is

fundamentally reshaping this paradigm toward a selectively distributed

architecture. Modern digital systems increasingly require real-time

responsiveness, adherence to regional data sovereignty regulations, and

efficient handling of massive data volumes from sensors and video feeds. To

meet these demands, enterprises are adopting a dual architecture that combines

the strengths of centralized cloud platforms—well-suited for model training

and storage—with localized infrastructure positioned closer to the source of

interaction. This shift is visible in sectors like retail and manufacturing,

where proximity reduces latency and operational costs. Despite its benefits,

the transition to edge computing introduces significant complexities,

including fragmented life-cycle management, security hardening, and the need

for robust observability across hundreds of distributed sites. Rather than

replacing the cloud, the edge serves as a coordinated layer within an

integrated hybrid model. By placing workloads where they are most

operationally and economically effective, organizations can navigate bandwidth

limitations and physical-world complexities, ensuring their digital

infrastructure remains agile and resilient in a changing technological

landscape.
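
The placement logic described above, which puts each workload where it is most operationally and economically effective, can be sketched as a simple rule function. The `Workload` fields and both thresholds (an 80 ms cloud round trip and a 500 GB daily backhaul limit) are assumptions chosen for illustration; real values depend on the network and the provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    max_latency_ms: float           # slowest acceptable response time
    data_must_stay_in_region: bool  # data sovereignty requirement
    daily_data_gb: float            # volume generated at the site per day


def place(workload: Workload,
          cloud_round_trip_ms: float = 80.0,
          backhaul_limit_gb: float = 500.0) -> str:
    """Return "local" for edge or local data center placement, else "cloud"."""
    if workload.data_must_stay_in_region:
        return "local"  # regulation outranks cost considerations
    if workload.max_latency_ms < cloud_round_trip_ms:
        return "local"  # the cloud round trip alone would exceed the budget
    if workload.daily_data_gb > backhaul_limit_gb:
        return "local"  # too much sensor or video data to ship upstream
    return "cloud"      # default to centralized scale
```

With these defaults, `place(Workload("model-training", 5000, False, 50))` returns `"cloud"`, while a vision workload with a 20 ms latency budget is placed locally.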

AI frenzy feeds credential chaos, secrets leak through code, tools, and infrastructure

GitGuardian’s State of Secrets Sprawl 2026 report highlights an alarming surge

in cybersecurity risks, revealing that 28.65 million new hardcoded secrets

were detected in public GitHub commits during 2025. This multi-year upward

trend demonstrates that credentials, including access keys, tokens, and

passwords, are increasingly leaking through code, development tools, and

infrastructure. Beyond public repositories, the report underscores a

significant shift toward internal environments, which often carry a higher

density of sensitive production credentials. The explosion of AI development

has exacerbated the problem; AI-assisted coding and the proliferation of new

model providers and agent frameworks have introduced vast numbers of fresh

credentials that are frequently mismanaged. Furthermore, collaboration

platforms like Slack and Jira, along with self-hosted Docker registries, serve

as additional points of exposure. A particularly concerning finding is the

longevity of these leaks, as many credentials remain active and usable for

years due to the operational complexities of remediation across fragmented

systems. Ultimately, the report illustrates a widening gap between the rapid

pace of software innovation and the governance required to secure the

expanding surface area of modern, interconnected development workflows,

leaving critical infrastructure vulnerable to exploitation.
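
For a sense of how hardcoded secrets are found in the first place, the sketch below scans text line by line with a few regular expressions. It is a deliberately small illustration and not GitGuardian's detection method. The patterns cover the widely documented AWS access key ID prefix, the classic GitHub personal access token prefix, and a generic quoted password assignment; real scanners use far more detectors and also check whether a credential is still valid.

```python
import re

# Illustrative patterns only; production scanners use many more detectors.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "hardcoded_password": re.compile(r"(?i)\bpassword\s*=\s*['\"][^'\"]{4,}['\"]"),
}


def scan(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for every suspected secret."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, name))
    return findings
```

Run as a pre-commit hook or CI step, a check like this can stop a credential before it reaches a repository. It does nothing for secrets that have already leaked, which still have to be revoked and rotated.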

Architecting Autonomy at Scale

In “Architecting Autonomy at Scale,” Shweta Aggarwal and Ron Klein argue that

traditional, centralized architectural governance becomes a significant

bottleneck as organizations grow, necessitating a fundamental shift toward

decentralized decision-making. Utilizing a “parental metaphor,” the article

describes the evolution of architecture from “infancy,” where strong central

guidance is required to prevent chaos, to “adulthood,” where teams operate

autonomously within established systems. The authors propose a structured

framework built on clear decision boundaries, shared principles, and robust

guardrails rather than restrictive approval gates. Key technical practices

include documenting decisions via Architecture Decision Records (ADRs) to

preserve context, utilizing “fitness functions” for automated governance

within CI/CD pipelines, and leveraging AI for detecting architectural drift.

By aligning architectural authority with the C4 model levels, organizations

can clarify ownership and reduce delivery friction. Ultimately, the role of

the architect evolves from a top-down gatekeeper to a coach and platform

enabler, focusing on creating “paved roads” that allow teams to experiment

safely. This transition is framed as a socio-technical transformation that

requires cultural shifts, leadership support, and a trust-based governance

model to successfully balance local agility with enterprise-wide coherence and

long-term technical sustainability.
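
A "fitness function" in this sense is an automated check that an architectural rule still holds. The sketch below assumes a hypothetical layering rule, namely that modules in a `domain` layer must not depend on `infrastructure` or `api`, and takes the import graph as a plain dictionary. The layer names, the rule, and the `check_dependencies` helper are invented for this example and are not taken from the article.

```python
# Hypothetical guardrail: (source layer, target layer) pairs that are not allowed.
FORBIDDEN = [("domain", "infrastructure"), ("domain", "api")]


def layer_of(module: str) -> str:
    """Treat the first dotted component of a module path as its layer."""
    return module.split(".")[0]


def check_dependencies(imports: dict[str, set[str]]) -> list[str]:
    """Return one message per dependency that crosses a forbidden boundary."""
    violations = []
    for module, targets in sorted(imports.items()):
        for target in sorted(targets):
            if (layer_of(module), layer_of(target)) in FORBIDDEN:
                violations.append(f"{module} must not depend on {target}")
    return violations
```

Wired into a CI/CD pipeline, a non-empty result would fail the build, so the rule acts as a guardrail that teams hit automatically and not as an approval gate they have to wait on.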

The European Commission is intensifying its enforcement of the Digital

Services Act (DSA) by moving away from "self-declaration" as a valid method

for online age assurance. Following a series of investigations, regulators

have determined that simple "click-to-confirm" mechanisms on major adult

content platforms, including Pornhub, Stripchat, XNXX, and XVideos, are

insufficient to protect minors from harmful material. These platforms are now

being urged to implement more robust, privacy-preserving age verification

measures to ensure compliance with EU standards. Simultaneously, the

Commission has opened a formal investigation into Snapchat over concerns that

its reliance on self-declaration fails to prevent underage children from

accessing the app or to provide age-appropriate experiences for teenagers.

Beyond the European Commission's actions, the UK Information Commissioner's

Office (ICO) is also pressuring social media giants to strengthen their

age-gate systems. Potential solutions being discussed include the use of the

European Digital Identity (EUDI) Wallet, facial age estimation technology, and

identity document scans. This coordinated regulatory crackdown signals a major

shift in the digital landscape, where platforms must now prioritize societal

risks to minors over business-centric concerns. Failure to adopt these more

stringent verification methods could lead to significant financial penalties

across the European Union.

5 reasons why the tech industry is failing women

The CIO.com article, “Women in Tech Statistics: The Hard Truths of an Uphill

Battle,” highlights the persistent gender gap and systemic challenges women

face in the technology sector. Despite representing 42% of the global

workforce, women hold only 26-28% of tech roles and just 12% of C-suite

positions. A significant “leaky pipeline” begins in academia, where women earn

only 21% of computer science degrees, and continues into the workplace.

Troublingly, 50% of women leave the industry by age 35—a rate 45% higher than

men—driven by toxic cultures, microaggressions, and a lack of flexible

work-life balance. Economic instability further compounds these issues, with

women being 1.6 times more likely to face layoffs; during 2022’s mass tech

layoffs, they accounted for 69% of job losses. Financial disparities remain

stark, as women earn approximately $15,000 less annually than their male

counterparts. Furthermore, the rise of artificial intelligence presents new

risks, with women’s roles 34% more likely to be disrupted by automation

compared to 25% for men. Collectively, these statistics underscore that

achieving gender parity requires more than corporate pledges; it necessitates

fundamental shifts in recruitment, retention, and structural support

systems.

15+ Global Banks Exploring Quantum Technologies

The article titled "15+ global banks probing the wonderful world of quantum

technologies," published by The Quantum Insider on March 27, 2026, highlights

the accelerating integration of quantum computing within the global financial

sector. Central to this movement is the "Quantum Innovation Index," a

benchmarking tool developed in collaboration with HorizonX Consulting, which

identifies top performers like JPMorgan Chase, HSBC, and Goldman Sachs. These

institutions are leading a group of over fifteen major banks that have

transitioned from theoretical research to practical experimentation. The

report details how these banks are leveraging quantum advantages for

high-dimensional computational tasks, including portfolio optimization,

complex risk modeling through Monte Carlo simulations, and real-time fraud

detection. Furthermore, the article emphasizes a proactive shift toward

"quantum readiness" to combat cryptographic threats, with banks like HSBC

trialing quantum-secure trading for digital assets. With nearly 80% of the

world’s fifty largest banks now exploring these frontier technologies, the

narrative has shifted from whether quantum will disrupt finance to when its

full-scale implementation will occur. This trend is bolstered by significant

investments, such as JPMorgan’s backing of Quantinuum, underscoring a

strategic imperative to maintain competitiveness and ensure systemic stability

in a post-quantum world.
