8 data strategy mistakes to avoid

Denying business users access to information because of data silos has been a

problem for years. When different departments, business units, or groups keep

data stored in systems not available to others, it diminishes the value of the

data. Data silos result in inconsistencies and operational inefficiencies,

says John Williams, executive director of enterprise data and advanced analytics

at RaceTrac, an operator of convenience stores. ... Data governance should be at

the heart of any data strategy. If not, the results can include poor data

quality, lack of consistency, and noncompliance with regulations, among other

issues. “Maintaining the quality and consistency of data poses challenges

in the absence of a standardized data management approach,” Williams says.

“Before incorporating Alation at RaceTrac, we struggled with these issues,

resulting in a lack of confidence in the data and redundant efforts that impeded

data-driven decision-making.” Organizations need to create a robust data

governance framework, Williams says. This involves assigning data stewards,

establishing transparent data ownership, and implementing guidelines for data

accuracy, accessibility, and security.

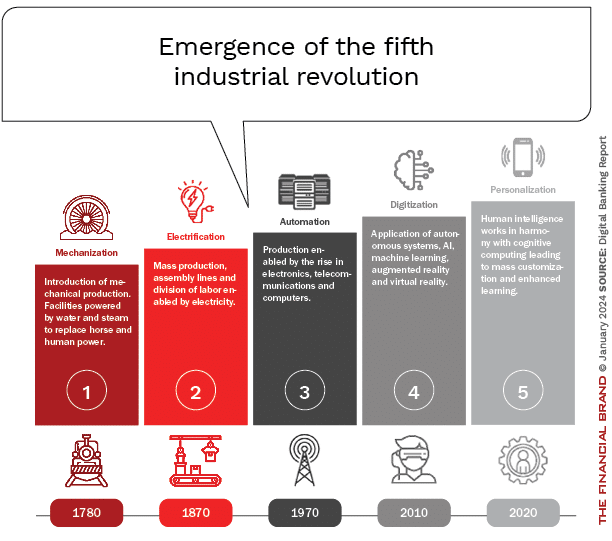

Regulators probe Microsoft’s OpenAI ties — is it only smoke, or is there fire?

Given that some of the world’s most powerful agencies regulating antitrust

issues are looking into Microsoft’s relationship with OpenAI, the company has

much to fear. Are they being fair, though? Is there not just smoke, but also

fire? You might argue that AI — and genAI in particular — is so new, and the

market so wide open, that these kinds of investigations are exceedingly

preliminary, would only hurt competition, and represent governmental overreach.

After all, Google, Facebook, Amazon, and billion-dollar startups are all

competing in the same market. That shows there’s serious competition. But that’s

not quite the point. The OpenAI soap opera shows that OpenAI is separate from

Microsoft in name only. If Microsoft can use its $13 billion investment to

reinstall Altman (and grab a seat on the board), even if it’s a nonvoting one,

it means Microsoft is essentially in charge of the company. Microsoft and OpenAI

have a significant lead over all their competitors. If governments wait too long

to probe what’s going on, that lead could become insurmountable.

AI will make scam emails look genuine, UK cybersecurity agency warns

The NCSC, part of the GCHQ spy agency, said in its latest assessment of AI’s

impact on the cyber threats facing the UK that AI would “almost certainly”

increase the volume of cyber-attacks and heighten their impact over the next two

years. It said generative AI and large language models – the technology that

underpins chatbots – will complicate efforts to identify different types of

attack such as spoof messages and social engineering, the term for manipulating

people to hand over confidential material. “To 2025, generative AI and large

language models will make it difficult for everyone, regardless of their level

of cybersecurity understanding, to assess whether an email or password reset

request is genuine, or to identify phishing, spoofing or social engineering

attempts.” Ransomware attacks, which had hit institutions such as the

British Library and Royal Mail over the past year, were also expected to

increase, the NCSC said. It warned that the sophistication of AI “lowers the

barrier” for amateur cybercriminals and hackers to access systems and gather

information on targets, enabling them to paralyse a victim’s computer systems,

extract sensitive data and demand a cryptocurrency ransom.

Burnout epidemic proves there's too much Rust on the gears of open source

An engineer is keen to work on the project, opens up the issue tracker, and

finds something they care about and want to fix. It's tricky, but all the easy

issues have been taken. Finding a mentor is problematic since, as Nelson puts

it, "all the experienced people are overworked and burned out," so the engineer

ends up doing a lot of the work independently. "Guess what you've already

learned at this point," wrote Nelson. "Work in this project doesn't happen

unless you personally drive it forward." The engineer becomes a more active

contributor. So active that the existing maintainer turns over a lot of

responsibilities. They wind up reviewing PRs and feeling responsible for

catching mistakes. They can't keep up with the PRs. They start getting tired ...

and so on. Burnout can manifest itself in many ways, and dodging it comes down

to self-care. While the Rust Foundation did not wish to comment on the subject,

the problem of burnout is as common – if not more so – in the open source world

as it is in the commercial one.

Steadfast Leadership And Identifying Your True North

When you apply the idea of true north across all facets of your organization,

you can effectively keep your team aligned and moving in tandem. But without a

clear and definitive direction, there’s no way to gauge whether everyone is

rowing in the same direction. Clarifying your distinct true north is just the

beginning. Once it’s established, team members at all levels, especially

leadership, must understand it, refer to it often, and measure performance

against it. This looks like continuously reviewing departmental metrics and the

attitudes of teams and individuals to ensure that they are in alignment with the

organization’s cardinal direction. Leaders must be able to see the connection

between the processes and goals of individual teams and how they contribute to

or inhibit long-term goals. If individuals or teams work against the desired

direction (sometimes unknowingly!), it can slow or, in some cases, even reverse

progress. The antidote is long-term alignment, but this can only come after a

deep understanding of how the day-to-day affects long-term success, which

requires accurate metrics, widespread accountability, and thorough analysis.

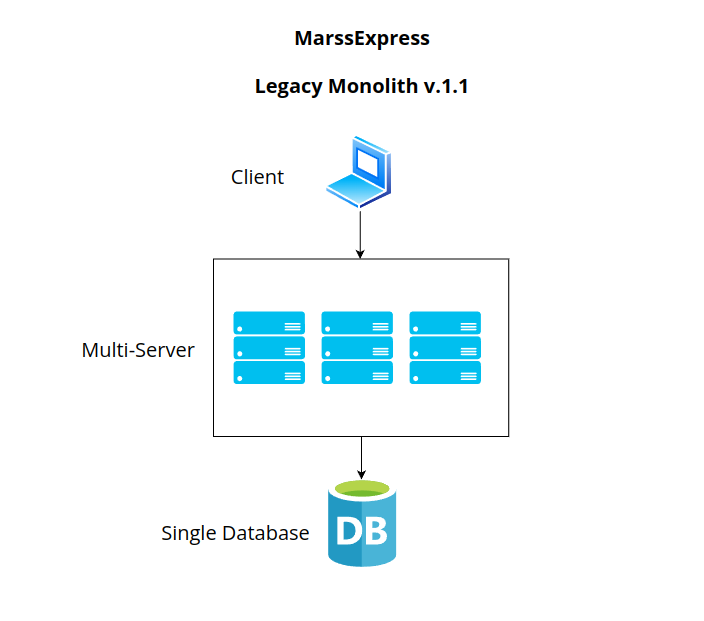

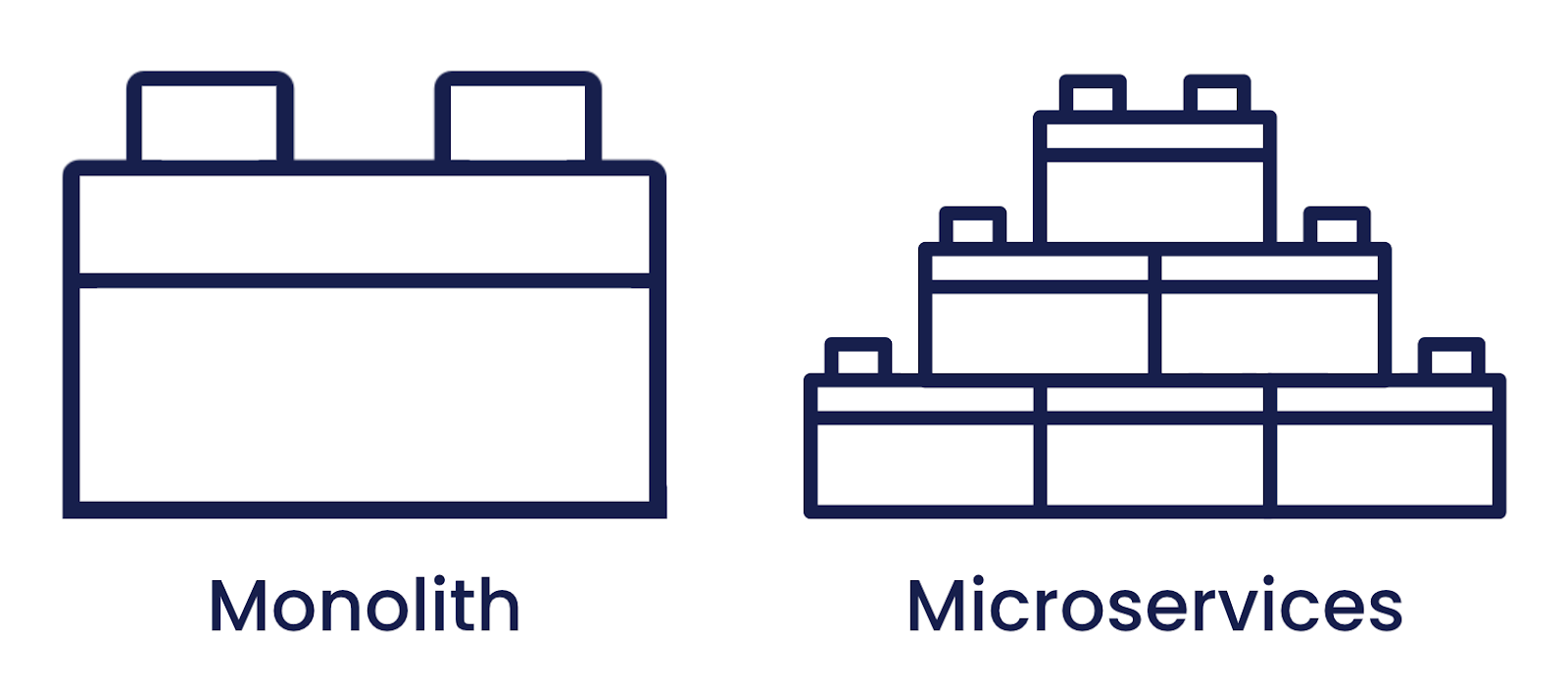

The Rise of the Serverless Data Architectures

The big lesson is that there is no free lunch. You have to understand the

tradeoffs. If I go with Aurora, I don't have to think about some things, but I

do have to think about others. Transactions are not an issue. Cold start is an

issue, minimum payment may be an issue. If I go with something like DynamoDB,

then things are perfect, but I have a key-value store. There's all kinds of

things to take into consideration and make sure that you understand what each

system is actually capable of delivering. The one thing to note is that while

you will have to make tradeoffs, if you decide you want a very elastic system,

look at the situation. It does not require changing the whole way you use

the database. If you like key-value stores, there will be several for

you to choose from. If you like relational, there will be a bunch. If you like a

specific type of relational database, MySQL fans will have options. If you like Postgres,

there are going to be options too. You don't have to change a lot about your worldview.

That is not the case if you try serverless functions, where you have to learn a

whole new way to write code and manage code and so on. I'm still

trying to wrap my head around how to build functionality from a lot of small

independent functions.

Navigating Generative AI Data Privacy and Compliance

Developers play a crucial role in protecting companies from the legal and

ethical challenges linked to generative AI products. Faced with the risk of

unintentionally exposing information (a longstanding problem) or now having

the generative AI tool leak it on its own (as occurred when ChatGPT users

reported seeing other people’s conversation histories), companies can

implement strategies like the following to minimize liability and help ensure

the responsible handling of customer data. ... Using anonymized and aggregated

data serves as an initial barrier against the inadvertent exposure of

individual customer information. Anonymizing data strips personally

identifiable elements so that the generative AI system can learn and operate

without associating specific details with individual users. ... Through

meticulous access management, developers can restrict data access exclusively

to individuals with specific tasks and responsibilities. By creating a tightly

controlled environment, developers can proactively reduce the likelihood of

data breaches, helping ensure that only authorized personnel can interact with

and manipulate customer data within the generative AI system.
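
The two safeguards described above, stripping identifying fields before data reaches a generative AI system and restricting who may see raw records, can be sketched in a few lines of Python. This is a minimal illustration, not a complete privacy solution: the field names in `PII_FIELDS`, the role names, and the permission strings are all hypothetical and would need to match a real schema and access policy.

```python
from collections import Counter

# Hypothetical set of fields treated as personally identifiable.
PII_FIELDS = {"name", "email", "phone"}

# Hypothetical role-to-permission mapping for access management.
ROLE_PERMISSIONS = {
    "ml_engineer": {"read_anonymized"},
    "support_agent": {"read_anonymized", "read_raw"},
}


def strip_pii(record: dict) -> dict:
    """Return a copy of the record without the fields listed in PII_FIELDS."""
    return {key: value for key, value in record.items() if key not in PII_FIELDS}


def aggregate_by(records: list, field: str) -> Counter:
    """Count records per value of `field`, so only totals are exposed."""
    return Counter(record[field] for record in records)


def can_access(role: str, permission: str) -> bool:
    """Allow an action only if the role was explicitly granted the permission."""
    return permission in ROLE_PERMISSIONS.get(role, set())


if __name__ == "__main__":
    customers = [
        {"name": "Ada", "email": "ada@example.com", "plan": "pro"},
        {"name": "Bob", "email": "bob@example.com", "plan": "free"},
        {"name": "Cy", "email": "cy@example.com", "plan": "pro"},
    ]
    anonymized = [strip_pii(c) for c in customers]
    print(anonymized)
    print(aggregate_by(anonymized, "plan"))
    print(can_access("ml_engineer", "read_raw"))
```

Unknown roles get an empty permission set, so access is denied by default. Removing direct identifiers is only a first barrier; combinations of the remaining fields can still identify individuals in small datasets.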

The Intersection of DevOps, Platform Engineering, and SREs

By automating manual processes, embracing continuous integration and

continuous delivery (CI/CD), and instilling a mindset of shared

responsibility, DevOps empowers teams to respond swiftly to market demands,

ensuring that software is not just developed but delivered efficiently and

reliably. Platform Engineering emerges as a key player in shaping the

infrastructure that underpins modern applications. It is the architectural

foundation that supports the deployment, scaling, and management of

applications across diverse environments. The importance of Platform

Engineering lies in providing a standardized, scalable, and efficient platform

for development and operations teams. By offering a set of curated tools,

services, and environments, Platform Engineers enable seamless collaboration

and integration of DevOps practices. ... The importance of SREs lies in their

dedication to ensuring the reliability, scalability, and high performance of

systems and applications. SREs introduce a data-driven approach, defining

service level objectives (SLOs) and error budgets to align technical

operations with business objectives.
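
To make the SLO and error budget idea concrete, here is a small Python sketch of the arithmetic. It assumes a request-based SLO (the fraction of requests that must succeed in a measurement window); the function name and the example figures are illustrative and do not come from the article.

```python
def error_budget_report(slo_target: float, total_requests: int, failed_requests: int) -> dict:
    """Summarize error-budget consumption for one measurement window.

    slo_target is the required success ratio, e.g. 0.999 for "99.9% of requests succeed".
    """
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be strictly between 0 and 1")
    if total_requests <= 0:
        raise ValueError("total_requests must be positive")

    # The error budget is the share of requests the SLO allows to fail.
    allowed_failures = (1.0 - slo_target) * total_requests
    return {
        "allowed_failures": allowed_failures,
        "remaining_failures": allowed_failures - failed_requests,
        "consumed_fraction": failed_requests / allowed_failures,
        "budget_exhausted": failed_requests >= allowed_failures,
    }


if __name__ == "__main__":
    # Hypothetical window: one million requests, 250 failures, 99.9% SLO.
    print(error_budget_report(slo_target=0.999, total_requests=1_000_000, failed_requests=250))
```

With a 99.9% target over one million requests, roughly 1,000 failures are allowed, so 250 failures consume about a quarter of the budget. One common way to tie this to business objectives is to slow down risky releases as the budget nears exhaustion.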

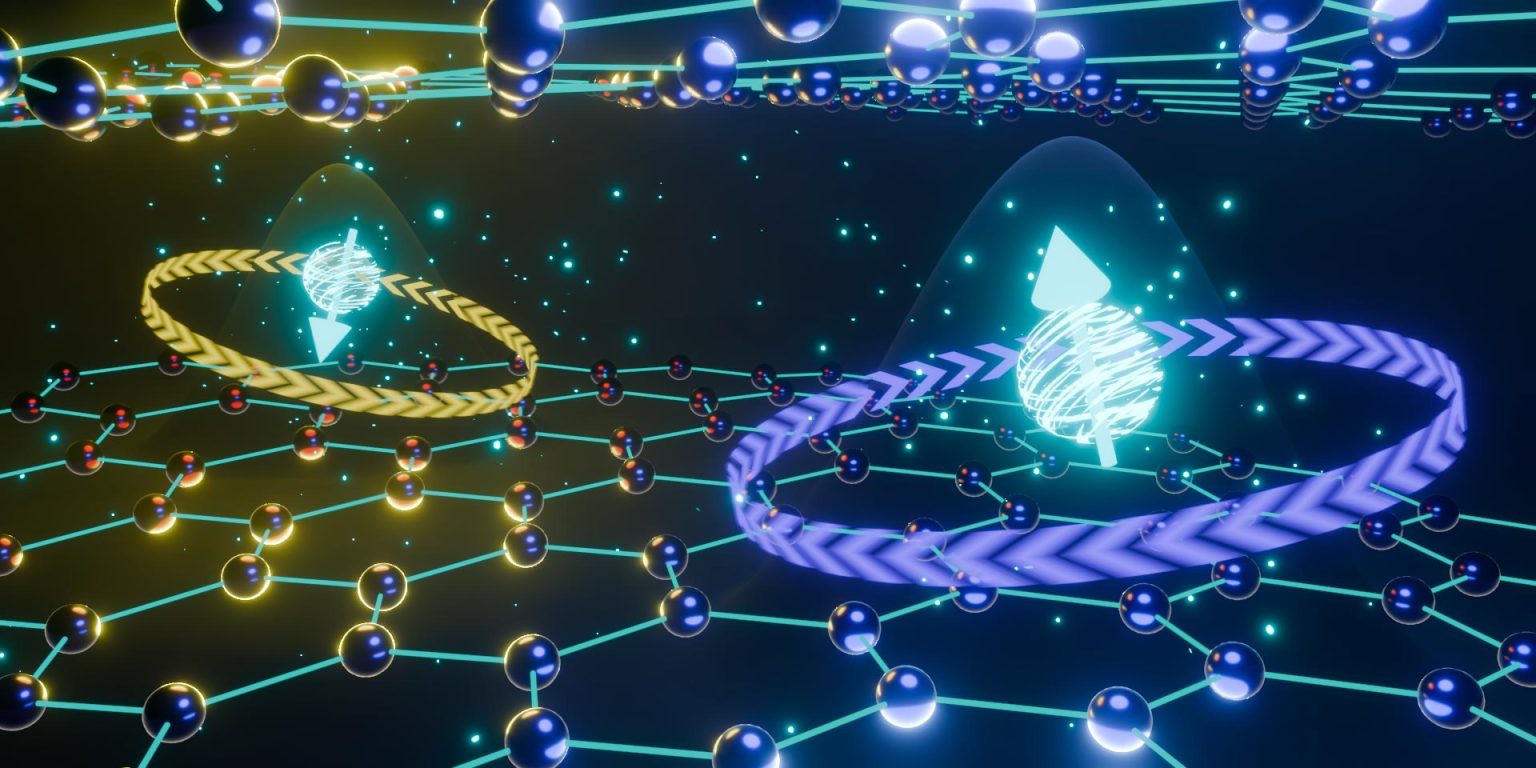

Quantum-secure online shopping comes a step closer

The researchers’ QDS protocol involves three parties: a merchant, a client and

a third party (TP). It begins with the merchant preparing two sequences of

coherent quantum states, while the client and the TP prepare one sequence of

coherent states each. The merchant and client then send a state via a secure

quantum channel to an intermediary, who performs an interference measurement

and shares the outcome with them. The same process occurs between the merchant

and the TP. These parallel processes enable the merchant to generate two keys

that they use to create a signature for the contract via one-time universal

hashing. Once this occurs, the merchant sends the contract and the signature

to the client. If the client agrees with the contract, they use their quantum

state to generate a key in a similar way as the merchant and send this key to

the TP. Similarly, the TP generates a key from their quantum state after

receiving the contract and signature. Both the client and the TP can verify

the signature by calculating the hash function and comparing their result to

the signature.
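
The final sign-and-verify step can be illustrated with a purely classical toy in Python. It does not model the quantum states, the interference measurement, or how the three parties derive their keys; it simply assumes the signer and a verifier already hold matching one-time key material. The excerpt does not specify the hash family, so this sketch assumes a polynomial hash over a prime field combined with a one-time pad (a Wegman-Carter-style tag). All names and parameters here are hypothetical.

```python
import secrets

# Field size for the polynomial hash (a Mersenne prime; an assumption of this sketch).
PRIME = 2**61 - 1


def poly_hash(message: bytes, hash_key: int) -> int:
    """Treat the message bytes as polynomial coefficients and evaluate at hash_key mod PRIME."""
    acc = 0
    for byte in message:
        # Adding 1 keeps every coefficient non-zero, so zero bytes and length still matter.
        acc = (acc * hash_key + byte + 1) % PRIME
    return acc


def sign(message: bytes, hash_key: int, pad: int) -> int:
    """Produce a one-time tag: universal hash of the message masked by a one-time pad."""
    return (poly_hash(message, hash_key) + pad) % PRIME


def verify(message: bytes, signature: int, hash_key: int, pad: int) -> bool:
    """Recompute the tag from the received message and compare it to the signature."""
    return sign(message, hash_key, pad) == signature


if __name__ == "__main__":
    # Fresh key material per contract; in the protocol this would come from the quantum exchange.
    hash_key = secrets.randbelow(PRIME)
    pad = secrets.randbelow(PRIME)
    contract = b"Client pays merchant 10 units"
    signature = sign(contract, hash_key, pad)
    print(verify(contract, signature, hash_key, pad))
    print(verify(b"Client pays merchant 99 units", signature, hash_key, pad))
```

The keys must be used for a single message only; reusing them across contracts would weaken the guarantee that a tampered contract fails verification.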

The Top 10 Things Every Cybersecurity Professional Needs to Know About Privacy

The intersection between privacy and cybersecurity is ever increasing and the

boundaries between the two ever blurring. By way of example – data breaches

lived firmly in the realm of cybersecurity for many years. However, since the

adoption of GDPR and mandatory disclosure requirements of several data

protection and privacy laws around the world, the balance of responsibility

and ownership of data breaches has become blurred. ... the language of privacy

is very different from that of cybersecurity – cybersecurity professionals

talk about penetration tests, vulnerability assessments, ransomware attacks,

firewalls, operating systems, malware, anti-virus, etc. Meanwhile, privacy

professionals talk about data protection impact assessments, case law

judgements, privacy by design and default, legitimate interest assessments,

proportionality, etc. In fact, the language of privacy is not even consistent

in its own right, with much confusion about the fundamental differences

between data protection and privacy, and about how each is defined across

jurisdictions.

Quote for the day:

"Leaders should influence others in

such a way that it builds people up, encourages and edifies them so they can

duplicate this attitude in others." -- Bob Goshen