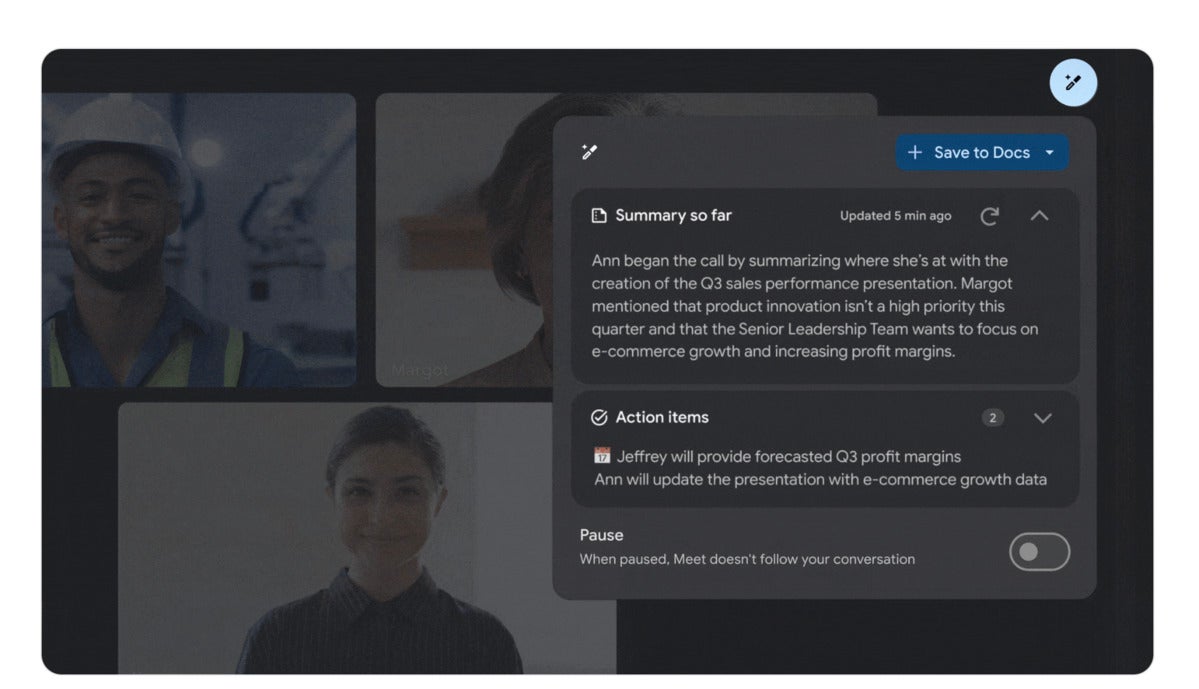

Peril vs. Promise: Companies, Developers Worry Over Generative AI Risk

One widespread concern about AI is that these systems will replace developers: 36% of developers worry that they will be replaced by an AI system. Yet the GitLab survey also lent weight to the argument that disruptive technologies create more work for people: nearly two-thirds of companies hired employees to help manage AI implementations. Part of the concern seems to be generational. More experienced developers tend not to accept the code suggestions made by AI systems, while more junior developers are more likely to accept them, Lemos says. Yet both groups are looking to AI to help with the most boring work, such as writing documentation and creating unit tests. "I'm seeing a lot more developers raising the idea of having their documentation written by AI, or having test coverage written by AI, because they care less about the quality of that code, but just that the test works," he says. "There's both a security and a development benefit in having better test coverage, and it's something that they don't have to spend time on."

Feds Urge Immediate Patching of Zoho and Fortinet Products

CISA found that, beginning in January, multiple APT groups separately exploited two different critical vulnerabilities to gain unauthorized access to a targeted organization and exfiltrate its data. Both unrelated flaws, CVE-2022-47966 in Zoho ManageEngine and CVE-2022-42475 in Fortinet FortiOS SSL VPN, are rated critical severity: each can be exploited to execute code remotely, allowing attackers to take control of the system and pivot to other parts of the network. Both vendors issued patches for their flaws in late 2022. Researchers refer to such flaws as N-day vulnerabilities, meaning publicly known flaws for which a patch already exists, as opposed to zero-day vulnerabilities, for which no patch is yet available. The alert, issued by CISA, the FBI and U.S. Cyber Command's Cyber National Mission Force, details how attackers used each flaw to gain wider access to victims' networks. The advisory doesn't name the nation or nations whose APT groups have been tied to known exploits of these flaws.
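To make the N-day versus zero-day distinction concrete, here is a minimal Python sketch of patch triage, assuming a hypothetical asset inventory that records which CVE fixes each host has already applied. The `Vulnerability` and `Asset` types, the `triage` helper, and the host names are illustrative assumptions, not anything described in the CISA advisory; the only details carried over are the two CVE IDs, the affected products, and the fact that vendor patches exist.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Vulnerability:
    cve_id: str
    product: str
    patch_available: bool  # True once the vendor has shipped a fix

    @property
    def kind(self) -> str:
        # N-day: publicly known and already patched by the vendor.
        # Zero-day: no vendor patch exists yet.
        return "N-day" if self.patch_available else "zero-day"


@dataclass
class Asset:
    hostname: str
    product: str
    applied_fixes: set[str] = field(default_factory=set)  # CVE IDs already remediated


def triage(assets, vulns):
    """Return (hostname, cve_id, kind) for every asset still exposed to a vulnerability."""
    exposed = []
    for asset in assets:
        for vuln in vulns:
            if asset.product == vuln.product and vuln.cve_id not in asset.applied_fixes:
                exposed.append((asset.hostname, vuln.cve_id, vuln.kind))
    # N-days first: they can be closed right now by applying an existing vendor update.
    exposed.sort(key=lambda item: item[2] != "N-day")
    return exposed


vulns = [
    Vulnerability("CVE-2022-47966", "Zoho ManageEngine", patch_available=True),
    Vulnerability("CVE-2022-42475", "Fortinet FortiOS SSL VPN", patch_available=True),
]
assets = [
    Asset("helpdesk-01", "Zoho ManageEngine"),  # hypothetical host, not yet patched
    Asset("vpn-edge-01", "Fortinet FortiOS SSL VPN", {"CVE-2022-42475"}),  # hypothetical, patched
]

for hostname, cve_id, kind in triage(assets, vulns):
    print(f"{hostname}: {cve_id} ({kind}) - apply the vendor update")
```

Sorting patchable N-days first reflects the point of the alert: these exposures can be closed immediately with updates that already exist.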

Scrum Master Skills We Rarely Talk About: Change Management

The first step toward building a "compelling case for change" is a vision of the kind of organization we aspire to become. It's crucial to emphasize that the organization's mode of operation should never be the ultimate goal in itself. Rather, it is a supporting element that "enables" the organization to pursue its objectives. This vision, in turn, gives rise to the need for change, which marks the starting point of the entire process. A clearly expressed need for change (the answer to the question "Why exactly?") opens the door to the next question: how should our organization function to achieve its goals? This is what we call the Ideal State. Once we've defined the organization's Ideal State, we can articulate exactly which optimizations are required, along with the key indicators we will use to monitor progress throughout the change process. The Optimization Goal acts as our compass, showing the direction of change and precisely what adjustments need to be made.

Cloud first is dead—cloud smart is what’s happening now

Cloud smart involves making the best use of cloud concepts, whether on premises or off, and, fundamentally, making the most rational choice of locality as part of the thinking. A cloud smart architectural approach is essential because it enables enterprises to optimize their on-premises IT infrastructure while also leveraging the benefits of the cloud. With a cloud smart architecture, enterprises can design and deploy highly available, scalable, and resilient solutions with cloud operating characteristics that adapt to their changing business needs. After the initial rush to public cloud, this belated dose of reality is a positive. It reflects the recognition that there needs to be a smarter balance between what runs on premises and what runs in the public cloud. Knowing how to strike the right balance, with the understanding that not every application is meant for the cloud, helps you optimize performance, reliability, and cost and drives better long-term outcomes for your organization.
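The idea of choosing locality per workload, rather than defaulting to the cloud, can be sketched as a small placement rule in Python. This is a hypothetical illustration only: the `Workload` fields, the `burst_threshold` value of 2.0, and the rules themselves (hard constraints keep a workload on premises, bursty demand favors public cloud elasticity, steady demand stays on owned capacity) are assumptions chosen for the example, not criteria taken from the article.

```python
from dataclasses import dataclass
from enum import Enum


class Placement(Enum):
    ON_PREMISES = "on-premises"
    PUBLIC_CLOUD = "public cloud"


@dataclass(frozen=True)
class Workload:
    name: str
    must_stay_on_site: bool           # e.g. a data-residency or regulatory constraint
    tightly_coupled_to_on_prem: bool  # latency-sensitive calls to systems that stay on premises
    peak_to_average_demand: float     # burstiness of load; 1.0 means perfectly flat


def choose_placement(w: Workload, burst_threshold: float = 2.0) -> Placement:
    """Hypothetical placement rules: hard constraints first, then elasticity."""
    if w.must_stay_on_site or w.tightly_coupled_to_on_prem:
        return Placement.ON_PREMISES
    if w.peak_to_average_demand >= burst_threshold:
        # Bursty demand is where pay-as-you-go elasticity pays off.
        return Placement.PUBLIC_CLOUD
    # Steady, predictable load: assumed here to be cheaper on capacity you already own.
    return Placement.ON_PREMISES


workloads = [
    Workload("records-db", must_stay_on_site=True,
             tightly_coupled_to_on_prem=False, peak_to_average_demand=1.2),
    Workload("marketing-site", must_stay_on_site=False,
             tightly_coupled_to_on_prem=False, peak_to_average_demand=6.0),
    Workload("erp-batch", must_stay_on_site=False,
             tightly_coupled_to_on_prem=True, peak_to_average_demand=1.5),
]
for w in workloads:
    print(f"{w.name}: {choose_placement(w).value}")
```

The design choice worth noting is that locality becomes an explicit, per-workload decision with a stated reason, rather than a blanket cloud-first default.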

Are We Ready for a World Without Passwords?

Passwordless authentication means signing users in without passwords. The FIDO Alliance introduced FIDO2, a widely adopted authentication standard that offers frictionless, phishing-resistant, passwordless authentication. FIDO2 allows users to authenticate to a web, SaaS, or mobile application using the native biometrics or PIN of their laptop, desktop, or mobile phone. The user can access any application with a simple swipe on the fingerprint reader, a glance at the camera, or a static PIN entered on the device. FIDO2 passwordless authentication is MFA by default, since an attacker needs both physical access to the device and the user’s PIN or biometrics. It is also phishing resistant, because each credential is bound to the service it was created for and cannot be used to sign in to a look-alike site. FIDO2 uses public-key cryptography: the private key and the user’s biometric data never leave the user’s device, which protects the user’s privacy. It also prevents tracking of user activity across services, since a unique set of credentials is generated for each service.
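The properties described above (a private key that stays on the device, local user verification, and a separate key pair per service) can be illustrated with a toy challenge-response exchange in Python. This is a sketch under loud assumptions: the signature scheme is a textbook Schnorr-style construction modulo the Mersenne prime 2^127 - 1, chosen only so the example runs with the standard library, and it is not secure. Real FIDO2 authenticators use algorithms such as ECDSA over P-256, and real WebAuthn signs authenticator data plus a hash of client data rather than the bare `rp_id + challenge` used here. The `Authenticator` and `RelyingParty` classes, the PIN check standing in for biometrics, and the `bank.example`/`shop.example` identifiers are all hypothetical.

```python
import hashlib
import secrets

# TOY signature scheme (Schnorr-style, modulo the Mersenne prime 2**127 - 1).
# Illustration only, NOT secure. Real authenticators use e.g. ECDSA P-256.
P = 2**127 - 1
G = 3
ORDER = P - 1  # G**(P-1) == 1 (mod P) by Fermat, so exponents reduce mod P - 1


def _challenge_hash(r: int, message: bytes) -> int:
    digest = hashlib.sha256(r.to_bytes(16, "big") + message).digest()
    return int.from_bytes(digest, "big") % ORDER


def keygen() -> tuple[int, int]:
    private = secrets.randbelow(ORDER - 1) + 1
    return private, pow(G, private, P)


def sign(private: int, message: bytes) -> tuple[int, int]:
    k = secrets.randbelow(ORDER - 1) + 1  # fresh nonce per signature
    r = pow(G, k, P)
    return r, (k + _challenge_hash(r, message) * private) % ORDER


def verify(public: int, message: bytes, signature: tuple[int, int]) -> bool:
    r, s = signature
    return pow(G, s, P) == (r * pow(public, _challenge_hash(r, message), P)) % P


class Authenticator:
    """The user's device: private keys and the user-verification secret stay inside."""

    def __init__(self, pin: str):
        self._pin = pin
        self._keys: dict[str, int] = {}  # rp_id -> private key, one pair per service

    def _verify_user(self, pin: str) -> None:
        # Stand-in for local user verification (fingerprint, face, or PIN).
        if not secrets.compare_digest(pin.encode(), self._pin.encode()):
            raise PermissionError("user verification failed")

    def register(self, rp_id: str, pin: str) -> int:
        self._verify_user(pin)
        private, public = keygen()
        self._keys[rp_id] = private
        return public  # only the public key is sent to the service

    def authenticate(self, rp_id: str, challenge: bytes, pin: str) -> tuple[int, int]:
        self._verify_user(pin)
        if rp_id not in self._keys:
            raise LookupError(f"no credential registered for {rp_id}")
        return sign(self._keys[rp_id], rp_id.encode() + challenge)


class RelyingParty:
    """A service that stores public keys only and issues random challenges."""

    def __init__(self, rp_id: str):
        self.rp_id = rp_id
        self.public_keys: dict[str, int] = {}  # username -> public key

    def new_challenge(self) -> bytes:
        return secrets.token_bytes(32)

    def check(self, username: str, challenge: bytes, signature: tuple[int, int]) -> bool:
        return verify(self.public_keys[username], self.rp_id.encode() + challenge, signature)


device = Authenticator(pin="1234")
bank, shop = RelyingParty("bank.example"), RelyingParty("shop.example")
bank.public_keys["alice"] = device.register("bank.example", pin="1234")
shop.public_keys["alice"] = device.register("shop.example", pin="1234")

challenge = bank.new_challenge()
signature = device.authenticate("bank.example", challenge, pin="1234")
print(bank.check("alice", challenge, signature))  # True
# Each service sees a different public key, so the two accounts cannot be linked by key.
print(bank.public_keys["alice"] != shop.public_keys["alice"])
```

Because the service stores only public keys and each challenge is random, a stolen server database or a replayed response is of no use to an attacker in this model; a look-alike site simply has no credential to request.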

Is Security a Dev, DevOps or Security Team Responsibility?

Security is not the job of any one group or type of role. On the contrary, security is everyone’s job. Forward-thinking organizations must dispense with the mindset that a certain team “owns” security, and instead embrace security as a truly collective team responsibility that extends across the IT organization and beyond. After all, there is a long list of stakeholders in cloud security, including security teams, who are responsible for understanding threats and providing guidance on how to avoid them; developers, who must ensure that applications are designed with security in mind and that they do not contain insecure code or depend on vulnerable third-party software to run; ITOps engineers, whose main job is to manage software once it is in production and who therefore play a leading role both in configuring application-hosting environments to be secure and in monitoring applications to detect potential risks; and DevOps engineers, whose responsibilities span both development and ITOps work, placing them in a position to secure code during both the development and production stages.

Windows desktop apps are the future (with or without Windows)

Microsoft is betting big on this with Windows 365. Currently available only for businesses, Windows 365 is a Windows desktop-as-a-service hosted by Microsoft. Businesses can set up their employees with remotely accessed Windows desktops. Those employees can access them through nearly any device: a Chromebook, Mac, iPad, Android tablet, smart TV, smartphone, or whatever, even from a PC. Microsoft is building better support for accessing Windows 365 desktops into Windows 11, letting you flip between your cloud PC and local PC from the “Task View” button on your taskbar, or even boot straight to a Windows 365 cloud PC desktop on a physical Windows 11 PC. While this is only for businesses at the moment, internal documents show Microsoft is working on Windows 365 cloud PC plans for home users. It’s not just about Microsoft, either. Even Google now has a new solution for running Windows apps natively in ChromeOS called “ChromeOS Virtual App Delivery.”

How Failures Lead to Innovation

When failure occurs, it is essential not to give up on or abandon your idea. Instead, look at the problem differently and find a new solution. This process involves a series of steps that, when combined, can lead to groundbreaking innovation. First, reassess your vision and redefine your objectives. What was the original goal? Is it still relevant, or does the failure open up a new direction that could be more beneficial? Second, identify the root cause of the failure and understand its implications. This is where a deep dive into the details is crucial; in doing so, you might uncover overlooked opportunities or hidden insights. Third, brainstorm new solutions. Use the knowledge gained from the failure to think of innovative approaches or strategies that could work better. Fourth, prototype and test these new ideas. Not every new idea will be successful, but through prototyping and testing, you’ll get closer to a solution that works. Fifth, iterate on the process. Innovation is rarely a one-off event; it’s a continuous learning process of designing, testing, and refining.

Velocity Over Speed, A Winner Every Time

Precision Bias is the utterly false belief that we can ever accurately predict how long something will take. No one saw COVID coming, so every damn prediction made at the time failed to come true. And while most delays are not caused by such global meltdowns, they still happen. But the addiction to speed itself is one of the largest factors slowing down our delivery times. To understand velocity, we have to understand value, both intangible value and direct value. I call this ‘soaking in numbers’. When I am with a new client (read my article on clients vs. customers), I like to read up on every value metric they find important. I want mean time to recover. I want the number of new customers per day. I want net promoter scores, profitability, lead times, partner surveys, employee turnover, all of it. These are the language a set of stakeholders uses to describe value. Notice how few of those measures involve speed? I guesstimate that only 10-15% of any set of measures will be speed related. In fact, chasing speed will cause many of those metrics to fail: too many new hires, too many orders, too many acquisitions.

How to Succeed with Unifying DataOps and MLOps Pipelines

How to actually integrate data and ML pipelines depends on an organization’s existing overall structure. “Organizations are essentially either centralized or decentralized,” Kobielus said. For those that are already centralized to one degree or another, unifying data and ML pipelines is really just a question of converging the existing back ends, often in the form of a data lakehouse. In the case of a more decentralized organization, Kobielus explained, unification of the different back ends requires an abstraction layer that enables users to query data in a uniform, simplified way across all the disparate environments where it may reside. For many organizations, this layer is taking the form of a data mesh or a data fabric that consolidates access to data and analytics across a range of environments. “The bottom line for success,” Kobielus said, “is to what extent you can build more monetizable data and analytics and the degree to which you can automate all of it. That automation needs to happen on the back end.”
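Kobielus describes the decentralized case in terms of an abstraction layer that lets users query data uniformly wherever it lives. A minimal Python sketch of that idea, under stated assumptions, might look like the following: two hypothetical back ends (an in-memory SQLite database standing in for a warehouse, and a list of records standing in for lake files) sit behind adapters with a common `rows` method, and a `FederatedQueryLayer` fans a single query out to all of them. The class names are illustrative and do not correspond to any particular data mesh or data fabric product.

```python
import sqlite3
from typing import Iterable, Iterator, Protocol


class DataSource(Protocol):
    name: str

    def rows(self, table: str, filters: dict) -> Iterator[dict]: ...


class SqliteSource:
    """Adapter for a relational back end (an in-memory SQLite database here)."""

    def __init__(self, name: str, conn: sqlite3.Connection):
        self.name = name
        self.conn = conn
        self.conn.row_factory = sqlite3.Row

    def rows(self, table: str, filters: dict) -> Iterator[dict]:
        found = self.conn.execute(
            "SELECT 1 FROM sqlite_master WHERE type = 'table' AND name = ?", (table,)
        ).fetchone()
        if found is None:
            return  # this back end does not hold the table
        # Values are bound as parameters; table and column names are interpolated,
        # so a real layer must validate them against a catalog first.
        where = " AND ".join(f"{col} = ?" for col in filters) or "1 = 1"
        for row in self.conn.execute(f"SELECT * FROM {table} WHERE {where}",
                                     tuple(filters.values())):
            yield dict(row)


class RecordSource:
    """Adapter for record-oriented storage (e.g. lake files), modeled as lists of dicts."""

    def __init__(self, name: str, tables: dict[str, list[dict]]):
        self.name = name
        self.tables = tables

    def rows(self, table: str, filters: dict) -> Iterator[dict]:
        for record in self.tables.get(table, []):
            if all(record.get(col) == value for col, value in filters.items()):
                yield dict(record)


class FederatedQueryLayer:
    """Hypothetical abstraction layer: one query call fans out to every back end."""

    def __init__(self, sources: Iterable[DataSource]):
        self.sources = list(sources)

    def query(self, table: str, **filters) -> list[dict]:
        results = []
        for source in self.sources:
            for row in source.rows(table, filters):
                row["_source"] = source.name  # keep lineage: where did this row come from?
                results.append(row)
        return results


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER, region TEXT)")
conn.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "EU"), (2, "US")])
lake = RecordSource("lake", {"customers": [{"id": 3, "region": "EU"}]})

layer = FederatedQueryLayer([SqliteSource("warehouse", conn), lake])
for row in layer.query("customers", region="EU"):
    print(row)
```

Tagging each row with `_source` is a deliberate choice: a uniform query interface hides where data lives, so recording lineage keeps merged results auditable.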

Quote for the day:

"If you set your goals ridiculously high and it's a failure, you will fail above everyone else's success." --James Cameron