Why CIOs must think of themselves as products—and hostage negotiators

Despite the growing breadth of the CIO’s role, Tyler believes that technology executives can go further still, extending their value and influence within the organization by thinking of themselves less as a service provider and more as a ‘powerful, valuable product’ that senior executives, partners and peers need to do their jobs effectively. “Product value proposition in business terms is something we develop when we’re trying to create the next generation of goods and services for our customers or citizens, any stakeholder we’re working with,” he said ... “Your leadership is a product that all of your executive team, partners, peers, and all of the people in your organization needs,” he said. Tyler also suggested that CIOs must build their own product value proposition to deliver maximum value to the business, and make a promise to stakeholders about how technology will help them achieve their desired outcomes. Technology leaders can start with simple steps, he added: understanding who consumes IT, deeply understanding those consumers’ jobs, and working out how IT can remove pains and create gains.

Digital transformation trends in 2023

Automation adoption is often viewed as one of the best methods that companies can employ to streamline processes and boost revenues without causing costs to spiral out of control. Recognising the benefits that automation can bring, 54 per cent of organisations have already begun implementing robotic process automation (RPA) in their processes, according to a Deloitte survey. With the economy in such dire straits, and the landscape continuing to look so grim for businesses, it is highly likely that we will see more companies investing in this technology than ever before. Not only will implementing automation be an affordable alternative to a full digital transformation project for many businesses, it will also provide a basis upon which to create new efficiencies in the years to come. Indeed, Gartner predicts that, by 2024, hyperautomation will enable organisations to lower their operational costs by 30 per cent. At a time of great economic uncertainty, when every penny that businesses spend needs to be clearly justified, automation is a proven and dependable cost saver.

5 Ways to Embrace Next-Generation AI

While AI can solve big problems, it doesn’t have to do so all at once. “In the projects I’ve seen successful from our customers or internally, it is just getting moving with the tools you have or small investments and then growing from there,” Mark Maughan, chief analytics officer at cloud business intelligence platform Domo, asserted. “Just get moving. Get started. Test, learn, grow, and iterate.” ... A significant part of gaining that buy-in is having the talent that can communicate effectively with the various stakeholders. Leveraging storytelling to illustrate the problem and how AI can solve it is a powerful tool. The earlier organizations engage relevant stakeholders, the more likely the project is to be successful. That ability to communicate may or may not come naturally, but it can be learned. “One thing that we've always found very helpful is either rotations or shadowing for anyone in the data science analytic organization around the different business and operational stakeholder groups to get a better understanding of what is actually going on,” Finnerty said.

The cybersecurity challenges and opportunities of digital twins

Unfortunately, while CISOs should be key stakeholders in digital twin projects, they are almost never the ultimate decision maker, says Alfonso Velosa, research vice president of IoT at Gartner. “Since digital twins are tools to drive business process transformation, the business or operational unit will often lead the initiative. Most digital twins are custom-built to address a specific business requirement,” he says. When an enterprise buys a new smart asset, whether a truck, backhoe, elevator, compressor, or freezer, it will often come with a digital twin, according to Velosa. “Most of the operational teams will need a streamlined and cross-IT (not just CISO) set of support to integrate them into their broader business processes and to manage security.” If proper cybersecurity controls aren’t put in place, digital twins can expand a company’s attack surface, give threat actors access to previously inaccessible control systems, and expose pre-existing vulnerabilities. When a digital twin of a system is created, the potential attack surface effectively doubles: adversaries can go after the system itself or attack its digital twin.

RegTech Can Help Solve ESG Data Management and Trust Challenges

The benefits that RegTech offers sustainability data officers go beyond mere automation of processes; they also include a guarantee that the data will be seamlessly integrated into a financial institution’s broader data assets and that it is traceable and auditable. Katie Carrasco, head of ESG at Global Innovation Fund, an impact investment vehicle, agreed, saying that RegTech could provide a firm with a 360-degree view of its ESG data. That view is critical to good governance, which in turn is important for accurately identifying opportunities and risks. Data traceability and auditability will also provide credibility, argued Mary Anne Bullock, global strategic account director for Solidatus. At a time when greenwashing is making headlines and undermining the ESG project, Bullock said, being able to trace data from source to use case would help demonstrate its veracity, offer transparency into firms’ activities and build trust. Building trust comes down to credible metrics, said Seethepalli, and those would come when auditability is added to the data management mix.

As Complexity Challenges Security, Is Time the Solution?

"Complexity leaves me in a very depressing place; complexity is just forever increasing," said Moss, who's the founder of Black Hat and regularly opens the conference by detailing leading challenges as well as potential solutions. For addressing complexity, he said, "time has got me pretty excited." Simply put, being strategic about doing things faster, including detection and recovery, gives organizations one tactic to blunt the impact of increased complexity. Not all complexity comes from technological evolution such as malware built to better evade defenses or criminals wielding zero-day exploits. Last week, researchers debuted ChatGPT, a prototype conversational AI chatbot that can sometimes appear to be human. This means added complexity for security professionals, since many security tools use attackers' poor command of English to detect and block phishing attacks. Expect criminals to soon use tools such as ChatGPT to write lures that seem to have been crafted by a native speaker, said Daniel Cuthbert, a veteran cybersecurity researcher who's a member of the U.K. government's new cyber advisory board.

Meta’s behavioral ads will finally face GDPR privacy reckoning in January

If Meta is forced to ask users whether they want “personalized” ads (its favored euphemism for surveillance ads), that is definitely big news, given that denial rates are typically very high when web users are actually given a choice over targeted ads. The crux of noyb’s original complaints against Meta services was that users were not offered a choice to deny its processing for advertising, despite the GDPR stipulating that if consent is the legal basis being claimed for processing personal data, it must be specific, informed and freely given. However (plot twist!), it later emerged that as the GDPR came into application, Meta had quietly switched from claiming consent as its legal basis for this behavioral advertising processing to saying it is necessary for the performance of a contract, claiming that users of Facebook and Instagram are in a contract with Meta to receive targeted ads. This argument implies that Meta’s core service is not social networking; it’s behavioral advertising. Max Schrems, noyb’s honorary chairman and long-time privacy law thorn in Facebook’s side, has called this an exceptionally shameless attempt to bypass the GDPR.

Introduction to Interface-Driven Development (IDD)

This concept already exists in some areas, such as protocol-oriented programming in Swift and interface-based programming in Java, and it is based on Design by Contract by Bertrand Meyer, described in his book “Object-Oriented Software Construction”. In the book, he discusses standards for contracts between a method and its caller. Hunt and Thomas also rely on a similar concept in their book “The Pragmatic Programmer”, in the section on Prototyping Architecture: “Most prototypes are constructed to model the entire system under consideration. As opposed to tracer bullets, none of the modules in the prototype system need to be particularly functional. What you are looking for is how the system hangs together as a whole, again deferring details.” The problem this process aims to solve is that components are often vaguely defined during design, so we tend to give some components more responsibility than necessary. The usual result of such a design is poor, untestable code.
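The interface-first idea can be sketched in Python. This is a minimal illustration under stated assumptions, not the article's method: the `PaymentGateway`, `StubGateway`, `OrderService` and `Receipt` names are hypothetical, and the contract is expressed as an abstract base class plus explicit pre- and postcondition checks. It shows three things: an interface written down as a contract before any real implementation exists, a deliberately non-functional stub that lets the system "hang together" (as in the prototyping quote above), and a narrowly scoped component that depends only on the interface and is therefore easy to test.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass(frozen=True)
class Receipt:
    order_id: str
    amount_cents: int


class PaymentGateway(ABC):
    """Hypothetical interface, defined before any implementation.

    Contract (Design by Contract style):
      precondition:  amount_cents > 0
      postcondition: the receipt echoes order_id and amount_cents
    """

    @abstractmethod
    def charge(self, order_id: str, amount_cents: int) -> Receipt:
        ...


class StubGateway(PaymentGateway):
    """Prototype stand-in: no real payment logic, it only honours the contract."""

    def charge(self, order_id: str, amount_cents: int) -> Receipt:
        return Receipt(order_id, amount_cents)


class OrderService:
    """Depends only on the PaymentGateway interface, keeping one narrow responsibility."""

    def __init__(self, gateway: PaymentGateway) -> None:
        self._gateway = gateway

    def checkout(self, order_id: str, amount_cents: int) -> Receipt:
        # Precondition: enforced before the gateway is called.
        if amount_cents <= 0:
            raise ValueError("amount_cents must be positive")
        receipt = self._gateway.charge(order_id, amount_cents)
        # Postcondition: the gateway must keep its side of the contract.
        # (Plain asserts are skipped under `python -O`; a real system might raise instead.)
        assert receipt.order_id == order_id and receipt.amount_cents == amount_cents
        return receipt


if __name__ == "__main__":
    service = OrderService(StubGateway())
    print(service.checkout("A-1", 2500))  # Receipt(order_id='A-1', amount_cents=2500)
```

Because `OrderService` only sees the abstract interface, the stub can later be swapped for a real gateway without touching it, and each piece can be tested in isolation against the same contract.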

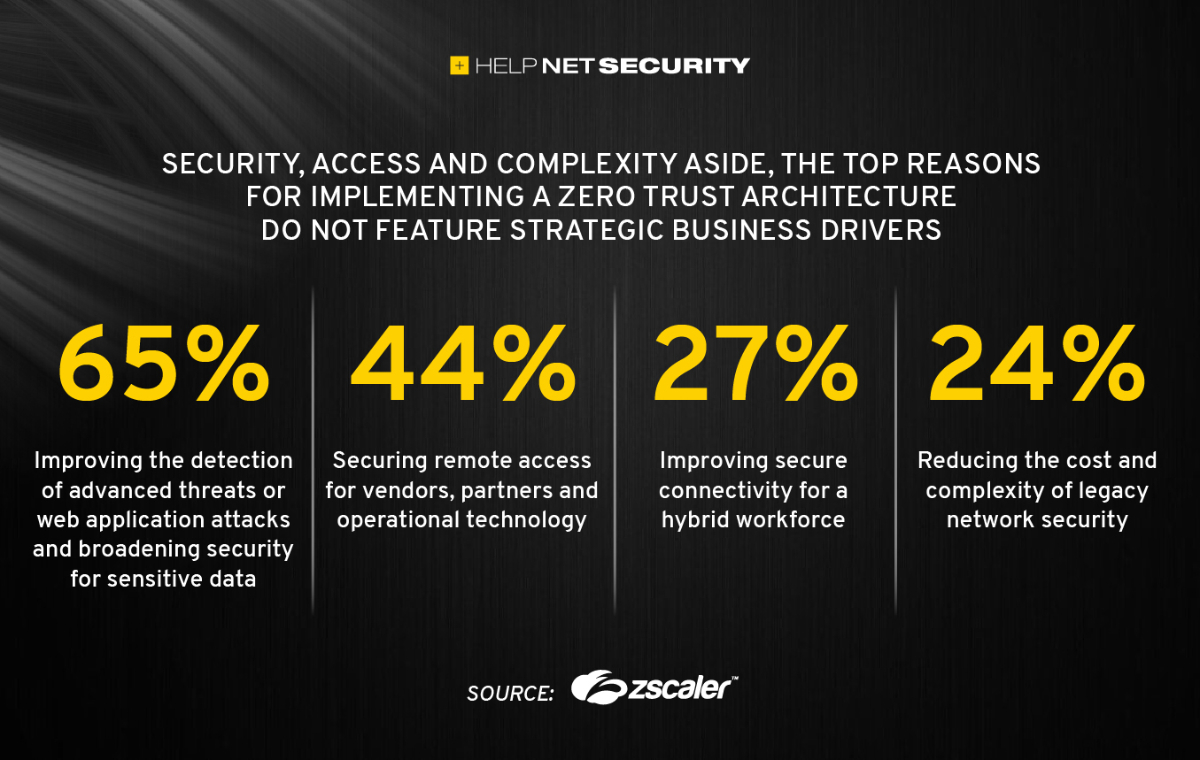

Leveraging the full potential of zero trust

In line with the motivations behind cloud migration, Zscaler found that a focus on wider strategic outcomes is missing from how organizations are planning emerging technology initiatives. Asked about the single most challenging aspect of implementing emerging technology projects, 30% cited adequate security, followed by budget requirements for further digitization (23%). However, only 19% cited dependency on strategic business decisions as a challenge. While budget concerns are natural, the focus on securing the network while ignoring strategic business alignment suggests that organizations are focused on security without a full understanding of its business benefit, and that zero trust itself is not yet understood as a business enabler. “The state of zero trust transformation within organizations today is promising – implementation rates are strong,” said Nathan Howe, VP of Emerging Tech, 5G at Zscaler. “But organizations could be more ambitious. There’s an incredible opportunity for IT leaders to educate business decision-makers on zero trust as a high-value business driver, especially as they grapple with providing a new class of hybrid workplace or production environment and reliant on a range of emerging technologies, such as IoT and OT, 5G and even the metaverse.”

Going from Architect to Architecting: the Evolution of a Key Role

A fundamental principle of today’s software architecture is that it's an evolutionary journey, with varying routes and many influences. That evolution means we change our thinking based on what we learn, but the architect has a key role in enabling that conversation to happen. ... The architect is no longer the sole player in the software development game. Architecting a system is now a team sport. That team is the cross-functional capability that now delivers a product, and it is made up of anyone who adds value to the overall process of delivering software, which still includes the architect. Part of the reason behind this, as discussed earlier, is that the software development ecosystem is a polyglot of technologies, languages (not only development languages but also business and technical ones), experiences (development and user) and stakeholders. No one person can touch all bases. This change has surfaced the need for a mindset shift for the architect: working as part of a team has great benefits but also brings its own challenges.

Quote for the day:

"Leaders are more powerful role models when they learn than when they teach." -- Rosabeth Moss Kanter