How will self-driving cars affect public health?

The researchers created a conceptual model to systematically identify the pathways through which automated vehicles (AVs) can affect public health. The proposed model summarizes the potential changes in transportation after AV implementation into seven points of impact: transportation infrastructure; land use and the built environment; traffic flow; transportation mode choice; transportation equity; jobs related to transportation; and traffic safety. Each of these transportation changes is then linked to potential health impacts.

In optimistic views, AVs are expected to prevent 94% of traffic crashes by eliminating driver error. However, AV operation introduces new safety issues, such as sensors that malfunction when detecting objects, misinterpretation of data, and poorly executed responses. Any of these can undermine the reliability of AVs and cause serious safety consequences in an automated environment. Another possible safety concern is riskier behavior by users who over-rely on AVs, for example neglecting to wear seatbelts because of a false sense of safety. In urban areas, AVs could also shift people away from public transportation and active transportation, such as walking and biking, toward private vehicles, which can result in more air pollution and greenhouse gas emissions. Automation could also eliminate driving jobs in the public transit and freight transport industries.

Now’s The Time For Long-Term Thinking

For most financial institutions, the strategic planning process for 2021 is far different from any in the past. Rather than making iterative adjustments to the previous year's plans, this year's planning must account for change in technology, competition, consumer behavior, society and many other areas that is far less predictable than before. This uncertainty requires combining a solid strategic foundation with market-sensing capabilities and the ability to respond to threats and opportunities as quickly as possible. For many banks and credit unions, this will require organizational restructuring, reallocating resources, revamping processes, finding new outside partners and building a culture that supports a degree of planning flexibility that was never required before.

Institutions also need to build a marketplace-sensing capability across the entire organization that draws on a broader array of sources. These include customers, internal staff (especially customer-facing employees), suppliers, strategic partners, research organizations, boards of directors and even competitors. Gathering the insights is only half the battle. There must also be a central place to consolidate and analyze them.

Rapid Threat Evolution Spurs Crucial Healthcare Cybersecurity Needs

Cybercriminals have been actively taking advantage of the global pandemic, with an increase in cyberattacks, phishing, spear-phishing, and business email compromise (BEC) attempts. On the healthcare side, NCSA Executive Director Kelvin Coleman said this is not a huge surprise. Even in the early 1900s, during the Spanish flu pandemic, people placed hoaxes and scams in newspapers to take advantage of the crisis, Coleman explained. “Bad actors take advantage of crises,” he said. “Hackers are being aggressive, leveraging targeted emails and phishing attempts.”

Josh Corman, cofounder of IAmTheCavalry.org and DHS CISA Visiting Researcher, stressed that EHR downtime is even more nightmarish during a pandemic. When a provider is forced into downtime, it may have to divert patient care. In Germany, a ransomware attack shut down operations at a hospital earlier this month. A patient had to be diverted to another hospital and died. These are criminals without scruples, Corman explained. The attacks were happening before the pandemic, but there has been no ceasefire amid the crisis. In healthcare, hackers continue to rely on attack methods that have worked before. Phishing in particular remains highly successful.

FBI, CISA: Russian hackers breached US government networks, exfiltrated data

US officials identified the Russian hacker group as Energetic Bear, a codename used by the cybersecurity industry. Other names for the same group include TEMP.Isotope, Berserk Bear, TeamSpy, Dragonfly, Havex, Crouching Yeti, and Koala. Officials said the group has been targeting dozens of US state, local, territorial, and tribal (SLTT) government networks since at least February 2020. Companies in the aviation industry were also targeted, CISA and FBI said. The two agencies said Energetic Bear "successfully compromised network infrastructure, and as of October 1, 2020, exfiltrated data from at least two victim servers."

The intrusions detailed in today's CISA and FBI advisory are a continuation of attacks described in a previous joint CISA and FBI alert, dated October 9. That advisory described how hackers had breached US government networks by chaining vulnerabilities in VPN appliances with Windows bugs. Today's advisory attributes those intrusions to the Russian hacker group and also provides additional details about Energetic Bear's tactics. According to the technical advisory, the hackers used publicly known vulnerabilities to breach networking gear, pivot to internal networks, elevate privileges, and steal sensitive data.

Secure NTP with NTS

NTP can be secured well with symmetric keys. Unfortunately, the server has to have a different key for each client, and the keys have to be securely distributed. That might be practical with a private server on a local network, but it does not scale to a public server with millions of clients. NTS (Network Time Security) includes a Key Establishment (NTS-KE) protocol that automatically creates the encryption keys used between the server and its clients. It uses Transport Layer Security (TLS) on TCP port 4460. It is designed to scale to very large numbers of clients with minimal impact on accuracy. The server does not need to keep any client-specific state. Instead, it provides clients with cookies, which are encrypted and contain the keys needed to authenticate the NTP packets.

Privacy is one of the goals of NTS. The client gets a new cookie with each server response, so it never has to reuse a cookie. This prevents passive observers from tracking clients that migrate between networks.

The default NTP client in Fedora is chrony, which added NTS support in version 4.0. The default configuration hasn’t changed: chrony still uses public servers from the pool.ntp.org project, and NTS is not enabled by default. Currently, very few public NTP servers support NTS. The two major providers are Cloudflare and Netnod.
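The stateless-cookie idea can be sketched in a few lines of Python. This is a toy under stated assumptions, not an NTS implementation:

- Real NTS (RFC 8915) seals cookies with an AEAD algorithm such as AEAD_AES_SIV_CMAC_256.
- This sketch substitutes an HMAC-SHA256 keystream plus an HMAC tag so it runs with only the standard library.
- The class and method names (`ToyNtsServer`, `make_cookie`, `open_cookie`, `handle_request`) are hypothetical.
- In real NTS the two session keys come out of the NTS-KE TLS handshake. Here they are simply random bytes.

```python
import hashlib
import hmac
import os
from typing import Tuple

NONCE_LEN = 16
TAG_LEN = 32  # HMAC-SHA256 output size in bytes
KEY_LEN = 32


class ToyNtsServer:
    """Toy stateless server: it stores only a master key, never per-client keys."""

    def __init__(self) -> None:
        self._master_key = os.urandom(KEY_LEN)

    def _keystream(self, nonce: bytes, length: int) -> bytes:
        # Stand-in cipher: HMAC-SHA256 in counter mode (NOT the AEAD that NTS uses).
        stream = b""
        counter = 0
        while len(stream) < length:
            block = hmac.new(self._master_key, b"enc" + nonce + counter.to_bytes(4, "big"), hashlib.sha256)
            stream += block.digest()
            counter += 1
        return stream[:length]

    def make_cookie(self, c2s_key: bytes, s2c_key: bytes) -> bytes:
        """Seal both session keys into an opaque cookie handed to the client."""
        nonce = os.urandom(NONCE_LEN)  # fresh nonce, so every cookie looks different
        plaintext = c2s_key + s2c_key
        ciphertext = bytes(p ^ k for p, k in zip(plaintext, self._keystream(nonce, len(plaintext))))
        tag = hmac.new(self._master_key, b"mac" + nonce + ciphertext, hashlib.sha256).digest()
        return nonce + ciphertext + tag

    def open_cookie(self, cookie: bytes) -> Tuple[bytes, bytes]:
        """Authenticate a returned cookie and recover the session keys from it."""
        nonce, ciphertext, tag = cookie[:NONCE_LEN], cookie[NONCE_LEN:-TAG_LEN], cookie[-TAG_LEN:]
        expected = hmac.new(self._master_key, b"mac" + nonce + ciphertext, hashlib.sha256).digest()
        if not hmac.compare_digest(tag, expected):
            raise ValueError("cookie failed authentication")
        plaintext = bytes(c ^ k for c, k in zip(ciphertext, self._keystream(nonce, len(ciphertext))))
        return plaintext[:KEY_LEN], plaintext[KEY_LEN:]

    def handle_request(self, cookie: bytes, packet: bytes, packet_tag: bytes) -> bytes:
        """Verify a client packet using only the cookie, then issue a fresh cookie."""
        c2s_key, s2c_key = self.open_cookie(cookie)
        expected = hmac.new(c2s_key, packet, hashlib.sha256).digest()
        if not hmac.compare_digest(packet_tag, expected):
            raise ValueError("packet failed authentication")
        return self.make_cookie(c2s_key, s2c_key)


# Usage: the client keeps the keys; the server keeps nothing but its master key.
server = ToyNtsServer()
c2s, s2c = os.urandom(KEY_LEN), os.urandom(KEY_LEN)
cookie = server.make_cookie(c2s, s2c)
packet = b"ntp request"
next_cookie = server.handle_request(cookie, packet, hmac.new(c2s, packet, hashlib.sha256).digest())
```

Because each cookie carries a random nonce, two cookies for the same client are unrelated byte strings. That is the property that keeps a passive observer from linking requests across networks.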

Non-Intimidating Ways To Introduce AI/ML To Children

The brainchild of IBM, Machine Learning for Kids is a free, web-based tool that introduces children to machine learning systems and real-world applications of AI. It was built by Dale Lane using APIs from IBM Watson and provides hands-on experiments for training ML systems that recognise text, images, sounds, and numbers. It uses platforms such as Scratch and App Inventor to create interesting projects and games. Schools also use it as a significant resource for teaching AI and ML. Teachers can set up their own admin page to manage student access.

A product of the MIT Media Lab, Cognimates is an open-source AI learning platform for children aged 7 and up. Children can learn how to build games and robots and train their own AI models. Like Machine Learning for Kids, Cognimates is based on the Scratch programming language. It provides a library of tools and activities for learning AI and even lets children program intelligent devices such as Alexa.

AIY is another offering, from Google, designed to make learning AI fun and engaging. The name is a play on AI and do-it-yourself (DIY).
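To make "training a system to recognise text" concrete, here is a minimal sketch of what such a classifier does with labelled examples. It is an illustration under assumptions only. Machine Learning for Kids relies on IBM Watson services rather than code like this. The multinomial naive Bayes approach, the `TinyTextClassifier` name, and the sample phrases are all hypothetical.

```python
import math
from collections import Counter, defaultdict
from typing import Dict, List, Set, Tuple


class TinyTextClassifier:
    """Multinomial naive Bayes over word counts, with add-one smoothing."""

    def __init__(self) -> None:
        self.word_counts: Dict[str, Counter] = defaultdict(Counter)
        self.label_counts: Counter = Counter()
        self.vocabulary: Set[str] = set()

    def train(self, examples: List[Tuple[str, str]]) -> None:
        # Count how often each word appears under each label.
        for text, label in examples:
            words = text.lower().split()
            self.label_counts[label] += 1
            self.word_counts[label].update(words)
            self.vocabulary.update(words)

    def predict(self, text: str) -> str:
        if not self.label_counts:
            raise ValueError("train the classifier first")
        words = text.lower().split()
        total_examples = sum(self.label_counts.values())
        vocab_size = len(self.vocabulary)
        best_label, best_score = "", -math.inf
        for label, count in self.label_counts.items():
            score = math.log(count / total_examples)  # prior: how common the label is
            label_total = sum(self.word_counts[label].values())
            for word in words:
                # Add-one smoothing so an unseen word doesn't zero out the score.
                score += math.log((self.word_counts[label][word] + 1) / (label_total + vocab_size))
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Usage: a child might label a few phrases as "happy" or "sad", then test new ones.
examples = [
    ("i love this game", "happy"), ("what a great day", "happy"), ("this is so fun", "happy"),
    ("i hate rain", "sad"), ("this is a terrible day", "sad"), ("i feel awful", "sad"),
]
clf = TinyTextClassifier()
clf.train(examples)
print(clf.predict("what a fun game"))  # happy
print(clf.predict("i feel terrible"))  # sad
```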

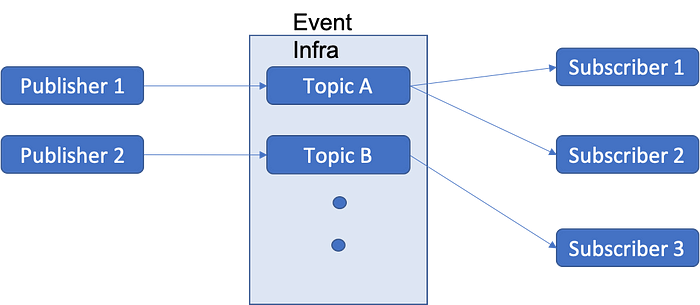

How RPA differs from conversational AI, and the benefits of both

Enterprises are working to digitally transform core business processes to enable greater automation of backend processes and to encourage more seamless customer experiences and self-service at the frontend. We are seeing banks, insurers, retailers, energy providers and telcos working to develop their own digital assistants with a growing number of skills, while still providing a consistent brand experience.

Developing bots doesn’t have to be complex. It is more important to carefully identify the right use cases where these technologies will deliver clear ROI with the least amount of effort. Whether an enterprise is applying RPA or conversational AI, or both, it’s important to first understand the business problem that needs to be solved, and then identify where bots will make an immediate difference. Then consider the investment required, barriers to successful implementation, and the expected business outcomes. It’s better to start small with a narrowly focused use case and achievable KPIs, rather than trying to do too much at once.

Conversational AI and RPA are very powerful automation technologies. When designed well, a chatbot can automate up to 80% of routine queries that come into a customer service centre or IT helpdesk, saving an organisation time and money and enabling it to scale its operations.

Things to consider when running visual tests in CI/CD pipelines: Getting Started

Testing – it’s an important part of a developer’s day-to-day, but it’s also crucial to the operations engineer. In a world where DevOps is more than just a buzzword, where it’s become accepted as a mindset shift and culture change, we all need to consider running quality tests. Traditional testing may include UI testing, integration testing, code coverage checks, and so forth, but at some point, we still need eyeballs on a physical page. How many times have we seen a funny-looking page because of CSS errors? Or worse yet, an important button, say “Buy now,” effectively missing because someone changed the CSS and now the button blends in with the background? Logically, the page still works. From a traditional test perspective, the button can be clicked, and the DOM (used in UI test verification) is perfect. Visually, however, the page is broken, and this is where visual testing comes into play. Visual testing combines automated UI testing with the power of AI to help us determine whether a page “looks right,” not just whether it “functions right.”

Earlier this year, I partnered with Angie Jones from Applitools on a joint webinar where we talked about best practices for both visual testing and CI/CD. This blog post is a summary of that webinar and how to handle visual testing in CI/CD.
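The core idea can be sketched as a naive pixel comparison against an approved baseline screenshot. This is a simplification under stated assumptions. Tools such as Applitools use AI-based comparison rather than a raw pixel diff. The function names, the per-channel tolerance of 16, and the 1% failure threshold are all hypothetical choices.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]    # (R, G, B), each 0-255
Screenshot = List[List[Pixel]]  # rows of pixels


def mismatch_ratio(baseline: Screenshot, candidate: Screenshot, tolerance: int = 16) -> float:
    """Fraction of pixels where any color channel differs by more than `tolerance`."""
    if len(baseline) != len(candidate) or any(
        len(row_a) != len(row_b) for row_a, row_b in zip(baseline, candidate)
    ):
        raise ValueError("screenshots must have identical dimensions")
    total = differing = 0
    for row_a, row_b in zip(baseline, candidate):
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += 1
            if max(abs(a - b) for a, b in zip(pixel_a, pixel_b)) > tolerance:
                differing += 1
    return differing / total if total else 0.0


def passes_visual_check(baseline: Screenshot, candidate: Screenshot, max_ratio: float = 0.01) -> bool:
    """Return False (fail the pipeline step) if more than `max_ratio` of the page changed."""
    return mismatch_ratio(baseline, candidate) <= max_ratio


# Usage: a 4x4 white page with a 2x2 green "Buy now" button in the middle.
WHITE, GREEN = (255, 255, 255), (0, 128, 0)


def render(button_color: Pixel) -> Screenshot:
    return [[button_color if 1 <= r <= 2 and 1 <= c <= 2 else WHITE for c in range(4)] for r in range(4)]


baseline = render(GREEN)
broken = render(WHITE)  # CSS change: the button now blends into the background
print(mismatch_ratio(baseline, broken))       # 0.25
print(passes_visual_check(baseline, broken))  # False
```

A DOM-based assertion would still pass on `broken`, because the button element is unchanged. Only the rendered pixels reveal the regression.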

Design patterns – for faster, more reliable programming

Every design has a pattern and everything has a template, whether it be a cup, a house, or a dress. No one would consider attaching a cup’s handle to the inside – apart from novelty item manufacturers. Experience has simply shown that these components belong on the outside for practical purposes. If you are taking a pottery class and want to make a pot with handles, you already know what the basic shape should be. It is stored in your head as a design pattern, in a manner of speaking.

The same general idea applies to computer programming. Certain procedures are repeated frequently, so it was no great leap to think of creating pattern templates for them. In our guide, we will show you how these design patterns can simplify programming.

The term “design pattern” was originally coined by the American architect Christopher Alexander, who created a collection of reusable patterns. His plan was to involve future users of the structures in the design process. This idea was then adopted by a number of computer scientists. Erich Gamma, Richard Helm, Ralph Johnson, and John Vlissides (sometimes referred to as the Gang of Four, or GoF) helped software patterns break through and gain acceptance with their 1994 book “Design Patterns: Elements of Reusable Object-Oriented Software.”
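The passage does not name a specific pattern, so the choice of example here is an assumption. The GoF Template Method pattern captures the pottery idea directly: a base class fixes the overall shape of an algorithm, and subclasses fill in only the steps that vary. The exporter classes below are hypothetical.

```python
from abc import ABC, abstractmethod
from typing import List, Tuple

Row = Tuple[str, int]


class ReportExporter(ABC):
    """Template Method: export() fixes the skeleton; subclasses supply the varying steps."""

    def export(self, rows: List[Row]) -> str:
        # The invariant "shape": header, one line per row, then a totals footer.
        lines = [self.header()]
        lines.extend(self.format_row(name, qty) for name, qty in rows)
        lines.append(self.footer(sum(qty for _, qty in rows)))
        return "\n".join(lines)

    @abstractmethod
    def header(self) -> str: ...

    @abstractmethod
    def format_row(self, name: str, qty: int) -> str: ...

    @abstractmethod
    def footer(self, total: int) -> str: ...


class CsvExporter(ReportExporter):
    def header(self) -> str:
        return "item,qty"

    def format_row(self, name: str, qty: int) -> str:
        return f"{name},{qty}"

    def footer(self, total: int) -> str:
        return f"total,{total}"


class MarkdownExporter(ReportExporter):
    def header(self) -> str:
        return "| item | qty |\n|---|---|"

    def format_row(self, name: str, qty: int) -> str:
        return f"| {name} | {qty} |"

    def footer(self, total: int) -> str:
        return f"| **total** | {total} |"


rows = [("cup", 2), ("pot", 1)]
print(CsvExporter().export(rows))
print(MarkdownExporter().export(rows))
```

Adding a new output format means writing one small subclass. The ordering logic in `export()` is never copied, which is the "proven shape" the pottery analogy describes.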

Public and Private Blockchain: How to Differentiate Them and Their Use Cases

Public blockchain is the model of Bitcoin, Ethereum, and Litecoin and is essentially considered to be the original distributed ledger structure. This type of blockchain is completely open, and anyone can join and participate in the network. Anyone in the world can send and receive transactions on it, and anyone in the system can audit it. Each node (a computer connected to the network) has the same power to transmit and validate transactions as any other. This makes public blockchains not only decentralized but fully distributed as well.

...

Private blockchains, on the other hand, are essentially forks of the original but are deployed in what is called a permissioned manner. To gain access to a private blockchain network, one must be invited and then validated. Validation is done either by the network starter or by specific rules that the network starter put in place. Once the invitation is accepted, the new entity can contribute to maintaining the blockchain in the customary manner. Because the blockchain runs on a closed network, it offers the benefits of the technology but not necessarily the distributed characteristics of a public blockchain.
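The difference in admission policy can be sketched as a toy membership model. It is a hypothetical simplification: `Network`, `invite`, `join`, and `admission_rule` are illustrative names. Real permissioned networks manage identity with certificates and consensus rules rather than an in-memory set.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional, Set


@dataclass
class Network:
    permissioned: bool
    # Hypothetical rule set by the network starter; only consulted on private networks.
    admission_rule: Optional[Callable[[str], bool]] = None
    invited: Set[str] = field(default_factory=set)
    members: Set[str] = field(default_factory=set)

    def invite(self, node_id: str) -> None:
        """Network starter issues an invitation (meaningful only when permissioned)."""
        self.invited.add(node_id)

    def join(self, node_id: str) -> bool:
        if not self.permissioned:
            self.members.add(node_id)  # public chain: anyone may join
            return True
        if node_id not in self.invited:
            return False  # private chain: no invitation, no access
        if self.admission_rule is not None and not self.admission_rule(node_id):
            return False  # invited, but failed the starter's validation rule
        self.members.add(node_id)
        return True


public = Network(permissioned=False)
private = Network(permissioned=True, admission_rule=lambda node: node.endswith(".bank"))
private.invite("alice.bank")
print(public.join("anyone"), private.join("alice.bank"), private.join("bob.bank"))  # True True False
```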

Quote for the day:

"Every moment is a golden one for those who have the vision to recognize it as such." -- Henry Miller