Financial crime group FIN11 pivots to ransomware and stolen data extortion

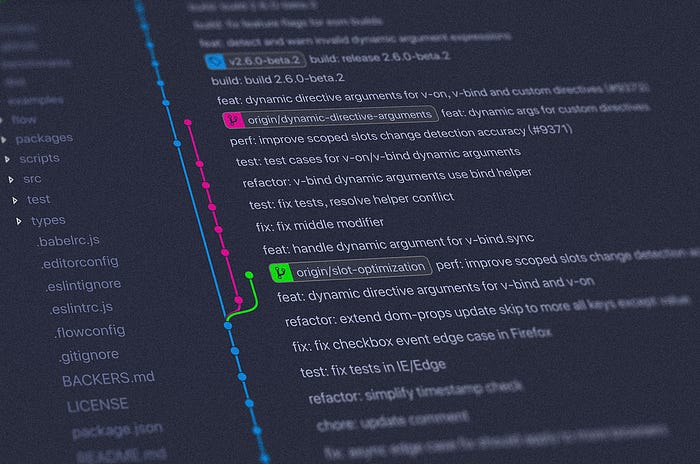

Despite casting a wide net with its phishing campaigns, FIN11 chooses to perform deeper compromises on only a small subset of its victims, which are likely selected based on their size, industry and likelihood of paying. Like several other sophisticated ransomware gangs, FIN11 uses manual hacking to move laterally through networks and deploy its ransomware, so the group might not have enough manpower to do this on a large scale. If a victim looks interesting, after the initial intrusion the FIN11 attackers deploy multiple backdoors with the goal of moving laterally and obtaining domain administrator privileges. Even though its exclusive tools like FlawedAmmyy and MIXLABEL are used to gain the initial foothold, the lateral movement activity involves the use of many publicly available tools. This is similar to how an increasing number of hacker groups operate. Once domain admin credentials have been obtained, the attackers use various tools to disable Windows Defender and deploy the CLOP ransomware to hundreds of computers using Group Policy Objects. FIN11's ransom notes include only an email address for victims to contact and do not specify a ransom amount, suggesting the ransom is later customized based on who the victim organization is.

How to ignite a mainframe transformation with three key mindset changes

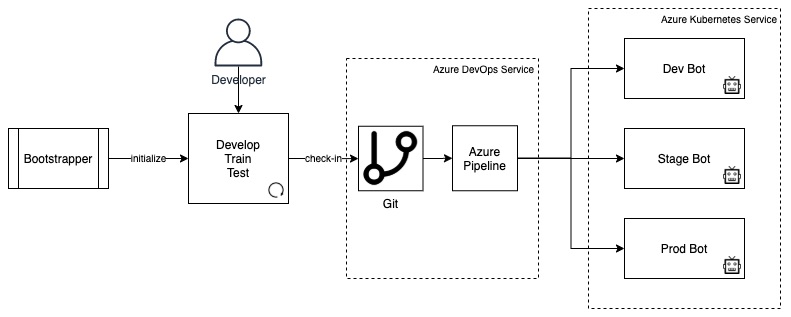

There’s often a misconception that IT departments have to plan their entire mainframe transformation at the same time, which usually leads to delays and pushback from teams who believe the effort is simply too ambitious, or fear it will take too long to achieve. It’s important to remember that mainframe teams usually have a backlog of essential, customer-impacting work to complete, so it’s difficult to take resources away from those tasks to support an internal transition project. It’s far more effective to break the transformation down into smaller steps, using Agile thinking to enable incremental change, and establish continuous feedback and improvements. Instead of trying to build a complete environment for Agile delivery on the mainframe, it’s better to break the process down into steps, using shorter sprints to manage the transition and mitigate any risk and resource constraints. Start by modernising a single aspect of mainframe delivery, such as improving the developer experience with an integrated development environment (IDE), then add automated testing processes, or application analysis and visualisation in stages, to avoid overwhelming teams with a major transition project all at once. It also helps get more people on board, by allowing them to see the benefits of each step before they take the next one.

Agile resilience in the UK: Lessons from COVID-19 for the ‘next normal’

Alongside establishing a guiding purpose, the most effective organizations focused on more frequent communications, taking an adult-to-adult tone that explained decisions and shared a realistic assessment. During the COVID-19 pandemic at UK Power Networks, for example, the CEO shared daily video messages showing the rationale behind corporate decisions. Feedback from employees demonstrated the positive effect of this clear communication and transparency. For organizations that have found a new focus during the COVID-19 crisis, the next key step should be to consider if they can enhance and develop their common purpose to hold true in more normal times, giving employees the same clarity of decision making and ability to act as during the COVID-19 crisis. Agile organizations often speak of a shared purpose and vision, the “North Star”, which helps people feel personally and emotionally invested in the organization. This North Star allows employees to individually and proactively watch for changes in customer preferences and the external environment, and then act upon them. ... The second shared practice we found was that organizations created new forums and structures, or repurposed existing ones, to act as rapid-decision-making bodies.

Build Next-Generation Cloud Native Applications with the SMOKE Stack

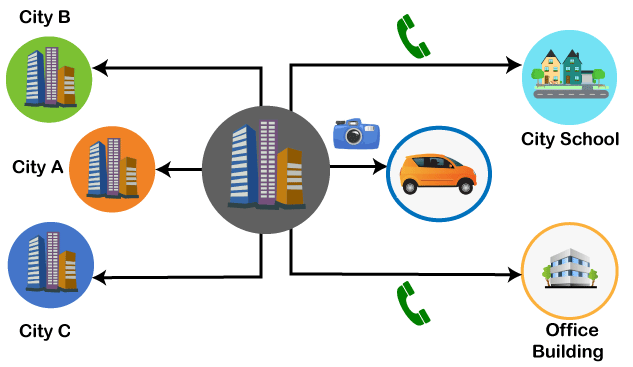

Enterprise technology needs to help organizations take action in real time. Doing this effectively means modernizing application architecture from batch processing to event-driven. Serverless computing is an event-driven architecture that abstracts infrastructure, so developers can focus on writing the application code. With serverless, application teams don’t need to worry about the complexity of maintaining, patching, supporting and paying for infrastructure that they need on an elastic basis. This makes serverless perfect as the glue to integrate services from anywhere. At TriggerMesh, we think serverless is only the beginning. The real power comes from what serverless enables. Serverless architectures allow even the largest enterprises with years or decades of legacy code to break out of the constraints of their own data centers and a single cloud. Open source, standards and specifications free enterprise developers to mash up services from on-premises and any cloud, to rapidly compose event-driven applications that support high velocity, so that you can bring new features and products to market fast.
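
To make the event-driven idea concrete, here is a minimal sketch in plain Python. It is not TriggerMesh's API or any cloud provider's; the `EventRouter` class, the `order.created` event type and both handlers are hypothetical names invented for illustration. The point is only that application code is written as small functions that react to events, while the routing (standing in here for the platform) decides when they run.

```python
from typing import Callable, Dict, List

# A handler is any function that takes an event dict and returns a result dict.
Handler = Callable[[dict], dict]


class EventRouter:
    """Hypothetical in-process stand-in for a serverless event platform."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = {}

    def on(self, event_type: str) -> Callable[[Handler], Handler]:
        """Decorator that subscribes a function to one event type."""
        def register(fn: Handler) -> Handler:
            self._handlers.setdefault(event_type, []).append(fn)
            return fn
        return register

    def dispatch(self, event: dict) -> List[dict]:
        """Run every handler subscribed to the event's type, in order."""
        return [fn(event) for fn in self._handlers.get(event["type"], [])]


router = EventRouter()


@router.on("order.created")
def reserve_stock(event: dict) -> dict:
    # Illustrative business logic only.
    return {"action": "reserve", "sku": event["data"]["sku"]}


@router.on("order.created")
def notify_customer(event: dict) -> dict:
    return {"action": "email", "to": event["data"]["email"]}


results = router.dispatch(
    {"type": "order.created", "data": {"sku": "A-1", "email": "a@example.com"}}
)
```

Each handler knows nothing about servers or about the other handler, which is what lets services from different places be glued together by events.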

Ransomware: It’s time to bring cybersecurity audits up to GDPR status

According to Check Point, the number of daily ransomware attacks worldwide has increased by half over the past three months -- close to doubling in the United States alone -- as threat actors take advantage of the operational disruption and rapid shift to home working caused by COVID-19. Ezat Dayeh, Senior Engineer Manager UK&I at Cohesity, told ZDNet in an interview that the company has seen a recent and "dramatic" increase in the volume of ransomware incidents. As more people are working from home due to COVID-19, this may have introduced new risk factors -- but the increasing sophistication of such attacks is of concern, too. "When we think about two or three years ago, when people were hit with ransomware, nine out of ten times they would basically say, 'it's definitely impacted production, we've got issues, but we can go back to our backups,' and worst-case scenario, we will just do a restore," Dayeh said. "But now, with that sophistication, the bad guys know this. Ransomware can come into a network [and] it won't do anything but it will start looking around and see what it can access on the network."

Facebook’s New Open Source Framework For Training Graph-Based ML Models

The weighted finite-state transducer (WFST) data structure is prevalent in speech recognition, natural language processing, and handwriting recognition applications. WFSTs, especially in speech recognition systems, provide a common and natural representation for hidden Markov models (HMMs), context-dependency, grammar, and pronunciation dictionaries, along with weighted determinization algorithms that optimise time and space requirements. One of the most popular WFST-based products is the Kaldi toolkit for speech recognition, which is trained to decode speech. Kaldi relies heavily on OpenFST, an open-source WFST toolkit. To understand the importance of the GTN framework for a WFST graph, consider a general speech recogniser. A speech recogniser consists of an acoustic model that predicts the letters in the speech and a language model that identifies the word that may follow. These models are represented as WFSTs and are trained separately before being combined to output the most likely transcription. It is at this juncture that the GTN library steps in to train the different models, which in turn provides better results. Before GTN, the use of the individual graphs at training time was implicit, and the graph structure needed to be hard-coded in the software.
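
The idea of combining an acoustic model and a language model expressed as WFSTs can be illustrated with a small sketch. This is plain Python, not the GTN or OpenFST API: the `WFST` class, `compose`, `best_path`, the two toy graphs and all weights are assumptions made up for the example. Weights are treated as costs that add along a path (lower is better), composition is epsilon-free, and nothing here is trained.

```python
import heapq
from typing import Dict, Hashable, List, Optional, Tuple

# An arc is (input label, output label, cost, next state).
Arc = Tuple[str, str, float, Hashable]


class WFST:
    def __init__(self, start: Hashable, finals) -> None:
        self.start = start
        self.finals = set(finals)
        self.arcs: Dict[Hashable, List[Arc]] = {}

    def add_arc(self, src, ilabel: str, olabel: str, cost: float, dst) -> None:
        self.arcs.setdefault(src, []).append((ilabel, olabel, cost, dst))


def compose(a: WFST, b: WFST) -> WFST:
    """Epsilon-free composition: a's output labels must match b's input labels."""
    start = (a.start, b.start)
    out = WFST(start, [])
    stack, seen = [start], {start}
    while stack:
        state = stack.pop()
        qa, qb = state
        if qa in a.finals and qb in b.finals:
            out.finals.add(state)
        for ilabel, mid, cost_a, next_a in a.arcs.get(qa, []):
            for mid_b, olabel, cost_b, next_b in b.arcs.get(qb, []):
                if mid == mid_b:
                    nxt = (next_a, next_b)
                    out.add_arc(state, ilabel, olabel, cost_a + cost_b, nxt)
                    if nxt not in seen:
                        seen.add(nxt)
                        stack.append(nxt)
    return out


def best_path(t: WFST) -> Optional[Tuple[float, List[str]]]:
    """Dijkstra search for the cheapest path to a final state (costs >= 0)."""
    heap = [(0.0, 0, t.start, [])]
    counter = 1  # tie-breaker so states themselves are never compared
    done = set()
    while heap:
        cost, _, state, labels = heapq.heappop(heap)
        if state in done:
            continue
        done.add(state)
        if state in t.finals:
            return cost, labels
        for _ilabel, olabel, arc_cost, nxt in t.arcs.get(state, []):
            if nxt not in done:
                heapq.heappush(heap, (cost + arc_cost, counter, nxt, labels + [olabel]))
                counter += 1
    return None


# Toy acoustic model: audio frames f1, f2 map to letters; it slightly prefers "y".
acoustic = WFST(0, [2])
acoustic.add_arc(0, "f1", "h", 0.5, 1)
acoustic.add_arc(1, "f2", "i", 2.0, 2)
acoustic.add_arc(1, "f2", "y", 1.0, 2)

# Toy language model: accepts letter sequences and strongly prefers "hi".
language = WFST(0, [2])
language.add_arc(0, "h", "h", 0.0, 1)
language.add_arc(1, "i", "i", 0.5, 2)
language.add_arc(1, "y", "y", 3.0, 2)

acoustic_only = best_path(acoustic)
combined = best_path(compose(acoustic, language))
```

On its own the toy acoustic graph picks "hy"; composed with the language graph, the cheapest path becomes "hi", which is the "combine to output the most likely transcription" step in miniature.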

What will quantum computing mean for business?

There are four main areas that are already a focus of attention. Cybersecurity is the obvious first one, because although quantum computers could render existing encryption worthless, they can also be used to produce more secure algorithms, random number generators and keys that can’t be defeated by their own processing prowess. The other areas revolve around the capacity quantum computing has for comparing lots of different possibilities and finding the optimum, or best fit, among them. For example, in financial services this could provide portfolio optimisation, high-frequency trading advantages, and more efficient fraud detection. Goldman Sachs, RBS and Citigroup are already recruiting to take advantage of these possibilities. Logistics is another obvious beneficiary. Traffic management, delivery route optimisation, and other traffic-related problems are finding potential quantum solutions, with Daimler and Honda already aiming to acquire quantum computers for these kinds of activities. Similarly, manufacturing, pharmaceuticals, and materials science can optimise their processes, such as the manufacturing supply chain. Existing quantum computers with just 50 qubits are delivering good results for applications such as protein folding and new drug formula discovery.

Windows “Ping of Death” bug revealed – patch now!

Interestingly, the bug you see triggering in the video above that provokes the BSoD is caused by a buffer overflow. TCPIP.SYS doesn’t correctly check the size of one of the data fields that can optionally appear in IPv6 ICMP packets, so you can shove too much data at it and corrupt the system stack. Bang! Down it goes. Two decades ago, almost any stack-based buffer overflow on Windows could be used not only to crash a system, but also, with a bit of care and planning, to take over the processor’s flow of execution and divert it into a program fragment – known as shellcode – of your own choosing. In other words, Windows stack overflows in networking software almost always used to lead to so-called remote code execution exploits, where attackers could trigger the bug from afar with specially crafted network traffic, run code of their own choosing, and thereby inject malware without you even being aware. But numerous security improvements in Windows, from Windows XP SP3 onwards, have made stack overflows harder and harder to exploit, and these days they can often only be used to force crashes, not to take over completely. Nevertheless, a malcontent on your network who could crash any computers at will, servers and laptops alike, could cause plenty of harm just through what’s known as a denial of service attack, especially because recovering from each crash requires a complete reboot.
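
The class of mistake described above, trusting a size field inside a packet, can be shown with a short defensive parser. This is an illustrative sketch only and says nothing about how TCPIP.SYS is actually written. It assumes the type-length-value layout that Neighbor Discovery options use inside ICMPv6 messages, where the second byte gives the option's total length in units of 8 bytes and a length of zero is invalid; the function name `parse_nd_options` is hypothetical.

```python
from typing import List, Tuple


def parse_nd_options(buf: bytes) -> List[Tuple[int, bytes]]:
    """Split an options area into (type, payload) pairs, checking every length."""
    options: List[Tuple[int, bytes]] = []
    offset = 0
    while offset < len(buf):
        # Need at least the type byte and the length byte.
        if len(buf) - offset < 2:
            raise ValueError("truncated option header")
        opt_type = buf[offset]
        length_units = buf[offset + 1]
        # A zero length would make the loop spin forever; reject it.
        if length_units == 0:
            raise ValueError("option length of zero is invalid")
        total = length_units * 8  # length counts the 2 header bytes too
        # The check that matters: never read past what was actually received.
        if offset + total > len(buf):
            raise ValueError("option claims more data than the packet holds")
        options.append((opt_type, buf[offset + 2 : offset + total]))
        offset += total
    return options


# A well-formed option: type 1, length 1 (8 bytes), carrying a 6-byte address.
mac = bytes.fromhex("001122334455")
parsed = parse_nd_options(bytes([1, 1]) + mac)

# A malformed option: claims 4 * 8 = 32 bytes but only 8 bytes are present.
try:
    parse_nd_options(bytes([25, 4]) + bytes(6))
    rejected = False
except ValueError:
    rejected = True
```

In a memory-safe language the missing check would surface as an error rather than stack corruption, but the validation logic a parser needs is the same.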

The CISO’s newest responsibility: Building trust

As part of this evolution, CISOs have had to build confidence among all stakeholders (customers, partners, employees, board members and other executives) that they and their security teams have the organization’s best interests in mind when it comes to cybersecurity decisions. ... “Things are all upside down now. No one is working the same, and there’s a lot of discomfort out there. So as a security person you have to build that trust. It’s part of your job, and it’s what you get paid to do,” says Gene Fredriksen, a veteran security executive now serving as executive director of the National Credit Union Information Sharing & Analysis Organization (NCU-ISAO) and cybersecurity principal for Pure IT Credit Union Services. ... The CISO’s capacity to cultivate trust is more than an esoteric discussion or business-school exercise: Experts say it’s an essential element for any CISO who wants to be successful in the role because it enables him or her to enact the policies, procedures and technologies needed to secure the organization and, thus, prove to others, including customers, that their interactions with the company are safe.

Data Analytics Without a Plan is Like Panning for Gold

Of the many lessons COVID-19 has to teach, data analysis is one of the least appreciated. A lack of quality data has led to unanswerable questions about the availability of ventilators, hospital beds, and personal protective equipment. Poor data collection has hindered contact tracing efforts. In a pandemic, collecting the right data and applying it in the right way can save lives. A hospital in Boston was lauded for using a forecasting model to anticipate how many bags of blood it would need. Singapore, one of the countries with the slowest spread of COVID-19, uses blockchain and analytics to reduce exposures through contact tracing. Many of the economy’s heavy hitters, like Amazon and Facebook, were designed from the outset to apply data. If a shopper looks repeatedly at an item on Amazon, the site will show similar items, adjust the price, or offer promotions to prod a purchase. Facebook’s Cambridge Analytica scandal demonstrates what can happen when data is applied indiscriminately. People felt violated by the depth of information the company was able to glean from their internet use.

Quote for the day:

"Leaders lead when they take positions, when they connect with their tribes, and when they help the tribe connect to itself." -- Seth Godin