Data: The Fabric of Developers’ Lives

Storage-as-a-Service—we hardly knew about it. Thanks in large part to containers, which offer exceptional scalability, simplicity and high availability, the speed of application development has increased dramatically. Developers need to be able to quickly provision their own data, in just the right amounts, to match that velocity. And, like containers, that data needs to be portable. Provisioning quickly means no more going through storage administrators to get the services they need, which can be a cumbersome and time-consuming process. Solutions like Kubernetes’ on-demand clusters enable developers to procure the data they need when they need it. The abstraction layer provided by a data fabric can empower developers even further. They can write their own APIs, provision data services as needed and move that data between clouds with ease. This is particularly important when dealing with cloud providers that offer different services. Sometimes a developer may need a service that exists in one cloud but not another. It’s critical to have an underlying storage infrastructure that enables applications and their data to be transferred as needs require.

Remember when open source was fun?

When Daniel Stenberg set out to make currency exchange rates available to IRC users, he wasn’t trying to “do open source.” It was 1996 and the term “open source” hadn’t even been coined yet (that came in February 1998). No, he just wanted to build a little utility (“how hard can it be?”), so he started from an existing tool (httpget), made some adjustments, and released what would eventually become known as cURL, a way to transfer data using a variety of protocols. It wasn’t Stenberg’s full-time job, or even his part-time job. “It was completely a side thing,” he says in an interview. “I did it for fun.” Stenberg’s side project has lasted for over 20 years, attracted hundreds of contributors, and has a billion users. Yes, billion with a B. Some of those users contact him with urgent requests to fix this or that bug. Their bosses are angry and they need help RIGHT NOW. “They are getting paid to use my stuff that I do at home without getting paid,” Stenberg notes. Is he annoyed? No. “I do it because it’s fun, right? So I’ve always enjoyed it. And that’s why I still do it.”

New research by data protection and management software supplier Veritas has found that 5.8 million tonnes of carbon dioxide will be pumped into the atmosphere this year as a result of using storage systems to house and process dark data. Veritas derived the figure by mapping industry data on power consumption from data storage, industry data on emissions from datacentres and its own research. On average, 52% of all data stored by organisations worldwide is likely to be dark data, according to Veritas. With the amount of data growing from 33 zettabytes in 2018 to 175 zettabytes by 2025, there will be 91 zettabytes of dark data in five years’ time – over four times the volume of dark data today. Ravi Rajendran, vice-president and managing director for the Asia South region at Veritas Technologies, said that although companies are trying to reduce their carbon footprint, dark data is often neglected. And with dark data producing more carbon dioxide than 80 countries do individually, Rajendran called for organisations to start taking it seriously.
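The 91-zettabyte figure follows from applying the 52% dark-data share to the 175-zettabyte forecast for 2025. A minimal sketch of that arithmetic is below; the function name `dark_data_zb` is made up for illustration, and applying the same 52% share to the 2018 total is an assumption made here for comparison, not something the excerpt states.

```python
# Figures quoted in the excerpt above.
DARK_SHARE = 0.52      # share of stored data estimated to be dark
TOTAL_2018_ZB = 33     # zettabytes of data worldwide in 2018
TOTAL_2025_ZB = 175    # forecast zettabytes of data by 2025


def dark_data_zb(total_zb: float, dark_share: float = DARK_SHARE) -> float:
    """Estimate dark data volume (in zettabytes) as a fixed share of all data."""
    return total_zb * dark_share


dark_2025 = dark_data_zb(TOTAL_2025_ZB)
# Assumption: the 52% share also held in 2018 (not stated in the excerpt).
dark_2018 = dark_data_zb(TOTAL_2018_ZB)

print(f"Estimated dark data in 2025: {dark_2025:.0f} ZB")
print(f"Estimated dark data in 2018 (assumed same share): {dark_2018:.2f} ZB")
```

The excerpt's "over four times" comparison is against today's volume, which is not given, so the sketch does not try to reproduce that ratio.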

How different generations approach remote work

Maybe it's more millennials that are really pushing the work from home, but you would think it would be more of your generation. I say that I'm Gen X. Veronica and I both are, of course. But, you would think that it'd be the younger ones that would be all for working from home, to have that freedom. ... When I'm in an office, as you both know, I tend to be a bit of a chatterbox, so it's good for me to have that alone time to really lock things down. But it's different for people. But, Veronica, you and I would be able to speak on this for Gen X, at least. In the research that I saw, NRG found that most Gen X-ers enjoyed working from home because they were really comfortable, and they liked that independence. And they also liked being around their families, and having that quality time, and felt a little more relaxed. Would you say that's accurate? ... You can get up and take a break whenever, and reset your brain to shift tasks, or to find inspiration if you're stuck on something. I think if you can close the door or close your family off, it's OK. My kids are older now, but if they were little, it would be so hard to work from home now. I have an 11-year-old and a 15-year-old, so they can make their own lunch, and walk the dog, and be self-sufficient while I'm down here.

Netgear is ahead of the game with its WiFi 6 router portfolio, and it is paying off as the company sees a surge in home network upgrades. The catch for Netgear is that its supply chain, sales channels and markets have all been upended by the COVID-19 pandemic. CEO Patrick Lo outlined the moving parts of Netgear's first quarter: "We saw two distinct phenomena during the COVID-19 pandemic. Whenever a shelter-in-place lockdown was declared, business activities fell and demand for our SMB products dropped significantly. At the same time, consumers are quickly finding out that high performance WiFi at home is a necessity and are rushing to upgrade their home WiFi, driving upticks in our consumer WiFi and mobile hotspot sales. We also saw significant channel shift from physical retail channel purchases to online purchases which put strain on the logistics of some of our online sales partners." On an earnings conference call, it became clear that Netgear had a lot to navigate as it pulled its guidance due to COVID-19. The company reported a first quarter net loss of $4.17 million on revenue of $229.96 million, down from $249 million a year ago. On a non-GAAP basis, Netgear's earnings of 21 cents a share were a nickel better than estimates.

Researchers say deep learning will power 5G and 6G ‘cognitive radios’

For decades, amateur two-way radio operators have communicated across entire continents by choosing the right radio frequency at the right time of day, a luxury made possible by having relatively few users and devices sharing the airwaves. But as cellular radios multiply in both phones and Internet of Things devices, finding interference-free frequencies is becoming more difficult, so researchers are planning to use deep learning to create cognitive radios that instantly adjust their radio frequencies to achieve optimal performance. As explained by researchers with Northeastern University’s Institute for the Wireless Internet of Things, the increasing varieties and densities of cellular IoT devices are creating new challenges for wireless network optimization; a given swath of radio frequencies may be shared by a hundred small radios designed to operate in the same general area, each with individual signaling characteristics and variations in adjusting to changed conditions. The sheer number of devices reduces the efficacy of fixed mathematical models when predicting what spectrum fragments may be free at a given split second.

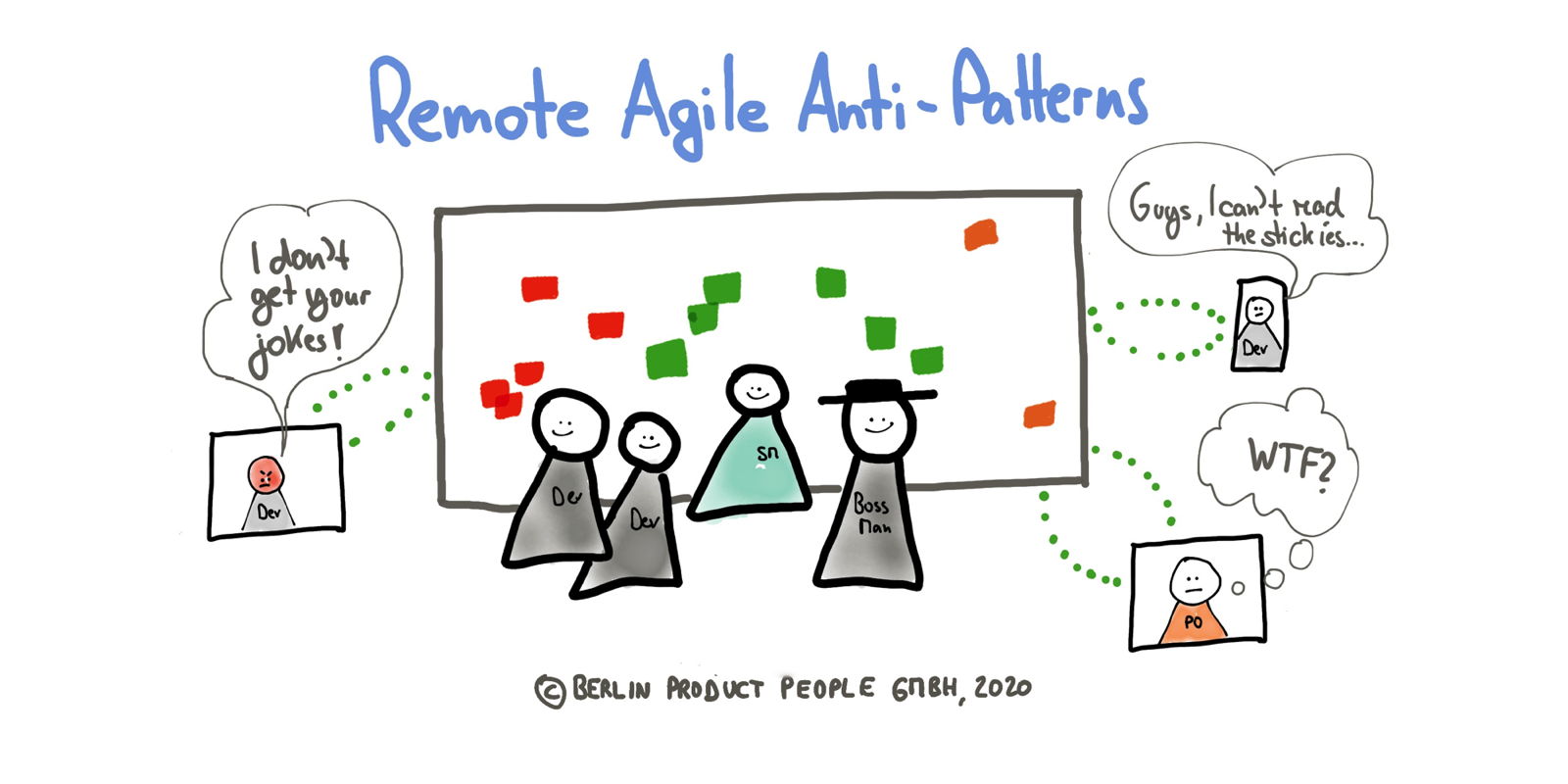

Outsourced DevOps brings benefits, and risks, to IT shops

When IT teams outsource DevOps planning to a third-party service provider, it only exacerbates existing planning issues. Another option is to hire a contract Scrum Master or product manager with DevOps experience to work with the in-house teams. Either way, proceed with an end game of knowledge transfer to build in-house planning expertise. Depending on the organization's attitude toward contractors, the addition of an outside contractor to work on planning can bring some cultural challenges. Some organizations treat contractors as valued members of the team, while others treat them as outsiders -- which makes it challenging to have a contractor in any subject matter expert position. Planning tools, however, are ripe for outsourcing. For example, if an organization lacks the in-house expertise to implement and maintain Atlassian Jira or another planning tool, it can outsource that platform and use a managed version. While it's more common to outsource the build phase of DevOps than it is the planning phase, it still has risks.

Tech Leaders Map Out Post-Pandemic Return to Workplace

Businesses will be turning to enterprise technology to smooth out the process of getting employees back to the workplace in the wake of the coronavirus pandemic, according to a report by Forrester Research. Technology leaders say safety will be a top priority. The information-technology research firm’s report lays out an early-stage road map for IT executives preparing to reopen corporate offices—a process that will vary by industry, but for most businesses will involve multiple stages. Chief information officers and their teams will likely be in the first wave of employees returning to the job site, said Andrew Hewitt, a Forrester analyst serving infrastructure and operations professionals. He said their initial task will be to develop a strategy for keeping employee tech tools—including PCs, mobile devices, monitors, keyboards and mice—germ-free without damaging them. “IT teams will need to have a staging area that’s outside of the front door of the office where employees can bring their home technology in and sanitize it,” Mr. Hewitt said.

Five Attributes of a Great DevOps Platform

Culture plays a significant role in establishing the guidelines for embracing DevOps in any organization. Through DevOps culture, companies seek to bring dev and ops teams into harmony to promote collaboration, automation, process improvements, and continuous, iterative development and deployment methodologies. But above everything else, a sound DevOps culture fundamentally solves one of IT’s biggest people problems: bridging the gap between dev and ops teams to get them to stop working in silos and share common goals. According to a Gartner estimate, DevOps efforts fail 90% of the time when infrastructure and operations teams try to drive a DevOps initiative without nurturing a cultural shift in the first place. It is not just about efficient tools or skilled experts; it is about the behavioral changes and mentality necessary to effect cultural change. Hence, it is important for firms to consider their company culture before selecting a DevOps tool for their development work.

Use tokens for microservices authentication and authorization

A security token service (STS) enables clients to obtain the credentials they need to access multiple services that live across distributed environments. It issues digital security tokens that stay with users from the beginning of their session and continuously validate their permission for each service they call. An STS can also reissue, exchange and cancel security tokens as needed. The STS must connect with an enterprise user directory that contains all the details about user roles and responsibilities. This directory, and any connection made to it, should be properly secured as well; otherwise, users could elevate their permissions just by editing policies on their own. Consider segmenting user access policies based on roles and activities. For instance, identify the individuals who have administrative capabilities. Or, you might limit a developer's access permissions to only include the services they are supposed to work on. ... Not all microservices permission and security checks are based around a human user.
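To make the issue, validate and cancel flow described above more concrete, here is a minimal sketch in Python using only the standard library. It is an illustration under stated assumptions, not how any particular STS product works: the `USER_DIRECTORY` dictionary stands in for the enterprise user directory, and `SECRET_KEY`, `issue_token`, `validate_token` and `cancel_token` are hypothetical names. A production system would more likely rely on an established token format and managed signing keys.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

# Hypothetical signing key; a real STS would use securely managed keys.
SECRET_KEY = b"demo-only-secret"

# Hypothetical stand-in for the enterprise user directory (roles and allowed services).
USER_DIRECTORY = {
    "alice": {"role": "admin", "services": ["billing", "inventory", "orders"]},
    "bob": {"role": "developer", "services": ["orders"]},
}

# Tokens that have been cancelled before their expiry time.
REVOKED_TOKENS = set()


def _sign(payload: bytes) -> str:
    """Return an HMAC-SHA256 signature (hex) over the encoded claims."""
    return hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()


def issue_token(user: str, ttl_seconds: int = 300, now: Optional[float] = None) -> str:
    """Issue a signed token carrying the user's role and permitted services."""
    entry = USER_DIRECTORY[user]
    issued_at = time.time() if now is None else now
    claims = {
        "sub": user,
        "role": entry["role"],
        "services": entry["services"],
        "exp": issued_at + ttl_seconds,
    }
    payload = base64.urlsafe_b64encode(json.dumps(claims).encode("utf-8"))
    return payload.decode("ascii") + "." + _sign(payload)


def validate_token(token: str, service: str, now: Optional[float] = None) -> bool:
    """Check revocation, signature, expiry and per-service permission for one call."""
    if token in REVOKED_TOKENS:
        return False
    payload_text, _, signature = token.partition(".")
    payload = payload_text.encode("ascii", errors="ignore")
    if not hmac.compare_digest(_sign(payload), signature):
        return False  # token was altered or not issued by this service
    claims = json.loads(base64.urlsafe_b64decode(payload))
    current = time.time() if now is None else now
    if current >= claims["exp"]:
        return False  # token has expired
    return service in claims["services"]


def cancel_token(token: str) -> None:
    """Cancel a token so that later validation attempts fail."""
    REVOKED_TOKENS.add(token)
```

In this sketch, a token issued for `bob` would validate for the `orders` service but not for `billing`, which mirrors the idea of limiting a developer's permissions to the services they are supposed to work on.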

Quote for the day:

"I'm not crazy about reality, but it's still the only place to get a decent meal." -- Groucho Marx