Quote for the day:

"Knowledge is being aware of what you can do. Wisdom is knowing when not to do it." -- Anonymous

Consumer rights group: Why a 10-year ban on AI regulation will harm Americans

AI is a tool that can be used for significant good, but it can be, and already
has been, used for fraud and abuse, as well as in ways that can cause real harm,
both intentional and unintentional, as was thoroughly discussed in the House’s
own bipartisan AI Task Force Report. These harms range from affecting
employment opportunities and workers’ rights to threatening the accuracy of
medical diagnoses and criminal sentencing, and many current laws have gaps and
loopholes that leave AI uses in gray areas. Refusing to enact reasonable
regulations places AI developers and deployers in a lawless and unaccountable
zone, which will ultimately undermine public trust in the continued development
and use of AI. ... Proponents of the 10-year moratorium have argued that it
would prevent a patchwork of regulations that could hinder the development of
these technologies, and that Congress is the proper body to put rules in place.
But Congress has so far refused to establish such a framework; instead, it is
proposing to prevent any protections at any level of government, completely
abdicating its responsibility to address the serious harms we know AI can cause.
It is a gift to the largest technology companies at the expense of users, small
or large, who increasingly rely on their services, as well as the American
public, who will be subject to unaccountable and inscrutable systems.

Putting agentic AI to work in Firebase Studio

An AI assistant is like power steering. The programmer, the driver, remains in
control, and the tool magnifies that control. The developer types some code, and
the assistant completes the function, speeding up the process. The next logical
step is to empower the assistant to take action: to run tests, debug code, mock
up a UI, or perform some other task on its own. In Firebase Studio, we get a
seat in a hosted environment that lets us enter prompts directing the agent to
take meaningful action. ... Obviously, we are a long way off from a
non-programmer frolicking around in Firebase Studio, or any similar AI-powered
development environment, and building complex applications. Google Cloud
Platform, Gemini, and Firebase Studio are best-in-class tools, yet these kinds
of limits apply to all agentic AI development systems. Still, I would in no wise
want to give up my Gemini assistant when coding. It takes a huge amount of busy
work off my shoulders and brings much more into scope by letting me focus on the
larger picture. I wonder what the path will look like, and how long it will take
for Firebase Studio and similar tools to mature. It seems clear that something
along these lines, where the AI is framed in a tool that lets it take action, is
part of the future. It may take longer than AI enthusiasts predict. It may
never fully come to fruition in the way we envision.

Edge AI + Intelligence Hub: A Match in the Making

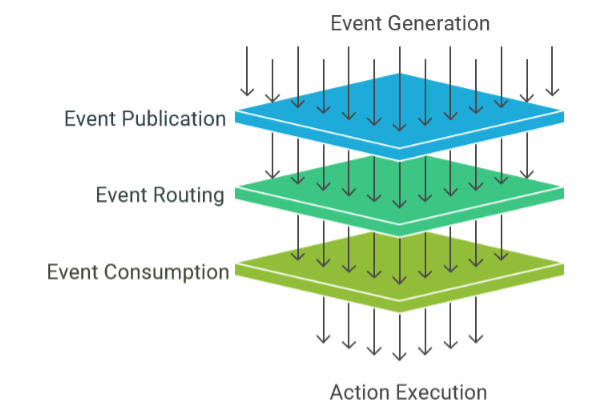

The shop floor looks nothing like a data lake. There is telemetry data from
machines, historical data, MES data in SQL, some random CSV files, and most of
it lacks context. Companies that realize this, or already have an Industrial
DataOps strategy, move quickly beyond these issues. Companies that don’t tend to
end up creating a solution that works with only telemetry data (for example)
and then find out they need other data. Or worse, when they get something
working in the first factory, they find out factories 2, 3, and 4 have different
technology stacks. ... In comes DataOps (again). Cloud AI and edge AI have the
same problems with industrial data: they need access to contextualized
information across many systems. The only difference is that there is no data
lake in the factory, but that’s OK. DataOps can leave the data in the source
systems and expose it over APIs, allowing edge AI to access the data needed for
specific tasks. But just as in IT, what happens if OT doesn’t use DataOps? It’s
the same set of issues. If you try to integrate AI directly with data from your
SCADA, historian, or even UNS/MQTT, you’ll limit the data and context the agent
has access to. SCADA systems and historians hold only telemetry data. UNS/MQTT
is report-by-exception, whereas AI is request/response-based, so the two can’t
be integrated directly. But again, I digress. Use DataOps.
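To make the DataOps argument concrete, here is a minimal Python sketch, under loudly stated assumptions, of a contextualization layer sitting between report-by-exception data and a request/response consumer. A last-value cache absorbs change events (as an MQTT/UNS subscriber would), and a query function joins the latest telemetry with MES work-order context on demand. The class `LastValueCache`, the function `contextualized_view`, the topic naming, and the sample MES record are all hypothetical; no real broker, historian, or MES is contacted.

```python
import json
from dataclasses import dataclass, field
from typing import Any


@dataclass
class LastValueCache:
    """Hypothetical cache holding the latest value per topic, so that
    report-by-exception updates can be answered request/response style."""
    values: dict[str, Any] = field(default_factory=dict)

    def on_update(self, topic: str, value: Any) -> None:
        # Called whenever a change is reported (report-by-exception).
        self.values[topic] = value

    def get(self, topic: str, default: Any = None) -> Any:
        return self.values.get(topic, default)


# Hypothetical MES context: which work order and product each line is running.
# A real DataOps layer would read this from the MES SQL database instead.
MES_WORK_ORDERS = {
    "line1": {"work_order": "WO-1001", "product": "bracket-A", "target_rate_per_hr": 120},
}


def contextualized_view(line: str, cache: LastValueCache) -> dict[str, Any]:
    """Join live telemetry with MES context into one payload an AI agent can request."""
    context = MES_WORK_ORDERS.get(line, {})
    rate = cache.get(f"plant/{line}/rate_per_hr")
    target = context.get("target_rate_per_hr")
    return {
        "line": line,
        **context,
        "rate_per_hr": rate,
        # Only meaningful when both telemetry and context are available.
        "below_target": rate is not None and target is not None and rate < target,
    }


if __name__ == "__main__":
    cache = LastValueCache()
    cache.on_update("plant/line1/rate_per_hr", 95)  # a change-of-value event arrives
    print(json.dumps(contextualized_view("line1", cache)))
```

A real Industrial DataOps layer would expose something like `contextualized_view` over an API rather than a local function call; the point is only that the agent asks one question and receives telemetry and context together.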

AI-driven threats prompt IT leaders to rethink hybrid cloud security

Public cloud security risks are also undergoing renewed assessment. While the
public cloud was widely adopted during the post-pandemic shift to digital
operations, it is increasingly seen as a source of risk. According to the
survey, 70 percent of Security and IT leaders now see the public cloud as a
greater risk than any other environment. As a result, an equivalent proportion
are actively considering moving data back from public to private cloud due to
security concerns, and 54 percent are reluctant to use AI solutions in the
public cloud, citing concerns about intellectual property protection. The
findings also emphasise the need for improved visibility. The rising
sophistication of cyberattacks has exposed the limitations of existing security
tools: more than half (55 percent) of Security and IT leaders reported lacking
confidence in their current toolsets' ability to detect breaches, mainly due to
insufficient visibility. Accordingly, 64 percent say their primary objective for
the next year is real-time threat monitoring through comprehensive visibility
into all data in motion. David Land, Vice President, APAC at Gigamon,
commented: "Security teams are struggling to keep pace with the speed of AI
adoption and the growing complexity and vulnerability of public cloud
environments."

Taming the Hacker Storm: Why Millions in Cybersecurity Spending Isn’t Enough

The key to taming the hacker storm rests on the core principle of trust: that
the individual or company you are dealing with is who or what they claim to be
and behaves accordingly. Establishing a high-trust environment can go a long
way toward thwarting hackers. ... For a pervasive, selectively trusted
ecosystem, an organization requires something beyond trusted user IDs. A hacker
can compromise a user’s device and steal the trusted user ID, making
identity-based trust inadequate. A trust-verified device assures that the device
is secure and can be trusted. But then again, a hacker who steals a user’s
identity and password can also fake the user’s device. It therefore becomes
necessary to confirm the device’s identity, that is, whether or not it is the
same device. The best way to ensure the device is secure and trustworthy is to
use the device identity that its manufacturer designed and programmed into its
TPM or Secure Enclave chip. ... Trusted actions are critical to ensuring a
secure and pervasive trust environment. Different actions require different
levels of authentication, generating different levels of trust, as already
defined by the application vendor or service provider. An action considered
high-risk requires stronger authentication; scaling authentication to the risk
of each action is known as dynamic authentication.
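The idea of trusted actions can be sketched as a policy table that maps each action to a minimum assurance level, with step-up authentication when a session falls short. The Python below is a hypothetical illustration: the action names, the assurance ladder, and the `authorize` function are invented for this example, and `device_attested` merely stands in for a successful check of a TPM- or Secure Enclave-backed device identity, which is not implemented here.

```python
from enum import IntEnum


class Assurance(IntEnum):
    """Hypothetical authentication assurance levels, weakest to strongest."""
    NONE = 0
    PASSWORD = 1
    PASSWORD_AND_DEVICE = 2            # password plus a hardware-bound device identity
    PASSWORD_DEVICE_AND_BIOMETRIC = 3


# Hypothetical policy: the minimum assurance each action requires.
# In practice the application vendor or service provider defines this table.
REQUIRED_ASSURANCE = {
    "view_balance": Assurance.PASSWORD,
    "change_email": Assurance.PASSWORD_AND_DEVICE,
    "wire_transfer": Assurance.PASSWORD_DEVICE_AND_BIOMETRIC,
}


def current_assurance(password_ok: bool, device_attested: bool, biometric_ok: bool) -> Assurance:
    """Derive the assurance level a session has reached from the factors verified so far."""
    if not password_ok:
        return Assurance.NONE
    if not device_attested:
        return Assurance.PASSWORD
    if not biometric_ok:
        return Assurance.PASSWORD_AND_DEVICE
    return Assurance.PASSWORD_DEVICE_AND_BIOMETRIC


def authorize(action: str, password_ok: bool, device_attested: bool, biometric_ok: bool) -> str:
    """Allow the action, or request step-up authentication if assurance is too low."""
    # Unknown actions default to the strictest requirement.
    required = REQUIRED_ASSURANCE.get(action, Assurance.PASSWORD_DEVICE_AND_BIOMETRIC)
    have = current_assurance(password_ok, device_attested, biometric_ok)
    if have >= required:
        return "allow"
    return f"step-up: need {required.name}"
```

For example, a session with a verified password and an attested device can call `authorize("change_email", True, True, False)` and be allowed, while `authorize("wire_transfer", True, True, False)` asks for the biometric step. Defaulting unknown actions to the strictest level is a deliberate fail-closed choice.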

AWS clamping down on cloud capacity swapping; here’s what IT buyers need to know

For enterprises that sourced discounted cloud resources through a broker or
value-added reseller (VAR), the arbitrage window shuts, Brunkard noted.
Enterprises should expect a “modest price bump” on steady-state workloads and a
“brief scramble” to unwind pooled commitments. ... On the other hand, companies
that buy their own Reserved Instances (RIs) or Savings Plans (SPs), or negotiate
volume deals through AWS’s Enterprise Discount Program (EDP), shouldn’t be
affected, he said. Nothing changes except that pricing is now baselined. To get
ahead of the change, organizations should audit their exposure and ask their
managed service providers (MSPs) which commitments are pooled and when they
renew, Brunkard advised. ... Ultimately, enterprises that have relied on vendor
flexibility to manage overcommitment could face hits to gross margins, budget
overruns, and a spike in “finance-engineering misalignment,” Barrow said. Those
whose vendor models are based on RI and SP reallocation tactics will see their
risk profile “changed overnight,” he said. New commitments will now essentially
be non-cancellable financial obligations, and if cloud usage dips or pivots,
those enterprises will be exposed. Many vendors won’t be able to offer
protection as they have in the past.
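Brunkard's advice to audit exposure can start with a simple inventory pass. The sketch below assumes a hypothetical export of commitments (the `Commitment` fields, including a `pooled_by_msp` flag you would have to obtain from your MSP) and flags pooled commitments, commitments renewing within a chosen horizon, and committed hourly spend that expected usage would not cover. It does not call any AWS API; the field names and the 90-day default horizon are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Commitment:
    """One RI or Savings Plan commitment from a hypothetical inventory export."""
    commitment_id: str
    kind: str                 # "RI" or "SP"
    hourly_commit_usd: float  # committed spend per hour
    end_date: date
    pooled_by_msp: bool       # True if the MSP/VAR shares it across customers


def audit(commitments: list[Commitment], expected_hourly_usage_usd: float,
          today: date, horizon_days: int = 90) -> dict:
    """Flag pooled commitments, upcoming renewals, and spend stranded if usage dips."""
    horizon = today + timedelta(days=horizon_days)
    total_commit = sum(c.hourly_commit_usd for c in commitments)
    return {
        "pooled": [c.commitment_id for c in commitments if c.pooled_by_msp],
        "renewing_soon": [c.commitment_id for c in commitments
                          if today <= c.end_date <= horizon],
        # Committed dollars per hour not covered by expected usage: under the new
        # rules this is effectively a non-cancellable cost.
        "stranded_hourly_usd": max(0.0, total_commit - expected_hourly_usage_usd),
    }
```

For instance, a pooled $10/hour RI ending in two months plus an unpooled $5/hour Savings Plan, against $12/hour of expected usage, would surface the RI as both pooled and renewing soon, with $3/hour of stranded commitment.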

The new C-Suite ally: Generative AI

While traditional GenAI applications focus on structured datasets, a
significant frontier remains largely untapped: the vast swathes of unstructured
"dark data" sitting in contracts, credit memos, regulatory reports, and risk
assessments. Aashish Mehta, Founder and CEO of nRoad, emphasizes this critical
gap. "Most strategic decisions rely on data, but the reality is that a lot of
that data sits in unstructured formats," he explained. nRoad’s platform, CONVUS,
addresses this by transforming unstructured content into structured, contextual
insights. ... Beyond risk management, Broadridge’s OpsGPT automates
time-intensive compliance tasks, offers multilingual capabilities, and
eliminates the need for coding through intuitive design. Importantly, Broadridge
has embedded a robust governance framework around all AI initiatives, ensuring
security, regulatory compliance, and transparency. Trustworthiness is central to
Broadridge’s approach. "We adopt a multi-layered governance framework grounded
in data protection, informed consent, model accuracy, and regulatory
compliance," Seshagiri explained. ... Despite the enthusiasm, CxOs remain
cautious about overreliance on GenAI outputs. Concerns around model bias,
hallucination, and explainability persist. Many leaders are putting guardrails
in place: enforcing human-in-the-loop systems, regular model audits, and
ethical AI use policies.

Building a Proactive Defence Through Industry Collaboration

Trusted collaboration, whether through Information Sharing and Analysis Centres
(ISACs), government agencies, or private-sector partnerships, is a highly
effective way to enhance the defensive posture of all participating
organisations. For this to work, however, organisations will need to establish
operationally secure, real-time communication channels that support the rapid
sharing of threat and defence intelligence. In parallel, the community will
also need to establish processes to efficiently disseminate indicators of
compromise (IoCs) and tactics, techniques and procedures (TTPs), backed up with
best-practice information and incident reports. These collective defence
communities can also leverage the centralised cyber fusion centre model, which
brings together all relevant security functions (threat intelligence, security
automation, threat response, security orchestration and incident response) in a
truly cohesive way. By providing an integrated platform for exchanging
information among multiple security functions, today’s next-generation cyber
fusion centres enable organisations to leverage threat intelligence, identify
threats in real time, and take advantage of automated intelligence sharing
within and beyond organisational boundaries.
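The passage does not name a sharing format, but one widely used open standard for exchanging IoCs is OASIS STIX 2.1. As an assumption-labeled sketch, the Python below builds a minimal STIX 2.1 Indicator for a malicious IPv4 address and wraps it in a bundle ready to send to partners. The transport (for example, a TAXII server) is out of scope, and the helper names `make_ipv4_indicator` and `make_bundle` are hypothetical.

```python
import ipaddress
import json
import uuid
from datetime import datetime, timezone


def _stix_timestamp() -> str:
    """Current UTC time in the RFC 3339 form STIX uses, e.g. 2025-05-21T10:00:00.123Z."""
    return datetime.now(timezone.utc).isoformat(timespec="milliseconds").replace("+00:00", "Z")


def make_ipv4_indicator(ip: str, name: str) -> dict:
    """Build a minimal STIX 2.1 Indicator object for a malicious IPv4 address."""
    ipaddress.IPv4Address(ip)  # raises ValueError on malformed input, keeping the pattern well-formed
    ts = _stix_timestamp()
    return {
        "type": "indicator",
        "spec_version": "2.1",
        "id": f"indicator--{uuid.uuid4()}",
        "created": ts,
        "modified": ts,
        "name": name,
        "indicator_types": ["malicious-activity"],
        "pattern": f"[ipv4-addr:value = '{ip}']",
        "pattern_type": "stix",
        "valid_from": ts,
    }


def make_bundle(objects: list[dict]) -> str:
    """Wrap STIX objects in a bundle and serialize it for sharing with partners."""
    return json.dumps({"type": "bundle", "id": f"bundle--{uuid.uuid4()}", "objects": objects}, indent=2)
```

For example, `make_bundle([make_ipv4_indicator("198.51.100.7", "Suspected C2 server")])` yields a bundle containing one indicator whose pattern is `[ipv4-addr:value = '198.51.100.7']` (an address from a documentation-only range). TTPs would be shared as other STIX object types, such as Attack Pattern, which this sketch does not cover.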

3 Powerful Ways AI is Supercharging Cloud Threat Detection

AI’s strength lies in pattern recognition across vast datasets. By analysing
historical and real-time data, AI can differentiate between benign anomalies
and true threats, improving the signal-to-noise ratio for security teams. This
means fewer false positives and more confidence when an alert does sound. ...
When a security incident strikes, every second counts. Historically,
responding to an incident has involved significant human effort: analysts must
comb through alerts, correlate logs, identify the root cause, and manually
contain the threat. This approach is slow, error-prone, and doesn’t scale
well. It’s not uncommon for manual incident investigations to stretch over
hours or days. Meanwhile, the damage (data theft, service disruption)
continues to accrue. Human responders also face cognitive overload during
crises, juggling tasks like notifying stakeholders, documenting events, and
actually fixing the problem. ... It’s important to note that AI isn’t about
eliminating the need for human experts but rather about augmenting their
capabilities. By taking over initial investigation steps and mundane tasks, AI
frees up human analysts to focus on strategic decision-making and complex
threats. Security teams can then spend time on thorough analysis of
significant incidents, threat hunting, and improving security posture, instead
of constant firefighting.
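As a deliberately simple stand-in for the pattern recognition described above (not the machine-learning models the article has in mind), the Python below scores a new observation against a historical baseline using a z-score and raises an alert only for large deviations. Ordinary fluctuation stays quiet, which is the signal-to-noise idea in miniature. The metric, the default threshold of 3.0, and the function name `zscore_alert` are assumptions for illustration.

```python
from statistics import mean, stdev


def zscore_alert(history: list[float], current: float, threshold: float = 3.0) -> tuple[float, bool]:
    """Score `current` against a historical baseline; alert only on strong deviation.

    Returns (z_score, should_alert). This is a simple statistical baseline,
    standing in for the richer models an AI-driven detection system would use.
    """
    if len(history) < 2:
        raise ValueError("need at least two historical points for a baseline")
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation
    if sigma == 0:
        # Flat baseline: any change is unusual, but avoid dividing by zero.
        z = 0.0 if current == mu else float("inf")
    else:
        z = (current - mu) / sigma
    return z, abs(z) >= threshold
```

With an hourly failed-login history of `[4, 6, 5, 7, 5, 6, 4, 5]`, a reading of 8 stays below the threshold while a reading of 40 triggers an alert. Production systems typically add seasonality, many correlated features, and analyst feedback on top of a baseline like this.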
