Quote for the day:

"Leadership has a harder job to do than just choose sides. It must bring sides together." -- Jesse Jackson

Can AI Fix Digital Banking Service Woes?

For banks in India, an AI-driven system for handling customer complaints can be

a game changer by enhancing operational efficiency, boosting customer trust and

ensuring strict regulatory compliance. The success of this system hinges on

addressing data security, integrating with legacy systems, and multi-lingual

challenges while fostering a culture of continuous improvement. "By following

this detailed road map, banks can build a resilient AI system that not only

improves customer service but also supports broader financial risk management

and compliance objectives," said Abhay Johorey, managing director, Protiviti

Member Firm for India. An AI chatbot could drive operational efficiency, perform

enhanced data analytics and risk management, increase customer trust and have

compliance benefits if designed well. A badly executed one could run the risk of

providing inaccurate financial information to customers or infringe on their

privacy and data. ... "We are entering a transformative era where AI can

significantly improve the speed, accuracy and fairness of complaint resolution.

AI can categorize complaints based on urgency, complexity or subject matter,

ensuring faster escalation to the appropriate teams. AI optimizes complaint

routing and assists in decision-making, reducing processing times," the RBI

said.
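
The categorize-and-route idea in the RBI quote can be sketched in a few lines. This is a minimal, rule-based stand-in and not any bank's or the RBI's actual system: the keyword lists, team names, and the `route_complaint` function are all hypothetical, and a real deployment would likely replace the keyword checks with a trained classifier.

```python
from dataclasses import dataclass

# Hypothetical keyword lists and team names, chosen only for illustration.
URGENT_TERMS = ("fraud", "unauthorized", "stolen", "blocked")
TEAM_BY_SUBJECT = {
    "card": "cards-team",
    "loan": "lending-team",
    "upi": "payments-team",
}


@dataclass
class Routing:
    urgency: str  # "high" or "normal"
    team: str     # destination team for escalation


def route_complaint(text: str) -> Routing:
    """Assign an urgency level and a destination team from complaint text."""
    lowered = text.lower()
    # Urgency: any urgent keyword present marks the complaint as high priority.
    urgency = "high" if any(term in lowered for term in URGENT_TERMS) else "normal"
    # Subject matter: the first matching subject keyword picks the team.
    team = next(
        (team for subject, team in TEAM_BY_SUBJECT.items() if subject in lowered),
        "general-support",
    )
    return Routing(urgency=urgency, team=team)


if __name__ == "__main__":
    print(route_complaint("Unauthorized transaction on my card"))
```

Keeping urgency and subject as separate decisions mirrors the quote's distinction between how fast a complaint should escalate and which team should receive it.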

Ethernet roadmap: AI drives high-speed, efficient Ethernet networks

The Ethernet Alliance’s 10th anniversary roadmap references the consortium’s

2024 Technology Exploration Forum (TEF), which highlighted the critical need for

collaboration across the Ethernet ecosystem: “Industry experts emphasized the

importance of uniting different sectors to tackle the engineering challenges

posed by the rapid advancement of AI. This collective effort is ensuring that

Ethernet will continue to evolve to provide the network functionality required

for next-generation AI networks.” Some of those engineering challenges include

congestion management, latency, power consumption, signaling, and the

ever-increasing speed of the network. ... “One of the outcomes of [the TEF]

event was the realization the development of 400Gb/sec signaling would be an

industry-wide problem. It wasn’t solely an application, network, component, or

interconnect problem,” stated D’Ambrosia, who is a distinguished engineer with

the Datacom Standards Research team at Futurewei Technologies, a U.S. subsidiary

of Huawei, and the chair of the IEEE P802.3dj 200Gb/sec, 400Gb/sec, 800Gb/sec

and 1.6Tb/sec Task Force. “Overcoming the challenges to support 400 Gb/s

signaling will likely require all the tools available for each of the various

layers and components.”

Dealing With Data Overload: How to Take Control of Your Security Analytics

Organizations face several challenges when it comes to security analytics. They

need to find a better way to optimize high volumes of data, ensure they are

getting maximum bang for the buck, and bring balance between cost and

visibility. This allows more of the "right" or optimized data to be brought in

for advanced analytics, filtering out the noise or useless data that isn't

needed for analytics/machine learning. ... If you're a SOC manager, and your

team is triaging alerts all day, perhaps you've got one full-time staffer who

does nothing but look at Microsoft O365 alerts, and another person who just

looks at Proofpoint alerts. The goal is to think about the bigger operational

picture. When searching for a solution, it's easy to focus only on your

immediate challenges and overlook future ones. As a result, you invest in a fix

that solves today's problems but leaves you unprepared for the next ones that

arise. You've shot yourself in the foot. ... Organizations tend to buy different

tools to solve different problems, when what they need is a data analytics

platform that can apply analytics, machine learning, and data science to their

data sets. That will provide the intelligence to make business decisions,

whether that's to reduce risk or something else. Look for a tool, regardless of

what it's called, that can solve the most problems for the least amount of

money.

Cyber insurance isn’t always what it seems

Still, insurance is no silver bullet. Policies often come with limitations, high

premiums, and strict requirements around security posture. “Insurers scrutinize

security postures, enforce stringent requirements, and may deny claims if proper

controls are not in place,” he said. Many policies also include exclusions and

coverage gaps that add complexity to the decision. When used appropriately,

cyber insurance plays a supporting role, not a leading one. “They should

complement the defensive capabilities that focus on avoiding and minimizing

loss,” Rosenquist said, serving as a safety net rather than a frontline defense.

“Cyber insurance can provide important financial relief, but it should never be

the first or only line of defense.” ... “Many businesses still believe they’re

too small to be targeted, that cyber insurance is only for large companies, or

that it’s too expensive. However, the reality is that over 60% of small

businesses have been victims of cyberattacks, privacy breaches affect

organizations of all sizes, and the cyber insurance market offers competitive,

tailored options. Working with a skilled broker brings real value. They offer

broad expertise and help build tailored solutions. With the proper guidance,

organizations can create programs that address their specific risks and needs,”

explained Tijana Dusper, a licensed broker for insurance and reinsurance at InterOmnia.

RFID Hacking: Exploring Vulnerabilities, Testing Methods, and Protection Strategies

When an RFID reader scans an object, it emits a radio frequency (RF) signal that

interacts with nearby RFID tags, potentially up to 1.14 million tags in a single

area. The antenna on each tag absorbs this energy, powering the embedded

microchip. The chip then encodes its stored data into a binary format (0s and

1s) and transmits it back to the RFID reader using reverse signal modulation.

The collected data is then stored and processed, either for human interpretation

or automated system operations. ... As with many wireless technologies, RFID

technology adheres to certain standards and communication protocols. ... As RFID

technology becomes increasingly embedded in everyday operations, from access

control and inventory tracking to cashless payments, the risks associated with

RFID hacking cannot be ignored. The same features that make RFID efficient and

convenient, wireless communication and automatic identification, also make it

vulnerable to cyber threats. RFID hacking techniques, such as cloning, skimming,

eavesdropping, and relay attacks, allow cybercriminals to intercept sensitive

information, manipulate access controls, or even exploit entire systems. Without

proper security measures, businesses and individuals risk unauthorized data

breaches, financial fraud, and identity theft.
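
The tag-to-reader step described in this item, where the chip turns its stored data into 0s and 1s, can be illustrated with a short sketch. It shows only the byte-to-bit conversion and its inverse; it does not model radio modulation or any specific RFID standard, and the function names and sample payload are hypothetical.

```python
def to_bits(payload: bytes) -> str:
    """Encode stored tag data as a string of 0s and 1s, 8 bits per byte."""
    return "".join(f"{byte:08b}" for byte in payload)


def from_bits(bits: str) -> bytes:
    """Decode a bit string back into bytes, as a reader would after demodulation."""
    if len(bits) % 8 != 0:
        raise ValueError("bit string length must be a multiple of 8")
    return bytes(int(bits[i:i + 8], 2) for i in range(0, len(bits), 8))


if __name__ == "__main__":
    tag_data = b"\x01\xa5"  # hypothetical two-byte tag payload
    bits = to_bits(tag_data)
    print(bits)  # 0000000110100101
    assert from_bits(bits) == tag_data
```

Because nothing in this round trip is encrypted or authenticated, anyone who captures the bit stream can decode or replay it, which is the weakness that skimming and cloning exploit.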

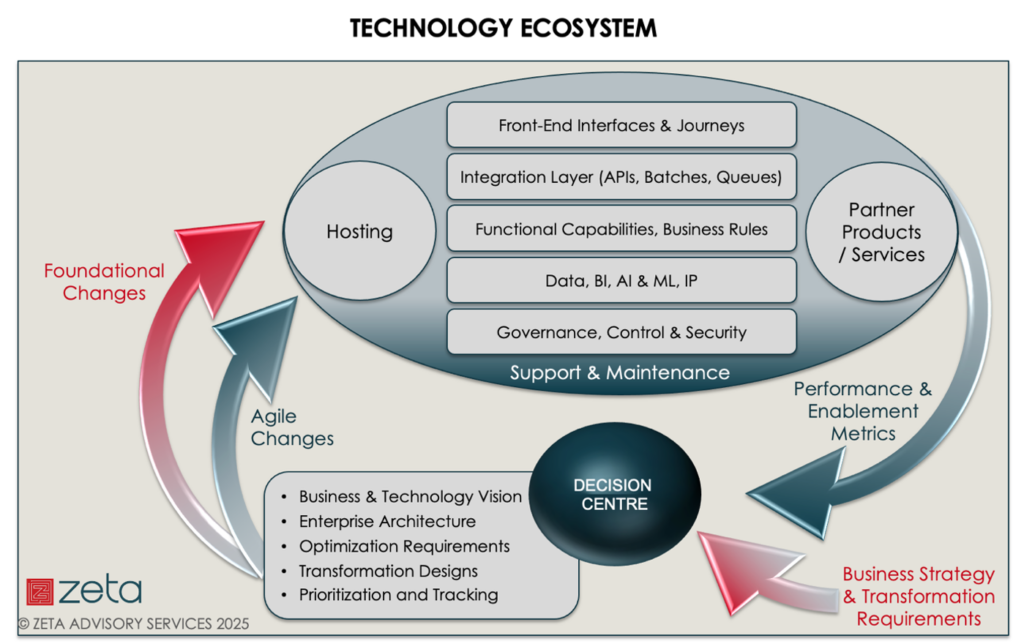

How Organizational Rewiring Can Capture Value from Your AI Strategy

McKinsey’s research indicates that while AI use is accelerating dramatically

(78% of organizations now use AI in at least one function, up from 55% a year

ago), most organizations are still in early implementation stages. Only 1% of

company executives describe their generative AI rollouts as "mature." For retail

banking leaders, this reality check suggests both opportunity and urgency. The

potential for competitive advantage remains substantial for early transformation

leaders, but the window for gaining this advantage is narrowing as adoption

accelerates. As McKinsey senior partner Alex Singla observes: "The organizations

that are building a genuine and lasting competitive advantage from their AI

efforts are the ones that are thinking in terms of wholesale transformative

change that stands to alter their business models, cost structures, and revenue

streams — rather than proceeding incrementally." For retail banking executives,

this means embracing AI as a strategic imperative that requires rethinking

fundamental business models, not merely implementing new technology tools. The

most successful banking institutions will be those that undertake comprehensive

organizational rewiring, driven by active C-suite leadership, clear strategic

roadmaps, and a willingness to fundamentally redesign how they operate.

Securing AI at the Edge: Why Trusted Model Updates Are the Next Big Challenge

Edge AI is no longer experimental. It is running live in environments where

failure is not an option. Environmental monitoring systems track air quality in

real time across urban areas. Predictive maintenance tools keep industrial

equipment running smoothly. Smart traffic networks optimize vehicle flow in

congested cities. Autonomous vehicles assist drivers with advanced safety

features. Factory automation systems use AI to detect product defects on

high-speed production lines. In all these scenarios, AI models must continuously

evolve to meet changing demands. But every update carries risks, whether through

technical failure, security breaches, or operational disruption. ... These

challenges cannot be solved with isolated patches or last-minute fixes. Securing

AI updates at the edge requires a fundamental rethink of the entire lifecycle.

The update process from cloud-to-edge must be secure from start to finish.

Models need protection from the moment they leave development until they are

safely deployed. Authenticity must be guaranteed so that no malicious code can

slip in. Access control must ensure that only authorized systems handle updates.

And because no system is immune to failure, updates need built-in recovery

mechanisms that minimize disruption.
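
The authenticity and recovery requirements above can be sketched as follows. This is a minimal illustration, not the article's method: it assumes a shared secret key and an HMAC-SHA256 tag over the model file, and the `sign_model` and `apply_update` names are hypothetical. Asymmetric signatures are a common alternative when edge devices should not hold a signing key.

```python
import hashlib
import hmac


def sign_model(model_bytes: bytes, key: bytes) -> str:
    """Compute an HMAC-SHA256 tag over the model file (done on the cloud side)."""
    return hmac.new(key, model_bytes, hashlib.sha256).hexdigest()


def apply_update(current: bytes, candidate: bytes, signature: str, key: bytes) -> bytes:
    """Return the candidate model only if its tag verifies; otherwise keep the current one."""
    expected = sign_model(candidate, key)
    # compare_digest performs a constant-time comparison to avoid timing leaks.
    if hmac.compare_digest(expected, signature):
        return candidate
    # Built-in recovery: a failed check leaves the running model untouched.
    return current


if __name__ == "__main__":
    key = b"demo-key"  # assumption: provisioned securely in practice
    tag = sign_model(b"model-v2", key)
    print(apply_update(b"model-v1", b"model-v2", tag, key))         # b'model-v2'
    print(apply_update(b"model-v1", b"model-v2-evil", tag, key))    # b'model-v1'
```

Returning the current model on any verification failure is the simplest form of the recovery mechanism the passage calls for: a bad or tampered update never replaces a working one.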

Beyond the Black Box: Rethinking Data Centers for Sustainable Growth

To thrive under the growing pressure, the data center sector must rethink its

relationship with the communities it enters. Instead of treating public

engagement as an afterthought, what if the planning process started with people?

Now, reimagine the development timeline. What if the public-facing engagement

was prioritized from the very start? Imagine a data center operator purchasing a

parcel of land for a new data center campus near a mid-sized city. Instead of

presenting a fully formed plan months later, the client begins the conversation

by asking the community: “How can we improve things while becoming your

neighbor?” While commercial viability is essential, early engagement and

collaboration can deliver positive outcomes without substantially increasing

costs. ... For data centers in urban environments where space is

limited, the listen-first ethos still holds value. In these cases, the focus

might shift to educational initiatives, such as training programs or

partnerships with local schools and universities. Early public engagement

ensures that urban projects align with the needs and priorities of residents

while addressing their concerns. This inclusive approach benefits all

stakeholders: for local authorities, it supports broader sustainability and net

zero goals, and for communities, it delivers tangible benefits that clarify the

data centre’s impact and value to the area.

Generative AI In Business: Managing Risks in The Race for Innovation

The issue is that businesses lack the appropriate processes, guidelines, or

formal governance structures needed to regulate AI use, which, at the end of the

day, makes them prone to accidental security breaches. In many instances, the

culprits are employees who introduce GenAI systems on corporate devices with no

understanding of the risks that come with them or whether their use is even permitted based

on the company’s existing data security and privacy guidelines. ... Never

underestimate the power of employee education, which is essential in times when

new innovations are far ahead of education. Put in place an educational program

that delves into the risks of AI systems. Include training sessions that give

people the tools they need to recognize red flags, such as suspicious

AI-generated outputs or unusual system behaviors. In a world of AI-enabled

threats, empowering employees to act as the first line of defense

is essential. ... A preemptive approach that leverages tools such as Automated

Moving Target Defense (AMTD) can help organizations stay ahead of attackers. By

anticipating potential threats and implementing measures to address them before

they occur, companies can reduce their vulnerability to AI-enabled exploits.

This proactive stance is particularly important given the speed and adaptability

of modern cyber threats.

How to Get a Delayed IT Project Back on Track

The best way to launch a project revival is to look backward. "Conduct a

thorough project reassessment to identify the root causes of delays, then

re-prioritize deliverables using a phased, agile-based approach," suggests Karan

Kumar Ratra, an engineering leader at Walmart specializing in e-commerce

technology, leadership, and innovation. "Start with high-impact, manageable

milestones to restore momentum and stakeholder confidence," he advises in an

online interview. "Clear communication, accountability, and aligning leadership

with revised goals are critical." ... Recall past team members, yet supplement

them with new members with similar skills and project experience, recommends

Pundalika Shenoy, automation and modernization project manager at business

consulting firm Smartbridge, via email. "Outside perspectives and expertise will

help the team." Fresh ideas and insights may be what the legacy project needs to

succeed, but try to retain at least some past contributors to ensure project

continuity, Rahming advises. "The new team members may well bring a sense of

urgency, enthusiasm and

skills ... that weren't present in the previous team at the time of the delay."
