Business Continuity vs Disaster Recovery: A Guide to Key Differences

Business continuity is like an umbrella, covering every aspect of your business

that could be impacted by disruptions – not just technology. Think of it as the

master plan that keeps your entire operation functioning when faced with

challenges. In contrast, IT disaster recovery is more specific; its focus lies

in restoring systems, applications and data after an interruption occurs in tech

infrastructure due to any number of causes – natural disasters, cyber-attacks or

human error. The first major difference between these two concepts comes down to

their scope. While business continuity covers all areas affected by potential

disruptions, IT disaster recovery focuses on ensuring technological

infrastructures remain functional following crises. Secondly, they have

different end goals: while business continuity aims at maintaining essential

functions across the organization during a crisis until normalcy

returns, IT disaster recovery’s objective is getting systems back up and running

post-interruption. A third distinction lies in timeframes: a Business

Continuity Plan often has longer-term solutions compared to quicker response

times expected from an effective Disaster Recovery Plan.

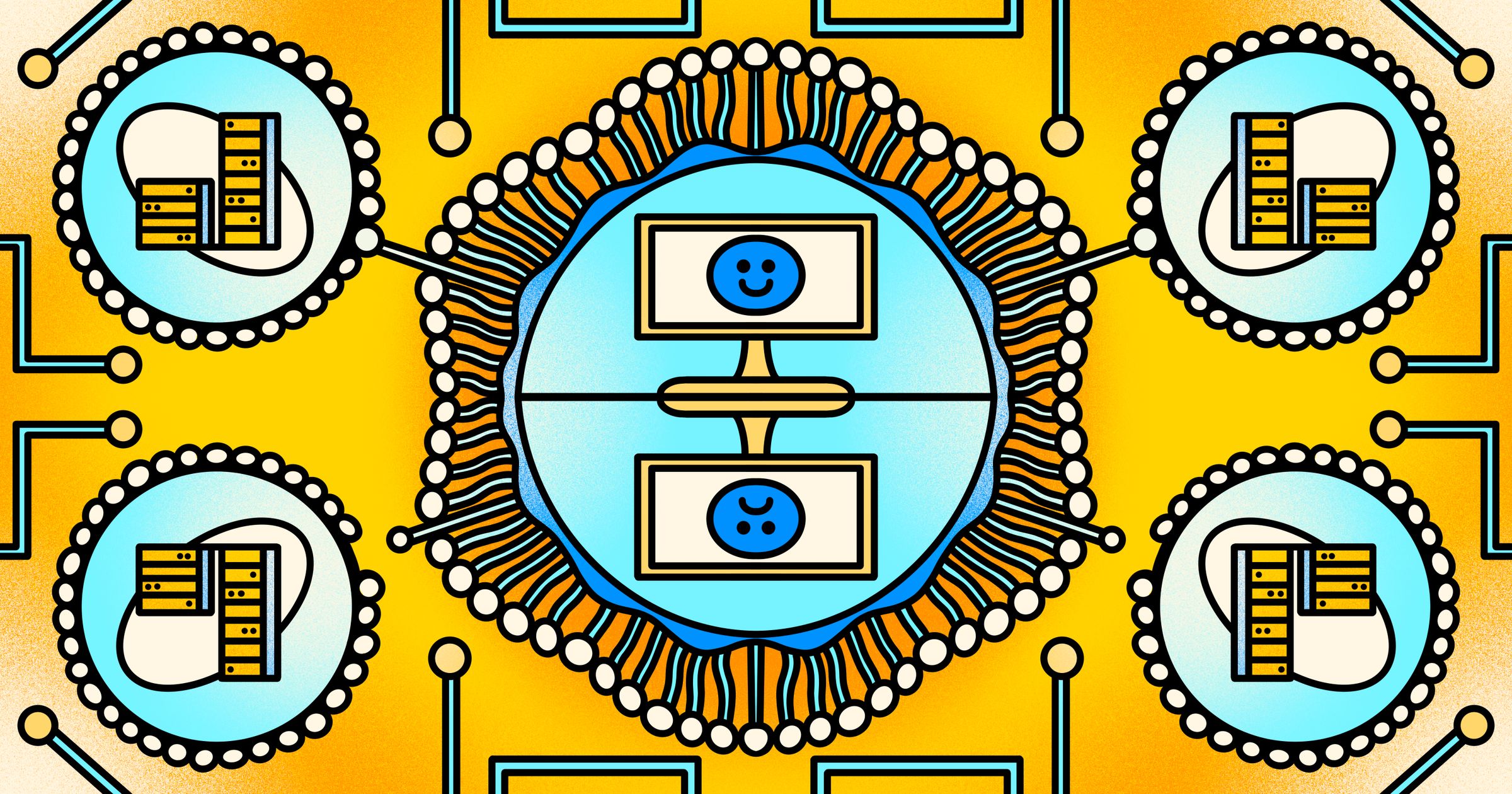

Unlocking the power of multi-cloud

In the era of digital transformation and widespread cloud migration, ensuring

robust data security has become a paramount concern for enterprises. The

introduction of regulations, such as the Digital Personal Data Protection Act

2023, extends the scope of compliance to smaller businesses, emphasizing the

need for comprehensive data protection strategies. End-to-End Data Security

Platforms: To address the evolving landscape of data security, businesses are

advised to adopt full end-to-end data security platforms. These platforms serve

a multifaceted role, helping organizations discover, protect, monitor, and

respond to threats across on-premises and cloud environments. Structured and

Unstructured Data Management: Platforms should enable the discovery and

classification of both structured and unstructured data, providing a

comprehensive view of data assets. This capability is crucial for effective data

management and compliance efforts. Continuous Monitoring for Risk Mitigation:

Implementing continuous monitoring practices is essential for reducing the risk

of data breaches. This involves vigilant oversight of data access across

on-premises and multiple cloud environments.

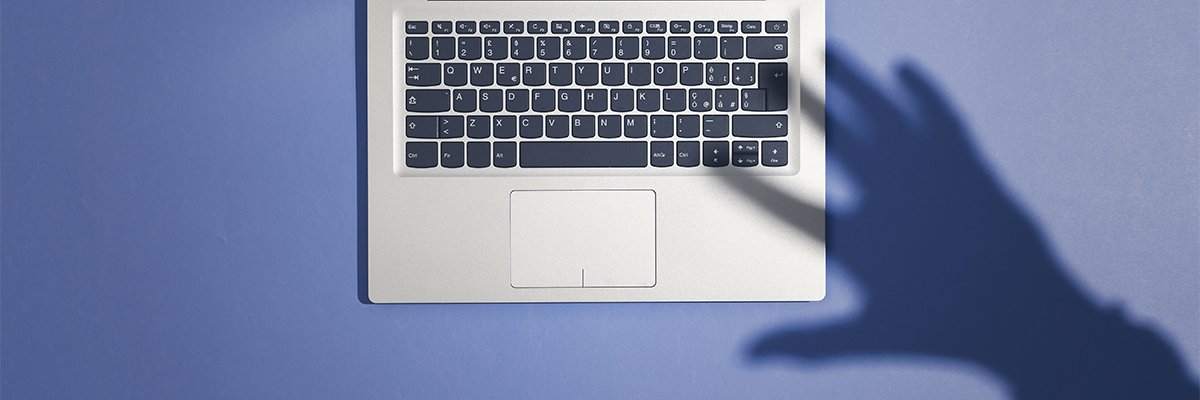

Shadow IT use at Okta behind series of damaging breaches

Okta CISO David Bradbury said: “The unauthorised access to Okta’s customer

support system leveraged a service account stored in the system itself. This

service account was granted permissions to view and update customer support

cases. “During our investigation into suspicious use of this account, Okta

Security identified that an employee had signed into their personal Google

profile on the Chrome browser of their Okta-managed laptop. “The username and

password of the service account had been saved into the employee’s personal

Google account. The most likely avenue for exposure of this credential is the

compromise of the employee’s personal Google account or personal device,” he

said. Bradbury added: “We offer our apologies to those affected customers, and

more broadly to all our customers that trust Okta as their identity provider. We

are deeply committed to providing up-to-date information to all our customers.”

Okta said its investigation had been complicated by a failure to identify file

downloads in customer support vendor logs.

Getting Aggressive with Cloud Cybersecurity

The best way to get started is by evaluating vendors that offer proactive cloud

security tools and determining their capabilities, Dalling advises. He also

suggests reviewing the existing cloud-native inventory and security techniques.

“Work with your organization’s security operations center to determine the most

effective way to integrate a proactive cloud security tool into their monitoring

and incident response workflows,” Dalling adds. By adopting a proactive cloud

security approach, organizations can safeguard themselves against security

threats, ensure compliance, and increase customer trust, says Ravi Raghava, vice

president of cloud solutions at technology integrator SAIC, via email. “This

approach is often more cost effective than dealing with the aftermath of a

security breach, which can result in substantial financial and reputational

losses.” He notes that business partners are more likely to trust organizations

that prioritize the protection of their data through proactive security

steps.

Lessons From 100+ Ransomware Recoveries

Your data retention policy is how long you keep data for regulatory or

compliance reasons, and how you remove it when it’s no longer needed. Ransomware

attackers have evolved their methods. They know you are less likely to pay out

if you can quickly switch over to Disaster Recovery systems. They are now

delaying detonation of ransomware to outlast typical retention policies. This is

the limitation of DR solutions. While they are the fastest way to recover, they

have a limited number of versions or days you can recover to. For one of our

manufacturing customers – using both our BaaS and DRaaS products – the

ransomware was present on their systems for around three months. That meant that

every DR recovery point was compromised, and we had to recover from backups. The

Recovery Time Objective (RTO) was a day. We recovered from backups, so it took

longer than DR but relatively speaking, it was a fast recovery. The Recovery

Point Objective (RPO), however, was from three months prior. The challenge that

the organisation then faced was how to re-create that lost data.
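The retention arithmetic behind this case can be sketched in a few lines of Python. This is a minimal illustration, not the vendor's tooling: the 30-day DR retention, the 365-day backup retention, the daily recovery-point schedule, and the helper names `daily_points` and `clean_recovery_points` are all assumptions made for the example. Only the roughly three-month dwell time comes from the text.

```python
from datetime import date, timedelta


def daily_points(today, retention_days):
    """Hypothetical schedule: one recovery point per day, kept for retention_days."""
    return [today - timedelta(days=n) for n in range(1, retention_days + 1)]


def clean_recovery_points(recovery_points, infection_date):
    """Keep only recovery points taken strictly before the infection date."""
    return [p for p in recovery_points if p < infection_date]


today = date(2024, 1, 1)                 # assumed detonation date
infection = today - timedelta(days=90)   # roughly three months of dwell time

dr_points = daily_points(today, retention_days=30)       # assumed DR retention
backup_points = daily_points(today, retention_days=365)  # assumed backup retention

clean_dr = clean_recovery_points(dr_points, infection)
clean_backups = clean_recovery_points(backup_points, infection)

# The newest clean point sets how much data has to be re-created.
latest_clean = max(clean_backups)
data_loss_days = (today - latest_clean).days

print(f"Clean DR points: {len(clean_dr)}")
print(f"Latest clean backup: {latest_clean}, data loss: {data_loss_days} days")
```

Under these assumptions every DR recovery point falls inside the dwell period, so only the longer-retention backups contain a clean copy, and the gap between that copy and the detonation date is the data the organisation has to re-create.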

Exploring the global shift towards AI-specific legislation

It is vital that the public – but moreover, all stakeholders – be involved in

discussions around AI. The technology companies developing AI, for example, are

likely the best placed to understand the technology fully and can help guide any

such discussion. Those organizations deploying the technology must also be

closely involved, as they have a particular viewpoint to offer. Governments also

need to be a part of the discussion. The position of various nations can offer

value and help steer the decision-making of all those governments represented in

this context. Finally, let’s not forget the general public, the individuals

whose data will likely be processed by the technology. All play valuable yet

different roles and will come with different viewpoints that should be aired and

considered. ... Legislation or any form of regulation is often seen as

restrictive: by its very nature, it comprises a set of rules that govern. That

is often interpreted as “restrictive” and as hindering development, innovation, and

technological advancement in this context. That is a generalist, simplistic, and

somewhat dismissive view.

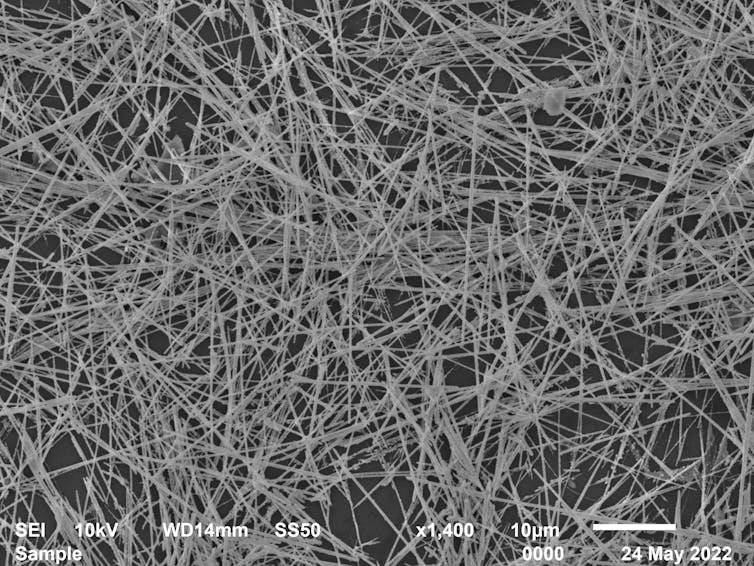

The 10 Biggest Cyber Security Trends In 2024 Everyone Must Be Ready For Now

With the work-from-home revolution continuing, the risks posed by workers

connecting or sharing data over improperly secured devices will continue to be a

threat. Often, these devices are designed for ease of use and convenience rather

than secure operations, and home consumer IoT devices may be at risk due to weak

security protocols and passwords. Industry has generally dragged

its feet over the implementation of IoT security standards, even though

the vulnerabilities have been apparent for many years, so this will

continue to be a cyber security weak spot – though this is changing. ... Two

terms that are often used interchangeably are cyber security and cyber

resilience. However, the distinction will become increasingly important during

2024 and beyond. While the focus of cyber security is on preventing attacks, the

growing value placed on resilience by many organizations reflects the hard truth

that even the best security can’t guarantee 100 percent protection. Resilience

measures are designed to ensure continuity of operations even in the wake of a

successful breach.

Andrew McAfee – ‘Human beings are chronically overconfident’

All of us, as human beings, are chronically overconfident. It’s the most common

cognitive bias. That means that your brain children are going to be very, very

dear to you, to the point that you’re probably unable to see the holes and the

flaws. So that’s a problem. The solution is other people. This is how science

works. This is why I describe one of the great geek norms simply as

“science”. Science is really subjecting your ideas to the scrutiny of other

people, and then having evidence-based discussions about the merits of those

ideas. Is this good? Is this correct or not? One thing you can absolutely start

doing is being a little less fond of your own ideas and stress testing those

ideas early and often with other people. Another thing we can do is acknowledge

other people’s good ideas. Just start saying, “That’s a really good idea,

thanks. I hadn’t thought of that. Maybe we should take a different approach

here.” Those kinds of statements are super powerful, especially when they are

coming from a leader in an organisation, because as humans we are wired to take

our cues from the people who have high status in an organisation.

IT leader’s survival guide: 8 tips to thrive in the years ahead

With so many disruptive technologies emerging at once, and IT leaders pulled into

solving so many more business challenges, it’s easy to get caught up in the

fervor. But in addition to embracing change, IT leaders need to develop a

multifaceted approach to navigating current technology and business challenges,

says Sanjay Srivastava, chief digital strategist at Genpact. “IT leaders need to

adapt by adopting a holistic approach that focuses on resilience, agility,

diversification, and collaboration,” Srivastava says. “In this evolving IT

investment landscape, the definition of risk has not changed, but the timeframe

for response has shortened.” ... It can be difficult to adapt quickly as

technology advances, while working to comply with varying regulations across

state lines and borders. “The challenge is that the technology footprint — and

our understanding of potentials and pitfalls — is still maturing, for instance

with generative AI. It’s understandable and expected that regulations will

evolve, and working through the changes coming in an otherwise long-term tech

stack will be key to getting it right,” he says.

Empowered Agile Transformation – Beyond the Framework

The Executive team could be working 10 to 20 years out of date, because the

expertise and experience that got them to their current position have lost their

relevance in a world of accelerated change. Their approach can be to apply past

experience to current problems. Their 20-year-old solutions are incompatible

with contemporary problems. They need to retrain to adopt flexible systems that

adapt to new challenges. Otherwise, the workers are constrained by the level of

understanding of group executives, and progress is inhibited. They are impeding

their teams’ potential. We have all the tools to work contemporaneously today.

We have the technology, tools, and experience to leverage agility in delivering

value. It is now the executive leaders and company boards fighting the new way;

a more collaborative way to generate value for businesses and their customers.

The solution is to understand their current customers’ problems, and identify

threats to their business models, while gaining the skills and competencies to

apply contemporary ways of working.

Quote for the day:

"The most difficult thing is the decision to act, the rest is merely tenacity." -- Amelia Earhart