Insider risk management: Where your program resides shapes its focus

Choi says that while the information security team is ultimately responsible for

the proactive protection of an organization’s information and IP, most of the

actual investigation into an incident is generally handled by the legal and HR

teams, which require fact-based evidence supplied by the information security

team. “The CIO/CISO team need to be able to supply facts and evidence in a

consumable, easy-to-understand fashion and in the right format so their legal

and HR counterparts can swiftly and accurately conduct their investigation.” ...

Water flows downhill and so does messaging on topics that many consider

ticklish, such as IRM programs. Payne noted that “few, if any CEOs wish to

discuss their threat risk management programs as it projects negativity — i.e.,

‘we don’t trust you’ and they prefer to have positive messaging.” Few CISOs

enjoy having an IRM program under their remit as “who wants to monitor

their colleagues?” Payne adds, “Whacking external threats is easy; when it’s

your colleague it becomes more problematic.”

What is the medallion lakehouse architecture?

The medallion architecture describes a series of data layers that denote the

quality of data stored in the lakehouse. Databricks recommends taking a

multi-layered approach to building a single source of truth for enterprise data

products. This architecture guarantees atomicity, consistency, isolation, and

durability as data passes through multiple layers of validations and

transformations before being stored in a layout optimized for efficient

analytics. The terms bronze (raw), silver (validated), and gold (enriched)

describe the quality of the data in each of these layers. It is important to

note that this medallion architecture does not replace other dimensional

modeling techniques. Schemas and tables within each layer can take on a variety

of forms and degrees of normalization depending on the frequency and nature of

data updates and the downstream use cases for the data. Organizations can

leverage the Databricks Lakehouse to create and maintain validated datasets

accessible throughout the company.
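
As a rough illustration of the bronze, silver, and gold progression described above, here is a minimal sketch in plain Python. It is not Databricks code: in-memory lists stand in for lakehouse tables, and the record fields (`order_id`, `region`, `amount`) and function names are hypothetical, chosen only to show raw data being validated and then aggregated.

```python
from collections import defaultdict

# Hypothetical raw events; in a real lakehouse these would be tables, not lists.
RAW_EVENTS = [
    {"order_id": "1", "region": "EU", "amount": "10.50"},
    {"order_id": "2", "region": "US", "amount": "oops"},   # malformed amount
    {"order_id": "3", "region": "EU", "amount": "4.50"},
    {"order_id": "1", "region": "EU", "amount": "10.50"},  # duplicate order
]


def to_bronze(events):
    """Bronze: keep every raw record as received, without cleaning."""
    return [dict(event) for event in events]


def to_silver(bronze):
    """Silver: keep only rows that parse cleanly, and drop duplicate orders."""
    seen_ids = set()
    silver = []
    for row in bronze:
        try:
            order_id = row["order_id"]
            region = row["region"]
            amount = float(row["amount"])
        except (KeyError, ValueError):
            continue  # skip rows that fail validation
        if order_id in seen_ids:
            continue  # skip duplicates
        seen_ids.add(order_id)
        silver.append({"order_id": order_id, "region": region, "amount": amount})
    return silver


def to_gold(silver):
    """Gold: aggregate validated rows into an analytics-ready summary."""
    totals = defaultdict(float)
    for row in silver:
        totals[row["region"]] += row["amount"]
    return dict(totals)


bronze = to_bronze(RAW_EVENTS)
silver = to_silver(bronze)
gold = to_gold(silver)
```

Each layer is derived only from the one before it, so the raw bronze records remain available if the validation or aggregation rules later change.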

AppSec ‘Worst Practices’ with Tanya Janca

Having reasonable service-level agreements is so important. When I work with

enterprise clients, they already have tons of software that’s in production

doing its thing, but they’re also building and updating new stuff. So I have

two service-level agreements and one is the crap that was here when I got

here and the other stuff is all the beautiful stuff we’re making now. So

I’ll set up my tools so that you can have a low vulnerability, but if it’s

medium or above, it’s not going to production if it’s new. But all the stuff

that was there when I scanned for the first time, we’re going to do a slower

service-level agreement. That way we can chip away at our technical debt.

The first time I came up with parallel SLAs was when this team lead asked,

“Am I going to get fired because we have a lot of technical debt, and it

would literally take us a whole year just to do the updates from the little

software compositiony thing you were doing?” “No one’s getting fired!” I

said. So that’s how we came up with the parallel SLAs so we could pay legacy

technical debt down slowly like a student loan versus handling new

development like credit card debt that gets paid every single month. There’s

no running a ticket on the credit card!
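
The parallel-SLA idea above could be encoded as a simple release gate. The sketch below is an assumption-laden illustration, not Janca's actual tooling: the severity names, the `Finding` class, the `gate` function, and the legacy remediation windows in days are all hypothetical. Only the rule itself (new code ships with low findings but not medium or above, legacy findings get a slower deadline) comes from the text.

```python
from dataclasses import dataclass

# Assumed severity ordering; real scanners may use different labels.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}

# Illustrative remediation windows (days) for legacy findings.
# These numbers are made up for the example.
LEGACY_SLA_DAYS = {"low": 365, "medium": 180, "high": 90, "critical": 30}


@dataclass
class Finding:
    severity: str    # one of the keys in SEVERITY_RANK
    is_legacy: bool  # True if the finding existed at the first scan


def gate(finding: Finding) -> str:
    """Apply the fast SLA to new code and the slow SLA to legacy code."""
    if finding.is_legacy:
        # Legacy debt is paid down slowly instead of blocking releases.
        return f"allow; fix within {LEGACY_SLA_DAYS[finding.severity]} days"
    if SEVERITY_RANK[finding.severity] >= SEVERITY_RANK["medium"]:
        # New code with medium-or-above findings does not go to production.
        return "block release"
    return "allow"
```

For example, `gate(Finding("medium", is_legacy=False))` blocks the release, while the same severity on a legacy finding is allowed with a deadline.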

Revolutionizing the Nine Pillars of DevOps With AI-Engineered Tools

Leadership Practices: Leadership is vital to drive cultural changes, set

vision and goals, encourage collaboration and ensure resources are allocated

properly. Strong leadership fosters a successful DevOps environment by

empowering teams and supporting innovation. AI can assist leaders in

decision-making by analyzing large datasets to identify trends and predict

outcomes, providing valuable insights to guide strategic planning.

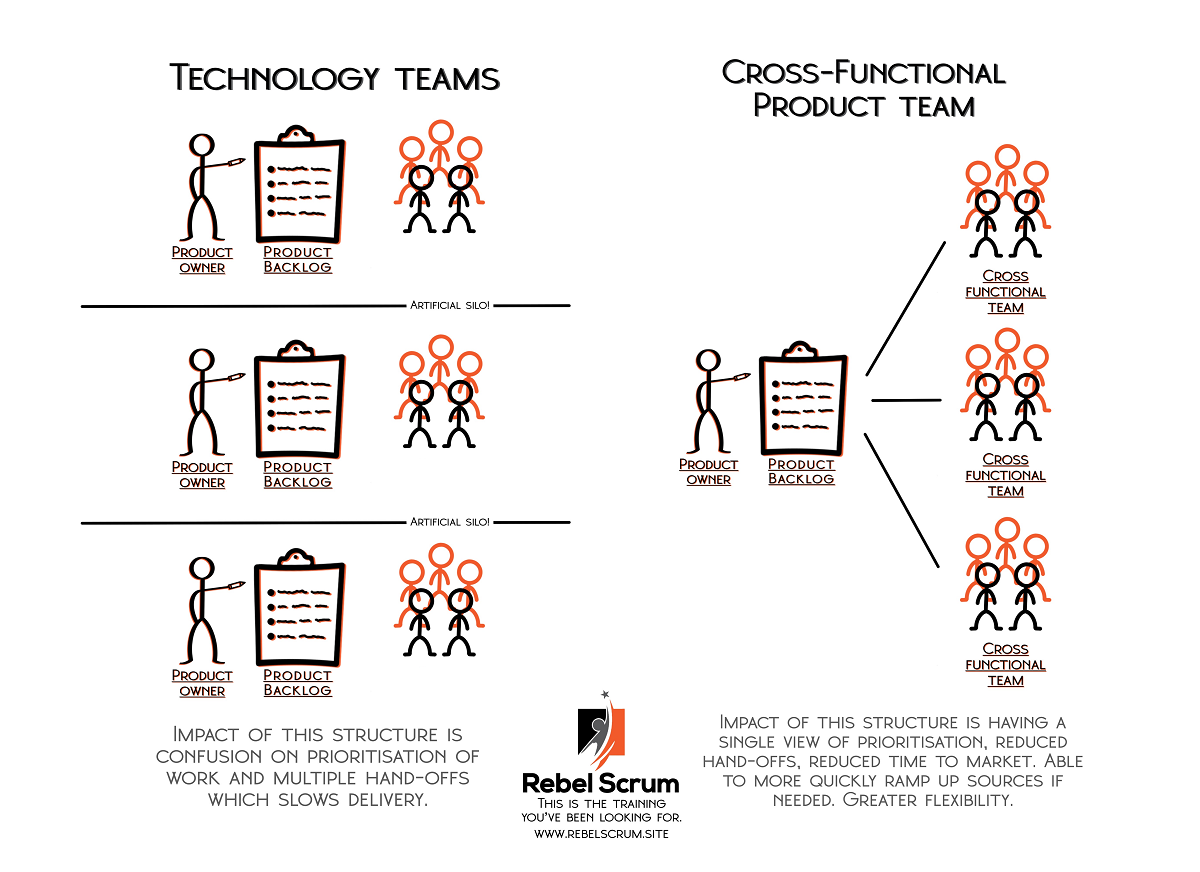

Collaborative Culture Practices: DevOps thrives in a culture of openness,

transparency and shared responsibility. It’s about breaking down the silos

that can exist between different teams and promoting effective communication

and collaboration. AI-powered tools can improve collaboration through smart

recommendations, fostering more effective communication and knowledge

sharing.

Design-for-DevOps Practices: This involves designing software in a

way that supports the DevOps model. This can include aspects like

microservices architecture, modular design and considering operability and

deployability from the earliest stages of design.

The ethics of innovation in generative AI and the future of humanity

Humans answer questions based on our genetic makeup (nature), education,

self-learning and observation (nurture). A machine like ChatGPT, on the

other hand, has the world’s data at its fingertips. Just as human biases

influence our responses, AI’s output is biased by the data used to train it.

Because data is often comprehensive and contains many perspectives, the

answer that generative AI delivers depends on how you ask the question. AI

has access to trillions of terabytes of data, allowing users to “focus”

their attention through prompt engineering or programming to make the output

more precise. This is not a negative if the technology is used to suggest

actions, but the reality is that generative AI can be used to make decisions

that affect humans’ lives. ... We have entered a crucial phase in the

regulatory process for generative AI, where applications like these must be

considered in practice. There is no easy answer as we continue to research

AI behavior and develop guidelines.

7 CIO Nightmares And How Enterprise Architects Can Help

The deeper you dig into cyber security, the more you find. Do you know what

data your business actually needs to secure? A mission-critical application

might be dependent on a spreadsheet in an outdated system. That data may be

protected under regulation, but supplied from a cloud-based application

that's reliant on open-source coding, and so on. Every CIO needs to know the

top-ten, mission-critical, crown jewel applications and data centers that

their business cannot live without, and what their connections and

dependencies are. Each needs to have a clear plan of action in case of a

security breach.

The Solution: Mapping your tech stack with an

enterprise architecture management (EAM) tool allows you to see exactly how

mission-critical each application is. This equates one-to-one with how much

you need to invest in cyber security for each area. You can also gain

clarity on which application is dependent on which platform. Likewise, you

can find where crucial data is stored and where it feeds to.
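
The dependency mapping described above can be pictured as a graph walk. The following is a minimal sketch, not the output or API of any EAM product: the application names in `DEPENDS_ON` and the helper `transitive_dependencies` are hypothetical, loosely modeled on the spreadsheet and cloud-application example earlier in this section.

```python
# Hypothetical dependency map: each key depends on the items in its list.
DEPENDS_ON = {
    "billing-app": ["pricing-spreadsheet", "crm-cloud"],
    "pricing-spreadsheet": ["legacy-file-server"],
    "crm-cloud": ["open-source-lib"],
}


def transitive_dependencies(app, graph):
    """Return everything `app` relies on, directly or indirectly."""
    seen = set()
    stack = list(graph.get(app, []))
    while stack:
        node = stack.pop()
        if node in seen:
            continue  # already visited; also guards against cycles
        seen.add(node)
        stack.extend(graph.get(node, []))
    return seen


crown_jewel_deps = transitive_dependencies("billing-app", DEPENDS_ON)
```

Walking the graph this way surfaces indirect dependencies, such as an outdated file server behind a spreadsheet, that a flat list of applications would hide.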

7 Stages of Application Testing: How to Automate for Continuous Security

Pen testing allows organizations to simulate an attack on their web

application, identifying areas of weaknesses that could be exploited by a

malicious attacker. When done correctly, pen testing is an effective way to

detect and remediate security vulnerabilities before they can be exploited.

... Traditional pen testing delivery often takes weeks to set up and the

results are point-in-time. With the rise of DevOps and cloud technology,

traditional once-a-year pen testing is no longer sufficient to ensure

continuous security. To protect against emerging threats and

vulnerabilities, organizations need to execute ongoing assessments:

continuous application pen testing. Pen Testing as a Service (PTaaS)

offers a more efficient process for proactive and continuous security

compared to traditional pen testing approaches. Organizations are able to

access a view of their vulnerability findings in real time, via a portal

that displays all relevant data for parsing vulnerabilities and verifying the

effectiveness of a remediation as soon as vulnerabilities are discovered.

Technological Innovation Poses Potential Risk of Rising Agricultural Product Costs

While technology has undeniably improved farming practices, its

implementation requires significant financial investment. The upfront costs

associated with purchasing advanced machinery, upgrading infrastructure, and

adopting new technologies can burden farmers, particularly smaller-scale

operations. These costs can ultimately be passed on to consumers,

potentially leading to an increase in the prices of agricultural products.

The seductive promises of cutting-edge machinery, precision agriculture, and

genetically modified crops have mesmerised farmers worldwide. It is true,

these technological marvels have unleashed unprecedented efficiency, capable

of revolutionising the way we grow and harvest our sustenance. Yet, in their

wake, they leave a trail of exorbitant expenses, shaking the very foundation

of the agricultural landscape. ... Modern farming equipment is often

equipped with advanced technology and features that improve efficiency,

precision, and productivity.

Open Source Jira Alternative, Plane, Lands

Indeed, “Plane is a simple, extensible, open source project and product

management tool powered by AI. It allows users to start with a basic

task-tracking tool and gradually adopt various project management frameworks

like Agile, Waterfall, and many more,” wrote Vihar Kurama, co-founder and COO

of Plane, in a blog post. Yet, “Plane is still in its early days, not

everything will be perfect yet, and hiccups may happen. Please let us know

of any suggestions, ideas, or bugs that you encounter on our Discord or

GitHub issues, and we will use your feedback to improve on our upcoming

releases,” the description said. Plane is built using a carefully selected

tech stack, comprising Next.js for the frontend and Django for the backend,

Kurama said. “We utilize PostgreSQL as our primary database and Redis to

manage background tasks,” he wrote in the post. “Additionally, our

architecture includes two microservices, Gateway and Pilot. Gateway serves

as a proxy server to our database, preventing the overloading of our primary

server, while Pilot provides the interface for building integrations.

...”

Emerging AI Governance is an Opportunity for Business Leaders to Accelerate Innovation and Profitability

Firstly, regulation can help establish clear guidelines and standards for

developing and deploying AI systems, for example, standards in accuracy,

reliability, and risk management. Such guidelines can provide a stable and

predictable framework for innovation, reducing uncertainty and risk in AI

system development. This will increase participation in the field from

developers and encourage greater investment from public and private

organizations, thereby boosting the industry as a whole. ... Governments and

governance organizations have a strong history of successfully investing in

AI technologies and their inputs (e.g., Open Data Institute, Horizon

Europe), as well as acting as demand side stimulators for long-term,

high-risk innovations that are the foundations of many of the technologies

we use today. Such examples include innovation at DARPA that formed the

foundations of the Internet, or financial support to novel technologies

through subsidy systems e.g., consumer solar panels.

Quote for the day:

"Try not to become a man of success but a man of value." --

Albert Einstein
