Primary Reasons That Deteriorate Digital Transformation Initiatives

A flawed organizational culture can easily derail transformation initiatives. Adopting the right cultural changes is as essential as the digital transformation itself. Hence, businesses must embrace the cultural changes that digital transformation demands. Such initiatives require changes to products and internal processes, as well as better engagement with customers. According to a recent TEKsystems report, “State of Digital Transformation,” 46% of businesses believe digital transformations enhance customer experience and engagement. To achieve these goals, involving teams from various departments is an excellent way to help them work together more coherently. The lack of a collaborative culture across the enterprise can be a significant reason for digital transformation failures. Establishing a change management process is recommended to bring about the needed cultural change. This process can help identify people who actively resist change and then, through adequate training and education, help them adopt the new culture quickly.

Why data leaders struggle to produce strategic results

The top impediment? Skills and staff shortages. One in six (17%) survey respondents said talent was their biggest issue, while 39% listed it among their top three. And the tight talent pool isn’t helping, Medeiros says. “CDAOs [chief data and analytics officers] must have a talent strategy that doesn’t count on hiring data and analytics talent ready-made.” To counter this, CDAOs need to build a robust talent management strategy that includes education, training, and coaching for data-driven culture and data literacy, Medeiros says. That strategy must apply not only to the core data and analytics team but also to the broader business and technology communities in the organization. ... Strategic missteps in realizing data goals may signal an organizational issue at the C-level, with company leaders recognizing the importance of data and analytics but falling short of making the strategic changes and investments necessary for success. According to a 2022 study from Alation and Wakefield Research, 71% of data leaders said they were “less than very confident” that their company’s leadership sees a link between investing in data and analytics and staying ahead of the competition.

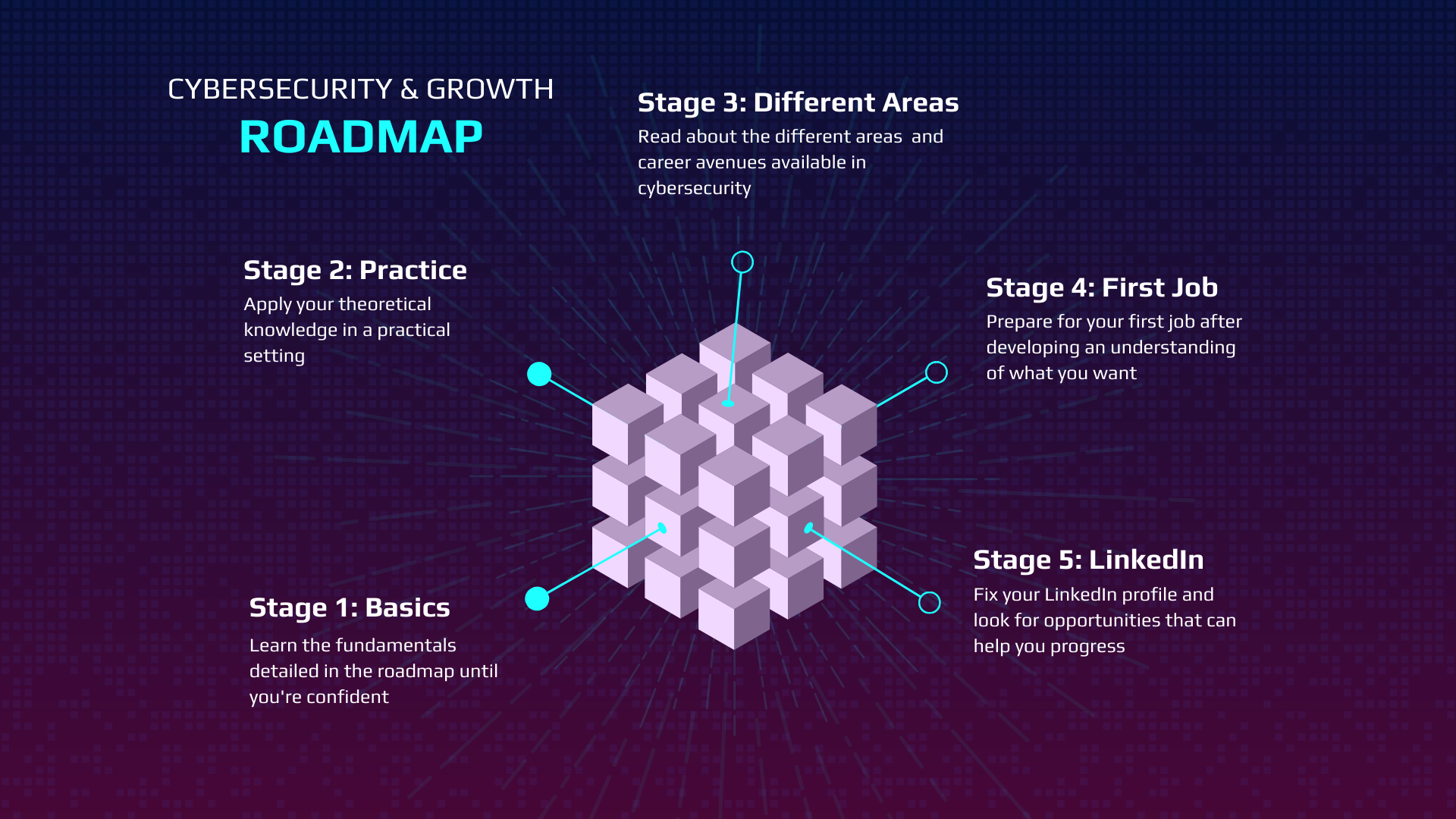

A Roadmap For Transitioning Into Cybersecurity

The best way to discover information is to search for specific points related to the categories above. For example, if I'm researching SQL injection vulnerabilities, I would search Google for exactly that and try to learn as much as I can about SQL injection. I don't recommend relying on just one resource to learn everything. This is where you need to venture out on your own and do some research; I can only provide examples of what good material looks like. In the beginning, your approach will likely be more theoretical, but I firmly believe that the most effective way to learn is through practical experience. Therefore, you should aim to engage in hands-on activities as much as possible. ... Writing blog posts helps you solidify your understanding of a topic, improve your communication skills, and build an online portfolio that showcases your expertise. In my opinion, blogging is an excellent way to engage with the community, share your knowledge, and give back. Plus, it helps you establish a personal brand and network with like-minded professionals.
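
As a hands-on starting point for the SQL injection example, here is a minimal, self-contained lab using Python's built-in `sqlite3` module. The tooling choice is ours, not the author's, and the table, users, and payload are made up for illustration. It contrasts a query built by pasting user input into the SQL text with a parameterized query that passes the input as data.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    # In-memory database with one table and two made-up users.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "alice-secret"), ("bob", "bob-secret")],
    )
    return conn


def find_user_vulnerable(conn: sqlite3.Connection, name: str) -> list:
    # VULNERABLE: user input becomes part of the SQL statement itself.
    query = f"SELECT name, secret FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # SAFE: the ? placeholder sends the input as a value, never as SQL.
    return conn.execute(
        "SELECT name, secret FROM users WHERE name = ?", (name,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_db()
    payload = "' OR '1'='1"
    print(find_user_vulnerable(conn, payload))  # leaks every row
    print(find_user_safe(conn, payload))        # []
```

With the payload, the vulnerable query becomes `... WHERE name = '' OR '1'='1'`, which is true for every row; the parameterized version simply looks for a user literally named `' OR '1'='1` and finds none.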

What Are Microservices Design Patterns?

Decomposition patterns are used to break down applications, large or small, into smaller services. You can break down the program based on business capabilities, transactions, or sub-domains. If you want to break it down by business capabilities, you will first have to evaluate the nature of the enterprise. For example, a tech company could have capabilities like sales and accounting, and each of these capabilities can be considered a service. Decomposing an application by business capabilities may be challenging because of God classes, which are used throughout the application and span several capabilities, so they cannot be assigned to a single service. To solve this problem, you can break down the app using sub-domains. ... One observability pattern to discuss is log aggregation. This pattern enables clients to use a centralized logging service to aggregate logs from every service instance. Users can also set alerts for specific text that appears in the logs. This system is essential because requests often span several service instances. The third aspect of observability patterns is distributed tracing. This is essential because, in a microservice architecture, requests cut across different services, which makes it hard to trace a request end to end when looking for the root cause of an issue.
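
Both observability patterns can be illustrated with a toy, single-process Python sketch. It is an assumption-heavy simplification: the service names, instance names, and alert patterns are hypothetical, the "services" are plain functions, and a real deployment would ship logs to a logging backend and export spans to a tracing system instead of keeping them in lists. The aggregator collects logs from every instance and raises alerts on matching text; the tracer propagates a trace ID plus a parent span ID across calls so one request can be followed end to end.

```python
from __future__ import annotations

import re
import uuid
from dataclasses import dataclass, field


@dataclass
class LogAggregator:
    """Central logging service that every service instance ships its log lines to."""
    alert_patterns: list[str]
    records: list[dict] = field(default_factory=list)
    alerts: list[dict] = field(default_factory=list)

    def ingest(self, service: str, instance: str, message: str, trace_id: str) -> None:
        record = {"service": service, "instance": instance,
                  "message": message, "trace_id": trace_id}
        self.records.append(record)
        # Raise an alert when the message matches any configured pattern.
        if any(re.search(pattern, message) for pattern in self.alert_patterns):
            self.alerts.append(record)


@dataclass
class Span:
    trace_id: str          # shared by every span of one end-to-end request
    span_id: str           # unique to this unit of work
    parent_id: str | None  # span that made the call; None for the entry point
    service: str


@dataclass
class Tracer:
    """Collects spans in memory; a real system would export them to a tracing backend."""
    spans: list[Span] = field(default_factory=list)

    def start_span(self, service: str, parent: Span | None = None) -> Span:
        # The entry point starts a new trace; downstream calls inherit the
        # trace ID and record the caller's span as their parent.
        trace_id = parent.trace_id if parent else uuid.uuid4().hex
        span = Span(trace_id, uuid.uuid4().hex[:16],
                    parent.span_id if parent else None, service)
        self.spans.append(span)
        return span

    def trace(self, trace_id: str) -> list[str]:
        # Services touched by one request, in the order their spans started.
        return [s.service for s in self.spans if s.trace_id == trace_id]


# Hypothetical services, modeled as plain functions that pass trace context along.
logs = LogAggregator(alert_patterns=[r"ERROR", r"timeout"])
tracer = Tracer()


def payments(parent: Span) -> None:
    span = tracer.start_span("payments", parent)
    logs.ingest("payments", "payments-2", "ERROR card declined", span.trace_id)


def orders(parent: Span) -> None:
    span = tracer.start_span("orders", parent)
    logs.ingest("orders", "orders-1", "order created", span.trace_id)
    payments(span)


def gateway(path: str) -> str:
    span = tracer.start_span("gateway")
    logs.ingest("gateway", "gateway-1", f"received {path}", span.trace_id)
    orders(span)
    return span.trace_id


if __name__ == "__main__":
    trace_id = gateway("/checkout")
    print(tracer.trace(trace_id))                # ['gateway', 'orders', 'payments']
    print([a["instance"] for a in logs.alerts])  # ['payments-2']
```

Stamping each log record with the trace ID is what lets the aggregated logs from `gateway-1`, `orders-1`, and `payments-2` be grouped back into a single request when chasing the root cause of the alert.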

A New Field of Computing Powered by Human Brain Cells: “Organoid Intelligence”

It might take decades before organoid intelligence can power a system as smart as a mouse, Hartung said. But by scaling up production of brain organoids and training them with artificial intelligence, he foresees a future where biocomputers support superior computing speed, processing power, data efficiency, and storage capabilities. ... Organoid intelligence could also revolutionize drug testing research for neurodevelopmental disorders and neurodegeneration, said Lena Smirnova, a Johns Hopkins assistant professor of environmental health and engineering who co-leads the investigations. “We want to compare brain organoids from typically developed donors versus brain organoids from donors with autism,” Smirnova said. “The tools we are developing towards biological computing are the same tools that will allow us to understand changes in neuronal networks specific for autism, without having to use animals or to access patients, so we can understand the underlying mechanisms of why patients have these cognition issues and impairments.”

'Critical gap': How can companies tackle the cybersecurity talent shortage?

Demand for cybersecurity is rising, with no sign of slowing down anytime soon. The cybersecurity talent shortage is a challenge, but that doesn't mean it has to be a problem. Companies today are taking critical steps to bridge this gap in innovative ways. It is imperative to deploy skilled data security professionals who can focus on critical thinking and innovation, letting automated bots take over the tedious, repetitive tasks. This way, companies can anticipate and stay ahead of even the most sophisticated cyberattacks without having to hire more staff to cover them. ... Cybersecurity is critical to the economy and across industries. The field is a good fit for professionals looking to solve complex problems and navigate the different aspects of client requirements. The first step to building a career in data security is entering the tech workforce. Pursuing an associate degree, a bachelor’s degree, or an online cybersecurity degree can provide a smooth gateway into the sector.

The Economics, Value and Service of Testing

Clearly, writing tests is additional work on top of getting the code correct from the start, right? If we could somehow guarantee getting the code correct from the start, you could argue we wouldn’t need the written tests. But, then again, if we somehow had that kind of guarantee, performing tests wouldn’t matter much either. The trick is that all testing is a response to imperfection. We can’t get the code “correct” the first time. And even if we could, we wouldn’t actually know we did until that code was delivered to users for feedback. And even then, we have to allow for the idea that notions of quality can be not just objective but subjective. ... When people make arguments against written tests, they are making (in part) an economic argument. But so are those who are making a case for written tests. When framed this way, people can have fruitful discussions about what is and isn’t economical, backed up by judgment. Judgment, in this context, is all about assigning value. Economists see judgment as the ability to determine some payoff, reward, or profit. Or, to use the term I used earlier, a utility.
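
The economic framing can be made concrete with a toy expected-utility model. This is an illustrative assumption, not the author's method: it treats a written test's payoff as the expected defect cost it avoids minus its own writing and maintenance cost. The class name `CandidateTest` and every number below are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CandidateTest:
    """Illustrative inputs for deciding whether one written test pays off.

    All fields are hypothetical estimates in the same unit (here, hours).
    """
    write_cost: float          # time to write the test
    maintenance_cost: float    # time to keep it passing over the period considered
    defect_probability: float  # chance the code has a defect this test would catch
    escape_cost: float         # time lost if that defect reaches users instead

    def expected_utility(self) -> float:
        # Payoff = expected cost avoided minus the test's own cost.
        avoided = self.defect_probability * self.escape_cost
        return avoided - (self.write_cost + self.maintenance_cost)

    def worth_writing(self) -> bool:
        return self.expected_utility() > 0


if __name__ == "__main__":
    risky_logic = CandidateTest(write_cost=2, maintenance_cost=1,
                                defect_probability=0.3, escape_cost=40)
    trivial_getter = CandidateTest(write_cost=2, maintenance_cost=1,
                                   defect_probability=0.01, escape_cost=40)
    for name, t in [("risky_logic", risky_logic), ("trivial_getter", trivial_getter)]:
        print(name, round(t.expected_utility(), 2), t.worth_writing())
```

Under these made-up estimates the test for risky logic comes out positive and the test for a trivial getter comes out negative. The model deliberately leaves out the subjective side of quality mentioned above, which is where judgment comes back in.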

Operation vs. innovation: 3 tips for a win-win

One of the biggest innovation inhibitors is the organizational silo. When IT and business teams don’t collaborate or communicate, IT spins its wheels on projects that aren’t truly aligned with business goals. When IT teams are simply trying to “keep the lights on,” a lack of alignment between business leaders and IT (who may not fully understand how projects support business objectives) is a recipe for failure. On the technology side, innovations like low-code and no-code applications are helping to bridge the technical and tactical gap between business and IT teams. With no-code solutions, business users can build apps and manage and change internal workflows and tasks without tapping into IT resources. IT governance and guardrails over these solutions remain important, but the solutions free up time for IT and software teams to work on higher-level innovation. ... Internal IT teams are often well equipped for, and experienced in, maintaining operational excellence. Depending on the company, these internal teams may also excel at building new solutions and applications from the ground up.

Cloud Skills Gap a Challenge for Financial Institutions

“While the cloud seems like a very simple technology, that’s not the case,” he says. “Not knowing the cloud default configurations and countermeasures that should be taken against it might keep your application wide open.” Siksik adds that changes in the cloud also happen quite frequently and with less control over changes than a bank normally has. “The cloud is open to many developers and DevOps, which could push a change without the proper change process, as things are more dynamic,” he explains. “This mindset is new to banks, where normally you will have strict and long change processes.” James McQuiggan, security awareness advocate at KnowBe4, explains that cloud architects design and oversee the implementation of the bank's cloud infrastructure and need experience with cloud computing platforms as well as knowledge of network architecture, security, and compliance. “The security specialists are to ensure the bank's cloud environment is secure and compliant with any applicable regulatory requirements,” he adds.
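
Siksik's warning about default configurations can be illustrated by checking a resource's settings against an explicit secure baseline instead of trusting defaults. This is a minimal sketch under stated assumptions: the setting names and required values in `SECURE_BASELINE` are hypothetical and do not correspond to any cloud provider's real configuration schema, and a missing setting is simply treated as left at an unknown default.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical secure baseline: names and values are illustrative only,
# not any cloud provider's actual configuration keys.
SECURE_BASELINE = {
    "public_access": False,
    "encryption_at_rest": True,
    "logging_enabled": True,
    "mfa_required": True,
}


@dataclass
class Finding:
    setting: str
    expected: object
    actual: object  # None means the setting was never set explicitly


def audit(config: dict) -> list[Finding]:
    """Report every setting that deviates from the baseline, including missing ones."""
    findings = []
    for setting, expected in SECURE_BASELINE.items():
        actual = config.get(setting)
        if actual != expected:
            findings.append(Finding(setting, expected, actual))
    return findings


if __name__ == "__main__":
    # A hypothetical storage resource someone created with mostly default settings.
    storage_bucket = {"public_access": True, "encryption_at_rest": True}
    for f in audit(storage_bucket):
        print(f"{f.setting}: expected {f.expected}, found {f.actual}")
```

Running a check like this as part of the change pipeline is one way to keep a fast-moving cloud environment from quietly drifting away from the bank's stricter change process.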

The era of passive cybersecurity awareness training is over

Justifying the need for cybersecurity investment to the executive team may be challenging for tech leaders. Compared to other business functions, the return from investing in IT security can be less apparent to executives. However, the importance of investing in a strong security posture becomes more evident when compared to the damage from data breaches and ransomware attacks. Highlighting savings from better execution of cybersecurity policies and from improved IT productivity through automation makes it easier to articulate the value of cybersecurity initiatives to the executive team. Modern social engineering attacks often combine communication channels such as email, phone calls, SMS, and messaging apps. Drawing on terabytes of recently stolen data, attackers are increasingly personalizing their messages and posing as trusted organizations. In this context, organizations can no longer rely on a passive approach to cybersecurity awareness training.
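
The comparison between security spending and breach damage described at the start of this excerpt is often quantified with two standard risk-management formulas, which the article itself does not use: annualized loss expectancy (ALE = single loss expectancy × annual rate of occurrence) and return on security investment (ROSI). The sketch below assumes entirely made-up figures for breach cost, incident rates, and program cost.

```python
def annualized_loss_expectancy(single_loss: float, annual_rate: float) -> float:
    """ALE = SLE x ARO: expected loss per incident times expected incidents per year."""
    return single_loss * annual_rate


def return_on_security_investment(ale_before: float, ale_after: float,
                                  annual_cost: float) -> float:
    """ROSI = (ALE reduction - cost of the measure) / cost of the measure."""
    risk_reduction = ale_before - ale_after
    return (risk_reduction - annual_cost) / annual_cost


if __name__ == "__main__":
    # Hypothetical figures: a 500,000 breach expected once every five years,
    # cut to once every twenty years by a program costing 30,000 per year.
    before = annualized_loss_expectancy(500_000, 0.2)
    after = annualized_loss_expectancy(500_000, 0.05)
    print(return_on_security_investment(before, after, 30_000))  # 1.5
```

Here the program reduces expected annual losses from 100,000 to 25,000, so after paying for itself it still returns 1.5 times its cost in avoided expected losses, which is the kind of figure that can make the value visible to executives.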

Quote for the day:

"Leadership is being the first egg in the omelet." -- Jarod Kintz
