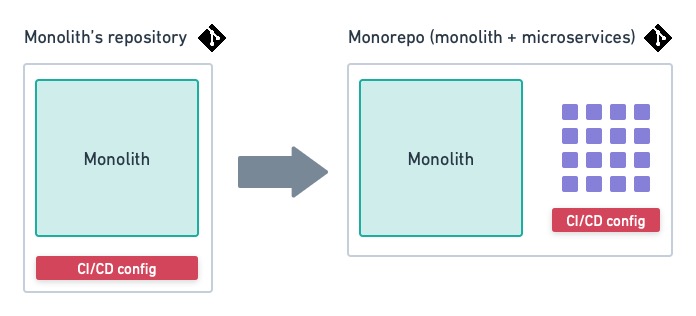

The Shared Responsibility of Taming Cloud Costs

The cost of cloud impacts the bottom line, and therefore cloud cost management cannot be the job of the CIO alone. It’s important to create a culture or framework where managing cloud costs is a shared responsibility among business, product, and engineering teams, and where it’s a consideration throughout the software development process and in IT operations. In order to do just this, it’s important to shift education left. Like many DevOps principles, “shift-left” once had a specific meaning that has become more generalized over time. At its core, the idea of shifting left is to be proactive when it comes to cost management in all management and operational processes. It means empowering developers and making operational considerations a key part of application development. Change management must also be considered in the context of cost. If organizations educate and empower developers to understand the impact of cloud cost as software is written, they will reap the benefits of building more cost-effective software that improves operational visibility and control.

How AI Regulations Are Shaping Its Current And Future Use

Examining some of the many laws that have been passed in relation to AI, I have identified some of the best practices for both statewide and nationwide regulation. On a national level, it is crucial both to develop public trust in AI and to have advisory boards that monitor the use of AI. One such example is having specific research teams or committees dedicated to identifying and studying deepfakes. In the U.S., Texas and California have legally banned the use of deepfakes to influence elections, and the EU created a self-regulating Code of Practice on Disinformation for all online platforms to achieve similar results. Another necessity is to have an ethics committee that monitors and advises on the use of AI in digitization activities, a practice currently in place in Belgium (pg. 179). Specifically, this committee encourages companies that use AI to weigh the costs and benefits of implementation compared to the systems that will get replaced. Finally, it’s important to promote public trust in AI on a national level.

5 key considerations for your 2023 cybersecurity budget planning

The cost of complying with various privacy regulations and security obligations in contracts is going up, Patel says. “Some contracts might require independent testing by third-party auditors. Auditors and consultants are also raising fees due to inflation and rising salaries,” he says. ... “When an organization is truly secure, the cost to achieve and maintain compliance should be reduced,” he says. Evolving regulatory compliance requirements, especially for those organizations supporting critical infrastructure, require significant support, Chaddock says. “Even the effort to determine what needs to happen can be costly and detract from daily operations, so plan for increased effort to support regulatory obligations if applicable,” he says. ... If paying for such policies comes out of the security budget, CISOs will need to take into consideration the rising costs of coverage and other factors. Companies should be sure to include the cost of cyber insurance over time, and more importantly the costs associated with maintaining effective and secure backup/restore capabilities, Chaddock says.

CISA pulls the fire alarm on Juniper Networks bugs

The networking and security company also issued an alert about critical vulnerabilities in Junos Space Security Director Policy Enforcer, which provides centralized threat management and monitoring for software-defined networks, but noted that it's not aware of any malicious exploitation of these critical bugs. While the vendor didn't provide details about the Policy Enforcer bugs, they received a 9.8 CVSS score, and there are "multiple" vulnerabilities in this product, according to the security bulletin. The flaws affect all versions of Junos Space Policy Enforcer prior to 22.1R1, and Juniper said it has fixed the issues. The next group of critical vulnerabilities exists in third-party software used in the Contrail Networking product. In this security bulletin, Juniper issued updates to address more than 100 CVEs that go back to 2013. Upgrading to release 21.4.0 updates the Open Container Initiative-compliant Red Hat Universal Base Image container image from Red Hat Enterprise Linux 7 to Red Hat Enterprise Linux 8, the vendor explained in the alert.

HTTP/3 Is Now a Standard: Why Use It and How to Get Started

As you move from one mast to another, from behind walls that block or bounce signals, connections are commonly cut and restarted. This is not what TCP likes: it doesn’t really want to communicate without formal introductions and a good firm handshake. In fact, it turns out that TCP’s strict accounting and waiting for that last stray packet just means that users have to wait around for webpages to load and new apps to download, or for a timed-out connection to be re-established. So to take advantage of the informality of UDP, and to allow the network to use some smart stuff on-the-fly, the new QUIC (Quick UDP Internet Connections) format got more attention. While we don’t want to see too much intelligence within the network itself, we are much more comfortable these days with automatic decision making. QUIC understands that a site is made up of multiple files, and it won’t blight the entire connection just because one file hasn’t finished loading. The other trend that QUIC follows up on is built-in security. Whereas encryption was optional before (i.e., HTTP or HTTPS), QUIC is always encrypted.

The enemy of vulnerability management? Unrealistic expectations

First and most importantly, you need to be realistic. Many organizations want critical vulnerabilities fixed within seven days. That is not realistic if you only have one maintenance window per month. Additionally, if you do not have the ability to reboot all your systems every weekend, you are setting yourself up for failure. If you only have one maintenance window per month, there is no reason to set a due date for critical vulnerabilities of less than 30 days. For obvious reasons, organizations are nervous about speaking publicly about how quickly they remediate vulnerabilities. One estimate states that the mean time to remediate for private sector organizations is between 60 and 150 days. You can get into that range by setting due dates of 30, 60, 90, and 180 days for severities of critical, high, medium, and low, respectively. Better yet, this is achievable with a single maintenance window each month. As someone who has worked on both sides of this problem, I consider getting it fixed eventually more important than taking a hard line on getting it fixed lightning fast and then having it sit there partially fixed indefinitely. Setting an aggressive policy that your team cannot deliver on looks tough.
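The severity-to-due-date mapping described above is simple enough to sketch in a few lines of Python. This is only an illustration: the names `SLA_DAYS`, `due_date`, and `is_overdue` are hypothetical, and the only values taken from the text are the 30, 60, 90, and 180 day windows.

```python
from datetime import date, timedelta

# Remediation windows in days per severity (values from the text above).
SLA_DAYS = {"critical": 30, "high": 60, "medium": 90, "low": 180}


def due_date(found_on: date, severity: str) -> date:
    """Return the remediation due date for a finding of the given severity."""
    return found_on + timedelta(days=SLA_DAYS[severity.lower()])


def is_overdue(found_on: date, severity: str, today: date) -> bool:
    """Return True if an open finding is past its due date on `today`."""
    return today > due_date(found_on, severity)


# A critical finding discovered on July 1 falls due on July 31,
# which a single monthly maintenance window can still meet.
critical_due = due_date(date(2022, 7, 1), "critical")
```

Keeping the windows in one table makes the policy easy to review and adjust if the maintenance schedule changes.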

‘Callback’ Phishing Campaign Impersonates Security Firms

Researchers likened the campaign to one discovered last year, dubbed BazarCall, by the Wizard Spider threat group. That campaign used a similar tactic to try to spur people to make a phone call to opt out of renewing an online service the recipient purportedly is currently using, Sophos researchers explained at the time. If people made the call, a friendly person on the other side would give them a website address where the soon-to-be victim could supposedly unsubscribe from the service. However, that website instead led them to a malicious download. ... Researchers did not specify what other security companies were being impersonated in the campaign, which they identified on July 8, they said. In their blog post, they included a screenshot of the email sent to recipients impersonating CrowdStrike, which appears legitimate by using the company’s logo. Specifically, the email informs the target that it’s coming from their company’s “outsourced data security services vendor,” and that “abnormal activity” has been detected on the “segment of the network which your workstation is a part of.”

The next frontier in cloud computing

Terms are beginning to emerge, such as “supercloud,” “distributed cloud,” “metacloud” (my vote), and “abstract cloud.” Even the term “cloud native” is up for debate. To be fair to the buzzword makers, they all define the concept a bit differently, and I know the wrath of defining a buzzword a bit differently than others do. The common pattern seems to be a collection of public clouds and sometimes edge-based systems that work together for some greater purpose. The metacloud concept will be the single focus for the next 5 to 10 years as we begin to put public clouds to work. Having a collection of cloud services managed with abstraction and automation is much more valuable than attempting to leverage each public cloud provider on its terms rather than yours. We want to leverage public cloud providers through abstract interfaces to access specific services, such as storage, compute, artificial intelligence, data, etc., and we want to support a layer of cloud-spanning technology that allows us to use those services more effectively. A metacloud removes the complexity that multicloud brings these days.

A CIO’s guide to guiding business change

When it comes to supporting business change, the “it depends” answer amounts to choosing the most suitable methodology, not the methodology the business analyst has the darkest belt in. But on the other hand, the idea of having to earn belts of varying hue or their equivalent levels of expertise in several of these methodologies, just so you can choose the one that best fits a situation, might strike you as too intimidating to bother with. Picking one to use in all situations, and living with its limitations, is understandably tempting. If adding to your belt collection isn’t high on your priority list, here’s what you need to know to limit your hold-your-pants-up apparel to suspenders, leaving the black belts to specialists you bring in for the job once you’ve decided which methodology fits your situation best. Before you can be in a position to choose, keep in mind the six dimensions of process optimization: fixed cost, incremental cost, cycle time, throughput, quality, and excellence. You need to keep these center stage, because you can optimize around no more than three of them; the ones you choose have tradeoffs; and each methodology is designed to optimize different process dimensions.

7 Reasons to Choose Apache Pulsar over Apache Kafka

Apache Pulsar is like two products in one. Not only can it handle high-rate, real-time use cases like Kafka, but it also supports standard message queuing patterns, such as competing consumers, fail-over subscriptions, and easy message fan out. Apache Pulsar automatically keeps track of the client's read position in the topic and stores that information in its high-performance distributed ledger, Apache BookKeeper. Unlike Kafka, Apache Pulsar can handle many of the use cases of a traditional queuing system, like RabbitMQ. So instead of running two systems, one for real-time streaming and one for queuing, you do both with Pulsar. It’s a two-for-one deal, and those are always good. ... Well, with Apache Pulsar it can be that simple. If you just need a topic, then use a topic. You don’t have to specify the number of partitions or think about how many consumers the topic might have. Pulsar subscriptions allow you to add as many consumers as you want on a topic with Pulsar keeping track of it all.
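To make the idea of broker-side read positions concrete, here is a toy in-memory model in Python. It is not the Pulsar client API and it ignores acknowledgements, persistence, and BookKeeper entirely; the class `ToyTopic` and its methods are hypothetical names. It only illustrates that a cursor kept per subscription yields both competing consumers (one shared subscription) and fan out (separate subscriptions).

```python
from collections import defaultdict


class ToyTopic:
    """In-memory stand-in for a topic. NOT the real Pulsar API."""

    def __init__(self):
        self.messages = []               # append-only message log
        self.cursors = defaultdict(int)  # subscription name -> next index to read

    def publish(self, msg):
        self.messages.append(msg)

    def receive(self, subscription):
        """Return the next unread message for this subscription, or None."""
        pos = self.cursors[subscription]
        if pos >= len(self.messages):
            return None
        self.cursors[subscription] = pos + 1  # the topic, not the client, advances the cursor
        return self.messages[pos]


topic = ToyTopic()
for m in ["a", "b", "c"]:
    topic.publish(m)

# Two workers on the same subscription compete: each message goes to only one of them.
worker_1 = topic.receive("orders")
worker_2 = topic.receive("orders")

# A different subscription has its own cursor, so it sees the stream from the start.
auditor = topic.receive("audit")
```

In this sketch the consumers hold no state of their own, which is the property the excerpt highlights: adding another consumer requires no partition planning on the client side.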

Quote for the day:

"Be willing to make decisions. That's the most important quality in a good leader." -- General George S. Patton, Jr.