Revisit Your Password Policies to Retain PCI Compliance

PCI version 4.0 requires multifactor authentication to be more widely used.

Whereas multifactor authentication had previously been required for

administrators who needed to access systems related to card holder data or

processing, the new requirement mandates that multifactor authentication must be

used for any account that has access to card holder data. The new standards also

require users’ passwords to be changed every 12 months. Additionally, users’

passwords must be changed any time that an account is suspected to have been

compromised. A third requirement is that users must use strong

passwords. While strong passwords have always been required by the PCI standard,

the password requirements are more stringent than before. Passwords must now be

at least 15 characters in length, and they must include numeric and alphabetic

characters. Additionally, users’ passwords must be compared against a list of

passwords that are known to be compromised. Another requirement of PCI 4.0 is

that organizations must review access privileges every six months to make sure

that only those who specifically require access to card holder data are able to

access that data.
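
The password rules described above can be sketched as a simple check. This is a minimal illustration, not an official PCI validation routine: the function name `password_problems`, the sample `KNOWN_COMPROMISED` set, and the reading of the character rule as "at least one digit and at least one letter" are all assumptions made for this example, and the minimum length is the figure quoted in the text.

```python
import re

# Hypothetical sample list; a real deployment would check against a
# maintained corpus of breached passwords, not a hard-coded set.
KNOWN_COMPROMISED = {"password1234567", "qwertyuiop12345"}

# Minimum length as quoted in the text above.
MIN_LENGTH = 15


def password_problems(password, compromised=KNOWN_COMPROMISED):
    """Return a list of reasons the password fails the policy (empty if it passes)."""
    problems = []
    if len(password) < MIN_LENGTH:
        problems.append("too short")
    if not re.search(r"[0-9]", password):
        problems.append("no numeric character")
    if not re.search(r"[A-Za-z]", password):
        problems.append("no alphabetic character")
    # Compare case-insensitively against the known-compromised list.
    if password.lower() in compromised:
        problems.append("known compromised")
    return problems


if __name__ == "__main__":
    for candidate in ("short1", "Password1234567", "correcthorse42battery"):
        print(candidate, "->", password_problems(candidate) or "ok")
```

Returning a list of reasons rather than a single pass/fail flag lets a sign-up form tell the user exactly which rule was missed.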

Making the world a safer place with Microsoft Defender for individuals

Today’s sophisticated cyber threats require a modern approach to security. And

this doesn’t apply only to enterprises or government entities—in recent years

we’ve seen attacks increase exponentially against individuals. There are 921

password attacks every second. We’ve seen ransomware threats extending beyond

their usual targets to go after small businesses and families. And we know, as

bad actors become more and more sophisticated, we need to increase our personal

defenses as well. That is why it is so important for us to protect your entire

digital life, whether you are at home or work—threats don’t end when you walk

out of the office or close your work laptop for the day. We need solutions that

help keep you and your family secure in how you work, play, and live. That’s why

I’m excited to share the availability of Microsoft Defender for individuals, a

new online security application for Microsoft 365 Personal and Family

subscribers. We believe every person and family should feel safe online. This is

an exciting step in our journey to bring security to all and I’m thrilled to

share with you more about this new app, available with features for you to try

today.

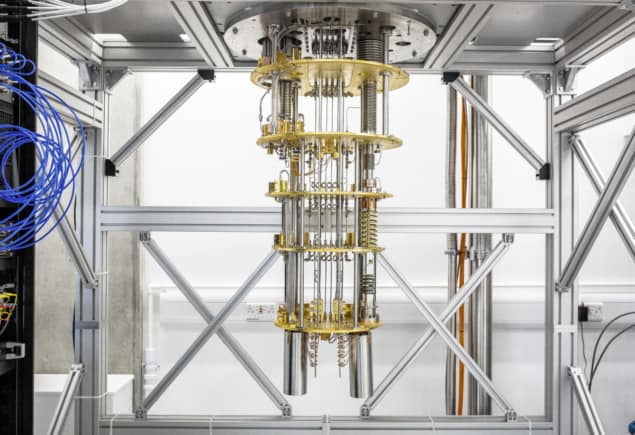

Data Is Vulnerable to Quantum Computers That Don’t Exist Yet

To stay ahead of quantum computers, scientists around the world have spent the

past two decades designing post-quantum cryptography (PQC) algorithms. These are

based on new mathematical problems that both quantum and classical computers

find difficult to solve. In January, the White House issued a memorandum on

transitioning to quantum-resistant cryptography, underscoring that preparations

for this transition should begin as soon as possible. However, after

organizations such as the National Institute of Standards and Technology (NIST)

help decide which PQC algorithms should become the new standards the world

should adopt, there are billions of old and new devices that will need to get

updated. Sandbox AQ notes that such efforts could take decades to implement.

Although quantum computers are currently in their infancy, there are already

attacks that can steal encrypted data with the intention to crack it once

codebreaking quantum computers become a reality. Therefore, Sandbox AQ

argues that governments, businesses, and other major organizations must begin

the shift toward PQC now.

Developer, Beware: The 3 API Security Risks You Can’t Overlook

By design, the majority of APIs send data from the data store to the client.

Excessive data exposure results when the API has been designed to return large

amounts of data to the client. Attackers can collect or harvest sensitive data

from such API responses. For example, a group fitness app displays the home

location of the group’s participants. The locations are displayed on a map using

the latitude and longitude of each athlete. A well-designed API is intended to

return only the latitude and longitude of each athlete. Conversely, a poorly

designed API returns user information about each athlete, including their full

name, address, email, phone number, latitude and longitude, and more. This is an

example of excessive data exposure as the API is returning more data than the

client needs. This might occur when a poorly designed API pulls a record from

the database and returns it to the client in its entirety, exposing all the data

in the file. In this situation, the true business use case was not fully

understood during development.
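
The fitness-app example can be sketched in a few lines. This is an illustrative sketch only: the `Athlete` record, its field names, and the two response functions are hypothetical and are not taken from any real API. It contrasts serializing the whole record with returning only the fields the map view needs.

```python
from dataclasses import dataclass, asdict


@dataclass
class Athlete:
    # Hypothetical record shape, mirroring the fields named in the text.
    full_name: str
    address: str
    email: str
    phone: str
    latitude: float
    longitude: float


# The only fields the map view needs.
MAP_FIELDS = ("latitude", "longitude")


def overexposed_response(athletes):
    """Anti-pattern: dump every stored field of each record to the client."""
    return [asdict(a) for a in athletes]


def map_response(athletes):
    """Return only the allow-listed fields for each athlete."""
    return [{field: getattr(a, field) for field in MAP_FIELDS} for a in athletes]


if __name__ == "__main__":
    group = [Athlete("Ada Example", "1 Example St", "ada@example.com",
                     "555-0100", 51.5, -0.12)]
    print(overexposed_response(group))
    print(map_response(group))
```

An explicit allow-list such as `MAP_FIELDS` means a column added to the record later is not exposed unless someone deliberately adds it to the response.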

Apple finally embraces open source

Apple is open-sourcing a reference PyTorch implementation of the Transformer

architecture to help developers deploy Transformer models on Apple devices. In

2017, Google introduced the Transformer model. Since then, it has become the

model of choice for natural language processing (NLP) problems. ... As a

company, Apple behaves like a cult. Nobody knows what goes inside Apple’s four

walls. For the common man, Apple is a consumer electronics firm, unlike tech

giants such as Google or Microsoft. Google, for example, is seen as a leader

in AI, with top AI talent working for the company, and has released numerous

research papers over the years. Google also owns DeepMind, another company

leading in AI research. Apple is struggling with recruiting top AI talent,

and for good reasons. “Apple with its top-five rank employer brand image is

currently having difficulty recruiting top AI talent. In fact, in order to let

potential recruits see some of the exciting machine-learning work that is

occurring at Apple, it recently had to alter its incredibly secretive culture

and to offer a publicly visible Apple Machine Learning Journal,” said author

Dr John Sullivan.

Early adopters position themselves for quantum advantage

Perhaps most significant, however, is funding for a series of collaborative

projects aimed at demonstrating specific applications for today’s quantum

computers. Following a call for proposals in the autumn, for each successful

bid the NQCC will first work with the project team to analyse the use case,

assess the requirements, and determine whether the application can be usefully

tackled with current technologies. “The next stage would be to identify

appropriate algorithms or develop new ones, and then run them on a physical

quantum computer,” says Decaroli. “We can then benchmark the results against

classical solutions and potentially across different quantum-computing

platforms.” One crucial partner in the SparQ programme is Oxford Quantum

Circuits (OQC), the only UK company to offer cloud-based access to a quantum

computer. Its latest eight-qubit processor, named “Lucy” after the pioneering

quantum physicist Lucy Mensing, was released on Amazon Web Services in

February this year. “We are looking forward to working with end users in

different industry sectors to provide access to our hardware,” commented Ilana

Wisby, CEO of OQC.

How decentralization and Web3 will impact the enterprise

For one, over time, Web3 will almost certainly become a vital approach to the

way our IT systems work. Decentralization is now a significant industry trend

that will be insisted on by a growing number of tech consumers and businesses

as well. Instead of storing information in our own databases and running code

in parts of the cloud that we pay for or otherwise control, businesses will

have to get used to relying on Web3 resources (data, compute, etc.) and

sharing more of that control. Much of the important data we need to run our

businesses will increasingly be kept in more private and protected places,

stored in blockchain and other types of distributed ledgers. A rising share of

our applications over time will be more akin to open source projects and run

using smart contracts that all stakeholders can transparently view, verify,

and agree to. Even our businesses will have strange new subsidiaries that are

actually embodied entirely in code and run automatically on their own, using

digital inputs from stakeholders. And this is just the beginning. The

cryptographic systems and immutable transaction ledgers of Web3 have now stood

enough of the test of time to prove out and show the way.

Blockchain's potential: How AI can change the decentralized ledger

When asked whether AI is too nascent a technology to have any sort of impact

on the real world, he stated that like most tech paradigms including AI,

quantum computing and even blockchain, these ideas are still in their early

stages of adoption. He likened the situation to the Web2 boom of the 90s,

where people are only now beginning to realize the need for high-quality data

to train an engine. Furthermore, he highlighted that there are already several

everyday use cases for AI that most people take for granted in their daily

lives. “We have AI algorithms that talk to us on our phones and home

automation systems that track social sentiment, predict cyberattacks, etc.,”

Krishnakumar stated. Ahmed Ismail, CEO and president of Fluid — an AI

quant-based financial platform — pointed out that there are many instances of

AI benefitting blockchain. A perfect example of this combination, per Ismail,

is crypto liquidity aggregators that use a subset of AI and machine learning

to conduct deep data analysis, provide price predictions and offer optimized

trading strategies to identify current/future market phenomena.

We don’t need another infosec hero

Instead of thinking of ourselves as heroes—we aren’t Wonder Woman, or Batman,

or Superman—it’s time to think of ourselves as sidekicks. On a good day, we

help someone else make wiser risk choices, and those choices result in more

profitable outcomes for everyone. But it is someone else who is the hero; we

just hold their cape and refill their utility pouch. How do we do that? It

begins with some humility. Most people in our profession work in cost centers.

To the rest of the company, we are a drag on the business, and while we like

to talk about business enablement, our first goal has to be removing the

business impediment we’ve become. Are you responsible for product

security? Engage the software architects who write the code and teach them how

to do their own safety and security reviews earlier in their process.

... No matter what part of the business you support, start learning what

they need to do to get the job done. Identify opportunities where you can get

out of their way first, and then look for ways to help improve their processes

to be faster and safer.

Entering the metaverse: How companies can take their first virtual steps

If the virtual world experiment is successful, it will be because of superior

immersivity. Concerts, movies, sporting events and consumer experiences must

offer interactivity and holistic engagement that makes the real world appear

dull and lacking in possibilities by comparison. While entertainment companies

will more easily master the metaverse experience offered to audiences, brands

and businesses in the vast majority of other industries will likely struggle

to conceptualize and develop the level of immersivity that will be required to

be effective. Healthcare, education and financial services could all prosper

from virtual properties and offerings – medical professionals seeing patients

and patients building communities of support, classrooms that are not confined

to textbooks but bring subject matter to life for greater curiosity and stock

markets with available real-time multidimensional metrics that make Bloomberg

terminals appear outdated. These virtual theme parks of consumerism and

participation allow for brand reinvention, offer the possibility for novel

sources of revenue and obviously skew to a younger audience that may not have

yet come across or interacted with these same brands in the real world.

Quote for the day:

"Good leaders make people feel that

they're at the very heart of things, not at the periphery." --

Warren G. Bennis