Cloud computing security: Where it is, where it's going

Most businesses use multiple cloud services and cloud providers, a hybrid

approach that can support granular security options where vital data is kept

close (perhaps in a private cloud) while less sensitive applications run in a

public cloud to take advantage of big tech's economies of scale. But the hybrid

model also introduces new complications, as every provider will have a slightly

different set of security models that cloud customers will need to understand

and manage. That takes time and (often elusive) expertise. Yet misconfigured

services rank high among the causes of security incidents, along with

even more basic failures like weak passwords and poor identity controls. Little

surprise that companies are evaluating tools to automate much of this. That's

leading to interest in new technologies such as Cloud Security Posture

Management (CSPM) tools, which can help security teams spot and fix potential

security issues around misconfiguration and compliance in the cloud, so they

know the same rules are being enforced across their cloud services.
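
To make the idea concrete, here is a minimal sketch of the kind of check a posture-management tool automates: one provider-neutral rule set applied to resources from several clouds. It makes no real cloud API calls. The `Resource` type, the rule names, the setting keys, and the sample inventory are all hypothetical, invented for illustration; a real CSPM product would pull configurations from each provider and ship a far larger rule library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resource:
    provider: str   # a label only, e.g. "aws" or "azure"; no API calls are made
    name: str
    settings: dict  # hypothetical, already-normalized configuration values


# Hypothetical provider-neutral rules. Each predicate returns True when the
# resource is compliant with that rule.
RULES = {
    "storage-not-public": lambda r: not r.settings.get("public_access", False),
    "encryption-at-rest": lambda r: r.settings.get("encrypted", False),
}


def find_violations(resources):
    """Return (provider, resource name, rule name) for every failed rule."""
    violations = []
    for resource in resources:
        for rule_name, is_compliant in RULES.items():
            if not is_compliant(resource):
                violations.append((resource.provider, resource.name, rule_name))
    return violations


# Made-up inventory spanning three providers.
inventory = [
    Resource("aws", "backups-bucket", {"public_access": True, "encrypted": True}),
    Resource("azure", "hr-storage", {"public_access": False, "encrypted": False}),
    Resource("gcp", "logs-bucket", {"public_access": False, "encrypted": True}),
]

for provider, name, rule in find_violations(inventory):
    print(f"{provider}/{name}: fails {rule}")
```

Because the same rules run against every resource regardless of provider, a gap in one cloud shows up in the same report as a gap in another, which is the consistency the excerpt describes.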

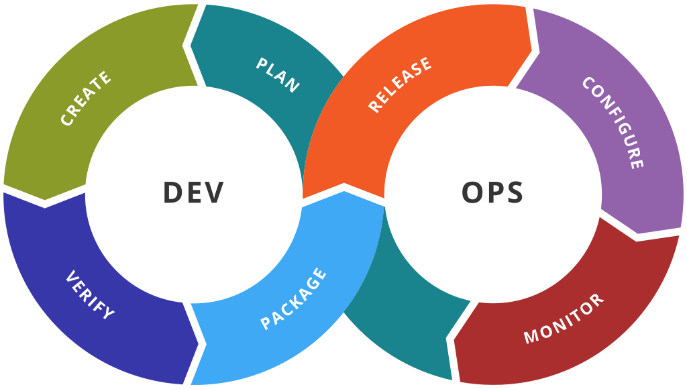

Jump Into the DevOps Pool: The Water Is Fine

If you’re thinking that becoming a member of a DevOps team sounds interesting,

what are the things you need to consider? Having experience in just about any

aspect of IT gives you the technical foundation to make yourself a viable

candidate. Do some research. What does it take to hone your existing skills to

become a successful member of a DevOps team? You’ll likely find that it takes

you in a direction well within your reach. Your technical skills are just the

beginning though. Your skills will contribute to the broader objective of the

DevOps team. Valuable DevOps team members understand how their role fits into

the bigger picture. It’s not necessary to know the details of another team

member’s discipline. It is, however, important to understand how each of your

roles contributes to the DevOps process. This implies that you take some time to

learn about each role’s function. Becoming an invaluable DevOps team member goes

one step further. DevOps engineers who possess or develop the interpersonal

skills to work beyond their team and guide others become key players within an

organization.

How to prioritize cloud spending: 5 strategies for architects

The price of spot instances changes over days and weeks, so you can't predict

the cost at the time of purchase. The amount of money saved varies depending on

the type of resource: Low-priority instances are the least expensive, but they

may be unavailable or turn off abruptly depending on capacity demand in the

region. But such cases are rare. For example, AWS states that the average

interruption frequency across all regions and instance types doesn't exceed 10%.

Spot instances are best for stateless workloads, batch operations, and other

fault-tolerant or time-flexible tasks. ... Begin by examining your cloud

provider's transfer fees. Then, find ways to limit the number of data transfers

in your cloud architecture. For example, you may need to change your application

behavior and architecture to use computing resources in the closest data

location. Transfer on-premises apps that often access cloud-hosted data to the

cloud. Unlike in the cloud, some resources (such as network bandwidth)

are effectively treated as free in traditional datacenters. So if you move applications from

on-premises datacenters, modify your application architecture to limit the

amount of data transferred.
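
As a rough illustration of why transfer volume matters, the sketch below estimates a monthly transfer bill before and after an architecture change that keeps most traffic local. The per-GB rate and the traffic volumes are hypothetical placeholders, not any provider's actual pricing; substitute the figures from your own provider's fee schedule.

```python
def monthly_transfer_cost(gb_per_month, rate_per_gb, free_tier_gb=0.0):
    """Estimate a monthly data transfer bill.

    Only traffic above the free allowance is billed, at a flat per-GB rate.
    Real pricing is usually tiered; a flat rate keeps the sketch simple.
    """
    billable_gb = max(gb_per_month - free_tier_gb, 0.0)
    return billable_gb * rate_per_gb


# Hypothetical figures, for illustration only.
RATE_PER_GB = 0.02          # assumed cost of moving 1 GB between locations
GB_BEFORE = 5000            # app repeatedly pulls data from a distant location
GB_AFTER = 500              # compute moved next to the data; little crosses over

cost_before = monthly_transfer_cost(GB_BEFORE, RATE_PER_GB)
cost_after = monthly_transfer_cost(GB_AFTER, RATE_PER_GB)
savings = cost_before - cost_after

print(f"before: {cost_before:.2f}  after: {cost_after:.2f}  saved: {savings:.2f}")
```

The point is less the exact numbers than the shape of the estimate: transfer cost scales with the volume that crosses a billing boundary, so moving compute closer to the data reduces the bill directly.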

Defensive Cyber Attacks Declared Legal by UK AG

The move highlights a general lack of international agreement about when

defensive cyber attacks should be considered appropriate. There has long been a

murky world of online espionage in which countries have tacitly agreed not to

respond with military force, due in no small part to degrees of plausible

deniability and the great difficulty of showing the public concrete evidence

that another nation’s covert hacking teams were behind a virtual break-in. This

unofficial understanding has survived in the internet age, even as allies have

been caught spying on each other, so long as everyone refrained from using cyber

attacks to cause physical damage. Some developments in recent years have

strained that arrangement, including Russia’s repeated cyber attacks on services

in Ukraine and the recent willingness of cyber criminals to hit foreign critical

infrastructure and government agencies with ransomware attacks. The UK AG has

said there is a pressing need to establish formal rules regarding

defensive cyber attacks given the demonstrated possibility of devastating

incidents that could cause actual damage to civilians, and that existing

non-intervention agreements could serve as a launch point.

How AI can give companies a DEI boost

Although many companies are experimenting with AI as a tool to assess DEI in

these areas, Greenstein noted, they aren’t fully delegating those processes to

AI, but rather are augmenting them with AI. Part of the reason for their caution

is that in the past, AI often did more harm than good in terms of DEI in the

workplace, as biased algorithms discriminated against women and non-white job

candidates. “There has been a lot of news about the impact of bias in the

algorithms looking to identify talent,” Greenstein said. For example, in 2018,

Amazon was forced to scrap its secret AI recruiting tool after the tech giant

realized it was biased against women. And a 2019 study conducted by Harvard

Business Review concluded that AI-enabled recruiting algorithms introduced

anti-Black bias into the process. AI bias is caused, often unconsciously, by the

people who design AI models and interpret the results. If an AI is trained on

biased data, it will, in turn, make biased decisions. For instance, if a company

has hired mostly white, male software engineers with degrees from certain

universities in the past, a recruiting algorithm might favor job candidates with

similar profiles for open engineering positions.
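
One simple way to look for this kind of skew is to compare selection rates across groups in a tool's past decisions. The sketch below computes those rates and the ratio of the lowest to the highest, using the "four-fifths" heuristic (a ratio below 0.8 warrants a closer look) as the threshold. The group labels and decision data are made up, and this is a screening check under those assumptions, not a full fairness audit or the method used in the studies mentioned above.

```python
from collections import Counter


def selection_rates(decisions):
    """Compute the share of candidates selected in each group.

    `decisions` is an iterable of (group_label, was_selected) pairs.
    """
    totals, selected = Counter(), Counter()
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratio(rates):
    """Ratio of the lowest selection rate to the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so there is no disparity
    return min(rates.values()) / highest


# Hypothetical screening outcomes: 10 candidates per group.
decisions = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

rates = selection_rates(decisions)
ratio = impact_ratio(rates)
needs_review = ratio < 0.8  # four-fifths heuristic

print(rates, ratio, needs_review)
```

A low ratio does not prove the algorithm is biased, but it is a cheap signal that the training data or the model deserves human review before the tool is trusted with hiring decisions.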

A CFO’s perspective on sustainable, inclusive growth

We’ve faced an ongoing health crisis that turned into a social crisis that went

to an economic crisis and, unfortunately, we’re facing humanitarian crises, such

as the war in Ukraine. But the fact of the matter is, people are making

decisions, different decisions than where we were three to five years ago. And I

believe they’re challenging the purpose of organizations, businesses, and

leadership. As we talk about sustainability and inclusivity with that

combination of the foundation for growth, that’s what the priorities of people

are today. You asked about today’s CFOs and sustainability, inclusivity, growth.

I truly believe that history will be written about these times that we’ve been

operating in. As CFOs, we’re always—Eric, as you know quite well—focused on the

what: productivity, efficiency, operational stability, liquidity. But I think

these times will be less about pure financials and more about a culture. And

when I think about culture, IBM—let me give a little shout out to my company—has

a framework. We’ve been in existence for 111 years. We have a framework around

culture that’s really grounded in purpose, united in values, and demonstrated

through growth behaviors.

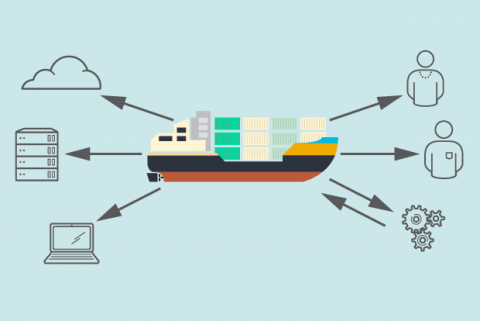

Container adoption: 5 expert tips

“If you want to move beyond containers as a tool for developers and put them

into production, that means you’ll also be adopting an orchestration layer like

Kubernetes and the various monitoring, CI/CD, logging, and tracing tools that go

with it,” Haff says. “Which is exactly what many organizations are doing.”

Containers and Kubernetes tend to go hand-in-hand because without that

orchestration layer, teams find that managing containers at any kind

of scale in production requires untenable effort. Haff notes that 70 percent of

IT leaders surveyed in the State of Enterprise Open Source 2022 report said

their organizations were using Kubernetes. Speaking of open source,

containerization has open source DNA – and adoption often leads to uptake of

other open source technologies, too. Make sure you’re using up-to-date,

reliable, and secure code. “Containerization leads to more use of open source

and other public components,” Korren says. “There are a lot of useful,

well-maintained code components on the Internet, but there are many that are

not.”

Create End-To-End Integration Of Tools & Data For Flow Insight & Traceability

Without a long-term strategy or clearly assigned data-custody across the digital

product lifecycle, data access and management are fragmented among process

owners, application owners, and development teams, becoming more unstable with

every company re-organization or staff departure. Many organizations reluctantly

determine that data islands, duplicate data stores, and conflicting data are

inevitable. The chain reaction of resulting issues is both overwhelming and

costly. It may not be possible to do a meaningful root cause analysis to resolve

incidents, assess the efficiency of digital product delivery, assess the value

compared with cost, or receive valuable feedback from development before

deployment. Design flaws are repeated, and incorrect processes are

unintentionally reinforced. The lack of end-to-end visibility results in a slow

response time to development, change, and incident tickets because there is no

traceability or data integrity for tracking down the root cause of problems. And

when data ownership is transferred or unclear, frustrated teams may dodge

responsibility and throw issues “over the fence” to other stakeholders through

the course of the digital product’s lifecycle.

Using Behavioral Analytics to Bolster Security

Josh Martin, product evangelist at security firm Cyolo, explains that behavioral

analytics would not be possible without ML and AI. “The data collected from the

detection phase will be fed into multiple AI and ML models that will allow for

deeper inspection of access habits to detect patterns or outliers for specific

users,” he says. He outlines a potential use case for behavioral analytics and

zero trust focused on a team member working from home. This user logs in every

day from their corporate Mac around 8:00 in the morning and logs into either

Salesforce or O365 first thing. “Considering this is normal for the user, the

AI/ML mechanisms will start to look for anything outside of this baseline,”

Martin says. “So, when the user takes a vacation to a different state and uses a

personal Windows laptop to access ADP around 10 o’clock at night, this would

raise a flag and shut down further authentication attempts until a security

analyst can investigate. In this case, it could have been a malicious entity

using stolen credentials to access payroll information.” From his perspective,

behavioral analytics is likely to become the new norm as AI/ML products and

knowledge become more accessible to the masses.
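
Martin's scenario can be approximated with a much simpler stand-in than the AI/ML models he describes: build a baseline from a user's past logins and count how many attributes of a new login fall outside it. The sketch below does exactly that with plain set membership and an hour window. The `LoginEvent` fields, the two-hour slack, the blocking threshold, and the state names are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoginEvent:
    device: str
    app: str
    hour: int       # local hour of day, 0-23
    location: str


class UserBaseline:
    """Rule-based baseline built from a user's observed login history."""

    def __init__(self, history):
        self.devices = {e.device for e in history}
        self.apps = {e.app for e in history}
        self.locations = {e.location for e in history}
        hours = [e.hour for e in history]
        self.earliest, self.latest = min(hours), max(hours)

    def anomalies(self, event, hour_slack=2):
        """List the ways an event deviates from the baseline."""
        reasons = []
        if event.device not in self.devices:
            reasons.append("unfamiliar device")
        if event.app not in self.apps:
            reasons.append("unfamiliar app")
        if event.location not in self.locations:
            reasons.append("unfamiliar location")
        if not (self.earliest - hour_slack <= event.hour <= self.latest + hour_slack):
            reasons.append("unusual hour")
        return reasons


def should_block(reasons, threshold=3):
    """Block further authentication when enough signals deviate at once."""
    return len(reasons) >= threshold


# Hypothetical history: corporate Mac, morning logins, Salesforce or O365.
history = [
    LoginEvent("corporate-mac", "Salesforce", 8, "home-state"),
    LoginEvent("corporate-mac", "O365", 8, "home-state"),
    LoginEvent("corporate-mac", "O365", 9, "home-state"),
]
baseline = UserBaseline(history)

# The vacation login from the example: personal Windows laptop, ADP, 10 p.m.
suspicious = LoginEvent("personal-windows", "ADP", 22, "other-state")
reasons = baseline.anomalies(suspicious)
print(reasons, should_block(reasons))
```

A production system would weigh these signals statistically rather than counting them, but the flow is the same: establish what is normal for the user, score each new login against it, and hold authentication for analyst review when the deviation is large.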

Rekindling the thrill of programming

We could say that programming is an activity that moves between the mental and

the physical. We could even say it is a way to interact with the logical nature

of reality. The programmer blithely skips across the mind-body divide that has

so confounded thinkers. “This admitted, we may propose to execute, by means of

machinery, the mechanical branch of these labours, reserving for pure intellect

that which depends on the reasoning faculties.” So said Charles Babbage,

originator of the concept of a digital programmable computer. Babbage was

conceiving of computing in the 1800s. Babbage and his collaborator Lovelace were

conceiving not of a new work, but a new medium entirely. They wrangled out of

the ether a physical ground for our ideations, a way to put them to concrete

test and make them available in that form to other people for consideration and

elaboration. In my own life of studying philosophy, I discovered the discontent

of thought form whose rubber never meets the road. In this vein, Mr. Brooks

completes his thought above when he writes, “Yet the program construct, unlike

the poet’s words, is real in the sense that it moves and works, producing

visible outputs separate from the construct itself.”

Quote for the day:

"Great Groups need to know that the person at the top will fight like a tiger for them." -- Warren G. Bennis
