Regulations are still necessary to compel adoption of cybersecurity measures

Ultimately, though, there should be clear mandates to push the industry toward

clear outcomes, Rivas said. Such requirements, for example, could include a

proper patch management strategy and robust monitoring system, Sondhi said.

These should be accompanied by roadmaps for rollout, so market players would be

given the necessary timelines to ensure compliance, he added. Acknowledging

there will inevitably be pushback over the impact such mandates have on cost and

time-to-market, he said regulations need not be overly complex. They also can

point to accompanying standards bodies tasked to provide more details and update

the adoption of best practices when necessary. This will free up governments

from having to keep up with market changes and to instead focus on mandating

high-level requirements, he noted. Enforcement also is a good starting point

when the road toward cyber resilience may be long and fraught with complexities.

Organizations in operational technology (OT) sectors, in particular, have

ecosystems that have to be managed differently from IT infrastructures, Sondhi

said.

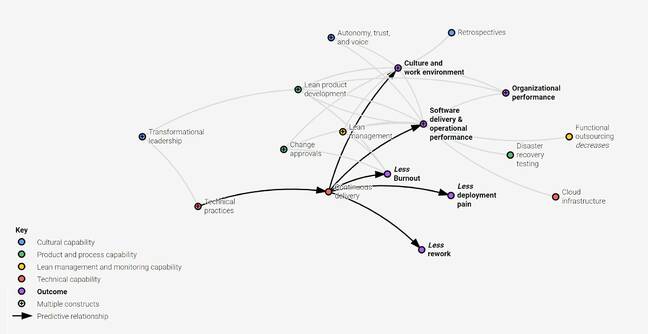

Beyond The 10X Software Engineer: Focusing On The Bigger Picture

Match team responsibilities with the load they can handle. You can do this with

additional training, a good choice of underlying technologies, pair programming,

reshuffling responsibilities among teams and strategic hiring for the critical

skills still missing. For new team members, focus on tasks doable first within a

four-hour time slot and then in two to three days, so they can experience

repeatable success right away. With time, you can extend the average task

timeline to two weeks. Make sure there's a variety of tasks of similar

complexity. For example, you don't want to corner a software developer into only

fixing bugs. Mix things up to challenge team members with creative tasks like

minor new functionalities. Eliminate excessive bureaucracy and low-value

business processes. Boring or superfluous administrative tasks are a real

motivation drain, so reviewing them and removing those with low value has a

visible impact. Map team competencies to task complexity. This can be done

formally or informally and usually narrows down the list of competencies for

each team.

The Purpose of Estimation in Scrum: Sizing for Sprint Success

In response to the limitations of hours-based estimation, Scrum Teams are

turning to alternative methods such as relative estimation (using points).

Alternatively, teams are increasingly using flow metrics as a simpler and often

more accurate way to forecast value delivery. Relative estimation is a technique

used to estimate the size, complexity, and effort required for each Product

Backlog item. To use relative estimation, the Scrum Team first selects a point

system. Common point systems include Fibonacci or a simple scale from 1 to 5.

(See our recent article, How to create a point system for estimating in Scrum.)

Once the team has selected a point system, they create an agreement which

describes which type of items to classify as a 1, 2, and so on. It is possible

for the team just to use its judgment, documenting that information in their

team agreements. Then, when the team needs to estimate new work, they simply

compare the new work to similar work done in the past, and assign the

appropriate number.
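
As a rough illustration of the comparison step described above, here is a minimal Python sketch. It assumes a hypothetical Fibonacci-style scale and a made-up set of previously sized items; the names `POINT_SCALE`, `PAST_ITEMS`, and `estimate` are illustrative only and do not come from the article.

```python
# Hypothetical team agreement: the point values the team allows.
POINT_SCALE = [1, 2, 3, 5, 8, 13]

# Hypothetical reference items the team has already completed and sized.
PAST_ITEMS = {
    "fix typo on login page": 1,
    "add field to signup form": 2,
    "new report export": 5,
}


def estimate(reference: str, relative_size: str = "same") -> int:
    """Size new work by comparing it to a similar past item.

    relative_size is the team's judgment of the new work against the
    reference item: "smaller", "same", or "larger". The result moves at
    most one step along the scale and never leaves it.
    """
    idx = POINT_SCALE.index(PAST_ITEMS[reference])
    if relative_size == "smaller":
        idx = max(idx - 1, 0)
    elif relative_size == "larger":
        idx = min(idx + 1, len(POINT_SCALE) - 1)
    elif relative_size != "same":
        raise ValueError(f"unknown relative size: {relative_size!r}")
    return POINT_SCALE[idx]


if __name__ == "__main__":
    # New work judged slightly bigger than "add field to signup form".
    print(estimate("add field to signup form", "larger"))
```

The point of the sketch is that the team never reasons in hours: it only picks the closest past item and decides whether the new work is smaller, the same, or larger.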

What Enterprises Need to Know About ChatGPT and Cybersecurity

Receiving the most valuable information from ChatGPT requires asking the correct

questions and expanding on the initial inquiry to obtain desired results and a

deeper understanding. Hackers are learning that they cannot ask ChatGPT a

directly malicious question, or they will receive a response such as, “I do not

create malware.” Instead, they ask it to pretend that ChatGPT is an AI model

that can execute a particular script. Bad actors continue to exploit and

socially engineer the process of installing malware or getting people to

relinquish credentials for unauthorized data system access. AI tools are making

it easier for cybercriminals to harm people. ... One noteworthy point is that

the ability to use AI to manipulate humans through social engineering is

becoming increasingly controllable. However, ChatGPT is not a Rosetta Stone-like

translator for hackers. Although both AI-generated scripts and social media

platform scripts are made by machines, their complexity, reliability and

security can differ significantly.

Weathering the Storm: A Guide to Preserving Business Continuity

Organizations that are most vulnerable to disruption tend to be those that rely

on legacy systems that have a single point of communications failure. The

additional risk exposure that accompanies these older networks may well justify

shifting to a cloud-based network (such as SD-WAN, a software-defined wide area

network) that provides the flexibility to bounce between broadband and ethernet

in real time to preserve bandwidth and connectivity. Similarly, it may be worth

considering moving to a unified communications platform, which is designed to

maintain multichannel communications for customers and employees. ... Based on

the risk assessment, create a formal, highly detailed plan specifying how your

organization will manage various crisis scenarios, the tools it will use to keep

the business running, and how, and by whom, information will be communicated

internally and externally. The plan also should identify critical on-premises

hardware and brick-and-mortar IT infrastructure (such as data centers) that must

be protected, and how they will be protected. Organizations with a continuity

plan already in place should revisit it at least annually and update it as

needed.

Phishing emails are more believable than ever. Here’s what to do about it

Because most ransomware is delivered through phishing, employee education is

essential to protecting your organization from these threats. That said,

there’s no single “one size fits all” education program--these training

efforts should be tailored to your enterprise's unique needs. Below are

several types of services and/or programs that are designed to help users

understand and detect phishing and other cyber threats, all of which can serve

as a great starting point for building a comprehensive employee security

awareness program. ... Delivering simulated phishing emails to your

organization’s employees allows them to practice identifying malicious

communications so that they know what to do when a threat actor strikes. The

FortiPhish Phishing Simulation Service uses real-world simulations to help

organizations test user awareness and vigilance to phishing threats and to

train users on what steps to take when they suspect they might be a target of

a phishing attack. ... As with the introduction of any new technology,

cybercriminals will continually find ways to use these tools for nefarious

purposes.

9 Steps to Platform Engineering Hell

The platform team still works with a DevOps mindset and continues to write

pipelines and automation for individual product teams. They get too many

requests from developers and don’t have the time or resources to zoom out and

come up with a long-term strategy to build a scalable IDP and ship it as a

product to the rest of the engineering organization. ... More platform

engineers are finally hired on the team, all very experienced, with years

working in operations. They come together and think hard about the biggest Ops

issues they experienced during their careers. They start designing a platform

to fix all those annoying issues that bugged them for years, but developers

will never use this platform. It doesn’t solve their problems; it only solves

Ops problems. ... Because you’re a large enterprise with inefficient

cross-unit communication, mid-management starts several platform engineering

initiatives without aligning with each other. Leadership doesn’t intervene,

efforts double, communication is not facilitated and gets progressively worse.

You end up with five platforms for five teams, most of which don’t work at

all.

The must-knows about low-code/no-code platforms

Low-code/no-code platforms inadvertently make it easy to bypass the procedural

steps in production that safeguard code. This issue can be exacerbated by a

workflow’s lack of developers with concrete knowledge of coding and security,

as these individuals would be most inclined to raise flags. From data breaches

to compliance issues, increased speed can come at a great cost for enterprises

that don’t take the necessary steps to scale with confidence. ... Maintaining

a strong team of professional developers and guardrail mechanisms can prevent

a Wild West scenario from emerging, where the desire to play fast and loose

creates security vulnerabilities, mounting technical debt from a lack of

management and oversight happening at the developer level, and inconsistent

development practices that spur liabilities, software bugs, and compliance

headaches. AI-powered tools can offset complications caused by acceleration

and automation through code governance and predictive intelligence mechanisms;

however, enterprises often find themselves with a piecemealed portfolio of AI

tools that create bottlenecks in their development and delivery processes or

lack proper security tools to ensure the quality of code.

What It Takes To Architect A Culture Of Cybersecurity

Just because organizations impart mandatory compliance and security awareness

training to their employees does not mean employees will act securely. This is

because of something called the knowledge-behavior gap. Having knowledge does

not mean that people behave in a certain way. For them to transition from

knowledge to behavior, they also need “acceptance” and “intent.” Think of it

like the speed limit sign we consciously choose to ignore. We know the sign’s

there, we know it’s against the law to exceed it, we know that speeding kills,

and yet we choose to turn a blind eye. Since most organizations do not

actively manage and cultivate their security culture, they assume that it does

not exist in their organization. The reality is that every organization,

regardless of size, has a culture. The way in which organizations and

leadership teams treat, value, and manage security influences and builds their

security culture. Unfortunately, most organizations do not track the

security-related aspects of their culture in its early stages, and eventually

it ends up spiraling out of control and manifesting into something the

organization may have difficulty reversing.

A semantic layer allows business users with little or no technical skills to

access and consume data without needing to understand the underlying technical

complexities. It makes data more accessible and understandable to

non-technical users, enabling them to easily query, analyze, and make informed

decisions based on the data. ... Integrating data into a semantic layer from

multiple sources -- each with its own structure, format, and levels of detail

-- can be a complex undertaking. The process of harmonizing these sources

demands time and meticulous attention to detail. Creating intricate business

views using precise calculations within the semantic layer presents yet

another challenge. Applying complex formulas, conditional rules, and

computations across multiple data sources is a grueling task. Mapping business

metrics with consistency in calculations and hierarchies across diverse BI

tools can be highly complicated as each tool handles it in a different manner.

... You’ll need a scalable and efficient semantic layer that is adept at

collaborating with multiple BI tools.
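
To make the harmonization problem more concrete, here is a minimal Python sketch of the idea, not a real semantic-layer product. It assumes two hypothetical sources (`crm` and `erp`) with different field names, and a single shared metric definition; every name and value in it is invented for illustration.

```python
# Hypothetical raw rows from two sources with different structures.
CRM_ROWS = [
    {"deal_value": 100.0, "region": "EU"},
    {"deal_value": 50.0, "region": "US"},
]
ERP_ROWS = [
    {"amount_usd": 25.0, "sales_region": "EU"},
]

# Per-source mapping from technical field names to business terms.
FIELD_MAPS = {
    "crm": {"deal_value": "revenue", "region": "region"},
    "erp": {"amount_usd": "revenue", "sales_region": "region"},
}

# One definition per business metric, so every consumer gets the same math.
METRICS = {
    "total_revenue": lambda rows: sum(r["revenue"] for r in rows),
}


def harmonize(source, rows):
    """Rename a source's fields to the shared business vocabulary."""
    mapping = FIELD_MAPS[source]
    return [{mapping[k]: v for k, v in row.items() if k in mapping} for row in rows]


def query(metric, region=None):
    """Answer a business question without exposing source field names."""
    rows = harmonize("crm", CRM_ROWS) + harmonize("erp", ERP_ROWS)
    if region is not None:
        rows = [r for r in rows if r["region"] == region]
    return METRICS[metric](rows)


if __name__ == "__main__":
    print(query("total_revenue"))
    print(query("total_revenue", region="EU"))
```

Because the calculation lives in one place (`METRICS`), any BI tool calling `query` would see the same result, which is the consistency the excerpt describes as hard to achieve when each tool defines metrics on its own.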

Quote for the day:

"Nothing in the world is more common

than unsuccessful people with talent." -- Anonymous