Business Architecture - A New Depiction

Crucial to this depiction are components which exist in both the vertical
pillars and the horizontal Business Architecture layer as follows:

Application Architecture: includes the Business Process component, to associate
application components (logical & operational) with the business activity they
support.

Information Architecture: includes the Information Component from a business
perspective separately from any logical or operational representation of that
information by data (structured or unstructured).

Infrastructure Architecture: contains the location component. This is to
recognize that business infrastructure is linked to an organization / location
either by physical installation or network access.

Business Architecture consists of these business components – shared with the
other domains – and, in addition, more complex views which link the
architecture with the business plans. For example, an architecture view for a
business capability (as defined through capability-based planning) would show
how the components support that capability. The 3 vertical domains can be
considered to constitute IT Architecture (for the enterprise).

Meet Web Push

One goal of the WebKit open source project is to make it easy to deliver a
modern browser engine that integrates well with any modern platform. Many
web-facing features are implemented entirely within WebKit, and the maintainers
of a given WebKit port do not have to do any additional work to add support on
their platforms. Occasionally, features require relatively deep integration
with a platform. That means a WebKit port needs to write a lot of custom code
inside WebKit or integrate with platform-specific libraries. For example, to
support the HTML <audio> and <video> elements, Apple’s port leverages Apple’s
Core Media framework, whereas the GTK port uses the GStreamer project. A
feature might also require deep enough customization on a per-application basis
that WebKit can’t do the work itself. For example, web content might call
window.alert(). In a general-purpose web browser like Safari, the browser wants
to control the presentation of the alert itself. But an e-book reader that
displays web content might want to suppress alerts altogether. From WebKit’s
perspective, supporting Web Push requires deep per-platform and per-application
customization.
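
The window.alert() example above can be read as a delegate pattern: the engine
forwards the request to a hook that each embedding application supplies. The
following is a minimal sketch of that idea only. It is not WebKit's API, and
every name in it (UIDelegate, Engine, show_alert and so on) is hypothetical.

```python
from typing import Optional, Protocol


class UIDelegate(Protocol):
    """Hypothetical hook implemented by the embedding application."""

    def show_alert(self, message: str) -> Optional[str]:
        ...


class BrowserDelegate:
    """A general-purpose browser presents the alert in its own UI."""

    def show_alert(self, message: str) -> Optional[str]:
        return f"[browser dialog] {message}"


class EBookReaderDelegate:
    """An e-book reader suppresses alerts altogether."""

    def show_alert(self, message: str) -> Optional[str]:
        return None


class Engine:
    """Stand-in for the engine: it never decides presentation itself."""

    def __init__(self, delegate: UIDelegate) -> None:
        self._delegate = delegate

    def window_alert(self, message: str) -> Optional[str]:
        # The engine only forwards the call; the application decides what,
        # if anything, the user sees.
        return self._delegate.show_alert(message)


if __name__ == "__main__":
    print(Engine(BrowserDelegate()).window_alert("Hello"))
    print(Engine(EBookReaderDelegate()).window_alert("Hello"))
```

The same engine code serves both applications; only the delegate differs,
which is the kind of per-application customization the excerpt describes.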

Introduction to Infrastructure as Code - Part 1: Introducing IaC

In recent years, development has shifted away from monolithic applications and
towards microservices architectures and cloud-native applications. However,
modernizing apps introduces complexity, as maintaining the cloud computing
architecture requires infrastructure automation tools, efficient provisioning,
and scaling of new resources. Too many developers still see infrastructure
provisioning and management as an opaque process that Ops teams perform using
GUI tools like the Azure Portal. Infrastructure as code (IaC) challenges that
notion. The practice of IaC unifies development and operations, creating a close
bond between code and infrastructure.

Why should we use IaC? When you develop an application, you create code, build
and version it, and deploy the artifact through the DevOps pipeline. IaC allows
you to create your infrastructure in the cloud using code, enabling you to
version and execute that code whenever necessary. This three-article series
starts with an introduction to IaC. Then, the following two articles in this
series show how to use the Bicep language and Terraform HCL syntax to create
templates and automatically provision resources on Azure.
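
To make "infrastructure as versioned, executable code" concrete, here is a
minimal sketch of the desired-state idea that declarative IaC tools are
generally built around: the code declares what should exist, and a planning
step compares it with what does exist. This is plain Python, not Bicep or
Terraform, and the Resource type, the plan function and the resource names are
all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resource:
    """Hypothetical description of one cloud resource."""
    name: str
    kind: str
    size: str


def plan(desired: list[Resource], actual: list[Resource]) -> dict[str, list[str]]:
    """Compare declared resources with existing ones and list the changes."""
    desired_by_name = {r.name: r for r in desired}
    actual_by_name = {r.name: r for r in actual}
    shared = desired_by_name.keys() & actual_by_name.keys()
    return {
        # Declared in code but not yet provisioned.
        "create": sorted(desired_by_name.keys() - actual_by_name.keys()),
        # Provisioned, but with settings that differ from the code.
        "update": sorted(n for n in shared if desired_by_name[n] != actual_by_name[n]),
        # Provisioned but no longer declared in code.
        "delete": sorted(actual_by_name.keys() - desired_by_name.keys()),
    }


if __name__ == "__main__":
    desired = [Resource("web", "vm", "small"), Resource("db", "sql", "medium")]
    actual = [Resource("web", "vm", "large"), Resource("cache", "redis", "small")]
    print(plan(desired, actual))
```

Because the desired state lives in a file, it can be versioned like any other
code, and running the plan again once the environment matches yields no
changes.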

VPN providers flee Indian market ahead of new data rules

The new directive by India's top cybersecurity agency, the Indian Computer
Emergency Response Team (CERT-In), requires VPN, Virtual Private Server (VPS)
and cloud service providers to store customers' names, email addresses, IP
addresses, know-your-customer records, and financial transactions for a period
of five years. Surfshark announced on Wednesday in a post titled "Surfshark
shuts down servers in India in response to data law," that it "proudly operates
under a strict 'no logs' policy, so such new requirements go against the core
ethos of the company." Surfshark is not the first VPN provider to pull its
servers from the country following the directive. ExpressVPN took the same step
just last week, and NordVPN has warned that it will remove its physical servers
if the directives are not reversed. ... Like many businesses around the world,
Indian companies have increased their reliance on VPNs since the COVID-19
pandemic forced many employees to work from home. VPN adoption grew to allow
employees to access sensitive data remotely, even as companies started adopting
other secure means of remote access, such as Zero Trust Network Access and
Smart DNS solutions.

5 top deception tools and how they ensnare attackers

To work, deception technologies essentially create decoys, traps that emulate
natural systems. These systems work because of the way most attackers operate.
For instance, when attackers penetrate the environment, they typically look for
ways to build persistence. This typically means dropping a backdoor. In addition
to the backdoor, attackers will attempt to move laterally within organizations,
naturally trying to use stolen or guessed access credentials. As attackers find
data and systems of value, they will deploy additional malware and exfiltrate
data, typically using the backdoor(s) they dropped. With traditional anomaly
detection and intrusion detection/prevention systems, enterprises try to spot
these attacks in progress across their entire networks and systems. The problem
is that these tools rely on signatures or susceptible machine learning
algorithms and throw off a tremendous number of false positives. Deception
technologies, by contrast, have a higher threshold for triggering events, and
the events they do raise tend to be real threat actors conducting real attacks.
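
One way to picture why decoy events carry so little noise is a check for decoy
credentials: no legitimate user or service has any reason to log in with them,
so any use is worth an alert. The sketch below illustrates only that idea and
is not how any particular deception product works; the account names, the
LoginEvent type and the decoy_alerts function are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical decoy accounts planted where an intruder would find them.
# Nothing legitimate is ever configured to use these credentials.
DECOY_ACCOUNTS = frozenset({"svc-backup-old", "admin-legacy"})


@dataclass(frozen=True)
class LoginEvent:
    """Hypothetical authentication log record."""
    username: str
    source_ip: str


def decoy_alerts(events: list[LoginEvent]) -> list[str]:
    """Return one alert for every login attempt that used a decoy account."""
    return [
        f"decoy credential '{e.username}' used from {e.source_ip}"
        for e in events
        if e.username in DECOY_ACCOUNTS
    ]


if __name__ == "__main__":
    log = [
        LoginEvent("alice", "10.0.0.5"),
        LoginEvent("admin-legacy", "10.0.0.7"),
    ]
    for alert in decoy_alerts(log):
        print(alert)
```

There is no signature matching or statistical model here: the check stays
silent for ordinary traffic and fires only when someone touches the decoy.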

MIT built a new reconfigurable AI chip that can reduce electronic waste

The team's optical communication system comprises paired photodetectors and LEDs
patterned with tiny pixels. The photodetectors feature an image sensor for
receiving data, and LEDs transmit that data to the next layer. Since the
components must work like a LEGO-like reconfigurable AI chip, they must be
compatible. "The sensory chip at the bottom receives signals from the outside
environment and sends the information to the next chip above by light signals.
The next chip, which is a processor layer, receives the light information and
then processes the pre-programmed function. Such light-based data transfer
continues to other chips above, thus performing multi-functional tasks as a
whole," the team explained. ... The team fabricated a single chip with a
computing core that measured about four square millimeters. The chip is stacked
with three image recognition "blocks", each comprising an image sensor, optical
communication layer, and artificial synapse array for classifying one of three
letters, M, I, or T. They then shone a pixellated image of random letters onto
the chip and measured the electrical current that each neural network array
produced in response.

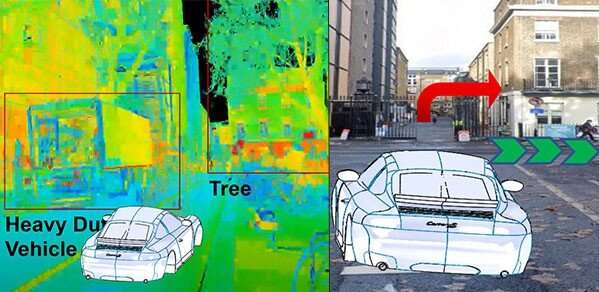

Augmented reality head-up displays: Navigating the next-gen driving experience

HUDs work by projecting a transparent 2D or 3D digital image of navigational and
hazard warning information, for example, onto the windscreen of the vehicle.
These projected images then merge with the driver's view of the road ahead.
Windshield HUDs, for example, are set up so that the driver does not need to
shift their gaze away from the road in order to view the relevant, timely
information. This technology helps to keep the driver's attention on the road,
as opposed to the driver having to look down at the dashboard or navigation
system. Technological advances in this area have led to HUDs with holographic
displays and AR in 3D. This added depth perception makes it possible to project
computer-generated virtual objects in real time into the driver's field of view
to warn, inform or entertain the user. The driver's alertness to road obstacles
is increased by enabling shorter obstacle visualization times, and eye strain
and driving stress levels are reduced. "Holographic HUDs are paramount if we are
to explore the possibilities of augmented and mixed reality for road safety,"
said Jana

Nigerian Police Bust Gang Planning Cyberattacks on 10 Banks

The operation was a coordinated effort between the Economic and Financial Crimes
Commission of Nigeria, Interpol, the National Central Bureaus and law
enforcement agencies of 11 countries across Southeast Asia, according to
Interpol. The operation was initiated after Interpol's private sector partner
Trend Micro provided operational intelligence to the agency about the "emergence
and usage of Agent Tesla malware" in this case. Agent Tesla was found on the
mobile phones and laptops of the syndicate members that were seized by the EFCC
during the bust. "Through its global police network and constant monitoring of
cyberspace, Interpol had the globally sourced intelligence needed to alert
Nigeria to a serious security threat where millions could have been lost without
swift police action," Interpol Director of Cybercrime Craig Jones says in the
statement. "Further arrests and prosecutions are foreseen across the world as
intelligence continues to come in and investigations unfold."

10 ways DevOps can help reduce technical debt

In most cases, technical debt occurs because development teams take shortcuts to
meet tight deadlines and struggle with constant changes. But better
collaboration between dev and ops can shorten the SDLC, speed up deployments,
and increase their frequency. Moreover, CI/CD and continuous testing make it
easier for teams to deal with changes. Overall, the collaborative culture
encourages code reviews, good coding practices, and robust testing with mutual
help. ... Technical debt is best controlled when managed continuously, which
becomes easier with DevOps. Because DevOps facilitates constant communication,
teams can track debt, raise awareness of it, and resolve it as soon as possible.
Team leaders can also include technical debt reviews in the backlog and
schedule maintenance sprints to deal with the debt promptly. Moreover, DevOps
reduces the chances of incomplete or deferred tasks in the backlog, helping
prevent technical debt. ... A true DevOps culture can be the key to managing
technical debt over long periods. DevOps culture encourages strong
collaboration between cross-functional teams, provides autonomy and ownership,
and practices continuous feedback and improvement.

Once is never enough: The need for continuous penetration testing

The traditional attitude to manual pen testing is kind of like the traditional
approach to driving navigation: nothing can replace the sophistication and
accrued knowledge of a human. A taxi driver will always beat Google Maps, and a
trained pen testing professional will find vulnerabilities and attacks that
automated tests may miss, or identify responses that appear legitimate to
automated software but are actually a threat. The truth is, on a case-by-case
basis, this could conceivably be true. But with off-the-shelf tools and services
like RaaS (Ransomware as a Service) or MaaS (Malware as a Service) that use
AI/ML capabilities to enhance attack efficiency – you’d need an army of pen
testers to truly meet the challenges of today’s cyber threats. And once you’d
found, trained and employed them – cyberattackers would simply increase their
automation efforts and you’d need to draft another army. Not a sustainable
cybersecurity model, clearly. Similarly, the widescale adoption of agile
development methodologies has translated into increasingly frequent software
releases.

Quote for the day:

"If you are truly a leader, you will help others to not just see themselves as
they are, but also what they can become." -- David P. Schloss
