Technical Debt and Modular Software Architecture

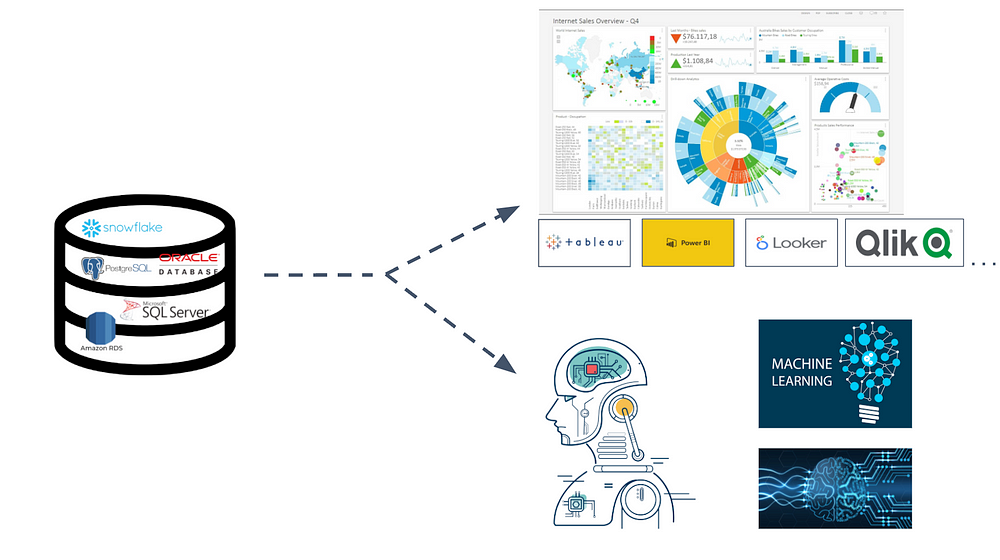

An organization incurs technical debt whenever it cedes its rights and

perquisites as a customer to a cloud service provider. To get a feel for how

this works in practice, consider the case of a hypothetical SaaS cloud

subscriber. The subscriber incurs technical debt when it customizes the software

or redesigns its core IT and business processes to take advantage of features or

functions that are specific to the cloud provider’s platform (for example,

Salesforce’s, Marketo’s, Oracle’s, etc.). This is fine for people who work in

sales and marketing, as well as for analysts who focus on sales and marketing.

But what about everybody else? Can the organization make its SaaS data available

to high-level decision-makers and to the other interested consumers dispersed

across its core business function areas? Can it contextualize this SaaS data

with data generated across its core function areas? Is the organization taking

steps to preserve historical SaaS data? In short: What is the opportunity cost

of the SaaS model and its convenience? What steps must the organization take to

offset this opportunity cost?

Data Breaches Affected Nearly 6 Billion Accounts in 2021

Breaches grew rapidly in 2021, noted Lucas Budman, founder and CEO of

TruU, a multifactor authentication company in

Palo Alto, Calif. “We exceeded the number of breach events in 2020 by the third

quarter of 2021,” he told TechNewsWorld. A number of factors have been

contributing to that increase, he added. “The ever-increasing sophistication of

threat actors, a greater number of connected IoT devices, and the protracted

shortage of skilled security talent all play a role in increased breach

activity,” he said. Budman also maintained that Covid-19 has contributed to

growing data breach numbers. “Data shows that the surge in remote and hybrid

work and other factors resulting from the Covid-19 pandemic have fueled the rise

of cybercrime by 600 percent or more,” he said. ... “Since an exceedingly large

percentage of attacks focus on the end-user, this move to remote has proven very

fruitful for attackers,” he told TechNewsWorld. “Similarly,” he continued, “the

pandemic has dramatically changed the way goods and services are manufactured,

dispatched and consumed. ...”

Council Post: How to develop a comprehensive AI governance & ethics function

Biases, including cognitive bias, incomplete data, flaws in the algorithm, etc.,

slow down the growth of AI in an organisation. Research and development play an

important role in addressing these issues. Who understands this better than

ethicists, social scientists, and experts? Therefore, businesses should include

such experts in their AI projects across applications. Data architects also play

a key role in governing AI products. Companies should have a complete pipeline

of data or metadata for AI modelling. Remember, AI’s success depends on a

well-sorted data architecture that is error- and noise-free. To achieve this, data

standardisation, data governance, and business analytics are a must. HR plays a

key role in shaping the AI governance function. For instance, they should find

candidates who “fit” into the organisation’s existing AI framework and create

training material for the existing workforce to help them understand how to

create ethical AI applications. Ensuring AI products don’t cross any legal

boundaries is critical for smooth deployment. AI solutions must meet the stipulated

compliance guidelines of the organisation and the industry in which the

organisation operates.

Importance of Binding Business and System Architecture

An architecture of the enterprise is a carefully designed structure of a

business's or company's entrepreneurial or economic activities. One can easily assert

that these entrepreneurial or economic activities include people, processes, and

systems working in harmony to yield important business outcomes. These

structures include organizational design, operational processes producing value,

and the systems used by people during the execution of their mission. Enterprise

architects use the business-prescribed operational end-state (results of value)

to guide (like a blueprint) the enterprise to accomplish its mission—frequently,

the end-states include vision, goals, objectives, and capabilities. Can a

business exchange goods and services without technology and survive? Of course

not. ... The enterprise architecture is neither the business architecture

(operational viewpoint) nor the system architecture (technical

viewpoint)—rather, the enterprise architecture is both architectures created in

an integrated form, using a standardized method of design, and usable and

consumable by both operational and technology people.

Cloud Native Winners and Losers

Enterprises that don’t plan ahead to move an application off a specific cloud

but are forced to do so at some future point will also become losers. There is a

lot of cost and risk involved in modifying applications to remove specific cloud

native services and replace them with other cloud native services or open

services. Clearly, this is the dreaded “vendor lock-in.” Most applications that

move to cloud platforms won’t ever move off that platform during the life of the

application, mostly due to the costs and risks involved. Another drawback is

that you’ll need cloud-specific skills to take full advantage of cloud native

features. This talent may not be available in-house or in the general labor

pool, and/or it could drive staffing costs over the budget. The pandemic drove a

massive rush to public cloud providers, which meant the demand for cloud

migration skills exploded as well, driving up salaries and consulting fees.

Moreover, the scarcity of qualified skills increases the risk that you won’t

find the skills needed for cloud native systems builds, and/or the required

level of talent will be unavailable to create optimized and efficient

systems.

Leveraging small data for insights in a privacy-concerned world

While big data focuses on the huge volumes of information that individuals and

consumers produce for businesses to look at and AI programs to sift through,

small data is made up of far more accessible bite-sized chunks of information

that humans can interpret to gain actionable insights. While big data can be a

hindrance to small businesses due to its unstructured nature, masses of required

storage space, and oftentimes the necessity of being held in SQL servers, small

data holds plenty of appeal in that it can arrive ready to sort with no need for

merging tables. It can also be stored on a local PC or database for ease of

access. However, as it is generally stored within a company, it’s essential that

businesses utilize the appropriate levels of cybersecurity to protect the

privacy of their customers and to keep their confidential data safe. Maxim

Manturov, head of investment research at Freedom Finance Europe, has identified

Palo Alto as a leading firm for businesses looking to protect their small data

centrally. “Its security ecosystem includes the Prisma cloud security platform

and the Cortex artificial intelligence AI-based threat detection platform,”

Manturov notes.

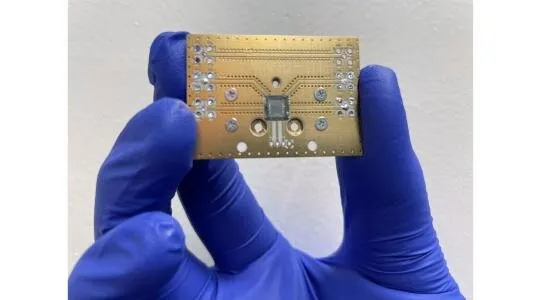

EvilModel: Malware that Hides Undetected Inside Deep Learning Models

While this scenario is alarming enough, the team points out that attackers can

also choose to publish an infected neural network on online public repositories

like GitHub, where it can be downloaded on a larger scale. In addition,

attackers can also deploy a more sophisticated form of delivery through what is

known as a supply chain attack, or value-chain or third-party attack. This

method involves having the malware-embedded models posing as automatic updates,

which are then downloaded and installed onto target devices. ... The team notes,

however, that it is possible to destroy the embedded malware by retraining and

fine-tuning models after they are downloaded, as long as the infected neural

network layers are not “frozen.” Parameters in frozen layers are not updated

during the next round of fine-tuning, which would leave the embedded malware

intact. “For professionals, the parameters of neurons can be

changed through fine-tuning, pruning, model compression or other operations,

thereby breaking the malware structure and preventing the malware from

recovering normally,” said the team.
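
The point about frozen layers can be illustrated with a toy sketch. It is not the researchers' code: it assumes, purely for illustration, that one payload byte is hidden in the lowest byte of each 32-bit float parameter, and the function names (`embed`, `extract`, `fine_tune`) and the random stand-in gradients are hypothetical.

```python
import random
import struct


def embed(weights, payload):
    """Hide one payload byte in the lowest mantissa byte of each float32 weight."""
    if len(payload) > len(weights):
        raise ValueError("payload needs one weight per byte")
    stego = list(weights)
    for i, byte in enumerate(payload):
        raw = bytearray(struct.pack("<f", weights[i]))  # little-endian float32
        raw[0] = byte  # raw[0] holds the least significant mantissa bits
        stego[i] = struct.unpack("<f", bytes(raw))[0]
    return stego


def extract(weights, length):
    """Read the lowest byte back out of the first `length` weights."""
    return bytes(struct.pack("<f", w)[0] for w in weights[:length])


def fine_tune(weights, frozen, lr=0.01, seed=1):
    """Toy update step: frozen layers are returned unchanged."""
    if frozen:
        return list(weights)
    rng = random.Random(seed)
    # Random values stand in for real gradients (an assumption of this sketch).
    return [w - lr * rng.uniform(-1.0, 1.0) for w in weights]


rng = random.Random(0)
layer = [rng.uniform(-1.0, 1.0) for _ in range(32)]
payload = b"hidden-bytes"  # harmless placeholder data
infected = embed(layer, payload)

# Compare what can be recovered before and after each kind of update.
print(extract(infected, len(payload)) == payload)
print(extract(fine_tune(infected, frozen=True), len(payload)) == payload)
print(extract(fine_tune(infected, frozen=False), len(payload)) == payload)
```

In this sketch the hidden bytes survive when the layer is frozen, because its parameters are never touched, while even a small update to an unfrozen layer scrambles the low-order bits that carried them.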

Moore’s Not Enough: 4 New Laws of Computing

Law 1. Yule’s Law of Complementarity - From a strategic point of view,

technology firms ultimately need to know which complementary element of their

product to sell at a low price—and which complement to sell at a higher price.

And, as the economist Bharat Anand points out in his celebrated 2016 book The

Content Trap, proprietary complements tend to be more profitable than

nonproprietary ones. ... Law 3. Evans’s Law of Modularity - Evans’s Law could be

formulated as follows: The inflexibilities, incompatibilities, and rigidities of

complex and/or monolithically structured technologies could be simplified by the

modularization of the technology structures (and processes). ... In other words,

modularization of software projects and the development process makes such

endeavors more efficient. As outlined in a helpful 2016 Harvard Business Review

article, the preconditions for an agile methodology are as follows: The problem

to be solved is complex; the solutions are initially unknown, with product

requirements evolving; the work can be modularized; and close collaboration with

end users is feasible.

Cisco announces Wi-Fi 6E, private 5G to assist with hybrid work

The new Cisco Wi-Fi 6E products are the first high-end 6E access points that

will assist businesses and their workers with high-traffic hybrid setups.

Wi-Fi 6E will also open up new spectrum in the form of the 6GHz band. The

access points will use the newly available spectrum to match wired speeds

and deliver gigabit performance. Wi-Fi 6E will also greatly expand capacity and

performance for the latest devices using collaborative applications designed for

hybrid work and coupled with Cisco’s DNA Center and DNA Spaces. With these

upgrades, the company is promoting collaboration in the offices, campuses and

branches by delivering Internet of Things and operational technology benefits in

smart buildings at scale. Also announced was an expansion of the Catalyst 9000x

line of switches in three new varieties, the 9300x, the 9400x and the

9500x/9600x, to help support the traffic brought in from increased

wireless capacity. The 9300x will sport 48 Universal Power Over Ethernet (UPOE)

ports for small to large campus access and aggregation deployments with

Multigigabit Ethernet (mGig) speeds and 100G Uplink Modules in a stackable

switching platform.

Supercomputers, AI and the metaverse: here’s what you need to know

Meta has promised a host of revolutionary uses of its supercomputer, from

ultrafast gaming to instant and seamless translation of mind-bendingly large

quantities of text, images and videos at once — think about a group of people

simultaneously speaking different languages, and being able to communicate

seamlessly. It could also be used to scan huge quantities of images or videos

for harmful content, or identify one face within a huge crowd of people. The

computer will also be key in developing next-generation AI models, it will power

the Metaverse, and it will be a foundation upon which future metaverse

technologies can rely. But the implications of all this power mean that there

are serious ethical considerations for the use of Meta’s supercomputer, and for

supercomputers more generally. ... The age of AI also brings with it key

questions about human privacy and the privacy of our thoughts. To address these

concerns, we must seriously examine our interaction with AI. When we look at the

ethical structures of AI, we must ensure its usage is transparent, explainable,

bias-free, and accountable.

Quote for the day:

"Strong leaders encourage you to do

things for your own benefit, not just theirs." -- Tim Tebow
