We are in an age of rapid technological progress. But many are not ‘progressing’

Even risk-averse companies that readily adapt and invest in new technologies and

processes encounter hurdles. One example is what is known as the ‘productivity

paradox’, which is when anticipated gains in productivity and ROI are not fully

realized straightaway. When Apple, Microsoft and Dell Computer arrived on the

scene in the 1980s, computer usage was limited to early adopters or those who

could afford a personal computer. They did not receive widespread consumer

acceptance until the mid-1990s; now, computers and smart devices are an

indispensable part of society. The benefits of the computer age are difficult to

gauge in simple fashion. MIT’s Nobel Prize-winning economist Robert Solow stated

during the internet boom of the 1990s: “You could see the computer age

everywhere but in the productivity statistics.” Why? One explanation is that GDP

is an imperfect measure for capturing meaningful data and translating

technology’s impact on productivity, sustainability and overall well-being. The

same can be said of the Gini coefficient used to measure income distribution and

economic inequality among a huge swath of the population.
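For readers unfamiliar with the Gini coefficient, the sketch below shows one common way to compute it from a list of incomes, using the rank-weighted form of the formula. The function name `gini` and the sample incomes are illustrative assumptions, not taken from the article; official statistics typically work from survey or tax data with additional adjustments.

```python
def gini(incomes):
    """Gini coefficient of non-negative incomes.

    0 means everyone has the same income; values near 1 mean
    income is concentrated in very few hands.
    """
    values = sorted(incomes)
    n = len(values)
    total = sum(values)
    if n == 0 or total == 0:
        return 0.0
    # Rank-weighted form: G = 2 * sum(i * x_i) / (n * sum(x)) - (n + 1) / n
    weighted = sum(rank * x for rank, x in enumerate(values, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


# Hypothetical incomes, for illustration only.
print(gini([1, 1, 1, 1]))    # perfectly equal -> 0.0
print(gini([0, 0, 0, 10]))   # one person holds everything -> 0.75
```

Note that the coefficient summarizes only how income is distributed. It says nothing about the overall level of income, which is one reason it is an incomplete gauge of well-being.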

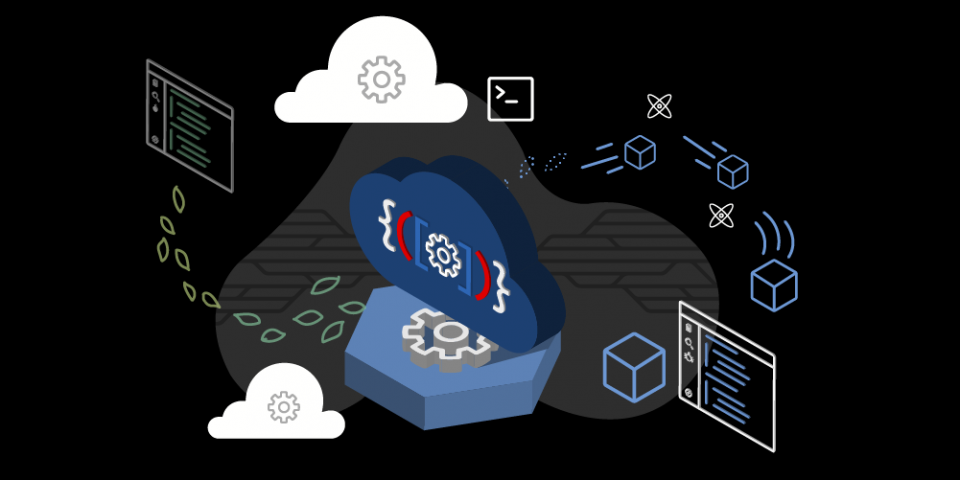

Zero-Trust Model Gains Luster Following Azure Security Flaw

In light of this coming tsunami, enterprises need to rethink their security

strategies to embrace zero-trust and identity-based authentication. Both of

those strategies are ones that experts recommend for dealing with risks like

those posed by the ChaosDB vulnerability. And they will help prepare enterprises

for future problems of the same kind, where much of the underlying architecture

and processes are out of their control. "The cloud provider can become a single

point of failure," said Dan Petro, lead researcher at security testing firm

Bishop Fox. And as the industry moves even further toward serverless

infrastructure, vulnerabilities like ChaosDB are likely to increase in

occurrence and severity, he told Data Center Knowledge. "Anytime we have these

highly visible, high-profile weaknesses, attackers are going to notice that, and

it's going to inspire similar attacks, similar offensive research," said Mark

Orlando, co-founder and CEO at Bionic Cyber; security operations instructor at

the SANS Institute; and former security team manager at the Pentagon, the White

House and the Department of Energy.

The common vulnerabilities leaving industrial systems open to attack

According to the research, industrial systems are especially open to attack when

there’s a low level of protection around an external network perimeter that is

accessible from the internet. Device misconfigurations and flaws in network

segmentation and traffic filtering are also leaving the industrial sector

particularly vulnerable. Lastly, the report also cites the use of outdated

software and dictionary passwords as risky vulnerabilities. To uncover these

insights, the researchers set out to actually imitate hackers and see what path

they’d take to gain access. “When analyzing the security of companies’

infrastructure, Positive Technologies experts look for vulnerabilities and

demonstrate the feasibility of attacks by simulating the actions of real

hackers,” reads the report. “In our experience, most industrial companies have a

very low level of protection against attacks.” Once inside the internal network,

Positive Technologies found that attackers can obtain user credentials and full

control over the infrastructure in 100% of cases.

8 must-ask security analyst interview questions

For those who excel in cybersecurity, their interest in the topic is not a

9-to-5 thing; it’s a passion that pervades their everyday lives. To find out if

that’s the case, Lindemoen likes to ask about the candidates’ home network

setup. “I look for whether they’re using WPA2 vs. WPA and WEP and whether they

set up a separate network for when guests use their home wireless network,” he

says. “They’re simple things, but it provides some insight into how they think

about security in their personal lives.” Lindemoen also asks about which

cybersecurity conferences they’d most like to attend if they could, and why.

Rather than naming a well-known conference, “they might mention one that’s in a

niche they’re focused on or are truly passionate about.” Participation in

capture-the-flag (CTF) and other cyber calisthenics events and activities is

another good barometer, Glavach says. Because these programs are free, they can

be even better at revealing passion than costly certifications are. “If

there’s a candidate with no certifications but they participated in CTFs similar

to a DEFCON CTF or a SANS Holiday Hack, that shows me they’re very committed,”

he says.

10 Most Practical Data Science Skills You Should Know in 2022

It’s one thing to build a visually stunning dashboard or an intricate model with

over 95% accuracy. BUT if you can’t communicate the value of your projects to

others, you won’t get the recognition that you deserve, and ultimately, you

won’t be as successful in your career as you should. Storytelling refers to

“how” you communicate your insights and models. Conceptually, if you were to

think about a picture book, the insights/models are the pictures and the

“storytelling” refers to the narrative that connects all of the pictures.

Storytelling and communication are severely undervalued skills in the tech

world. From what I’ve seen in my career, this skill is what separates juniors

from seniors and managers. ... A/B testing is a form of experimentation where

you compare two different groups to see which performs better based on a given

metric. A/B testing is arguably the most practical and widely-used statistical

concept in the corporate world. Why? A/B testing allows you to compound 100s or

1000s of small improvements, resulting in significant changes and improvements

over time.
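As a rough illustration of the comparison described above, here is a minimal sketch of a two-proportion z-test, one common way to decide whether group B really outperforms group A on a conversion metric. The function name, the visitor counts and the choice of test are assumptions for illustration; the article does not prescribe a specific method.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, nothing to distinguish
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical experiment: 1,000 visitors per variant.
z, p = two_proportion_z_test(conv_a=100, n_a=1000, conv_b=150, n_b=1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the difference is unlikely to be noise. On the compounding point: a hundred successive 1% gains multiply to roughly 2.7 times the starting value (`1.01 ** 100`), which is why many small wins add up.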

How To Address Bias-Variance Tradeoff in Machine Learning

Bias and variance are inversely related, and in practice it is nearly impossible

to have an ML model with both low bias and low variance. When we

modify the ML algorithm to better fit a given data set, it will in turn lead to

low bias but will increase the variance. This way, the model will fit with the

data set while increasing the chances of inaccurate predictions. The same

applies while creating a low variance model with a higher bias. Although it will

reduce the risk of inaccurate predictions, the model will not properly match the

data set. Hence there is a delicate balance between bias and variance. But

having a higher variance does not indicate a bad ML algorithm. Machine learning

algorithms should be created accordingly so that they are able to handle some

variance. Underfitting occurs when a model is unable to capture the underlying

pattern of the data. Such models usually present with high bias and low

variance. It happens when we have very little data to build a model or when we

try to fit a linear model to nonlinear data.
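The tradeoff can be made concrete with a small experiment. The sketch below fits two deliberately extreme models to noisy quadratic data: a straight line (high bias, underfits) and a 1-nearest-neighbour lookup (low bias, high variance, memorizes the training set). The data generator, noise level and choice of models are assumptions made for illustration and do not come from the article.

```python
import random


def fit_line(xs, ys):
    """Ordinary least-squares straight line (a high-bias model here)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return lambda x: my + slope * (x - mx)


def fit_1nn(xs, ys):
    """1-nearest-neighbour: returns the label of the closest training point."""
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]


def mse(model, xs, ys):
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def make_data(n, rng, noise=0.5):
    """Nonlinear ground truth y = x^2 plus Gaussian noise."""
    xs = [rng.uniform(-3, 3) for _ in range(n)]
    ys = [x * x + rng.gauss(0, noise) for x in xs]
    return xs, ys


rng = random.Random(0)
train_x, train_y = make_data(40, rng)
test_x, test_y = make_data(200, rng)

errors = {}
for name, fit in [("line", fit_line), ("1-NN", fit_1nn)]:
    model = fit(train_x, train_y)
    errors[name] = (mse(model, train_x, train_y), mse(model, test_x, test_y))
    print(f"{name:5s} train MSE = {errors[name][0]:.2f}  test MSE = {errors[name][1]:.2f}")
```

The line has a large error even on its own training data, which is the signature of underfitting. The 1-NN model has zero training error but a nonzero test error, because it has also memorized the noise. The gap between its training and test error is the variance side of the tradeoff.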

The benefits of Bare-Metal-as-a-Service for fintech

Dedicated servers are a better fit for resource-heavy apps. In the world of

financial services, there are a lot of transactions going on. Virtual machines are

not the best choice for such an environment, since the “virtualisation tax”

prevents you from using 100% of their capacity. Another issue is the

distribution of the platform’s resources between users – when one of them uses

too much of the server’s capacity, their neighbours pay for it. ... Bare metal

solutions are often harder to order than a virtual machine, and you must wait

longer for the server to be prepared for operation. Another issue is the

management of the disparate infrastructure of dedicated servers, virtual

machines and clouds when purchased from different providers. G-Core Labs’ new

offering, Bare-Metal-as-a-Service, solves these problems. With this service, a

user can get a ready-for-use dedicated server as easily as a virtual one. Just

select the right features, connect a private or public network, or several

networks at once, and in a few minutes, the physical server will be ready for

use.

Israel’s fintech community readies for ‘dramatic’ changes in banking sector

The first calls for establishing “a unique regulatory sandbox” for fintech

companies in which regulators will monitor their activities while hedging their

risks, and allowing them to introduce products into the Israeli market to

benefit consumers. The regulatory system proposal was coordinated by an

inter-ministerial team led by the Justice and Finance ministries and included

representatives from the Securities Authority, the Bank of Israel (BOI), the

Capital Market Authority, the Anti-Money Laundering and Terrorist Financing

Authority, and the Tax Authority. The second proposal — the one watched closely

by Israeli fintech startups and the legacy banks — requires banks and financial

entities to transfer information about their customers, with the customers’

approval, to technology firms that can provide these customers with information

about the financial services they consume, how much exactly they are paying for

them and how much they could save if they switch to another financial services

provider.

5 Surefire Things That’ll Get You Targeted by Ransomware

Using a password manager has become a common practice for many, but it seems

like there are a lot of people who unfortunately still don’t understand the

risks. There are some valid concerns with using password managers in

general—like losing access to your master file, having it fall into the wrong

hands, or the issue with hosted services where your passwords are hosted by a

third party. But all of those are minor compared to the issues that you’re

bringing about by reusing passwords as an alternative. Sure, it’s convenient.

But as soon as one of your accounts is compromised, you’re going to run into a

lot of trouble on many fronts. And this happens more often than you might think;

companies get attacked regularly, and credentials are leaked as a

result. ... As an extension to the above, watch out for the kinds of

contacts you make online. People might not be who they claim, and you should

always keep an eye open for potential shady intentions. When you combine this

with some of the above points, things can get quite scary. Some people might

target you because they’ve gathered information about you from other sources,

and they can make the whole interaction seem very natural and legitimate.

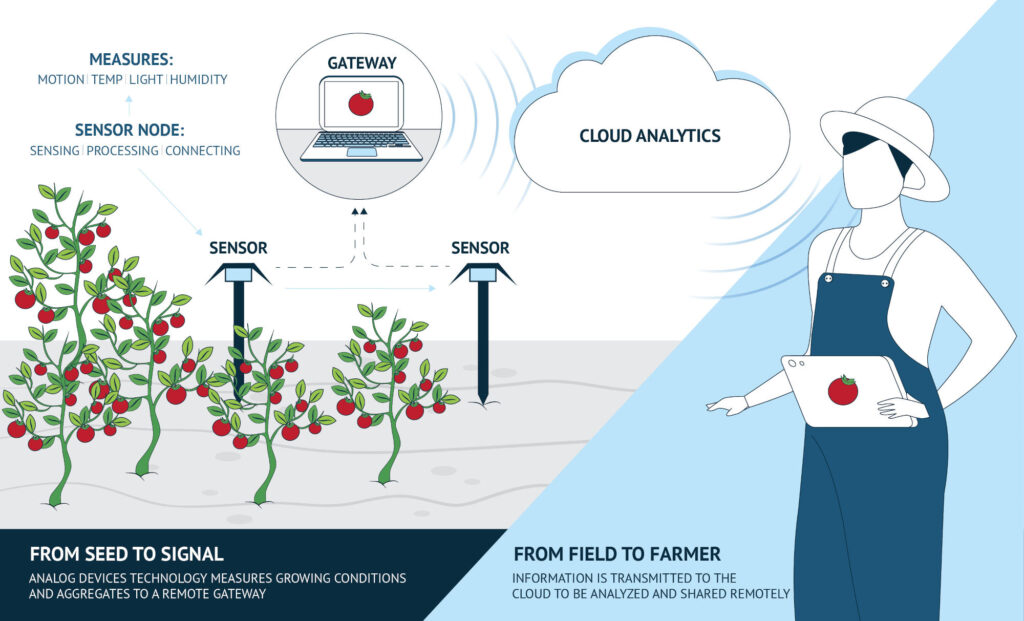

Utilising digital skills to tackle climate change

Upskilling is crucial to the major transition that the energy industry is

currently going through. A 2020 report by EY on Oil and Gas Digital

Transformation found that 43% of respondents cite “too few workers with the

right skills in the current workforce” as a major challenge to digital

technology adoption. Upskilling will not only equip workers with new skills but

also enable organisations to reach their digital transformation goals. By

embracing the rapid change of innovation with upskilling, employers can take a

proactive and agile approach to keep workforces engaged and employees focused on

their own personal development. That is not to say the skills that current workers

hold are not useful for today’s needs, as many in energy industries possess

transferable skills. Workers typically possess foundational knowledge in STEM

fields and soft skills which can be integrated seamlessly into newer

applications. For example, skills in the oil, gas and coal sectors can be

brought into the growing renewable energy sector, offering a huge rise in job

opportunities.

Quote for the day:

"Becoming a leader is synonymous with

becoming yourself. It is precisely that simple, and it is also that

difficult." -- Warren G. Bennis
