Why VMware’s Tom Gillis Calls APIs ‘the Future of Networking’

Gillis says there are three steps to building “world-class security” in the data center. Step one is software-based segmentation, which can be as simple as separating the production environment from the development environment. “And then you want to make those segments smaller and smaller until we get them down to per-app segmentation, which we call microsegmentation,” he explained. Step two requires visibility into in-band network traffic. “We’re gonna go through on a flow-by-flow basis, and starting looking at, okay, this one is legitimate, and this one is WannaCry, and being able to figure that out using a distributed architecture,” Gillis said. Step three involves the ability to do anomaly detection, which allows analysts to find unknown threats as attackers continually change their tactics. “Most security-conscious companies do this with a network TAP,” Gillis says. These test access points (TAPs) let companies access and monitor network traffic by making copies of the packets. However, deploying all of these network TAPs and storing the copies in a data lake becomes “very cumbersome, very operationally deficient,” Gillis said.
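As a rough illustration of step one, the sketch below models a default-deny policy at two granularities: a coarse environment segment (production vs. development) and a finer per-app allow-list, which corresponds to the microsegmentation Gillis describes. The app names, ports, and the `SEGMENT_OF` and `APP_RULES` tables are hypothetical, and this is not VMware's API or policy model.

```python
from typing import NamedTuple


class Flow(NamedTuple):
    src_app: str
    dst_app: str
    port: int


# Hypothetical coarse segmentation: which environment each app belongs to.
SEGMENT_OF = {
    "web": "prod",
    "api": "prod",
    "db": "prod",
    "ci-runner": "dev",
}

# Hypothetical per-app allow-list (microsegmentation): any flow not listed is denied.
APP_RULES = {
    ("web", "api", 443),
    ("api", "db", 5432),
}


def same_segment(flow: Flow) -> bool:
    """Coarse check: both endpoints must be known and in the same environment."""
    src = SEGMENT_OF.get(flow.src_app)
    dst = SEGMENT_OF.get(flow.dst_app)
    return src is not None and src == dst


def permit(flow: Flow) -> bool:
    """Default deny: a flow passes only if it stays in its segment AND matches a per-app rule."""
    return same_segment(flow) and (flow.src_app, flow.dst_app, flow.port) in APP_RULES
```

For example, `permit(Flow("web", "db", 5432))` returns `False` even though both apps sit in the production segment, because no per-app rule allows that flow; the coarse segment check alone would have let it through.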

'FragAttacks' eavesdropping flaws revealed in all Wi-Fi devices

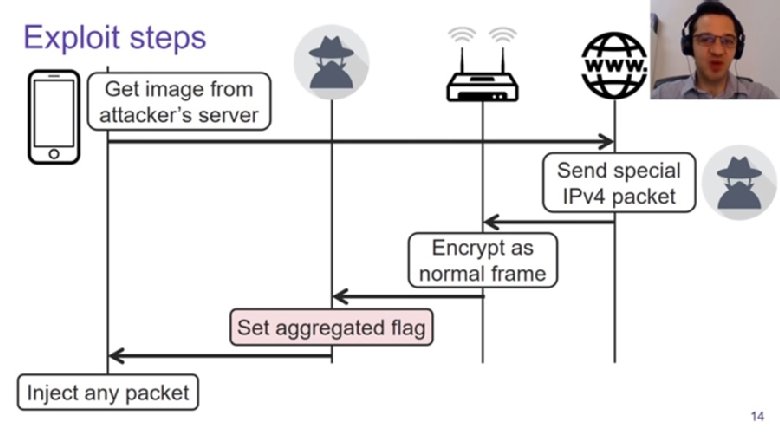

To be clear, these attacks would require the threat actor to be on the local network alongside the targets; these are not remotely exploitable flaws that could, for instance, be embedded in a webpage or phishing email. The attacker would have to be on a public Wi-Fi network, have gained access to a private network by obtaining its password, or have tricked their mark into connecting to a rogue access point. Thus far, there have been no reports of the vulnerabilities being exploited in the wild. Vanhoef opted to hold the public disclosure until vendors could be briefed and given time to patch the bugs. So far, at least 25 vendors have posted updates and advisories. Both Microsoft and the Linux Kernel Organization were warned ahead of time, and users can protect themselves by updating to the latest version of their operating systems. In a presentation set for the USENIX Security conference, Vanhoef explained how, by manipulating the unauthenticated "aggregated" flag in a frame, an attacker can slip packets into the frame that the target machine will then process. This could, for example, allow an attacker to redirect a victim to a malicious DNS server.
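To make the "aggregated" flag more concrete: in 802.11 it is the A-MSDU Present bit of a frame's QoS Control field, and when it is set the receiver splits the decrypted payload into subframes, each carrying a destination address, a source address, and a 2-byte length. The minimal sketch below assumes that standard subframe layout and uses hypothetical function names; it is not Vanhoef's proof-of-concept code. It shows why an unauthenticated flag matters: the same bytes are handed up as one packet or as several packets depending only on that bit.

```python
import struct
from typing import List, Tuple

Subframe = Tuple[bytes, bytes, bytes]  # (destination MAC, source MAC, payload)

HEADER_LEN = 14  # 6-byte DA + 6-byte SA + 2-byte big-endian length


def build_subframe(dst: bytes, src: bytes, msdu: bytes, pad: bool = True) -> bytes:
    """Encode one A-MSDU subframe; every subframe but the last is padded to 4 bytes."""
    raw = dst + src + struct.pack(">H", len(msdu)) + msdu
    if pad:
        raw += b"\x00" * (-len(raw) % 4)
    return raw


def parse_amsdu(payload: bytes) -> List[Subframe]:
    """Split a decrypted payload into subframes, as a receiver does when the flag is set."""
    subframes = []
    offset = 0
    while offset + HEADER_LEN <= len(payload):
        dst = payload[offset:offset + 6]
        src = payload[offset + 6:offset + 12]
        (length,) = struct.unpack(">H", payload[offset + 12:offset + HEADER_LEN])
        start = offset + HEADER_LEN
        end = start + length
        if end > len(payload):
            break  # truncated subframe: stop rather than read past the buffer
        subframes.append((dst, src, payload[start:end]))
        offset = end + (-(HEADER_LEN + length) % 4)  # skip padding to the next 4-byte boundary
    return subframes


def receive(payload: bytes, amsdu_flag: bool) -> List[bytes]:
    """Return the packets handed up the stack; the flag alone decides the interpretation."""
    if amsdu_flag:
        return [msdu for _, _, msdu in parse_amsdu(payload)]
    return [payload]
```

With the flag cleared, `receive(payload, False)` yields the whole buffer as a single packet; with it set, the same bytes can yield several packets. The sketch leaves out how an attacker gets crafted bytes into a legitimately encrypted frame in the first place.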

The state of digital transformation in Indonesia

Indonesian firms most often face people-related challenges in their digital transformation. A lack of technology skills and knowledge, along with a shortage of available employees, indicates a critical talent crunch. Firms may already be paying a price for not putting employee experience higher on their business agenda. Besides data issues, challenges also include securing the digital transformation: only 17% of firms in Indonesia are currently adopting a zero-trust strategic cybersecurity framework. Fewer than 20% mention securing budget and funding for digital transformation as a key challenge. This indicates that early successes are recognized in boardrooms and by executive leaders, and that the vast majority of firms in Indonesia have prepared their transformation budgets well. Indonesian firms face fewer budget challenges for digital transformation than their peers in other markets. Firms are also prioritizing cloud and are building new applications and services primarily on public cloud. Tech executives in Indonesia therefore face their most immediate challenges around people, skills, and culture. Upskilling, retention, and aligning employee priorities with digital transformation are crucial for ongoing success, and firms must act immediately. Agile has successfully taken hold in IT organizations, but tech executives must take the lead and collaborate across lines of business to drive adaptiveness across the organization.

Law firms are building A.I. expertise as regulation looms

Just because A.I. is an emerging area of law doesn’t mean there aren’t plenty of ways companies can land in legal hot water today using the technology. He says this is particularly true if an algorithm winds up discriminating against people based on race, sex, religion, age, or ability. “It’s astounding to me the extent to which A.I. is already regulated and people are operating in gleeful bliss and ignorance,” he says. Most companies have been lucky so far: enforcement agencies have generally had too many other priorities to take too hard a look at more subtle cases of algorithmic discrimination, such as a chatbot that might steer certain white customers and Black customers to different car insurance deals, Hall says. But he thinks that is about to change, and that many businesses are in for a rude awakening. Working with Georgetown University’s Center for Security and Emerging Technology and the Partnership on A.I., Hall was among the researchers who have helped document 1,200 publicly reported cases of A.I. “system failures” in just the past three years. The consequences have ranged from people being killed, to false arrests based on facial recognition systems misidentifying people, to individuals being excluded from job interviews.

BRD’s Blockset unveils its white-label cryptocurrency wallet for banks

“The concept is really a result of learnings from working with our customers, tier one financial institutions, who need a couple things,” Traidman told TechCrunch. “Generally they want to custody crypto on behalf of their customers. For example, if you’re running an ETF, like a Bitcoin ETF, or if you’re offering customers buying and selling, you need a way to store the crypto, and you need a way to access the blockchain.” “The Wallet-as-a-Service is the nomenclature we use to talk about the challenge that customers are facing, whereby blockchain is really complex,” he added. “There are three V’s that I talk about: variety, a lot of velocity because there’s a lot of transactions per second, and volume because there’s a lot of total aggregate data.” Blockset also enables clients to add features like trading crypto or fiat, or lending Bitcoin or stablecoins to take advantage of high interest rates. Enterprises can develop and integrate their own solutions or work with Blockset’s partners. Other companies that offer enterprise blockchain infrastructure include Bison Trails, which was recently acquired by Coinbase, and Galaxy Digital.

Democratize Machine Learning with Customizable ML Anomalies

Customizable machine learning (ML)-based anomalies for Azure Sentinel are now available in public preview. Security analysts can use anomalies to reduce investigation and hunting time as well as to improve their detections. Typically, these benefits come at the cost of a high benign positive rate, but Azure Sentinel’s customizable anomaly models are tuned by our data science team and trained with the data in your Sentinel workspace to minimize the benign positive rate, providing out-of-the-box value. If security analysts need to tune them further, however, the process is simple and requires no knowledge of machine learning. ... A new rule type called “Anomaly” has been added to Azure Sentinel’s Analytics blade. The customizable anomalies feature provides built-in anomaly templates for immediate value. Each anomaly template is backed by an ML model that can process millions of events in your Azure Sentinel workspace. You don’t need to worry about managing the ML run-time environment for anomalies because we take care of everything behind the scenes. In public preview, all built-in anomaly rules are enabled by default in your workspace.
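The trade-off between catching anomalies and keeping the benign positive rate down usually comes down to a threshold that analysts can tune. The toy sketch below is not Azure Sentinel's model or API; it assumes a simple z-score against a baseline of hypothetical hourly event counts, and the `threshold` parameter stands in for the tuning knob.

```python
import statistics
from typing import List, Sequence


def anomaly_scores(baseline: Sequence[float], observations: Sequence[float]) -> List[float]:
    """Score each observation by how many standard deviations it sits from the baseline mean."""
    mean = statistics.mean(baseline)
    spread = statistics.pstdev(baseline) or 1.0  # avoid dividing by zero on a flat baseline
    return [abs(x - mean) / spread for x in observations]


def flag_anomalies(
    baseline: Sequence[float], observations: Sequence[float], threshold: float = 3.0
) -> List[int]:
    """Return the indices of observations whose score exceeds the threshold."""
    scores = anomaly_scores(baseline, observations)
    return [i for i, score in enumerate(scores) if score > threshold]
```

With a baseline of `[10, 12, 11, 9, 10, 12, 11, 10]` sign-ins per hour, `flag_anomalies(baseline, [11, 30])` returns `[1]`, flagging only the spike. Raising `threshold` produces fewer benign positives at the cost of a higher chance of missing a real anomaly.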


How to stop AI from recognizing your face in selfies

Most of the tools, including Fawkes, take the same basic approach. They make tiny changes to an image that are hard to spot with the human eye but throw off an AI, causing it to misidentify who or what it sees in a photo. This technique is very close to a kind of adversarial attack, where small alterations to input data can force deep-learning models to make big mistakes. Give Fawkes a bunch of selfies and it will add pixel-level perturbations to the images that stop state-of-the-art facial recognition systems from identifying who is in the photos. Unlike previous ways of doing this, such as wearing AI-spoofing face paint, it leaves the images apparently unchanged to humans. Wenger and her colleagues tested their tool against several widely used commercial facial recognition systems, including Amazon’s AWS Rekognition, Microsoft Azure, and Face++, developed by the Chinese company Megvii Technology. In a small experiment with a data set of 50 images, Fawkes was 100% effective against all of them, preventing models trained on tweaked images of people from later recognizing those people in fresh images.
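The adversarial-attack idea can be shown on a toy model. The sketch below applies a one-step, sign-of-the-gradient perturbation (in the style of the fast gradient sign method) to a hypothetical linear "recognizer" with four pixel inputs: each pixel moves by at most `epsilon`, yet the score flips sign. The weights, pixels, and decision rule are made up, and this is not how Fawkes computes its perturbations; it only illustrates why small, bounded changes can mislead a model.

```python
from typing import List, Sequence


def score(weights: Sequence[float], bias: float, pixels: Sequence[float]) -> float:
    """Toy linear recognizer: a positive score means 'this is the enrolled person'."""
    return sum(w * p for w, p in zip(weights, pixels)) + bias


def sign(value: float) -> int:
    return (value > 0) - (value < 0)


def perturb(weights: Sequence[float], pixels: Sequence[float], epsilon: float) -> List[float]:
    """One gradient-sign step that lowers the score, keeping pixels in [0, 1].

    For a linear score the gradient with respect to each pixel is just its weight,
    so stepping against sign(weight) lowers the score by at most epsilon * sum(|w|).
    """
    return [min(1.0, max(0.0, p - epsilon * sign(w))) for w, p in zip(weights, pixels)]
```

With weights `[0.5, -0.3, 0.8, -0.6]`, bias `-0.2`, pixels `[0.6, 0.4, 0.5, 0.5]`, and `epsilon=0.05`, the score drops from roughly 0.08 to roughly -0.03, even though no pixel changes by more than 0.05. Real images have far more pixels, so an even smaller per-pixel change can add up to a large shift in a model's output.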

Agile Transformation: Bringing the Porsche Experience into the Digital Future with SAFe

Agile means, in fact, many things, but above all, it is a shared commitment. What really matters are the underlying values such as openness, self-commitment, and focus. Not to forget the main principles behind agile work: customer orientation, embracing change and continuous improvement, empowerment and self-organization, simplicity, and transparency. In other words, what we learned quite early on is the importance of establishing not only ambitious goals but also a shared vision across teams. That requires bringing together different goals and building alignment around a common purpose. Furthermore, we have learned that it is important to focus on incremental change. We now focus on a small number of topics and pursue them persistently. Transformation takes time. Lifelong learning also means that change is an ongoing process that never ends. Sometimes change may be hard, but we are not alone. It affects many areas outside the Digital Product Organization, and it is essential that we take others along on the journey. Finally, it is important to keep in mind that successful and long-lived companies are usually the ones that learn to be agile and stable at the same time.

Recruiting and retaining diverse cloud security talent

The first step to encouraging more diversity within the cyber security workforce is representation. Businesses need to look at their teams and collaborate with their community and industry to create a platform that will inspire individuals to enter industries they may not have considered before. For example, company representatives at events act as role models, and their individual passion can be a strong inspiration and draw for a wide range of candidates. For this reason, it’s vital that security and cloud teams – and in particular members from diverse backgrounds – have a voice on traditional media and social platforms. Diverse voices should be seen and heard in newspapers, on corporate blogs, and in broadcast, where they can share insight into their careers and expertise, encouraging new talent to join the industry and their business specifically. Similarly, mentorship programmes help businesses to attract and retain talent. For those moving into the industry, changing companies, or transitioning into a new role, a mentor provides support and the comfort of representation, and helps showcase their achievements.

3 areas of implicitly trusted infrastructure that can lead to supply chain compromises

Once the server a software repository is hosted on is compromised, an attacker can do just about anything with the repositories on that machine if the repository’s users are not signing their git commits. Signing commits works much like author-signed packages from package repositories, but brings that authentication down to the level of the individual code change. To be effective, this requires every user of the repository to sign their commits, which is a weighty burden from a user perspective. PGP is not the most intuitive of tools and will likely require some user training to implement, but it’s a necessary trade-off for security. Signed commits are the one and only way to verify that commits are coming from the original developers, and the training and inconvenience are worth it if you want to prevent malicious committers from masquerading as developers. Signed commits would also have made the HTTPS-based commits to the PHP project’s repository immediately suspicious. They do not, however, solve every problem, as a compromised server hosting a repository can allow the attacker to inject themselves at several points in the commit process.

Quote for the day:

"Leadership is absolutely about inspiring action, but it is also about guarding against mis-action." -- Simon Sinek