Outbound Email Errors Cause 93% Increase in Breaches

Egress CEO Tony Pepper said the problem is only going to get worse with

increased remote working and higher email volumes, which create prime

conditions for outbound email data breaches of a type that traditional DLP

tools simply cannot handle. “Instead, organizations need intelligent

technologies, like machine learning, to create a contextual understanding of

individual users that spots errors such as wrong recipients, incorrect file

attachments or responses to phishing emails, and alerts the user before they

make a mistake,” he said. The most common breach types were replying to

spear-phishing emails (80%), sending emails to the wrong recipients (80%) and

sending the incorrect file attachment (80%). Speaking to Infosecurity, Egress

VP of corporate marketing Dan Hoy said businesses reported an increase in

outbound emails since lockdown, “and more emails mean more risk.” He called

this a numbers game which has increased risk as remote workers are more

susceptible and likely to make mistakes the more they are removed from

security and IT teams. According to the research, 76% of breaches were caused

by “intentional exfiltration.” Hoy said this covers employees who innocently

send files to webmail accounts while trying to do their job, with no intent

to cause harm, but this does increase risk “and you cannot ignore

the malicious intent.”
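
The wrong-recipient check described above can be illustrated with a very small sketch. It is not Egress's method and uses no machine learning: it simply compares an outgoing address against a user's known contacts and warns on near-misses. The function name `check_recipient`, the `cutoff` value and the sample addresses are all assumptions made for illustration.

```python
from difflib import get_close_matches
from typing import Iterable, Optional


def check_recipient(recipient: str,
                    known_contacts: Iterable[str],
                    cutoff: float = 0.85) -> Optional[str]:
    """Return a warning string, or None when the recipient is already known.

    Hypothetical helper: a string-similarity heuristic standing in for the
    contextual, per-user model the article describes.
    """
    address = recipient.strip().lower()
    known = sorted({c.strip().lower() for c in known_contacts})
    if address in known:
        return None
    # A near-match to a known contact suggests a typo or autocomplete slip.
    near = get_close_matches(address, known, n=1, cutoff=cutoff)
    if near:
        return f"{address} is not a known contact; did you mean {near[0]}?"
    return f"{address} is a first-time recipient; please confirm before sending."


if __name__ == "__main__":
    contacts = ["alice@example.com", "bob@example.com"]
    print(check_recipient("alcie@example.com", contacts))
```

The point of the sketch is the timing: the warning is produced before the message leaves, which is where the article argues traditional DLP tools fall short.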

‘The demand for cloud computing & cybersecurity professionals is on the rise’

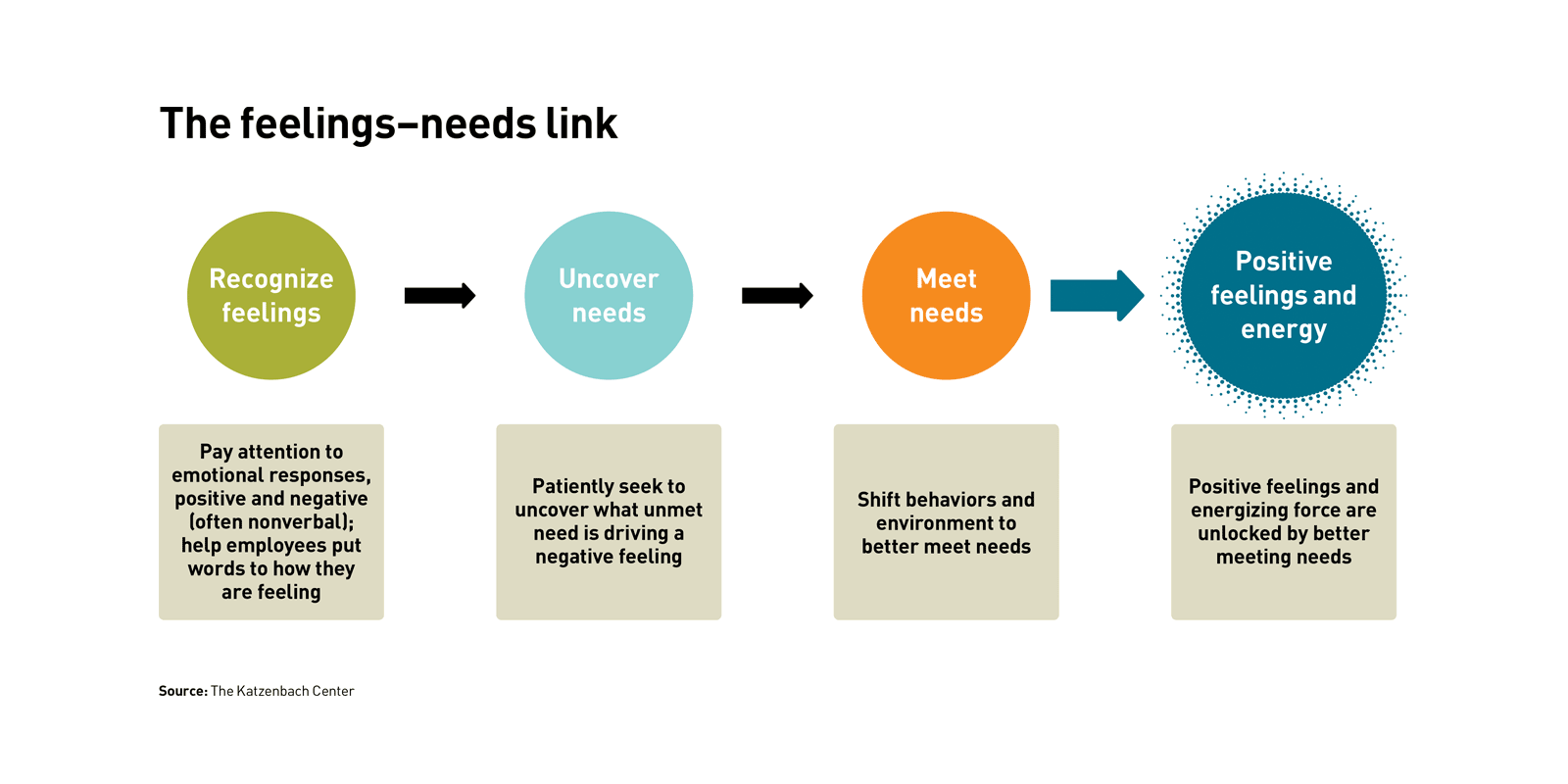

The COVID-19 pandemic has undoubtedly disrupted the normalcy of every company

across every sector. At Clumio, our primary focus continues to be the health

and well-being of our people. While tackling the situation, we also need to

keep pace with our professional duties. We made the transition to remote work

immediately and are in constant touch with our employees to ensure they don’t

feel isolated and remain focused on their work. We are encouraging employees

to follow the best practices of remote work and motivating them to spend time

on their emotional, mental and physical wellbeing during this time. We conduct

Zoom happy hours frequently to stay connected and have fun. As part of the

session, we also celebrated a virtual baby shower for one of our colleagues

recently. We had our annual summer picnic and created wonderful memories while

maintaining social distance, but staying together. During this time, we

have also launched the India Research and Development center in Bangalore. Our

India Center will drive front-end innovation and research to build cloud

solutions. India has a huge talent pool in technology, and it is only growing.

We have also started virtual hiring and onboarding during the pandemic.

AI investment to increase but challenges remain around delivering ROI

ROI on AI is still a work in progress that requires a focus on strategic

change. As companies progress in AI use, they often shift their focus from

automating internal employee and customer processes to delivering on

strategic goals. For example, 31% of AI leaders report increased revenue,

22% greater market share, 22% new products and services, 21% faster

time-to-market, 21% global expansion, 19% creation of new business models,

and 14% higher shareholder value. In fact, the AI-enabled functions showing

the highest returns are all fundamental to rethinking business strategies

for a digital-first world: strategic planning, supply chain management,

product development, and distribution and logistics. The study found that

automakers are at the forefront of AI excellence, as they accelerate AI

adoption to deliver on every part of their business strategy, from upgrading

production processes and improving safety features to developing

self-driving cars. Of the 12 industries benchmarked in the study, automotive

employs the largest AI teams. With the government actively supporting AI

under its Society 5.0 program, Japanese companies lead the pack in AI

adoption.

The future of .NET Standard

.NET 5 and all future versions will always support .NET Standard 2.1 and

earlier. The only reason to retarget from .NET Standard to .NET 5 is to gain

access to more runtime features, language features, or APIs. So, you can

think of .NET 5 as .NET Standard vNext. What about new code? Should you

still start with .NET Standard 2.0 or should you go straight to .NET 5? It

depends. App components: If you’re using libraries to break down your

application into several components, my recommendation is to use netX.Y

where X.Y is the lowest version of .NET that your application (or

applications) is targeting. For simplicity, you probably want all projects

that make up your application to be on the same version of .NET because it

means you can assume the same BCL features everywhere. Reusable libraries:

If you’re building reusable libraries that you plan on shipping on NuGet,

you’ll want to consider the trade-off between reach and available feature

set. .NET Standard 2.0 is the highest version of .NET Standard that is

supported by .NET Framework, so it will give you the most reach, while also

giving you a fairly large feature set to work with. We’d generally recommend

against targeting .NET Standard 1.x as it’s not worth the hassle

anymore.

Fintech sector faces "existential crisis" says McKinsey

After growing more than 25% a year since 2014, investment into the sector

dropped by 11% globally and 30% in Europe in the first half of 2020, says

McKinsey, citing figures from Dealroom. In July 2020, after months of

Covid-19-related lockdowns in most European countries, the drop was even

steeper, 18% globally and 44% in Europe, versus the previous year. "This

constitutes a significant challenge for fintechs, many of which are still

not profitable and have a continuous need for capital as they complete their

innovation cycle: attracting new customers, refining propositions and

ultimately monetizing their scale to turn a profit," states the McKinsey

paper. "The Covid-19 crisis has in effect shortened the runway for many

fintechs, posing an existential threat to the sector." Analyzing fundraising

data for the last three years from Dealroom, the consultancy found that as

much as €5.7 billion will be needed to sustain the EU fintech sector through

the second half of 2021 — a point at which some sort of economic normalcy

might begin to emerge. It is not clear where these funds will come from,

however. Fintechs are largely unable to access loan bailout schemes due to

their pre-profit status.

Artificial Intuition: A New Generation of AI

Artificial intuition is an easy term to misread because it sounds like

artificial emotion and artificial empathy. It is, however, fundamentally

different. Researchers are working on artificial emotion so that

machines can mimic human behavior more accurately. Artificial

empathy aims to discern a human’s perspective in real time, so that,

for instance, chatbots, virtual assistants and care robots can respond

to people more appropriately in context. Artificial intuition is more

like human instinct, because it can rapidly assess the whole of a

situation, including very subtle indicators of specific

activity. Artificial intuition is the fourth generation of AI; it enables

computers to identify threats and opportunities without being told

what to look for, just as human instinct allows us to make

decisions without being told explicitly how to do so. It is like a seasoned

detective who can enter a crime scene and know immediately that

something doesn’t seem right, or an experienced investor who can

spot an emerging trend before anyone else.

Attacked by ransomware? Five steps to recovery

Arguably the most challenging step for recovering from a ransomware attack

is the initial awareness that something is wrong. It’s also one of the most

crucial. The sooner you can detect the ransomware attack, the less data may

be affected. This directly impacts how much time it will take to recover

your environment. Ransomware is designed to be very hard to detect. When you

see the ransom note, it may have already inflicted damage across the entire

environment. Having a cybersecurity solution that can identify unusual

behavior, such as abnormal file sharing, can help quickly isolate a

ransomware infection and stop it before it spreads further. Abnormal file

behavior detection is one of the most effective means of detecting a

ransomware attack and presents with the fewest false positives when compared

to signature-based or network traffic-based detection. One additional method

to detect a ransomware attack is to use a “signature-based” approach. The

issue with this method is that it requires the ransomware to be known. If the

code is available, software can be trained to look for that code. This is

not recommended, however, because sophisticated attacks are using new,

previously unknown forms of ransomware.
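
The difference between the two detection approaches can be sketched in a few lines. The sketch below is an illustration under stated assumptions, not a description of any vendor's product: the signature list, the entropy heuristic (encrypted data tends to look random) and the thresholds are all hypothetical choices made for the example.

```python
import hashlib
import math
from collections import Counter
from typing import Iterable

# Hypothetical signature list: SHA-256 digests of already-known payloads.
KNOWN_BAD_SHA256 = {hashlib.sha256(b"known-ransomware-sample").hexdigest()}


def matches_signature(payload: bytes) -> bool:
    """Signature-based check: only catches payloads that are already known."""
    return hashlib.sha256(payload).hexdigest() in KNOWN_BAD_SHA256


def shannon_entropy(data: bytes) -> float:
    """Entropy in bits per byte, from 0.0 (constant) up to 8.0 (uniform)."""
    if not data:
        return 0.0
    total = len(data)
    return -sum((n / total) * math.log2(n / total)
                for n in Counter(data).values())


def looks_like_mass_encryption(recent_writes: Iterable[bytes],
                               entropy_threshold: float = 7.5,
                               min_files: int = 3) -> bool:
    """Behavior-based check (assumed heuristic): flag a burst of recently
    written files whose contents are close to random, as encrypted data is."""
    suspicious = sum(1 for blob in recent_writes
                     if shannon_entropy(blob) >= entropy_threshold)
    return suspicious >= min_files
```

A new, previously unseen variant passes `matches_signature` unnoticed, while the behavior check does not depend on knowing the code in advance. Real products combine many more signals than file entropy alone.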

Struggling to Secure Remote IT? 3 Lessons from the Office

To prepare for the arrival of CCPA, business leaders told us they spent an

average of $81.9 million on compliance during the last 12 months. Yet

despite making investments in hiring (93%), workforce training (89%), and

purchasing new software or services to ensure compliance (95%), 40% still

felt unprepared for the evolving regulatory landscape. Why? Because the root

causes were not addressed. Perhaps their IT operations and security teams

worked in silos, creating complexity and narrowing their visibility into

their IT estates. Maybe their teams were completely unaware that other

departments introduced their own software into the environment. Or more

commonly, the organization used legacy tooling that wasn't plugged into the

endpoint management or security systems of the IT teams. These are just some

of the root causes that keep organizations in the dark and prone to

exploits. While the transition to remote work was swift, it has presented

businesses with an opportunity to face these issues head-on. As workforces

continue to work remotely, CISOs and CIOs now have the chance to evaluate

how they effectively manage risk in the long term, which includes running

continuous risk assessments and investing in solutions that deliver rapid

incident response and improved decision-making.

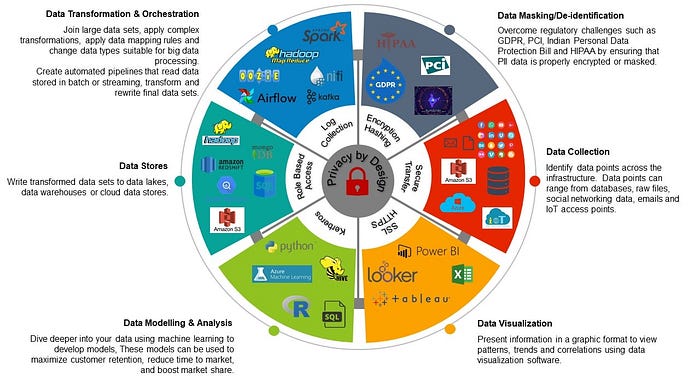

CTO challenges around the return to the workplace

Every CTO tells us that the digital transformation and change management

programmes designed to address the relentless regulatory, competitor,

innovation and customer challenges must go ahead as planned, regardless of the

pandemic. You may be tackling automating end-to-end electronic trading

workflows or creating mobile framework applications. Whatever the focus,

firms stumble over the limitations of legacy systems, which hamper the

journey towards electronification; trading desks still depend on quotes, orders

and trades being processed from a multitude of external trading platforms, and

inconsistency, lag and gaps all result in costly errors, which are missed

opportunities at best, and regulatory reporting breaches and huge fines at

worst. In the quest for efficiencies, mitigation of risk, and achieving

seamless and future-proofed IT architecture, firms must automate to meet their

regulatory obligations and deliver client, management and regulatory

transparency. And this hasn’t even touched on achieving an ambition to create

end-to-end, freely flowing models of perfectly clean, ordered and

well-governed data. Every CTO needs to apply extraction and visualisation

layers, and mine the data for valuable insights that can be fed further

upstream.

The Case for Explainable AI (XAI)

Despite the numerous benefits to developing XAI, many formidable challenges

persist. A significant hurdle, particularly for those attempting to establish

standards and regulations, is the fact that different users will require

different levels of explainability in different contexts. Models that are

deployed to effectuate decisions that directly impact human life, such as those

in hospitals or military environments, will produce different needs and

constraints than ones utilized in low-risk situations. There are also nuances

within the performance-explainability trade-off. Infrastructure and systems

designers are constantly balancing the demands of competing interests. ... There

are also a number of risks associated with explainable AI. Systems that produce

seemingly credible but actually incorrect results would be difficult to detect

for most consumers. Trust in AI systems can enable deception by way of those

very AI systems, especially when stakeholders provide features that purport to

offer explainability where they actually do not. Engineers also worry that

explainability could give rise to greater opportunities for exploitation by

malicious actors. Simply put, if it is easier to understand how a model converts

input into output, it is likely also easier to craft adversarial inputs that are

designed to achieve specific outputs.
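
The last point can be made concrete with a toy example. Assuming a fully transparent linear scoring model (the weights, bias and function names below are hypothetical), anyone who can read the weights can compute, in closed form, the smallest change to an input that produces a chosen score.

```python
from typing import List, Sequence


def score(weights: Sequence[float], bias: float, x: Sequence[float]) -> float:
    """Transparent linear model: the output is a weighted sum plus a bias."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def craft_input(weights: Sequence[float], bias: float,
                x: Sequence[float], target: float) -> List[float]:
    """Shift x along the weight vector until the model outputs `target`.

    This is the minimum-norm perturbation for a linear model, and it is
    only computable because the weights are known to the attacker.
    """
    norm_sq = sum(w * w for w in weights)
    if norm_sq == 0:
        raise ValueError("weights must not all be zero")
    step = (target - score(weights, bias, x)) / norm_sq
    return [xi + step * w for xi, w in zip(x, weights)]


if __name__ == "__main__":
    w, b = [2.0, -1.0], 0.5
    original = [0.0, 0.0]
    adversarial = craft_input(w, b, original, target=3.0)
    print(score(w, b, original), score(w, b, adversarial))
```

Against an opaque model the same attacker would have to probe for the decision boundary by trial and error, which is the trade-off the passage describes.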

Quote for the day:

"Great leaders go forward without stopping, remain firm without tiring and remain enthusiastic while growing" -- Reed Markham