Should States Ban Mandatory Human Microchip Implants?

“U.S. states are increasingly enacting legislation to pre-emptively ban

employers from forcing workers to be ‘microchipped,’ which entails having a

subdermal chip surgically inserted between one’s thumb and index finger,”

wrote the authors of the report. “Internationally, more than 50,000 people

have elected to receive microchip implants to serve as their swipe keys,

credit cards, and means to instantaneously share social media information.

This technology is especially popular in Sweden, where chip implants are more

widely accepted to use for gym access, e-tickets on transit systems, and to

store emergency contact information.” ... “California-based startup Science

Corporation thinks that an implant using living neurons to connect to the

brain could better balance safety and precision,” Singularity Hub wrote. “In

recent non-peer-reviewed research posted on bioRxiv, the group showed a

prototype device could connect with the brains of mice and even let them

detect simple light signals.” That same piece quotes Alan Mardinly, who is

director of biology at Science Corporation, as saying that the advantages of a

biohybrid implant are that it "can dramatically change the scaling laws of how

many neurons you can interface with versus how much damage you do to the

brain."

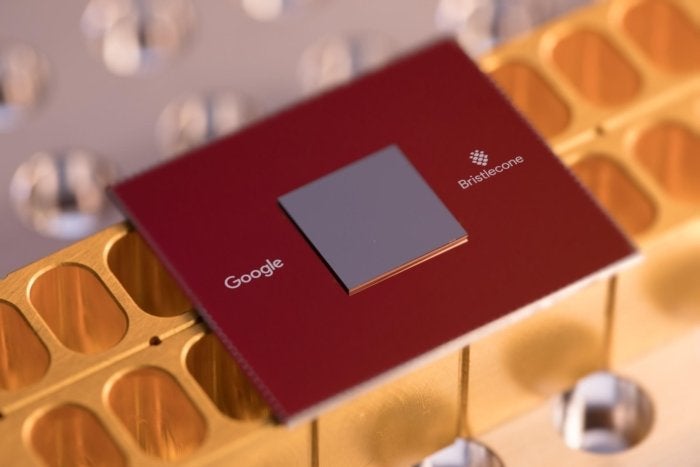

AI revolution drives demand for specialized chips, reshaping global markets

There’s now a shift toward smaller AI models that only use internal corporate

data, allowing for more secure and customizable genAI applications and AI

agents. At the same time, Edge AI is taking hold, because it allows AI

processing to happen on devices (including PCs, smartphones, vehicles and IoT

devices), reducing reliance on cloud infrastructure and spurring demand for

efficient, low-power chips. “The challenge is if you’re going to bring AI to

the masses, you’re going to have to change the way you architect your

solution; I think this is where Nvidia will be challenged because you can’t

use a big, complex GPU to address endpoints,” said Mario Morales, a group vice

president at research firm IDC. “So, there’s going to be an opportunity for

new companies to come in — companies like Qualcomm, ST Micro, Renesas,

Ambarella and all these companies that have a lot of the technology, but now

it’ll be about how to use it.” ... Enterprises and other organizations are also

shifting their focus from single AI models to multimodal AI, or LLMs capable

of processing and integrating multiple types of data or “modalities,” such as

text, images, audio, video, and sensory input. The input from diverse

resources creates a more comprehensive understanding of that data and enhances

performance across tasks.

How to Address an Overlooked Aspect of Identity Security: Non-human Identities

Compromised identities and credentials are the No. 1 tactic for cyber threat

actors and ransomware campaigns to break into organizational networks and

spread and move laterally. Identity is the most vulnerable element in an

organization’s attack surface because there is a significant misperception

around what identity infrastructure (IdP, Okta, and other IT solutions) and

identity security providers (PAM, MFA, etc.) can protect. Each solution only

protects the silo that it is set up to secure, not an organization’s complete

identity landscape, including human and non-human identities (NHIs),

privileged and non-privileged users, on-prem and cloud environments, IT and OT

infrastructure, and many other areas that go unmanaged and unprotected. ...

Most organizations use a combination of on-prem management tools, a mix of one

or more cloud identity providers (IdPs), and a handful of identity solutions

(PAM, IGA) to secure identities. But each tool operates in a silo, leaving

gaps and blind spots that invite more attacks. Eight out of 10

organizations cannot prevent the misuse of service accounts in real-time due

to visibility and security being sporadic or missing. NHIs fly under the radar

as security and identity teams sometimes don’t even know they exist.
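
One way to picture closing that visibility gap is to merge the inventories exported from each silo and flag non-human identities that nobody owns. The sketch below is only an illustration under stated assumptions: the `Identity` record, the silo labels, and the "no recorded owner" rule are hypothetical and do not come from any particular identity product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Identity:
    name: str
    source: str            # hypothetical silo label, e.g. "on-prem-ad" or "cloud-idp"
    is_human: bool
    owner: Optional[str]   # team or person accountable for the identity, if known


def unowned_nhis(inventories: list[list[Identity]]) -> list[Identity]:
    """Merge per-silo inventories and return non-human identities with no owner."""
    merged = [identity for inventory in inventories for identity in inventory]
    flagged = [i for i in merged if not i.is_human and not i.owner]
    # Sort by silo, then name, so the report is stable between runs.
    return sorted(flagged, key=lambda i: (i.source, i.name))


# Illustrative data only: two silos, each with its own partial view.
on_prem = [
    Identity("alice", "on-prem-ad", True, "alice"),
    Identity("svc-backup", "on-prem-ad", False, None),
]
cloud = [
    Identity("ci-deployer", "cloud-idp", False, "platform-team"),
    Identity("svc-reporting", "cloud-idp", False, None),
]

for identity in unowned_nhis([on_prem, cloud]):
    print(f"{identity.source}: {identity.name} has no recorded owner")
```

A real inventory would need far richer signals (last use, privileges, credential age); the point here is only that a cross-silo view is what makes the unowned service accounts visible at all.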

Version Control in Agile: Best Practices for Teams

With multiple developers working on different features, fixes, or updates

simultaneously, it’s easy for code to overlap or conflict without clear

guidelines. Having a structured branching approach prevents confusion and

minimizes the risk of one developer’s work interfering with another’s. ... One

of the cornerstones of good version control is making small, frequent commits.

In Agile development, progress happens in iterations, and version control

should follow that same mindset. Large, infrequent commits can cause headaches

when it’s time to merge, increasing the chances of conflicts and making it

harder to pinpoint the source of issues. Small, regular commits, on the other

hand, make it easier to track changes, test new functionality, and resolve

conflicts early before they grow into bigger problems. ... An organized

repository is crucial to maintaining productivity. Over time, it’s easy for

the repository to become cluttered with outdated branches, unnecessary files,

or poorly named commits. This clutter slows down development, making it harder

for team members to navigate and find what they need. Teams should regularly

review their repositories and remove unused branches or files that are no

longer relevant.
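
The repository clean-up described above can be partly automated. Here is a minimal sketch that, given each branch's last commit date, lists candidates for removal. The 90-day threshold, the protected branch names, and the sample data are assumptions made for illustration; in practice the dates might come from something like `git for-each-ref`.

```python
from datetime import datetime, timedelta


def stale_branches(last_commit, now, max_age_days=90, protected=("main",)):
    """Return sorted names of unprotected branches with no commits since the cutoff."""
    cutoff = now - timedelta(days=max_age_days)
    return sorted(
        name
        for name, when in last_commit.items()
        if name not in protected and when < cutoff
    )


# Illustrative data only: branch name -> date of its most recent commit.
branches = {
    "main": datetime(2024, 1, 1),
    "feature/login": datetime(2024, 12, 20),
    "fix/typo": datetime(2024, 6, 1),
    "spike/old-ui": datetime(2024, 3, 15),
}

for name in stale_branches(branches, now=datetime(2025, 1, 1)):
    print(f"candidate for deletion: {name}")
```

The function only reports candidates; whether a stale branch is actually safe to delete is still a decision for the team.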

Abusing MLOps platforms to compromise ML models and enterprise data lakes

Machine learning operations (MLOps) is the practice of deploying and

maintaining ML models in a secure, efficient and reliable way. The goal of

MLOps is to provide a consistent and automated process to be able to rapidly

get an ML model into production for use by ML technologies. ... There are

several well-known attacks that can be performed against the MLOps lifecycle

to affect the confidentiality, integrity and availability of ML models and

associated data. However, performing these attacks against an MLOps platform

using stolen credentials has not been covered in public security research. ...

Data poisoning: This attack involves an attacker having access to the raw data

being used in the “Design” phase of the MLOps lifecycle to include

attacker-provided data or being able to directly modify a training dataset.

The goal of a data poisoning attack is to influence the data on which an

ML model is trained before that model is eventually deployed to production.

... Model extraction attacks involve the ability of an attacker to steal

a trained ML model that is deployed in production. An attacker could use a

stolen model to extract sensitive training data such as the training weights

used, or to use the predictive capabilities used in the model for their own

financial gain.
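
To make the "directly modify a training dataset" case of data poisoning concrete, the sketch below shows one possible way a team could notice such tampering: record a SHA-256 fingerprint of each approved training file and compare it before training. This is a defensive illustration that is not taken from the research quoted above, and the file names and contents are hypothetical.

```python
import hashlib


def fingerprint(files: dict[str, bytes]) -> dict[str, str]:
    """Map each file name to the SHA-256 hex digest of its contents."""
    return {name: hashlib.sha256(data).hexdigest() for name, data in files.items()}


def changed_files(baseline: dict[str, str], current: dict[str, str]) -> dict[str, str]:
    """Compare two fingerprints and report added, removed, or modified files."""
    report = {}
    for name in sorted(baseline.keys() | current.keys()):
        if name not in baseline:
            report[name] = "added"
        elif name not in current:
            report[name] = "removed"
        elif baseline[name] != current[name]:
            report[name] = "modified"
    return report


# Illustrative data only: in-memory stand-ins for dataset files.
approved = {"train.csv": b"x,label\n1,0\n2,1\n", "eval.csv": b"x,label\n3,1\n"}
tampered = {
    "train.csv": b"x,label\n1,1\n2,1\n",   # a label was flipped
    "eval.csv": b"x,label\n3,1\n",
    "extra.csv": b"x,label\n9,0\n",        # attacker-provided data
}

baseline = fingerprint(approved)
print(changed_files(baseline, fingerprint(tampered)))
```

A check like this only detects changes made after the baseline was recorded; it does nothing about poisoned data that was already present when the dataset was approved.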

Get Going With GitOps

GitOps implementations have a significant impact on infrastructure automation

by providing a standardized, repeatable process for managing infrastructure as

code, Rose says. The approach allows faster, more reliable deployments and

simplifies the maintenance of infrastructure consistency across diverse

environments, from development to production. "By treating infrastructure

configurations as versioned artifacts in Git, GitOps brings the same level of

control and automation to infrastructure that developers have enjoyed with

application code." ... GitOps' primary benefit is its ability to enable peer

review for configuration changes, Peele says. "It fosters collaboration and

improves the quality of application deployment." He adds that it also empowers

developers -- even those without prior operations experience -- to control

application deployment, making the process more efficient and streamlined.

Another benefit is GitOps' ability to allow teams to push minimum viable

changes more easily, thanks to faster and more frequent deployments, says Siri

Varma Vegiraju, a Microsoft software engineer. "Using this strategy allows

teams to deploy multiple times a day and quickly revert changes if issues

arise," he explains via email.
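
The idea of treating Git as the source of truth, and reverting by going back to an earlier commit, can be sketched as a tiny reconciliation step: compare the desired state declared in the repository with what is actually running and derive the actions needed. The service names, image tags, and the `plan_changes` helper are hypothetical; real GitOps tooling does considerably more than this.

```python
def plan_changes(desired: dict[str, str], live: dict[str, str]) -> list[tuple[str, str]]:
    """Return (action, name) pairs that would bring the live state to the desired state."""
    actions = []
    for name in sorted(desired.keys() | live.keys()):
        if name not in live:
            actions.append(("create", name))
        elif name not in desired:
            actions.append(("delete", name))
        elif desired[name] != live[name]:
            actions.append(("update", name))
    return actions


# Illustrative data only: two commits of the declared state, plus the running state.
desired_v1 = {"web": "image:1.3", "worker": "image:2.0"}
desired_v2 = {"web": "image:1.4", "worker": "image:2.0"}
live = {"web": "image:1.3", "worker": "image:2.0", "legacy-cron": "image:0.9"}

# Deploying the newer commit: remove what Git no longer declares, update what changed.
print(plan_changes(desired_v2, live))

# Reverting is the same operation pointed at the previous commit.
live_after_deploy = dict(desired_v2)
print(plan_changes(desired_v1, live_after_deploy))
```

Because a rollback is just reconciliation against an older commit, the same reviewed, versioned path is used for both deploying and reverting.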

Balancing proprietary and open-source tools in cyber threat research

First, it is important to assess the requirements of an organization by

identifying the capabilities needed, such as threat intelligence platforms or

malware analysis tools. Next, evaluate open-source tools, which can be

cost-effective and customizable, but may require community support and

frequent updates. In contrast, proprietary tools could offer advanced

features, dedicated support, and better integration with other products.

Finally, think about scalability and flexibility, as future growth may

necessitate scalable solutions. ... The technology is not magic, but it is a

powerful tool to speed up processes and bolster security procedures while also

reducing the gap between advanced and junior analysts. However, as of today,

the technology still requires verification and validation. Globally, security

experts with a dual skill set in security and AI will be in high demand. As

the adoption of generative AI systems increases, we need

people who understand these technologies because threat actors are also

learning. ... If a CISO needs to evaluate the effectiveness of these tools, they

first need to understand their needs and pain points and then seek guidance

from experts. Adopting generative AI security solutions just because it is the

latest trend is not the right approach.

Get your IT infrastructure AI-ready

Artificial intelligence adoption is a challenge many CIOs grapple with as they

look to the future. Before jumping in, their teams must possess practical

knowledge, skills, and resources to implement AI effectively. ... AI

implementation is costly and the training of AI models requires a substantial

investment. "To realize the potential, you have to pay attention to what it's

going to take to get it done, how much it's going to cost, and make sure

you're getting a benefit," Ramaswami said. "And then you have to go get it

done." GenAI has rapidly transformed from an experimental technology to an

essential business tool, with adoption rates more than doubling in 2024,

according to a recent study by AI at Wharton. ... According to Donahue, IT

teams are exploring three key elements: choosing language models, leveraging

AI from cloud services, and building a hybrid multicloud operating model to

get the best of on-premises and public cloud services. "We're finding that

very, very, very few people will build their own language model," he said.

"That's because building a language model in-house is like building a car in

the garage out of spare parts." Companies look to cloud-based language models,

but must scrutinize security and governance capabilities while controlling

cost over time.

What is an EPMO? Your organization’s strategy navigator

The key is to ensure the entire strategy lifecycle is set up for success

rather than endlessly iterating to perfect strategy execution. Without

properly defining, governing, and prioritizing initiatives upfront, even the

best delivery teams will struggle to achieve business goals in a way that

drives the right return for the organization’s investment. For most

organizations, there’s more than one gap preventing desired results. ... The

EPMO’s job is to strip away unnecessary complexity and create frameworks that

empower teams to deliver faster, more effectively, and with greater focus. PMO

leaders should ask how this process helps to hit business goals faster. By

eliminating redundant meetings and scaling governance to match project size

and risk, delivery timelines can shorten. This kind of targeted adjustment

keeps momentum high without sacrificing quality or control. ... For an EPMO to

be effective, ideally it needs to report directly to the C-suite. This matters

because proximity equals influence. When the EPMO has visibility at the top,

it can drive alignment across departments, break down silos, drive

accountability, and ensure initiatives stay connected to overall business

objectives, serving as the strategy navigator for the C-suite.

Data Center Hardware in 2025: What’s Changing and Why It Matters

DPUs can handle tasks like network traffic management, which would otherwise

fall to CPUs. In this way, DPUs reduce the load placed on CPUs, ultimately

making greater computing capacity available to applications. DPUs have been

around for several years, but they’ve become particularly important as a way

of boosting the performance of resource-hungry workloads, like AI training, by

complementing AI accelerators. This is why I think DPUs are about to have their

moment. ... Recent events have underscored the risk of security threats linked

to physical hardware devices. And while I doubt anyone is currently plotting

to blow up data centers by placing secret bombs inside servers, I do suspect

there are threat actors out there vying to do things like plant malicious

firmware on servers as a way of creating backdoors that they can use to hack

into data centers. For this reason, I think we’ll see an increased focus in

2025 on validating the origins of data center hardware and ensuring that no

unauthorized parties had access to equipment during the manufacturing and

shipping processes. Traditional security controls will remain important, too,

but I’m betting on hardware security becoming a more intense area of concern

in the year ahead.

Quote for the day:

"Nothing in the world is more common

than unsuccessful people with talent." -- Anonymous