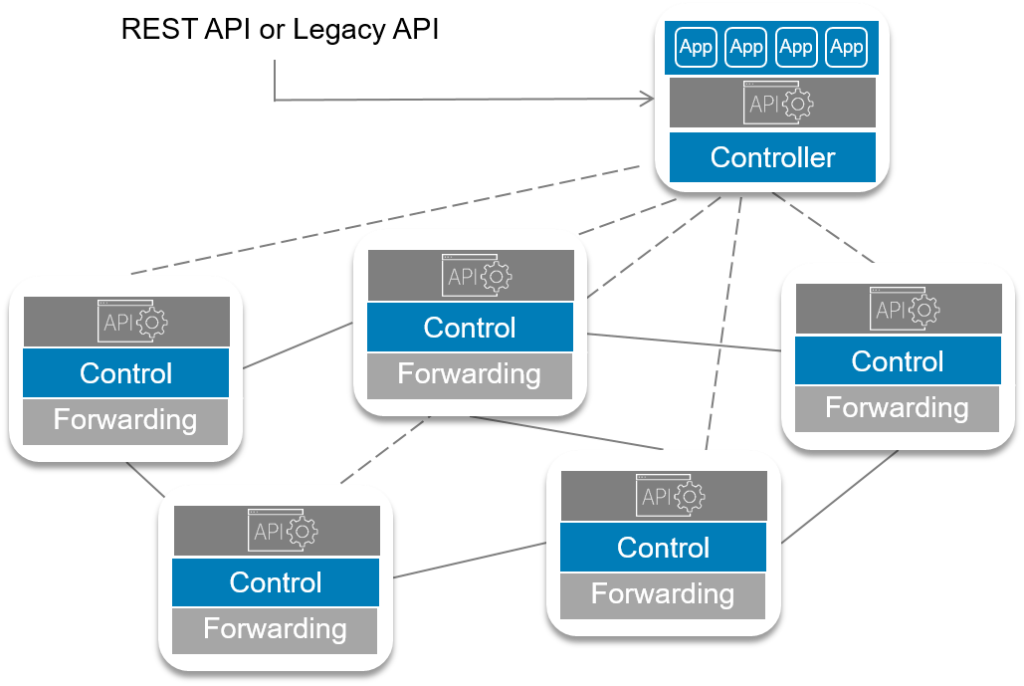

The Mastercard blockchain is a permissioned blockchain, which will allow participants to maintain the distributed ledger without sacrificing scalability or performance, Sota explains in the video. ... "Our blockchain technology can be used for clearing in near real-time card payment transactions eliminating consolidation and improving settlement," he said. According to Mastercard, its technology has four key differentiators from others in the space: privacy, flexibility, scalability, and the reach of the company's settlement network. Mastercard said its blockchain provides privacy by ensuring that transaction details are shared only among the participants of a transaction while maintaining a fully auditable and valid ledger of transactions, and still allows partners to use the blockchain APIs alongside other Mastercard APIs.

IT, OT, IoT: Does Hitachi Have a Dictionary for This Alphabet Soup?

At one end of the spectrum, and most notably in this “industrial reinvented as software” class, is GE. GE, better known for building gas plants, jet engines and wind turbines, is reinventing itself as a software company. Under former CEO Jeff Immelt and Bill Ruh, its current head of all things digital, the company is investing hundreds of millions of dollars to build capability in the software space. GE is applying its Predix software offering to its own business units but, more importantly, is attempting to become the software provider of choice for a host of third-party industrial organizations. At the other end of the spectrum lie the traditional technology vendors who, despite lacking significant industrial experience themselves, have long histories of delivering technologies to industrial operations.

Architecture Patterns to Consider When Designing an Enterprise Data Lake

Virtually every enterprise-level organization requires encryption for stored data, if not universally, then at least for most classifications of data other than publicly available data. All leading cloud providers support encryption on their primary object store technologies (such as AWS S3), either by default or as an option. Likewise, the technologies used for other storage layers, such as derivative data stores for consumption, typically offer encryption as well. Encryption key management is also an important consideration, with requirements typically dictated by the enterprise’s overall security controls. Options include keys created and managed by the cloud provider, customer-generated keys managed by the cloud provider, and keys fully created and managed by the customer on-premises.

Why Tech Giants See Singapore As The Next AI Hub

Singapore-based Marvelstone on Monday dovetailed the announcement by the Chinese conglomerate – owner of the South China Morning Post – by revealing it was setting up an AI hub of its own in the city state, which would incubate 100 start-ups every year. It said its hub would be “the world’s biggest” when it opens next year. ... The government also showed it is serious about the country’s AI prospects when it announced the development of a dedicated data science consortium and pledged Sg$150 million to industry research. In the Lattice80 complex, located in Singapore’s central business district, Ko said he was confident the government would follow through with its pledge to foster the industry. “Firstly, it’s about diversity … other Asian cities like Tokyo are also trying to be AI hubs, but they are more homogenous. Singapore’s advantage is that it is welcoming to all, and there is strong government support,” he said.

The prevalence of AI-powered IoT devices inspires mixed emotions

The easy path forward would be to continue developing connected devices without taking people’s fears into consideration. However, this is both unethical and inadvisable from a practical standpoint. Unsecured devices put multiple parties at risk, from the person using the product to the company pulling data from it. A better approach lies in analyzing the strengths, weaknesses, opportunities, and threats that AI- and IoT-enabled devices present. This will require addressing pain points such as IoT standards, privacy measures, and security. It could also involve education, job training, and general change management. But whether we’re looking at something as mundane as faster streaming or as grand as smart cities, the internet of things, when bolstered by artificial intelligence, has the potential to affect every aspect of our lives.

Three Things Data Scientists Can Do To Help Themselves And Their Organizations

In the brave new world of business analytics fueled by big data, there has been significant discussion about the evolving roles of C-suite executives, including the CEO, CTO, and CIO. That discussion is now expanding to include the CMO plus the new roles of Chief Data Officer (CDO) and Chief Data Scientist (CDS). I do not have an MBA, and I usually don’t undertake risky behavior, such as telling a CEO how to run her or his business. However, it is entirely appropriate for the CMO, CDO, and CDS to step up to the challenges of leading and directing the analytics, big data, and data science efforts of their organization, respectively. It is also appropriate for these execs to stand firm against corporate cultures and naysayers that resist big data analytics projects with remarks such as: a) “Let’s wait and see how it develops elsewhere”; b) “We have always done big data”; or c) “What’s the ROI? Show me the numbers.”

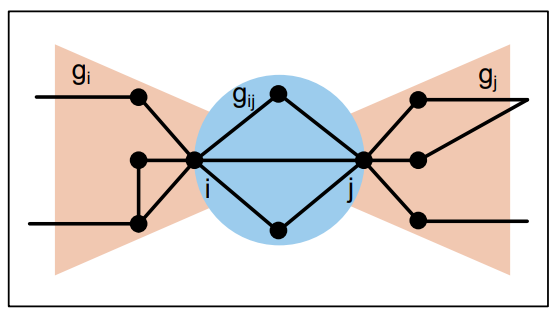

The cryptoeconomics of scaling blockchains

A key shortcoming of the current generation of blockchain technologies is their limited performance and scalability. For instance, the entire Bitcoin network can handle only about seven transactions per second, compared with over 2,000 transactions per second on the VISA network and millions of transactions per second handled by any top-tier consumer application. That has made it impossible for the current generation of blockchain networks to handle big data applications. Is the poor performance of blockchains an engineering problem? Not entirely. The problem is inherent to the incentive-driven design of blockchains, known as cryptoeconomics.

Incentives in Bitcoin consensus

Blockchain is useful because it allows untrusted and non-cooperative parties to work together and maintain a system. Let’s look at the example of the Bitcoin network.
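The roughly seven-transactions-per-second ceiling follows from simple arithmetic on block capacity and block interval. The sketch below is a back-of-envelope illustration, not the article's own calculation. It assumes the classic 1 MB block size limit (later replaced by SegWit's block weight limit), an average transaction size of about 250 bytes, and Bitcoin's 10-minute target block interval; the helper name `max_tps` is hypothetical.

```python
# Back-of-envelope estimate of Bitcoin's maximum throughput.
# Assumptions (illustrative, not taken from the article):
#   - 1,000,000-byte block size limit (pre-SegWit)
#   - ~250-byte average transaction
#   - 600-second (10-minute) target block interval


def max_tps(block_size_bytes: int, avg_tx_bytes: int, block_interval_s: float) -> float:
    """Upper bound on transactions per second, assuming every block is full."""
    txs_per_block = block_size_bytes // avg_tx_bytes  # whole transactions per block
    return txs_per_block / block_interval_s


bitcoin_tps = max_tps(1_000_000, 250, 600)
visa_tps = 2_000  # the article's lower bound for the VISA network

print(f"Bitcoin: ~{bitcoin_tps:.1f} tx/s")
print(f"VISA handles roughly {visa_tps / bitcoin_tps:.0f}x as many")
```

Under these assumptions a full block holds 4,000 transactions, or about 6.7 per second, roughly 1/300 of the 2,000 tx/s quoted for VISA. Bigger blocks or shorter intervals raise this ceiling on paper, which is why the article frames the bottleneck as partly cryptoeconomic rather than purely a matter of tuning parameters.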

Stuck between Design Thinking and Lean Startup? Take a hybrid approach

There are now so many different kinds of innovation: design innovation, business model innovation, digital innovation. And so many ways to organize for innovation: innovation labs, innovation centers, corporate accelerator programs. More significantly, there has been a growth of two schools of thought in corporate innovation: Design Thinking and Lean Startup. Suddenly corporate innovators feel the need to be trained in both. But many consultancies practice or train in only one. And wherever corporate innovators sit, there is growing pressure to be more entrepreneurial. More agile. To increase speed to market. To be more like that startup accelerator your boss visited. ... The best way to tackle this would be to learn about these new approaches, test them on real innovation projects, and then adapt them so that they’re really practical and work in corporations.

What Are The Security Threats For The Cloud?

Surprisingly, although cloud security is clearly important given the many data breaches we have seen around the globe, over 40% of IT managers have no plans to purchase ‘security-as-a-service’ solutions. This raises the question of how well such companies are prepared for a future in which the cloud becomes ever more important and criminals target cloud solutions on a wider scale. The security of data in the cloud is vital for companies. Being hacked can have serious consequences for a company as well as for individuals, as shown by Target's CEO, who was fired after a data breach. Once your cloud is hacked, your company faces a problem whose seriousness depends on the severity of the hack. It is therefore wise to be aware of the security issues when dealing with the cloud. This infographic might help to achieve that.

Tech Giants Are Paying Huge Salaries for Scarce A.I. Talent

At the top end are executives with experience managing A.I. projects. In a court filing this year, Google revealed that one of the leaders of its self-driving-car division, Anthony Levandowski, a longtime employee who started with Google in 2007, took home over $120 million in incentives before joining Uber last year through the acquisition of a start-up he had co-founded that drew the two companies into a court fight over intellectual property. Salaries are spiraling so fast that some joke the tech industry needs a National Football League-style salary cap on A.I. specialists. “That would make things easier,” said Christopher Fernandez, one of Microsoft’s hiring managers. “A lot easier.” There are a few catalysts for the huge salaries. The auto industry is competing with Silicon Valley for the same experts who can help build self-driving cars. Most of all, there is a shortage of talent, and the big companies are trying to land as much of it as they can. Solving tough A.I. problems is not like building the flavor-of-the-month smartphone app.

Quote for the day:

"Nothing so conclusively proves a man's ability to lead others as what he does from day to day to lead himself." -- Thomas J. Watson