Companies are starting to cast the net farther afield, taking on graduates from a far wider range of disciplines. Virtusa often looks for people with a background in the arts, says Gabrault, because alongside analytical skills they bring creativity: they can play a key role in user experience and help make sure a product is something people actually want to interact with. Teamwork is also important. IoT is not about beavering away on solo projects; it involves interaction with other teams, end users and customers. "Candidates need to show that they can empathise with the client," adds Owen. Helping students become "work-ready" is one of the driving forces behind Fast Track, a programme run by the Future of British Manufacturing. It matches students from some of the UK's leading universities with companies to help them develop their next big innovation or connected product.

Demystifying The Dark Science Of Data Analytics

Deeper analytics knowledge can also help IT leaders understand why the approach often seems so mysterious. "Data science, in its best form, is an extremely creative endeavor," Johnston says. "There is not necessarily a need for managers to understand the internals of every analysis, just as owners of a software project need not understand the underlying technological internals." ... Unlike IT, where solutions are often obvious and widely adopted by enterprises worldwide, analytics processes are frequently unique and individualized. "Choosing the best analytical method is sometimes straightforward, sometimes art," Magestro says. "For example, looking for cause-effect relationships in data usually means some kind of regression, and looking for similar characteristics in large customer datasets likely involves clustering algorithms."
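Magestro's two examples can be made concrete with a minimal sketch using only the Python standard library. The data, function names and starting centers below are hypothetical. Note that a fitted slope on its own shows association, not causation; drawing cause-effect conclusions needs sound study design on top of the regression.

```python
"""Illustrative only: the two method families mentioned above
(regression and clustering), applied to made-up toy data."""
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


def kmeans_1d(values, centers, iterations=10):
    """Plain k-means on a single feature: assign each value to its
    nearest center, then move each center to the mean of its members."""
    centers = list(centers)
    groups = [[] for _ in centers]
    for _ in range(iterations):
        groups = [[] for _ in centers]
        for v in values:
            nearest = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
            groups[nearest].append(v)
        # Leave a center where it is if no values were assigned to it.
        centers = [mean(g) if g else c for g, c in zip(groups, centers)]
    return centers, groups


if __name__ == "__main__":
    # Hypothetical marketing spend vs. sales: a regression question.
    print(fit_line([1, 2, 3, 4, 5], [3, 5, 7, 9, 11]))  # (2.0, 1.0)

    # Hypothetical annual spend per customer: a clustering question.
    print(kmeans_1d([10, 12, 11, 95, 100, 105], centers=[0, 50]))
```

In practice a team would reach for a statistics or machine-learning library, but the hand-rolled versions show what each family of methods actually computes.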

Select Your Agile Approach That Fits Your Context

By definition, the team finishes the work at the end of that time. The product owner (PO) decides whether any unfinished work moves to the next iteration or farther down the product roadmap. If your team uses iterations, as in Scrum, the iteration starts with the ranked backlog and ends with the demo and retrospective. If your team uses flow, you can demo and retrospect at any time. To be fair, iteration-based agile approaches don't prevent you from demoing or retrospecting at any time. ... Teams might have trouble finishing stories in a timebox or iteration, and there can be any number of reasons for it. Here are three common problems I've seen: the stories are too large; the people are multitasking on several stories or, worse, several projects; and the team is not working as a team to finish stories. If the team can't finish because of multitasking, a cadence might make that even worse. However, visualizing their work might make a difference.

APIs Need to Be Released, Too!

Would it come as a surprise to hear that at the core of each and every one of these priorities are APIs and DevOps? So, just what is an API? API stands for Application Programming Interface and it’s a highly common software development term – an initialism you’re bound to have come across. In some form or another, development has always relied on interfaces. Without going too deep, APIs are primarily concerned with enabling communications between ‘private’ and ‘public’ interfaces. Private interfaces are used internally between individual developers and development teams. These aren’t accessible to third parties and can be changed as often as required. This is in stark contrast to public interfaces, which are exposed to third parties – be they internal or outside the company – and shouldn’t change often as other services using these interfaces may break or stop functioning.

Quantum physics boosts artificial intelligence methods

A popular computing technique for classifying data is the neural network method, known for its efficiency in extracting obscure patterns within a data set. The patterns identified by neural networks are difficult to interpret, because the classification process does not reveal how they were discovered. Techniques that offer better interpretability are often more error-prone and less efficient. "Some people in high-energy physics are getting ahead of themselves about neural nets, but neural nets aren't easily interpretable to a physicist," said Joshua Job, a USC physics graduate student, co-author of the paper and guest student at Caltech. The new quantum program is "a simple machine learning model that achieves a result comparable to more complicated models without losing robustness or interpretability," Job said.

How Close Are You Really?

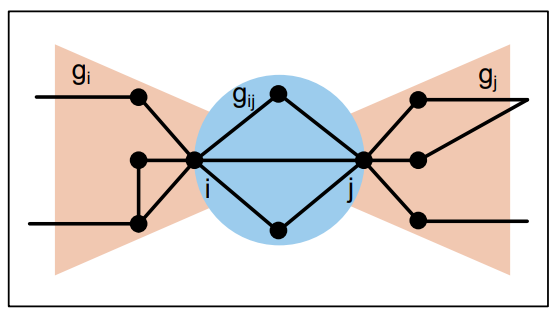

The network of links between individuals—their social network—has long fascinated social scientists. These networks are neither random nor entirely ordered. Instead, they occupy a middle ground in which people are strongly linked to a few individuals they know well, with weaker links to a larger group of friends and coworkers plus extremely weak links to a wide range of casual acquaintances. Social scientists measure the strength of these links using a variety of indicators, such as how often a person calls another, whether that call is reciprocated, the time the two people spend speaking, and so on. But these indicators are often difficult and time-consuming to measure. So network theorists would dearly love to have some way of measuring the strength of ties from the structure of the network itself.
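One commonly used structural proxy for tie strength is neighborhood overlap: the share of two people's other contacts that they have in common. The sketch below is an illustration of that idea, not necessarily the method in the research this article covers; the graph and names are hypothetical.

```python
"""Neighborhood overlap as a structure-only proxy for tie strength."""


def build_graph(edges):
    """Build undirected adjacency sets from (u, v) pairs."""
    graph = {}
    for u, v in edges:
        graph.setdefault(u, set()).add(v)
        graph.setdefault(v, set()).add(u)
    return graph


def neighborhood_overlap(graph, u, v):
    """Common neighbors of u and v divided by all neighbors of either,
    excluding u and v themselves. 0.0 = no mutual contacts,
    1.0 = identical circles of contacts."""
    nu = graph[u] - {v}
    nv = graph[v] - {u}
    union = nu | nv
    if not union:
        return 0.0
    return len(nu & nv) / len(union)


# Hypothetical friendship network.
g = build_graph([
    ("ann", "bob"), ("ann", "cat"), ("bob", "cat"),
    ("ann", "dan"), ("bob", "dan"), ("dan", "eve"),
])
print(neighborhood_overlap(g, "ann", "bob"))  # 1.0
print(neighborhood_overlap(g, "dan", "eve"))  # 0.0
```

Ann and Bob share their whole circle, which suggests a strong tie; Dan and Eve share nobody, the pattern typical of a weak, bridging acquaintance.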

The Future of Enigma and Data

In practice, to build a data marketplace, the Enigma protocol needs to implement the infrastructure for a decentralized database, with storage and computational abilities that far exceed those that blockchains offer. While all blockchains are, in a manner of speaking, protocols for decentralized computing and data storage, their poor scalability and lack of privacy features limit potential use cases. We need a second-layer network that can handle more data, faster, and provide better privacy features, and that's where the Enigma protocol comes in. Our protocol is based on the ideas presented in the 2015 Enigma whitepaper, as well as in our subsequent work (paper, thesis). It aspires to complement a blockchain (of any kind) with an off-chain data network (essentially, a single, always-on decentralized database), in much the same way that payment networks (e.g., Raiden) offer better scalability for financial transactions.
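The general idea of pairing a blockchain with an off-chain data layer can be illustrated with a common pattern: keep bulky data off-chain and record only a small fingerprint (a SHA-256 digest) on-chain, so anyone can check that the off-chain copy is intact. This is a generic sketch, not the Enigma protocol; `ToyChain` and `OffChainStore` are hypothetical stand-ins, and the sketch provides none of the privacy features the protocol aims for.

```python
"""Generic off-chain storage with on-chain hash anchoring (toy model)."""
import hashlib
import json


class ToyChain:
    """Stand-in for a blockchain: an append-only list of small records."""

    def __init__(self):
        self.records = []

    def anchor(self, digest: str) -> int:
        self.records.append(digest)
        return len(self.records) - 1


class OffChainStore:
    """Stand-in for the off-chain network that holds the bulky payloads."""

    def __init__(self, chain: ToyChain):
        self.chain = chain
        self.blobs = {}

    def put(self, record: dict) -> int:
        # Canonical JSON so the same record always hashes the same way.
        payload = json.dumps(record, sort_keys=True).encode()
        digest = hashlib.sha256(payload).hexdigest()
        self.blobs[digest] = payload
        return self.chain.anchor(digest)  # only 64 hex chars go on-chain

    def get(self, index: int) -> dict:
        digest = self.chain.records[index]
        payload = self.blobs[digest]
        # Re-hash to detect tampering with the off-chain copy.
        if hashlib.sha256(payload).hexdigest() != digest:
            raise ValueError("off-chain data does not match on-chain digest")
        return json.loads(payload)
```

The chain stays small no matter how large the records get, which is the scalability argument in miniature; confidentiality would need additional machinery on top.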

Could Your Reactive Cyber Security Approach Put You Out of Business?

One scenario is your organisation falling victim to ransomware, in which an attacker locks up your data and demands payment to release it. Unless you pay, your operations come to a screeching halt and your revenue plummets overnight. Another is sensitive customer or employee information falling into the wrong hands, which can lead to everything from identity theft to corporate espionage. Even basic information, such as email addresses, phone numbers and billing addresses, can be of significant value to cyber criminals and open a can of worms. You also have to consider the disruption that comes with an attack. Downtime not only costs your business serious money but can also tarnish your brand's reputation, and many customers may end up turning to competitors.

Digital brains are as error-prone as humans

The algorithms that make up these neural networks can unintentionally boost these biases, giving them undue importance in their decision-making. Writing in the WSJ, Professor Crawford said: 'These systems "learn" from social data that reflects human history, with all its biases and prejudices intact. Algorithms can unintentionally boost those biases, as many computer scientists have shown. It's a minor issue when it comes to targeted Instagram advertising but a far more serious one if AI is deciding who gets a job, what political news you read or who gets out of jail. Only by developing a deeper understanding of AI systems as they act in the world can we ensure that this new infrastructure never turns toxic.' Research has already demonstrated that AI systems trained on such data can be flawed.

The Role of Data in the Financial Sector

What makes the financial sector even more interesting from a big data standpoint is the constant stream of new regulations and reporting standards that bring new data sources and more complex metrics into financial systems. ... The forex markets, as mentioned earlier, trade 24 hours a day, from morning in Sydney to evening in New York, except for a small window during the weekend. Algorithmic trading, meanwhile, has been used in the financial markets in one form or another for a long time. The NYSE introduced its Designated Order Turnaround (DOT) system in the early 1970s for routing orders to trading desks, where the orders were executed manually. Today, algorithmic trading systems break very large orders into smaller pieces that are executed automatically based on time, price and volume, optimized for market parameters.
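Breaking a large order into smaller pieces can be sketched with two simple schedules: equal slices per time interval (the idea behind TWAP-style execution) and slices proportional to an expected volume profile (the idea behind VWAP-style execution). This is a simplified illustration with hypothetical function names and numbers; it ignores the price limits and live market data that real execution algorithms also react to.

```python
"""Toy order-slicing schedules: time-based and volume-weighted."""
from __future__ import annotations


def twap_slices(total_qty: int, n_slices: int) -> list[int]:
    """Split an order into n near-equal child orders, one per interval."""
    base, remainder = divmod(total_qty, n_slices)
    # Spread the leftover shares one at a time over the first slices.
    return [base + (1 if i < remainder else 0) for i in range(n_slices)]


def volume_weighted_slices(total_qty: int, volume_profile: list[float]) -> list[int]:
    """Size each child order in proportion to expected market volume
    in its interval, so trading is heavier when the market is busier."""
    total_volume = sum(volume_profile)
    raw = [total_qty * v / total_volume for v in volume_profile]
    slices = [int(x) for x in raw]
    # Hand out shares lost to rounding down, largest fractional part first.
    shortfall = total_qty - sum(slices)
    order = sorted(range(len(raw)), key=lambda i: raw[i] - slices[i], reverse=True)
    for i in order[:shortfall]:
        slices[i] += 1
    return slices


print(twap_slices(1000, 3))  # [334, 333, 333]
print(volume_weighted_slices(1000, [30, 10, 10, 50]))  # [300, 100, 100, 500]
```

Both functions guarantee the child orders add up to exactly the parent quantity, which matters more in practice than the precise rounding rule chosen here.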

Quote for the day:

"Defragmenting data silos is key for accelerating research." -- Joerg Kurt Wegner
