How IT and Security Leaders Are Addressing the Current Social & Economic Landscape

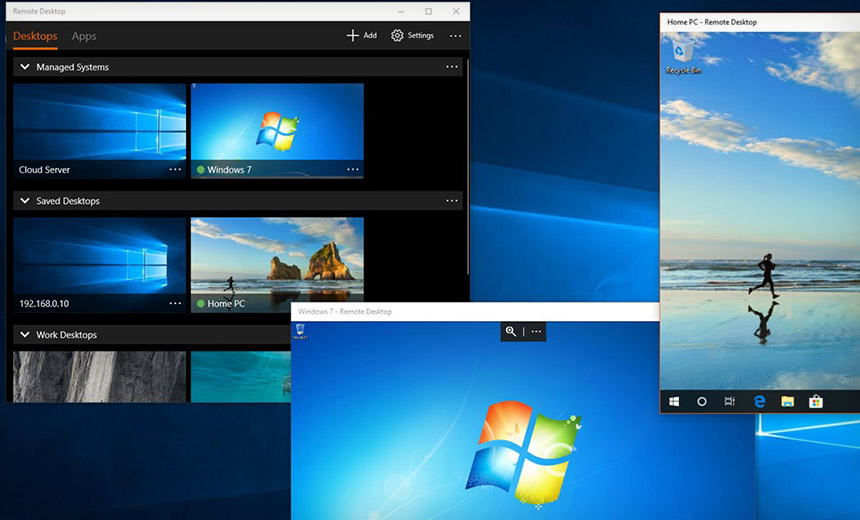

Despite the security and overall organizational preparedness concerns, IT and security leaders share some notes of encouragement. The majority (68%) of IT leaders agree that their technology infrastructure was prepared to adequately address employees working from home. On an even brighter note, 81% of security leaders believe that their existing security infrastructure can adequately address the current working from home demands, and 67% feel that their security infrastructure is fully prepared to handle the range of associated risks. As more and more individuals are getting their jobs done from home, 71% of IT leaders say that the current situation has created a more positive view of remote workplace policies and will likely impact how they plan for office space, tech staffing and overall staffing in the future. To address the new work environment due to COVID-19, 44% of IT leaders will need to acquire new technology solutions and services.

Hackers Hit Food Supply Company

DarkOwl said its analysis shows the attackers have managed to steal some 2,600 files from Sherwood. The stolen data includes cash-flow analysis, distributor data, business insurance content, and vendor information. Included in the dataset are scanned images of driver's licenses of people in Sherwood's distribution network. The threat actors posted screenshots of a chat they had with Coveware, a ransomware mitigation firm that Sherwood had hired to help deal with the crisis. The conversation shows that Sherwood has been dealing with the attack since at least May 3rd, according to DarkOwl's research. The screenshots also suggest that Sherwood at one point was willing to pay $4.25 million and later $7.5 million to get its data back. In an emailed statement, a Sherwood spokeswoman said the company does not comment on active criminal investigations. ... According to DarkOwl, on Monday the attackers updated Happy Blog with news of their plan to next auction off personal data belonging to Madonna.

5 Ways to Detect Application Security Vulnerabilities Sooner to Reduce Costs and Risk

Human error is always a security concern, especially when it comes to credentials. Just consider how many times you’ve heard of developers committing code only to later realize they’d accidentally included a password. These errors can lead to high-cost consequences for organizations. There are many tools that scan for secrets and credentials that can be accidentally committed to a source code repository. One example is Microsoft Credential Scanner (CredScan). Perform this scan in the PR/CI build to identify the issue as soon as it happens, so the exposed credentials can be changed before they become a problem. Once an application is deployed, you can continue to scan for vulnerabilities through the following automated continuous delivery pipeline capabilities. Unlike SAST, which looks for potential security vulnerabilities by examining an application from the inside—at the source code—Dynamic Application Security Testing (DAST) looks at the application while it is running to identify any potential vulnerabilities that a hacker could exploit.
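To make the idea of a PR/CI secret scan concrete, here is a minimal Python sketch. It is not how CredScan works internally; the pattern set, the `SECRET_PATTERNS` name, and the `scan_text` helper are all assumptions chosen for illustration, and a real scanner ships far more rules plus entropy checks and allow-lists.

```python
import re

# Hypothetical rule set for illustration only; production scanners use many more rules.
SECRET_PATTERNS = {
    # A quoted value assigned to something named password/passwd/pwd.
    "password assignment": re.compile(
        r"(?i)\b(password|passwd|pwd)\b\s*[:=]\s*['\"][^'\"]{4,}['\"]"
    ),
    # AWS access key IDs commonly start with AKIA followed by 16 uppercase alphanumerics.
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # PEM private key header.
    "private key header": re.compile(
        r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"
    ),
}


def scan_text(text):
    """Return a list of (line_number, rule_label) for lines that look like secrets."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_no, label))
    return findings
```

In a PR or CI build, a step along these lines would run over the changed files and fail the build when `scan_text` returns any findings, so the credential is caught before it is merged.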

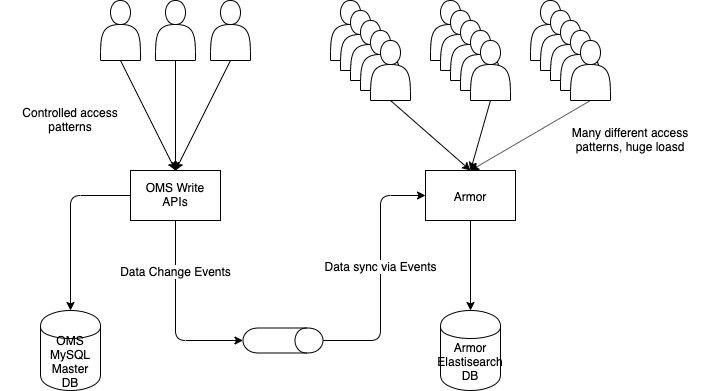

For me, it is that asynchronous programming is such a paradigm shift in a system architecture that it should be analyzed very differently from a “synchronous” system. We analyzed response times but never thought about how many concurrent requests there would be at any point because, in a synchronous system, the calling system is itself limited in how many concurrent calls it can generate, because of threads getting blocked for every request. This is not true for asynchronous systems, and hence a different mental model is required to understand causes and outcomes. Any large software system (especially in the current environment of dependent microservices) is essentially a data flow pipeline and any attempt to scale which does not expand the most bottlenecked part of the pipeline is useless in increasing data flow. We thought of pushing a huge amount of data through our pipeline by making Armor alone asynchronous and failed to distinguish a matter of Speed (doing this faster) from a matter of Volume (doing a lot of it at the same time).
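The difference between the two models can be sketched in a few lines of Python. This is an illustrative toy, not the system described in the excerpt: the `Stats` counter, the `run_sync` and `run_async` helpers, and the 10 ms simulated call are all assumptions. It records the peak number of in-flight calls a downstream dependency would see from a thread-limited synchronous caller, from an unbounded asynchronous caller, and from an asynchronous caller throttled with a semaphore.

```python
import asyncio
import threading
import time
from concurrent.futures import ThreadPoolExecutor


class Stats:
    """Tracks how many simulated downstream calls are in flight, and the peak."""

    def __init__(self):
        self.in_flight = 0
        self.peak = 0
        self._lock = threading.Lock()

    def enter(self):
        with self._lock:
            self.in_flight += 1
            self.peak = max(self.peak, self.in_flight)

    def leave(self):
        with self._lock:
            self.in_flight -= 1


def run_sync(total, threads, delay=0.01):
    """Synchronous caller: each call blocks a thread, so the pool size caps concurrency."""
    stats = Stats()

    def call():
        stats.enter()
        time.sleep(delay)  # stand-in for a blocking downstream request
        stats.leave()

    with ThreadPoolExecutor(max_workers=threads) as pool:
        for _ in range(total):
            pool.submit(call)
    return stats.peak


async def run_async(total, limit=None, delay=0.01):
    """Asynchronous caller: without a limit, every request is in flight at once."""
    stats = Stats()
    gate = asyncio.Semaphore(limit) if limit else None

    async def call():
        stats.enter()
        await asyncio.sleep(delay)  # stand-in for a non-blocking downstream request
        stats.leave()

    async def one():
        if gate is None:
            await call()
        else:
            async with gate:  # explicit back-pressure restores a concurrency cap
                await call()

    await asyncio.gather(*(one() for _ in range(total)))
    return stats.peak
```

Calling `run_sync(50, 4)` never reports a peak above the pool size, while `asyncio.run(run_async(50))` puts all 50 calls in flight at once. Passing `limit=4` to `run_async` brings the peak back down, which is the kind of explicit volume control an asynchronous caller has to add for itself.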

The downside of resilient leadership

Where does resilience come from? It’s a muscle that can be developed early on through a strong family life or a mentor relationship, or from positive experiences that help ready children and young adults for life’s tests in later years. But resilience is often also forged at young ages through adverse experiences that force children to rely on what psychologists call an “internal locus of control,” a concept developed in the 1950s by American psychologist Julian Rotter. When challenged, these young people decide that they are going to be in charge of their own fate and not let their circumstances define them. ... One of the messages these future leaders told themselves, or that was hammered into them by a parent, was “don’t be a victim.” Nobody would wish tough circumstances on another person, and yet it was in the moments of being tested that they discovered what they were made of. Adversity built a quiet confidence in them, because they went through tough times and knew they could do it again.

Why the cloud journey is hard

Conway’s Law states: “The structure of any system designed by an organisation is isomorphic to the structure of the organisation,” which means software or automated systems end up shaped like the organisational structure they’re designed in or designed for, according to Wikipedia. This could be why some organisations find it difficult to fully embrace cloud adoption as certain legacy organisational structures just don’t fit into a more demanding agile-oriented cloud environment. Nico Coetzee, Enterprise Architect for Cloud Adoption and Modern IT Architecture at Ovations, elaborates: “Every company that embarks on its cloud journey can count on some deliverables not going as planned. There are many reasons for the failure of certain modernisation projects and cloud journeys, but it might come as a surprise to hear that the most common reason could be as simple as traditional structures.” If we go back to Melvin E Conway’s research on ‘How do committees invent?’ from 1967, there are some key insights.

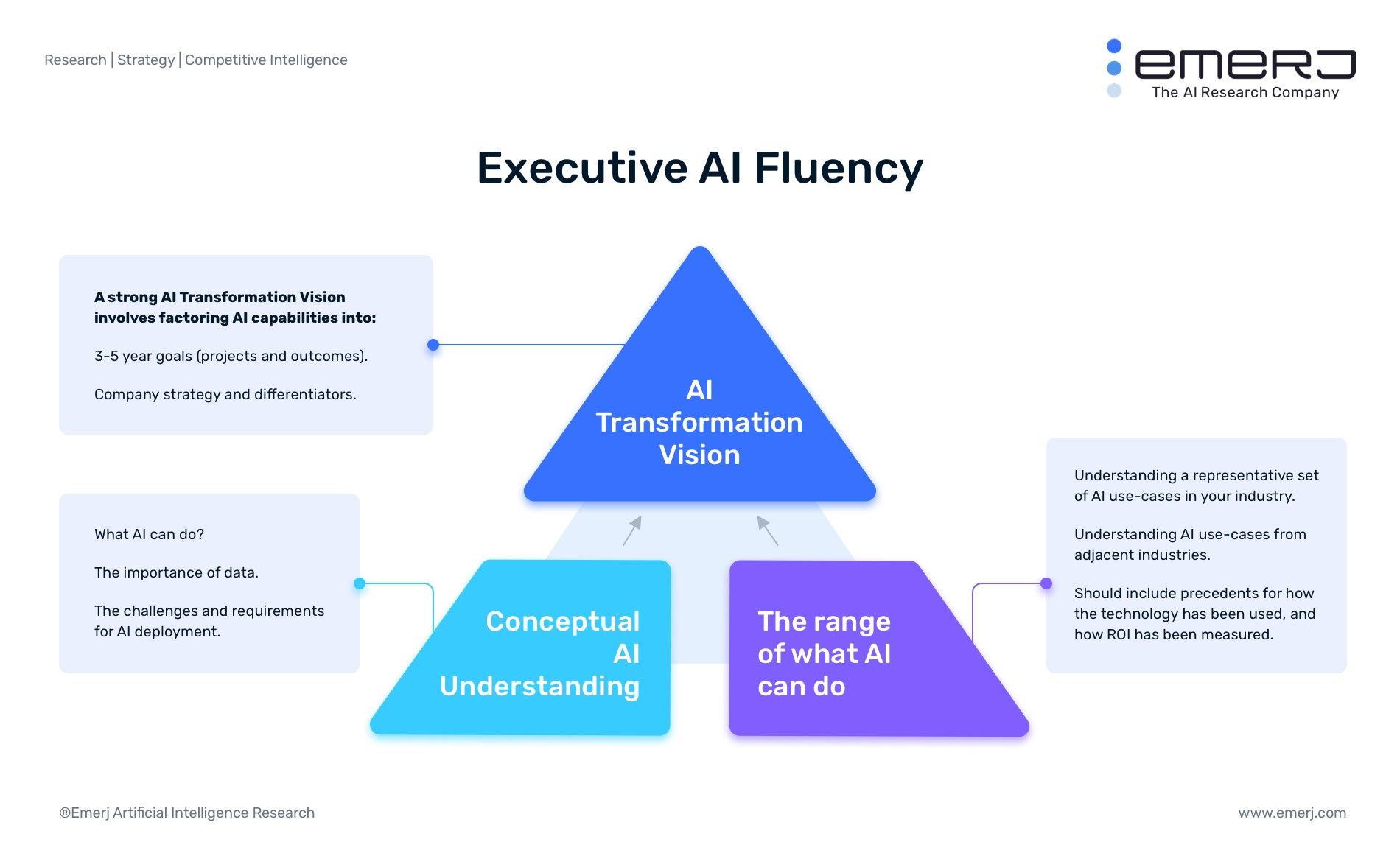

Executive AI Fluency – Ending the Cycle of Failed AI Proof-of-Concept Projects

Executives cannot understand AI in a purely conceptual fashion. They need practical use-cases for the types of AI projects they are brainstorming – and it is even better (at least initially) to have examples within their industry or related industries. One example of a strong AI use-case in banking is fraud detection. Some banks and AI vendors report having lowered their rate of false-positive results for financial fraud using predictive analytics solutions. A wide range of use cases allows leadership to better detect where AI opportunities might lie within the company and decide which projects deserve the most attention of the many that could be applied. Banking leaders should be able to expect a chatbot solution to provide their customers basic answers to common and simple questions. Bank leadership should not expect their chatbot to be able to handle complex conversations, or draw upon rich context from previous email or phone conversations with the client. The technology is simply not at that level today. In this way working with AI is more strategic than the “plug and play” nature of IT solutions.

US Treasury Warning: Beware of COVID-19 Financial Fraud

FinCEN notes that medical-related fraud scams, including fake cures, tests, vaccines and services, may require customers to pay via a pre-paid card instead of a credit card; require the use of a money services business or convertible virtual currency; or require that the buyer send funds via an electronic funds transfer to a high-risk jurisdiction. The agency notes that scams involving nondelivery of medical-related goods often occur through websites, robocalls or on the darknet. Scams involving price gouging include cases where individuals have been selling surplus items or newly acquired bulk shipments of goods - such as masks, disposable gloves, isopropyl alcohol, disinfectants, hand sanitizers, toilet paper and other paper products - at inflated prices, FinCEN explains. "Payment methods vary by scheme and can include the use of pre-paid cards, money services businesses, credit card transactions, wire transactions, or electronic fund transfers," it notes. ... "FinCEN is correct in its assertion that there will be a huge increase in all types of cybercrimes, especially related to medical scams and related cyberattacks," says former FBI agent Jason G. Weiss.

How the UK pensions industry is paving the way for open data sharing ecosystems

While some questions remain over how the regulatory standards from the pensions dashboard and Open Banking (a separate regulation focused on building transparency and open sharing into the banking industry) can be applied to a wider Open Finance initiative, the pensions dashboard’s architecture — federated digital identity, UMA, and interoperability through secure Open APIs — provides a viable model for Open Finance. Crucially, these technologies conform to open standards, meaning the architecture that underpins them can be updated and synced with any new technology, preventing the formation of any legacy systems and allowing for consistent innovation. When adopted across the financial services ecosystem, they would create a variety of secure, trustworthy, and user-friendly tools that would empower users to engage more meaningfully with their finances. Picture it: financial advisors and brokers could deliver important financial advice more completely, immediately, and visibly through the kind of seamless user experiences that are currently the preserve of digital native sectors.

NCSC discloses multiple vulnerabilities in contact-tracing app

The encryption vulnerability in the beta app has arisen because the app does not encrypt proximity contact event data, and the data is not independently encrypted before it is sent to the central servers. This, said Levy, means that when data is transferred to the back-end, it is only protected by the transport layer security (TLS) protocol, so that if Cloudflare was compromised in some way, cyber criminals could access that data. He pointed out that this was something else that was sacrificed at first because of the need for speed. Finally, Levy noted some ambiguities and errors in statements made about the beta app. Among these was a statement that “the infrastructure provider and the healthcare service can be assumed to be the same entity”. This suggests that the NCSC trusts the network bridging the gap between user devices and the central NHS servers in the same way as it trusts the whole of the NHS, which is clearly not the case.

Quote for the day:

"You must learn to rule. It's something none of your ancestors learned." -- Frank Herbert