Quote for the day:

"It is not such a fierce something to lead once you see your leadership as part of God's overall plan for his world." -- Calvin Miller

Boards don’t need cyber metrics — they need risk signals

Decision-makers want to know whether risk is increasing or decreasing, whether controls are effective, and whether the organization can limit damage when prevention fails. Metrics are therefore useful when they clarify those questions. “Time is really the universal metric because everyone can understand time,” Richard Bejtlich, strategist and author in residence at Corelight, tells CSO. “How fast do we detect problems, and how fast do we contain them. Dwell time, containment time. That’s the whole game for me.” Organizations cannot prevent every intrusion, Bejtlich argues, but they can measure how quickly they recognize and contain one. ... Wendy Nather, a longtime CISO who is now an advisor at EPSD, cautions against equating measurement with understanding. “When you are reporting to the board, there are some things you just cannot count that you have to report anyway,” she tells CSO. She points to incidents, near misses, and changes in assumptions as examples. “Anything that changes your assumptions about how you’re managing your security program, you should be bringing those to the board, even if you can’t count them,” Nather says. Regular metrics can create a rhythm of predictability, and that predictability could lull board members into a false sense of security. “Metrics are very seductive,” she says. “They lead us toward things that can be counted, that happen on a regular basis.” The result may be a steady flow of data that obscures structural risk or emerging weaknesses, Nather warns.
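
The two time-based measures Bejtlich names can be sketched in a few lines. This is a minimal illustration, assuming each incident record carries three timestamps (when the intrusion began, when it was detected, and when it was contained). The `Incident` class, its field names, and the sample data are hypothetical and do not come from the article.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Incident:
    # Hypothetical record layout; the field names are assumptions.
    started: datetime    # earliest known attacker activity
    detected: datetime   # when defenders recognized the intrusion
    contained: datetime  # when the intrusion was contained


def dwell_time(incident: Incident) -> timedelta:
    """Time the intruder was present before detection."""
    return incident.detected - incident.started


def containment_time(incident: Incident) -> timedelta:
    """Time from detection to containment."""
    return incident.contained - incident.detected


def median_hours(durations: list[timedelta]) -> float:
    """Median duration in hours; the median resists skew from one long incident."""
    return median(d.total_seconds() / 3600 for d in durations)


# Made-up sample incidents, purely for illustration.
incidents = [
    Incident(datetime(2025, 1, 1, 0), datetime(2025, 1, 3, 0), datetime(2025, 1, 3, 6)),
    Incident(datetime(2025, 2, 1, 0), datetime(2025, 2, 1, 12), datetime(2025, 2, 1, 14)),
    Incident(datetime(2025, 3, 1, 0), datetime(2025, 3, 2, 0), datetime(2025, 3, 2, 4)),
]

median_dwell = median_hours([dwell_time(i) for i in incidents])
median_containment = median_hours([containment_time(i) for i in incidents])
print(f"median dwell: {median_dwell:.1f} h, median containment: {median_containment:.1f} h")
```

Comparing these medians from one reporting period to the next speaks to whether risk is rising or falling. As Nather cautions, the numbers do not replace reporting the incidents and changed assumptions that cannot be counted.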

The Enterprise AI Postmortem Playbook: Diagnosing Failures at the Data Layer

Your first rule of the playbook is to treat AI incidents as data incidents until proven otherwise. Start by tagging the failure type. Document whether it is a structural issue, a retrieval misalignment, a conflict with a metric definition, or another category. Ideally, you want to assign the issue to an owner and attach evidence to force some discipline into the review. Try to classify the issue into clearly defined buckets. For example, you can use these four: structural failure, retrieval misalignment, definition conflict, or freshness failure. Once this part is clear, the investigation becomes more focused. The goal of this step is to isolate the data fault line. ... The next step is to move one layer deeper. Identify the source table behind the retrieved context. You also want to confirm the timestamp of the last refresh. Check whether any ingestion jobs failed, partially completed, or ran late. Silent failures are common. A job may succeed technically while loading incomplete data. As you go through the playbook, continue tracing upstream. Find the transformation job that shaped the dataset. Look at recent schema changes. Check whether any business rules were updated. The idea here is to rebuild the exact path that led to the output. Try not to make any assumptions about model behavior at this stage; simply keep tracing until the process is complete. Don’t be surprised if the model simply worked with what it was given.

Top Attacks On Biometric Systems (And How To Defend Against Them)

Presentation attacks, often referred to as spoofing attacks, occur when an attacker presents a fake biometric sample to a sensor (like a camera or microphone) in an attempt to impersonate a legitimate user. Common examples include printed photos, video replays, silicone masks, prosthetics or synthetic fingerprints. More recently, high-quality deepfake videos have become a powerful new tool in the attacker’s arsenal. ... Passive liveness techniques, which analyze subtle physiological and behavioral signals without requiring user interaction, are particularly effective because they reduce friction while improving security. However, liveness detection must be resilient to unknown attack methods, not just tuned to detect known spoof types. ... Not all biometric attacks happen in front of the sensor. Replay and injection attacks target the biometric data pipeline itself. In these scenarios, attackers intercept, replay or inject biometric data, such as images or templates, directly into the system, bypassing the sensor entirely. ... Defensive strategies must extend beyond the biometric algorithm. Secure transmission, encryption in transit, device attestation, trusted execution environments and validation that data originates from an authorized sensor are all essential. ... Although less visible to end users, attacks targeting biometric templates and databases can pose long-term risks. If biometric templates are compromised, the impact extends far beyond a single breach.
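
One way to picture validation that data originates from an authorized sensor, combined with replay protection, is a keyed signature over each capture plus a one-time challenge. The sketch below is an illustration under stated assumptions, not a description of any vendor's pipeline. The shared key, the function names, and the in-memory nonce store are all hypothetical, and a real deployment would keep the key in secure hardware and pair this with device attestation.

```python
import hashlib
import hmac
import secrets

# Hypothetical per-sensor secret; in practice this would live in a secure element.
SENSOR_KEY = secrets.token_bytes(32)

# Server-side record of challenges that have been issued but not yet used.
_outstanding_nonces: set[bytes] = set()


def issue_challenge() -> bytes:
    """Server: create a one-time nonce the sensor must bind into its capture."""
    nonce = secrets.token_bytes(16)
    _outstanding_nonces.add(nonce)
    return nonce


def sign_capture(key: bytes, nonce: bytes, sample: bytes) -> bytes:
    """Sensor: authenticate the sample together with the server's nonce."""
    return hmac.new(key, nonce + sample, hashlib.sha256).digest()


def verify_capture(key: bytes, nonce: bytes, sample: bytes, tag: bytes) -> bool:
    """Server: accept only a fresh nonce accompanied by a valid tag."""
    if nonce not in _outstanding_nonces:
        return False  # unknown or already-used nonce: likely a replay
    _outstanding_nonces.discard(nonce)  # each nonce is valid exactly once
    expected = hmac.new(key, nonce + sample, hashlib.sha256).digest()
    return hmac.compare_digest(expected, tag)  # constant-time comparison


nonce = issue_challenge()
sample = b"face-image-bytes"  # placeholder for real capture data
tag = sign_capture(SENSOR_KEY, nonce, sample)

first_attempt = verify_capture(SENSOR_KEY, nonce, sample, tag)  # genuine capture
replayed = verify_capture(SENSOR_KEY, nonce, sample, tag)       # same message sent again
injected = verify_capture(SENSOR_KEY, issue_challenge(), b"fake", b"\x00" * 32)
print(first_attempt, replayed, injected)
```

The genuine capture is accepted once; the replayed copy fails because its nonce has been consumed, and the injected sample fails because an attacker without the sensor key cannot produce a valid tag.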

Open-source security debt grows across commercial software

High- and critical-risk findings remain widespread. Most codebases contain at least one high-risk vulnerability, and nearly half contain at least one critical-risk issue. Those rates dipped slightly from the prior year even as total vulnerability counts rose. Supply chain attacks add another layer of risk. Sixty-five percent of surveyed organizations experienced a software supply chain attack in the past year. ... “As AI reshapes software development, security teams will have to continue to adapt in turn. Security budgets and security guidelines should reflect this new reality. Leaders should continue to invest in tooling and education required to equip teams to manage the drastic increase in velocity, volume, and complexity of applications,” Mackey said. Board-level reporting also requires adjustment as vulnerability volumes rise. ... Outdated components appear in nearly every audited environment. More than nine in ten codebases contain components that are several years out of date or show no recent development activity. A large share of components run many versions behind current releases. Only a small fraction operate on the latest available version. This maintenance debt intersects with regulatory obligations. The EU Cyber Resilience Act entered into effect in late 2024, with key reporting requirements taking effect in 2026 and broader enforcement following in 2027.

The agentic enterprise: Why value streams and capability maps are your new governance control plane

The enterprise is currently undergoing a seismic pivot from generative AI, which focuses on content creation, to agentic AI, which focuses on goal execution. Unlike their predecessors, these agents possess “structured autonomy”: the ability to perceive contexts, plan actions and execute across systems without constant human intervention. For the CIO and the enterprise architect, this is not merely an upgrade in automation speed; it is a fundamental shift in the firm’s economic equation. We are moving from labor-centric workflows to digital labor capable of disassembling and reassembling entire value chains. ... In an agentic enterprise, the value stream map is no longer just a diagram; it is the control plane. It must explicitly define the handoff protocols between human and digital agents. In my opinion, value stream maps must move from static documents stored in a repository to context documents used to drive agentic automation. ... If a value stream does not exist, you cannot automate it. For new agentic workflows, do not map the current human process. Instead, use an outcome-backwards approach. Work backward from the concrete deliverable (e.g., customer onboarded) to identify the minimum viable API calls required. Before granting write access, run the new agentic stream in shadow mode to validate agent decisions against human outcomes.
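
The shadow-mode check described above can be sketched as a comparison of logged agent decisions against what humans actually did on the same cases, with no writes performed by the agent. This is a minimal sketch under assumptions: the `ShadowResult` record, the agreement threshold, and the minimum case count are hypothetical values chosen for illustration, not figures from the article.

```python
from dataclasses import dataclass


@dataclass
class ShadowResult:
    # Hypothetical log entry: the agent's proposed decision is recorded, never executed.
    case_id: str
    human_outcome: str
    agent_decision: str

    @property
    def agrees(self) -> bool:
        return self.human_outcome == self.agent_decision


def agreement_rate(results: list[ShadowResult]) -> float:
    """Fraction of cases where the agent matched the human outcome."""
    if not results:
        return 0.0
    return sum(r.agrees for r in results) / len(results)


def ready_for_write_access(
    results: list[ShadowResult],
    threshold: float = 0.95,  # assumed policy value, not from the source
    min_cases: int = 100,     # assumed minimum sample size, not from the source
) -> bool:
    """Gate write access on enough shadow cases and a high enough agreement rate."""
    return len(results) >= min_cases and agreement_rate(results) >= threshold


# Made-up onboarding cases for illustration.
results = [
    ShadowResult("c1", "approve", "approve"),
    ShadowResult("c2", "reject", "reject"),
    ShadowResult("c3", "approve", "escalate"),
    ShadowResult("c4", "approve", "approve"),
]

rate = agreement_rate(results)
disagreements = [r.case_id for r in results if not r.agrees]
print(rate, disagreements, ready_for_write_access(results, threshold=0.9, min_cases=3))
```

The list of disagreeing case IDs is often more useful than the rate itself, since each mismatch shows where a handoff protocol between human and agent may need to be defined more explicitly.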

Beyond compliance: Building a culture of data security in the digital enterprise

Cyber compliance is something organisations across industrial sectors take seriously, especially with new regulations being introduced and non-compliance carrying consequences such as hefty penalties. Hence, businesses are placing compliance among their top priorities. However, hyper-focusing only on compliance can lead to tunnel vision, crippling creativity and innovation. It fails to offer a comprehensive risk assessment due to the checklist approach it follows, exposing organisations to vulnerabilities and fast-evolving threats. Having a compliance-first mindset can lead to incomplete risk assessment, creating blind spots and gaps in security provisions. ... With businesses relying on data for operations, customer engagement, and decision-making, ensuring data security protects both users and organisations. Data breaches have severe consequences, including financial losses, reputational damage, customer churn, and regulatory penalties. With data moving across on-premises data centers, cloud platforms, third-party ecosystems, remote work environments, and AI-driven applications, there is a need for a holistic, culture-driven approach to cybersecurity. ... Data protection was traditionally focused on safeguarding the perimeter by securing networks and systems within the physical boundaries where data was normally stored.

If you thought RTO battles were bad, wait until AI mandates start taking hold across the industry

With the advent of generative AI and the incessant beating of the drum by executives hellbent on unlocking productivity gains, we could see a revival of the dreaded workforce mandate, only this time with AI. We’ve already had a glimpse of the same RTO tactics being used with AI over the last year. In mid-2025, Microsoft introduced new rules aimed at boosting AI use across the company, with an internal memo warning staff that “using AI is no longer optional”. ... As with RTO mandates, we’re now reaching a point where upward mobility within the enterprise could be at risk as a result of AI use. It’s a tactic initially touted by Dell in 2024 when enforcing its own hybrid work rules, which prompted a fierce backlash among staff. Forcing workers to use AI or risk losing out on promotions will have the effect executives want, namely that employees will use the technology, but that’s missing the point entirely. AI has been framed by many big tech providers as a prime opportunity to supercharge productivity and streamline enterprise efficiency. We’ve all heard the marketing jargon. If business leaders are at the point where they’re forcing staff to use the technology, it raises the question of whether it’s actually having the desired effect, which recent analysis suggests it’s not. ... Recent analysis from CompTIA found roughly one-third of companies now require staff to complete AI training.

Architects have the difficult job of understanding tradeoffs between

proprietary cloud services and cross-cloud platforms. For example, should

developers use AWS Glue, Azure Data Factory, or Google Cloud Data Fusion to

develop data pipelines on the respective platforms, or should they adopt a

data integration platform that works across clouds? ... “Managing multicloud

is like learning multiple languages from AWS, Azure, Oracle, and others, and

it’s rare to have teams that can traverse these environments fluidly and

effectively. Plus, services and concepts are not portable among clouds,

especially in cloud-native PaaS services that go beyond IaaS,” says Harshit

Omar, co-founder and CTO at FluidCloud. One way to work around this issue is

to assign an AI agent to support the developer or architect in evaluating

platform selections. ... Standardizing infrastructure and service

configurations across different clouds requires expertise in different naming

conventions, architecture, tools, APIs, and other paradigms. Look for genAI

tools to act as a translator to streamline configurations, especially for

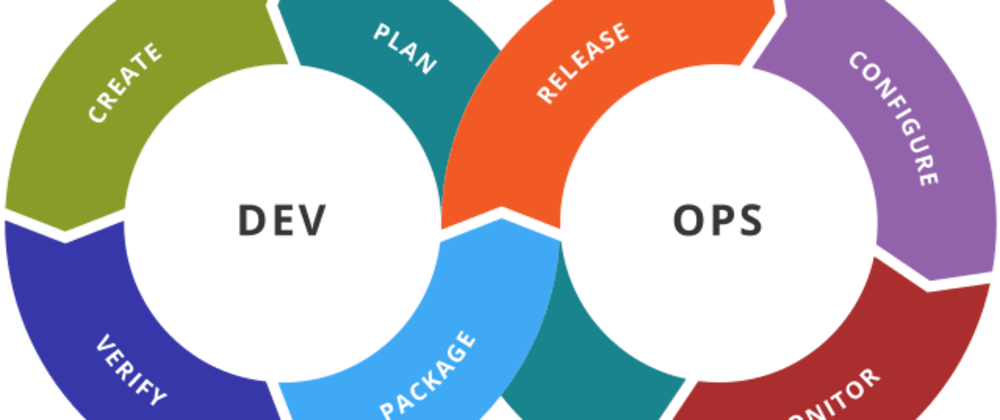

organizations that can templatize their requirements. ... CI/CD,

infrastructure-as-code, and process automation are key tools for driving

efficiency, especially when tasks span multiple cloud environments. Many of

these tools use basic flows and rules to streamline tasks or orchestrate

operations, which can create boundary cases that cause process-blocking

errors.

Architects have the difficult job of understanding tradeoffs between

proprietary cloud services and cross-cloud platforms. For example, should

developers use AWS Glue, Azure Data Factory, or Google Cloud Data Fusion to

develop data pipelines on the respective platforms, or should they adopt a

data integration platform that works across clouds? ... “Managing multicloud

is like learning multiple languages from AWS, Azure, Oracle, and others, and

it’s rare to have teams that can traverse these environments fluidly and

effectively. Plus, services and concepts are not portable among clouds,

especially in cloud-native PaaS services that go beyond IaaS,” says Harshit

Omar, co-founder and CTO at FluidCloud. One way to work around this issue is

to assign an AI agent to support the developer or architect in evaluating

platform selections. ... Standardizing infrastructure and service

configurations across different clouds requires expertise in different naming

conventions, architecture, tools, APIs, and other paradigms. Look for genAI

tools to act as a translator to streamline configurations, especially for

organizations that can templatize their requirements. ... CI/CD,

infrastructure-as-code, and process automation are key tools for driving

efficiency, especially when tasks span multiple cloud environments. Many of

these tools use basic flows and rules to streamline tasks or orchestrate

operations, which can create boundary cases that cause process-blocking

errors.

In perfect harmony: How Emerald AI is turning data centers into flexible grid assets

At the core of Emerald AI is its Emerald Conductor platform. Described by Sivaram as “an AI for AI,” the system orchestrates thousands of AI workloads across one or more data centers, dynamically adjusting operations to respond to grid conditions while ensuring the facility maintains performance. The system achieves this through a closed-loop orchestration platform comprising an autonomous agent and a digital twin simulator. ... A point keenly made by Steve Smith, chief strategy and regulation officer at National Grid, at the time of the announcement: “As the UK’s digital economy grows, unlocking new ways to flexibly manage energy use is essential for connecting more data centers to our network efficiently.” The second reason was National Grid's transatlantic stature, as a company active in both the UK and US markets, and its commitment to the technology. “They’ve invested in the program and agreed to a demo, which makes them the ideal partner for our first international launch,” says Sivaram. The final, and most important, factor, notes Sivaram, was access to the NextGrid Alliance, a consortium of 150 utilities worldwide. By gaining access to such a robust partner network, the deal could serve as a springboard for further international projects. This aligns with the company’s broader partnership approach. Emerald AI has already leveraged Nvidia’s cloud partner network to test its technology across US data centers, laying the groundwork for broader deployment and continued global collaboration.

7 ways to tame multicloud chaos with generative AI

Architects have the difficult job of understanding tradeoffs between proprietary cloud services and cross-cloud platforms. For example, should developers use AWS Glue, Azure Data Factory, or Google Cloud Data Fusion to develop data pipelines on the respective platforms, or should they adopt a data integration platform that works across clouds? ... “Managing multicloud is like learning multiple languages from AWS, Azure, Oracle, and others, and it’s rare to have teams that can traverse these environments fluidly and effectively. Plus, services and concepts are not portable among clouds, especially in cloud-native PaaS services that go beyond IaaS,” says Harshit Omar, co-founder and CTO at FluidCloud. One way to work around this issue is to assign an AI agent to support the developer or architect in evaluating platform selections. ... Standardizing infrastructure and service configurations across different clouds requires expertise in different naming conventions, architecture, tools, APIs, and other paradigms. Look for genAI tools to act as a translator to streamline configurations, especially for organizations that can templatize their requirements. ... CI/CD, infrastructure-as-code, and process automation are key tools for driving efficiency, especially when tasks span multiple cloud environments. Many of these tools use basic flows and rules to streamline tasks or orchestrate operations, which can create boundary cases that cause process-blocking errors.
