Quote for the day:

"To strongly disagree with someone, and yet engage with them with respect, grace, humility and honesty, is a superpower" -- Vala Afshar

Is ‘sovereign cloud’ finally becoming something teams can deploy – not just discuss?

Historically, sovereign cloud discussions in Europe have been driven primarily by risk mitigation. Data residency, legal jurisdiction, and protection from international legislation have dominated the narrative. These concerns are valid, but they have framed sovereign cloud largely as a defensive measure – a way to reduce exposure – rather than as an enabler of innovation or value creation. Without a clear value proposition beyond compliance, sovereign cloud has struggled to compete with hyperscale public cloud platforms that offer scale, maturity, and rich developer ecosystems. The absence of enforceable regulation has further compounded this. ... Policymakers and enterprises are also beginning to ask a more practical question: where does sovereign cloud actually create the most value? The answer increasingly points to innovation ecosystems, critical national capabilities, and trust. First, there is a growing recognition that sovereign cloud can underpin domestic innovation, particularly in areas such as AI, advanced research, and data-intensive start-ups. Organisations working with sensitive datasets, intellectual property, or public funding often require cloud environments that are both scalable and secure. ... Second, the sovereign cloud is increasingly being aligned with critical digital infrastructure. Sectors like healthcare, energy, transportation, and defence depend on continuity, accountability, and control.

India’s DPDP rules 2025: Why access controls are priority one for CIOs

The security stack has traditionally broken down at the point of data rendering or exfiltration. Firewalls and encryption protect data in transit and at rest, but once the data is rendered on a screen, the risk of a breach through smartphone cameras, screenshots, or unauthorized sharing falls outside the security stack’s ability to protect it. ... Poor enterprise access practices amplify this risk. Over-provisioned user accounts, inconsistent multi-factor authentication, poor logging, and the absence of contextual checks make it easy for insider threats, credential compromise, and supply chain breaches to succeed. Under DPDP, accountability also extends to processors, so third-party CRM or cloud access must meet the same security standards. ... Shift from trust by implication to trust by verification. Implement least-privilege access to ensure users view only required apps and data. Add device posture with device binding, location, time, watermarking and behavior analysis to deny suspicious access. ... Implement identity infrastructure for just-in-time access and automated de-provisioning based on role changes. Record fine-grained, immutable logs (user, device, resource, date/time) for breach analysis and annual retention. ... Enable dynamic, user-level watermarks (injecting username, IP address, timestamp) for forensic analysis. Prohibit unauthorized screen capture, sharing, or download activity during sensitive sessions, while permitting approved business processes.
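
To make the least-privilege and logging advice above a little more concrete, here is a minimal Python sketch. It is not taken from the article or from the DPDP rules: the role names, the `ROLE_GRANTS` table, the `AccessRequest` fields and the hash-chaining scheme are all illustrative assumptions. Note that a hash chain only makes a log tamper-evident; true immutability would also need write-once storage.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime

# Hypothetical role-to-resource grants; real grants would come from an identity provider.
ROLE_GRANTS = {
    "support_agent": {"crm:tickets"},
    "finance_analyst": {"crm:invoices", "erp:ledger"},
}


@dataclass(frozen=True)
class AccessRequest:
    user: str
    role: str
    device_id: str
    resource: str
    device_is_bound: bool  # assumed flag: device was previously registered to this user
    country: str


def decide(request: AccessRequest, allowed_countries: set) -> bool:
    """Allow only if the role grants the resource and the context checks pass."""
    granted = request.resource in ROLE_GRANTS.get(request.role, set())
    context_ok = request.device_is_bound and request.country in allowed_countries
    return granted and context_ok


@dataclass
class AuditLog:
    """Append-only log in which each entry commits to the hash of the previous one."""
    entries: list = field(default_factory=list)

    def append(self, request: AccessRequest, allowed: bool, when: datetime) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        record = {
            "user": request.user,
            "device": request.device_id,
            "resource": request.resource,
            "time": when.isoformat(),
            "allowed": allowed,
            "prev_hash": prev_hash,
        }
        payload = json.dumps(record, sort_keys=True).encode("utf-8")
        record["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if entry["prev_hash"] != prev_hash:
                return False
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

In use, every call to `decide` would be followed by `AuditLog.append`, so that denied attempts are recorded alongside granted ones, and `verify` could run as part of breach analysis.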

What really caused that AWS outage in December?

The back-story was broken by the Financial Times, which reported the 13-hour outage was caused by a Kiro agentic coding system that decided to improve operations by deleting and then recreating a key environment. AWS on Friday shot back to flag what it dubbed “inaccuracies” in the FT story. “The brief service interruption they reported on was the result of user error – specifically misconfigured access controls – not AI as the story claims,” AWS said. ... “The issue stemmed from a misconfigured role – the same issue that could occur with any developer tool (AI powered or not) or manual action.” That’s an impressively narrow interpretation of what happened. AWS then promised it won’t do it again. ... The key detail missing – which AWS would not clarify – is just what was asked and how the engineer replied. Had the engineer been asked by Kiro “I would like to delete and then recreate this environment. May I proceed?” and the engineer replied, “By all means. Please do so,” that would have been user error. But that seems highly unlikely. The more likely scenario is that the system asked something along the lines of “Do you want me to clean up and make this environment more efficient and faster?” Did the engineer say “Sure” or did the engineer respond, “Please list every single change you are proposing along with the likely result and the worst-case scenario result. Once I review that list, I will be able to make a decision.”

The back-story was broken by the Financial Times, which reported the 13-hour

outage was caused by a Kiro agentic coding system that decided to improve

operations by deleting and then recreating a key environment. AWS on Friday shot

back to flag what it dubbed “inaccuracies” in the FT story. “The brief service

interruption they reported on was the result of user error — specifically

misconfigured access controls — not AI as the story claims,” AWS said. ... “The

issue stemmed from a misconfigured role — the same issue that could occur with

any developer tool (AI powered or not) or manual action.” That’s an impressively

narrow interpretation of what happened. AWS then promised it won’t do it again.

... The key detail missing — which AWS would not clarify — is just what was

asked and how the engineer replied. Had the engineer been asked by Kiro “I would

like to delete and then recreate this environment. May I proceed?” and the

engineer replied, “By all means. Please do so,” that would have been user error.

But that seems highly unlikely. The more likely scenario is that the system

asked something along the lines of “Do you want me to clean up and make this

environment more efficient and faster?” Did the engineer say “Sure” or did the

engineer respond, “Please list every single change you are proposing along with

the likely result and the worst-case scenario result. Once I review that list, I

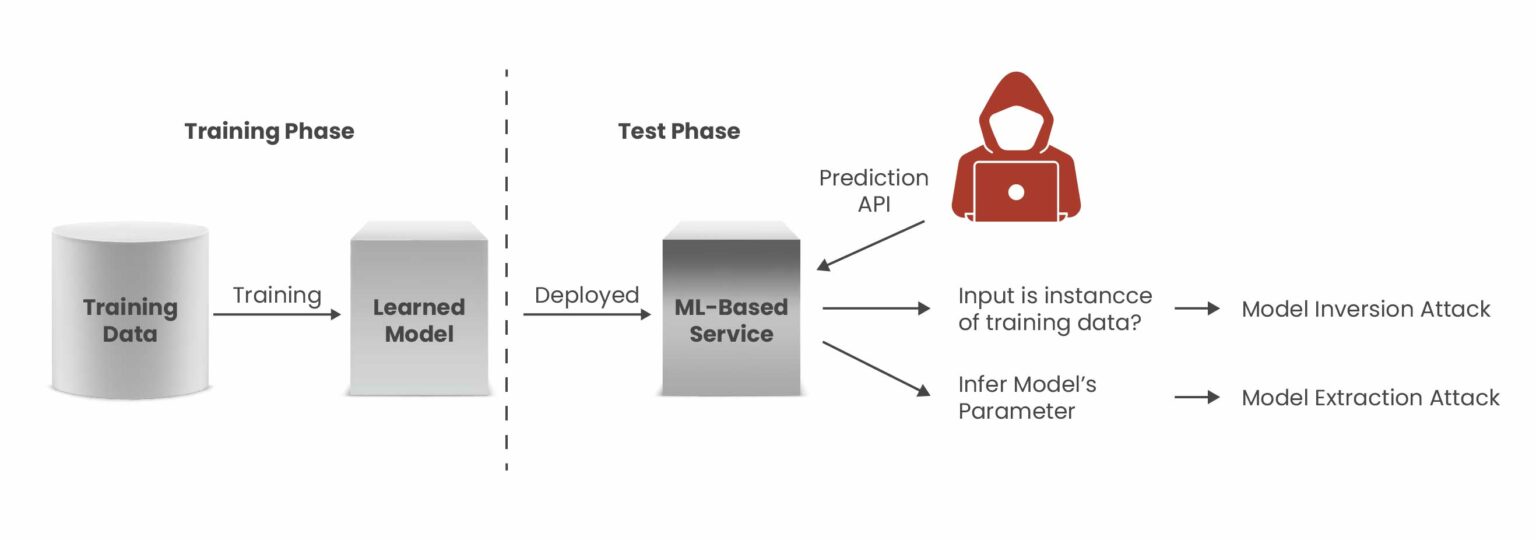

will be able to make a decision.”Model Inversion Attacks: Growing AI Business Risk

A model inversion attack is a form of privacy attack against machine learning systems in which an adversary uses the outputs of a model to infer sensitive information about the data used to train it. Rather than breaching a database or stealing credentials, attackers observe how a model responds to input queries and leverage those outputs, often including confidence scores or probability values, to reconstruct aspects of the training data that should remain private. ... This type of attack differs fundamentally from other ML attacks, such as membership inference, which aims to determine whether a specific data point was part of the training set, and model extraction, which seeks to copy the model itself. ... Successful model inversion attacks can inflict significant damage across multiple areas of a business. When attackers extract sensitive training data from machine learning models, organizations face not only immediate financial losses but also lasting reputational harm and operational setbacks that continue well beyond the initial incident. ... Attackers target inference-time privacy by moving through multiple stages, submitting carefully crafted queries, studying the model’s responses, and gradually reconstructing sensitive attributes from the outputs. Because these activities can resemble normal usage patterns, such attacks frequently remain undetected when monitoring systems are not specifically tuned to identify machine learning–related security threats.

It’s time to rethink CISO reporting lines

The age-old problem with CISOs reporting into CIOs is that it could present – or at least appear to present – a conflict of interest. Cybersecurity consultant Brian Levine, a former federal prosecutor who serves as executive director of FormerGov, says that concern is even more warranted today. “It’s the legacy model: Treat security as a technical function instead of an enterprise-wide risk discipline,” he says. ... Enterprise CISOs should be reporting a notch higher, Levine argues. “Ideally, the CISO would report to the CEO or the general counsel, high-level roles explicitly accountable for enterprise risk. Security is fundamentally a risk and governance function, not a cost-center function,” Levine points out. “When the CISO has independence and a direct line to the top, organizations make clearer decisions about risk, not just cheaper ones.” ... Painter is “less dogmatic about where the CISO reports and more focused on whether they actually have a seat at the table,” he says. “Org charts matter far less than influence,” he adds. “Whether the CISO reports to the CIO, the CEO, or someone else, the real question is this: Are they brought in early, listened to, and empowered to shape how the business operates? When that’s true, the structure works. When it’s not, no reporting line will save it.” ... “When the CISO reports to the CIO, risk can be filtered, prioritized out of sight, or reshaped to fit a delivery narrative. It’s not about bad actors. It’s about role tension. And when that tension exists within the same reporting line, risk loses.”

AI drives cyber budgets yet remains first on the chop list

Cybersecurity budgets are rising sharply across large organisations, but a new multinational survey points to a widening gap between spending on artificial intelligence and the ability to justify that spending in business terms. ... "Security leaders are getting mandates to invest in AI, but nobody's given them a way to prove it's working. You can't measure AI transformation with pre-AI metrics," Wilson said. He added that security teams struggle to translate operational data into board-level evidence of reduced risk. "The problem isn't that security teams lack data. They're drowning in it. The issue is they're tracking the wrong things and speaking a language the board doesn't understand. Those are the budgets that get cut first. The window to fix this is closing fast," Wilson said. ... "We need new ways to measure security effectiveness that actually show business impact, because boards don't fund faster ticket closure, they fund measurable risk reduction and business resilience. We have to show that we're not just responding quickly but eliminating and improving the conditions that allow incidents to happen in the first place," he said. ... Security leaders reported pressure to invest in AI, while also struggling to link those investments to outcomes executives recognise as resilience and risk reduction. The report argues this tension may become harder to sustain if economic conditions tighten and boards begin looking for costs to cut.

A cloud-smart strategy for modernizing mission-critical workloads

As enterprises mature in their cloud journeys, many CIOs and senior technology leaders are discovering that modernization is not about where workloads run – it’s about how deliberately they are designed. This realization is driving a shift from cloud-first to cloud-smart, particularly for systems the business cannot afford to lose. A cloud-smart strategy, as highlighted by the Federal Cloud Computing Strategy, encourages agencies to weigh the long-term total cost of ownership and security risks rather than focusing only on immediate migration. ... Sticking indefinitely with legacy systems can lead to rising maintenance costs, inability to support new business initiatives, security vulnerabilities and even outages as old hardware fails. Many organizations reach a tipping point where they must modernize to stay competitive. The key is to do it wisely – balancing speed and risk and having a solid strategy in place to navigate the complexity. ... A cloud-smart strategy aligns workload placement with business risk, performance needs and regulatory expectations rather than ideology. Instead of asking whether a system can move to the cloud, cloud-smart organizations ask where it performs best. ... Rather than lifting and shifting entire platforms, teams separate core transaction engines from decisioning, orchestration and experience layers. APIs and event-driven integration enable new capabilities around stable cores, allowing systems to evolve incrementally without jeopardizing operational continuity.

Enterprises still can't get a handle on software security debt – and it’s only going to get worse

Four in five organizations are drowning in software security debt, new research shows, and the backlog is only getting worse. ... “The speed of software development has skyrocketed, meaning the pace of flaw creation is outstripping the current capacity for remediation,” said Chris Wysopal, chief security evangelist at Veracode. “Despite marginal gains in fix rates, security debt is becoming a much larger issue for many organizations.” Organizations are discovering more vulnerabilities as their testing programs mature and expand. Meanwhile, the accelerating pace of software releases creates a continuous stream of new code before existing vulnerabilities can be addressed. ... “Now that AI has taken software development velocity to an unprecedented level, enterprises must ensure they’re making deliberate, intelligent choices to stem the tide of flaws and minimize their risk,” said Wysopal. The rise in flaws classed as both “severe” and “highly exploitable” means organizations need to shift from generic severity scoring to prioritization based on real-world attack potential, advised Veracode. As such, researchers called for a shift from simple detection toward a more strategic framework of Prioritize, Protect, and Prove. ... “We are at an inflection point where running faster on the treadmill of vulnerability management is no longer a viable strategy. Success requires a deliberate shift,” said Wysopal.
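
As a rough illustration of moving from generic severity scoring to prioritization by attack potential, the Python sketch below ranks findings by severity weighted by exploit likelihood and exposure. This is not Veracode's method: the `Finding` fields, the 0-10 and 0-1 scales, and the 1.5 exposure weight are assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    identifier: str
    severity: float            # generic severity score, assumed 0-10 scale
    exploit_likelihood: float  # assumed 0-1 estimate of real-world exploitation
    internet_facing: bool      # assumed exposure flag for the affected asset


def attack_potential(finding: Finding) -> float:
    """Weight severity by exploit likelihood, with a boost for exposed assets."""
    exposure = 1.5 if finding.internet_facing else 1.0  # illustrative weight only
    return finding.severity * finding.exploit_likelihood * exposure


def prioritize(findings: list) -> list:
    """Return findings ordered from highest to lowest attack potential."""
    return sorted(findings, key=attack_potential, reverse=True)
```

With this weighting, a severe flaw that is unlikely to be exploited on an internal system can rank below a moderate flaw that is likely to be exploited on an internet-facing one, which is the reordering the excerpt argues for.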

Protecting your users from the 2026 wave of AI phishing kits

To protect your users today, you have to move past the idea of reactive filtering and embrace identity-centric security. This means your software needs to be smart enough to validate that a user is who they say they are, regardless of the credentials they provide. We’re seeing a massive shift toward behavioral analytics. Instead of just checking a password, your platform should be looking at communication patterns and login behaviors. If a user who typically logs in from Chicago suddenly tries to authorize a high-value financial transfer from a new device in a different country, your system should do more than just send a push notification. ... Beyond the tech, you need to think about the “human” friction you’re creating. We often prioritize convenience over security, but in the current climate, that’s a losing bet. Implementing “probabilistic approval workflows” can help. For example, if your system’s AI is 95% sure a login is legitimate, let it through. If that confidence drops, trigger a more rigorous verification step. ... The phishing scams of 2026 are successful because they leverage the same tools we use for productivity. To counter them, we have to be just as innovative. By building identity validation and phishing-resistant protocols into the core of your product, you’re doing more than just securing data. You’re securing the trust that your business is built on.
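
The “probabilistic approval workflow” described above could look something like the following Python sketch. Only the 95% allow threshold comes from the excerpt; the `BLOCK_THRESHOLD` floor, the `Decision` names and the rule that high-value actions always trigger step-up verification are assumptions added for illustration.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    STEP_UP = "step_up"  # ask for a more rigorous verification step
    BLOCK = "block"


ALLOW_THRESHOLD = 0.95  # the 95% figure used in the excerpt
BLOCK_THRESHOLD = 0.30  # assumed floor, not from the source


def decide_login(confidence: float, high_value_action: bool = False) -> Decision:
    """Map a model's confidence that a login is legitimate to an action."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence < BLOCK_THRESHOLD:
        return Decision.BLOCK
    # Assumption: high-value actions get extra verification even at high confidence.
    if confidence >= ALLOW_THRESHOLD and not high_value_action:
        return Decision.ALLOW
    return Decision.STEP_UP
```

For the Chicago example, a transfer request from a new device abroad would presumably score well below 0.95 and carry `high_value_action=True`, so it would land on `STEP_UP` or `BLOCK` rather than a simple push notification.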
