When your teammate is a machine: 8 questions CISOs should be asking about AI

Incorporating AI into security technology can bring many benefits, according to
Rebecca Herold, an IEEE member and founder of The Privacy Professor consultancy:
streamlining work to shorten project completion times, making decisions quickly,
and finding problems more expeditiously. But, she adds, many half-baked
implementations are in use, and buyers "end up diving into the deep end of the
AI pool without doing one iota of scrutiny about whether or not the AI they view
as the HAL 9000 savior of their business even works as promised." She also warns
that when "flawed AI results go very wrong, causing privacy breaches, bias,
security incidents, and noncompliance fines, those using the AI suddenly realize
that this AI was more like the dark side of HAL 9000 than they had even
considered as being a possibility." To avoid having your AI teammate tell you,
"I'm sorry, Dave, I'm afraid I can't do that," when you are asking for results
that are accurate, non-biased, privacy-protective, and in compliance with data
protection requirements, Herold advises that every CISO ask eight questions.

Generative AI needs humans in the loop for widespread adoption

Generative AI by itself has many positives, but it is currently a work in
progress, and it will need to work with humans if it is to transform the world,
which it is almost certain to do. This blending of man and machine is best
described as “AI with humans in the loop”, and it is already being widely
adopted by businesses that want to cut operating costs and improve customer
service but also realise that humans will be crucial if these objectives are to
be achieved. One of the sectors embracing this new normal is financial
journalism. Reuters managing director Sue Brooks announced that AI will be used
to cover news stories and will create a “golden age” of news. Crucially, she
also said it was vital there “was always a human in the loop to ensure total
accuracy”. Reuters content now has automated time-coded transcripts and
translation of many languages into English, part of the Reuters Connect service.
Brooks went on to say that this meld would “free up brain power to be creative
and put all these tools in your toolbox to create magical experiences for
readers”.

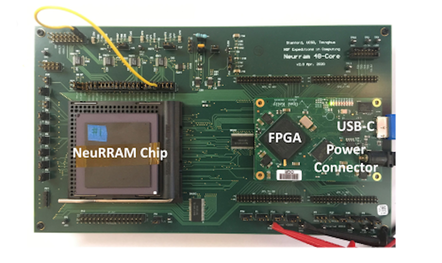

AI chip adds artificial neurons to resistive RAM for use in wearables, drones

According to Weier Wan, a graduate researcher at Stanford University and one of
the authors of the paper, published in Nature yesterday, NeuRRAM has been
developed as an AI chip that greatly improves the energy efficiency of AI
inference, thereby allowing complex AI functions to be realized directly within
battery-powered edge devices, such as smart wearables, drones, and industrial
IoT sensors. "In today's AI chips, data processing and data storage happen in
separate places – computing unit and memory unit. The frequent data movement
between these units consumes the most energy and becomes the bottleneck for
realizing low-power AI processors for edge devices," he said. To address this,
the NeuRRAM chip implements a "compute-in-memory" model, where processing
happens directly within memory. It also makes use of resistive RAM (RRAM), a
memory type that is as fast as static RAM but is non-volatile, allowing it to
store AI model weights.

The CISO role has changed, and CISOs need to change with it

Perhaps the best way to improve security, and make the CISO’s job a little
easier, does not rely on technology. A change in culture is the best way to
truly create an organization where security is top of mind. CISOs, who are part
of upper management but also part of the security team, are uniquely positioned
to lead this change, both with other leaders and with those they lead. A
security-first culture requires embedding security in everything a business
does. Developers should be enabled to write code that is free from
vulnerabilities and resistant to attacks from the moment it is written, rather
than having security become a consideration much later in the SDLC. Designated
security champions from the developer ranks should lead this charge, acting as
both coach and cheerleader. This approach means that security is not mandated
from above but is part of the team’s DNA and backed up by management. This
cannot be an overnight change, and it may be met with resistance. But the threat
landscape is too complex, too advanced and too ubiquitous for any one person or
even a small team to handle alone.

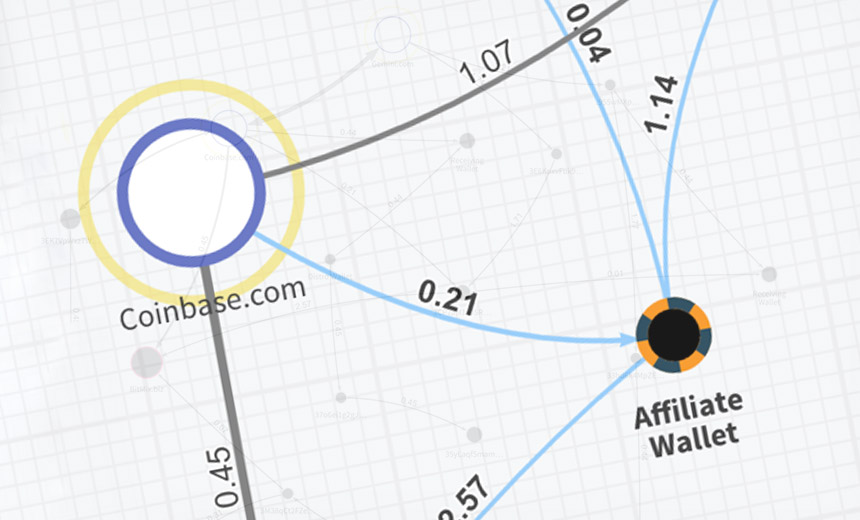

Hosting Provider Accused of Facilitating Nation-State Hacks

The allegations, whether true or not, are a reminder that cybercrime doesn't
operate in a vacuum. Rather, there's a burgeoning service and support ecosystem.
Services include initial access brokers who provide on-demand access to victims,
botnet owners who facilitate malware-laden phishing attacks, and repacking
services that make malware tougher to spot. They also include
ransomware-as-a-service operators who lease their code to business partners, the
affiliates who use it to infect victims, and cryptocurrency money laundering
services that help criminals, operating online or off, convert their ill-gotten
gains into cash. Online attackers require infrastructure for launching their
attacks. Some make use of bulletproof service providers, which provide VPS and
other types of hosting services in return for a promise, typically for a
relatively high fee, that customers can do whatever they like. Halcyon's report
alleges that Cloudzy functionally operates in a similar manner, due to a lack of
proper oversight, including allowing customers who pay in cryptocurrency to
remain anonymous.

The tug-of-war between optimization and innovation in the CIO’s office

The downside of prioritizing optimization is the risk of overlooking
opportunities for innovation that could have long-term impacts on the
organization’s growth and relevance. Think game-changing new systems, such as AI
that increases supply chain efficiency, or automation of manufacturing steps
that speeds up production and reduces costs at the same time. Usually, the value
of a business is directly defined by the innovations that can drive it. Think
about the services we use now, from food delivery to home sharing, where the
draw is a better customer experience through innovation. Emphasizing innovation
enables companies to stay ahead of the curve, attracting customers with
cutting-edge products and services. ... These mistakes will kill a company.
Taking resources away from innovation and spending them on making things work as
they should removes business value. I think we’re going to see a great many
businesses spend so much money to fix past mistakes that they’ll end up throwing
in the towel.

Flight to cloud drives IaaS networking adoption

IDC describes IaaS cloud networking as a foundational networking layer that
allows large enterprises and technology providers to connect data centers,
colocation environments, and cloud infrastructure. With IaaS networking, the
network infrastructure and services are scalable and available on-demand,
provisioned and consumed just like any other cloud service. That makes this
infrastructure more scalable and agile than traditional approaches to
networking, according to IDC. Direct cloud connects/interconnects is the largest
segment of IaaS networking, accounting for more than half of all IaaS networking
revenue. The four other major segments of the IaaS networking market are cloud
WAN (transit), IaaS load balancing, IaaS service mesh, and cloud VPNs (to IaaS
clouds), according to IDC. Cloud WAN, which includes cloud middle-mile and core
transit networks, is the fastest-growing segment of IaaS networking, with a
forecasted five-year compound annual growth rate of 112%, says IDC. IaaS service
meshes are also expected to see strong growth, with a forecasted five-year
compound annual growth rate of 68%.
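
To put those growth rates in perspective, here is a minimal sketch of what a
compound annual growth rate implies over the forecast window. It assumes the
standard CAGR definition (the same percentage growth compounding every year) and
that the rates apply to segment revenue over five full years; the excerpt gives
no dollar figures, so only the growth multiple is computed. The function and
variable names are illustrative.

```python
def growth_multiple(cagr_percent: float, years: int) -> float:
    """Return how many times larger a value becomes after compounding
    cagr_percent growth once per year for the given number of years."""
    return (1 + cagr_percent / 100) ** years


# Rates quoted in the excerpt above (percent per year, five-year forecast).
forecasts = {"cloud WAN": 112, "IaaS service mesh": 68}

multiples = {
    segment: growth_multiple(rate, 5) for segment, rate in forecasts.items()
}

for segment, multiple in multiples.items():
    print(f"{segment}: roughly {multiple:.1f}x over five years")
```

Under these assumptions, a 112% CAGR works out to roughly a 43-fold increase
over five years and a 68% CAGR to roughly a 13-fold increase.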

The rise of Generative AI in software development

AI is accelerating the process of going from zero to one – it jumpstarts
innovation, releasing developers from the need to start from scratch. But the 1
to n problem remains: they start faster but will quickly have to deal with
issues like security, governance, code quality, and managing the entire
application lifecycle. The largest cost of an application isn't creating it –
it's maintaining it, adapting it, and ensuring it will last. And if
organisations were already struggling with tech debt (code left behind by
developers who quit, or by vendors who sunset apps and create monstrous
workloads to take care of), now they'll also have to handle massive amounts of
AI-generated code that their developers may or may not understand. As tempting
as it may be for CIOs to assume they can train teams on how to prompt AI and use
it to get any answers they need, it might be more efficient to invest in
technologies that help you leverage Gen AI in ways that you can actually see,
control and trust. This is why I believe that in the future, fundamentally,
everything will be delivered on top of AI-powered low-code platforms.

Will law firms fully embrace generative AI? The jury is out | The AI Beat

On one hand, gen AI is shaking up the legal industry, with companies like
Everlaw adding options to their product portfolio, while Thomson Reuters can
integrate with Microsoft 365 Copilot to power legal content generation directly
in Word. On the other hand, lawyers tend to be a conservative bunch, and in this
case attorneys would likely be wise to be cautious, with headlines like “New
York lawyers sanctioned for using fake ChatGPT cases in legal brief” going
viral. Another problem is that their clients may not feel comfortable with law
firms using gen AI: a new survey found that one-third of consumer respondents
said they’re against any use of gen AI in the legal field. ... But with
Everlaw’s new gen AI now available in beta, lawyers can go beyond just
clustering data at the aggregate level to querying, summarizing and otherwise
extracting details from documents to get what they need. For example, the
company says that while it typically takes hours for a legal professional to
compose a statement of facts, it can now happen in about 10 seconds, giving
legal teams a rough draft to edit and fact check.

Vulnerability Management: Best Practices for Patching CVEs

In a perfect world, you would analyze all CVEs first to determine the priority
order for patching. But this just isn’t scalable due to the sheer number of
vulnerabilities and how frequently CVEs are discovered. In reality, only a
handful of CVEs actually affect your software. Of course, there’s no way to know
for certain how a CVE affects your application until it has been analyzed, but
because there are so many, including those from transitive dependencies, it is
nearly impossible to analyze them all before new CVEs are discovered or within
the time a tight release schedule allows. Instead, we recommend you start by
patching all critical and high-severity CVEs without analysis. ... Preventing,
detecting and patching CVEs needs to be a shared responsibility between
developers and security teams. It is not sustainable for security teams to bear
the responsibility of managing and patching CVEs alone. Development teams can
often be hesitant to push frequent updates for fear that updates to software
libraries will create bugs in their software.
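
The "patch critical and high first, analyze the rest later" recommendation can
be sketched as a simple triage step over scanner output. This is only an
illustration under stated assumptions: the `Finding` fields, the severity
labels, and the placeholder CVE identifiers are hypothetical and do not reflect
any particular scanner's schema.

```python
from dataclasses import dataclass

# Severity labels that get patched immediately, without per-CVE analysis.
PATCH_WITHOUT_ANALYSIS = {"critical", "high"}


@dataclass(frozen=True)
class Finding:
    """One scanner finding. Field names are hypothetical, not a real schema."""
    cve_id: str
    package: str
    severity: str


def triage(findings):
    """Split findings into (patch_now, analyze_later) by severity label."""
    patch_now, analyze_later = [], []
    for finding in findings:
        if finding.severity.strip().lower() in PATCH_WITHOUT_ANALYSIS:
            patch_now.append(finding)
        else:
            analyze_later.append(finding)
    return patch_now, analyze_later


# Placeholder identifiers for illustration only; these are not real CVEs.
findings = [
    Finding("CVE-0000-0001", "libexample", "Critical"),
    Finding("CVE-0000-0002", "libexample", "low"),
    Finding("CVE-0000-0003", "transitive-dep", "HIGH"),
    Finding("CVE-0000-0004", "transitive-dep", "medium"),
]

patch_now, analyze_later = triage(findings)
print("Patch now:", [f.cve_id for f in patch_now])
print("Analyze later:", [f.cve_id for f in analyze_later])
```

Splitting on the severity label alone keeps the first pass cheap, which is the
point of the recommendation; the remaining findings can then be analyzed as
time allows.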

Quote for the day:

"Our greatest battles are with our own minds." -- Jameson Frank