Quote for the day:

“The entrepreneur builds an enterprise; the technician builds a job.” -- Michael Gerber

If AI Owns the Decision, What Happens to Your Bank? 4 Smart Moves Now Will Aid Survival

The article from The Financial Brand explores the transformative role of

artificial intelligence in reshaping consumer financial decision-making and

the banking landscape. As AI tools become more sophisticated, they are moving

beyond simple automation to provide hyper-personalized financial coaching and

autonomous management. This shift allows consumers to delegate complex

tasks—such as optimizing savings, managing debt, and selecting investment

portfolios—to algorithms that analyze vast amounts of real-time data. For

financial institutions, this evolution presents both a challenge and an

opportunity; banks must transition from being mere transactional platforms to

becoming proactive financial partners. The integration of generative AI is

particularly highlighted as a catalyst for creating more intuitive user

interfaces that can explain financial nuances in natural language. However,

the piece also emphasizes the critical importance of trust and transparency.

For AI to be truly effective in a banking context, providers must ensure

ethical data usage and maintain a "human-in-the-loop" approach to mitigate

algorithmic bias and security risks. Ultimately, the future of banking lies in

a hybrid model where technology handles the heavy analytical lifting, enabling

customers to achieve better financial health through data-driven confidence

and streamlined digital experiences.

AI tool poisoning exposes a major flaw in enterprise agent security

In this VentureBeat article, Nik Kale examines the emerging threat of AI tool

poisoning, which exposes a fundamental flaw in enterprise agent security

architectures. Modern AI agents select tools from shared registries by

matching natural-language descriptions, but these descriptions lack human

verification. This oversight enables selection-time threats like tool

impersonation and execution-time issues such as behavioral drift. While

traditional software supply chain controls like code signing and Software Bill

of Materials (SBOMs) effectively ensure artifact integrity, they fail to

address behavioral integrity—whether a tool actually does what it claims. A

malicious tool might pass all artifact checks while containing

prompt-injection payloads or altering its server-side behavior

post-publication to exfiltrate sensitive data. To counter this, Kale proposes

a runtime verification layer using the Model Context Protocol (MCP). This

system employs discovery binding to prevent bait-and-switch attacks, endpoint

allowlisting to block unauthorized network connections, and output schema

validation to detect suspicious data patterns. By implementing a

machine-readable behavioral specification, organizations can establish a

tamper-evident record of a tool's intended operations. Kale advocates for a

graduated security model, beginning with mandatory endpoint allowlisting, to

protect enterprise AI ecosystems from the growing risks of automated agent

manipulation and data theft.
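
The three runtime checks named above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Kale's implementation and not the MCP SDK: the spec layout, the tool name, the host `api.rates.example.com`, and the function names are all hypothetical.

```python
import hashlib
import json
from urllib.parse import urlparse

# Hypothetical behavioral specification, recorded when the tool was approved.
APPROVED_SPEC = {
    "name": "currency_converter",
    "description": "Converts an amount between two currencies.",
    "allowed_hosts": ["api.rates.example.com"],
    "output_fields": {"amount": float, "currency": str},
}


def fingerprint(name: str, description: str) -> str:
    """Hash the fields an agent sees when it discovers a tool."""
    payload = json.dumps({"name": name, "description": description}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Stored at approval time so later changes to the listing are detectable.
APPROVED_FINGERPRINT = fingerprint(APPROVED_SPEC["name"], APPROVED_SPEC["description"])


def discovery_matches(name: str, description: str) -> bool:
    """Discovery binding: the tool being invoked must match what was approved."""
    return fingerprint(name, description) == APPROVED_FINGERPRINT


def endpoint_allowed(url: str) -> bool:
    """Endpoint allowlisting: only hosts named in the spec may be contacted."""
    return urlparse(url).hostname in APPROVED_SPEC["allowed_hosts"]


def output_valid(output: dict) -> bool:
    """Output schema validation: exact field set, each with the expected type."""
    fields = APPROVED_SPEC["output_fields"]
    if set(output) != set(fields):
        return False
    return all(isinstance(output[key], kind) for key, kind in fields.items())
```

A rewritten description changes the fingerprint, so `discovery_matches` fails; a call to any host outside the list fails `endpoint_allowed`; and an unexpected extra field in the response fails `output_valid`. Starting with only the allowlist check matches the graduated approach described above.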

Why OT security needs bilingual leaders

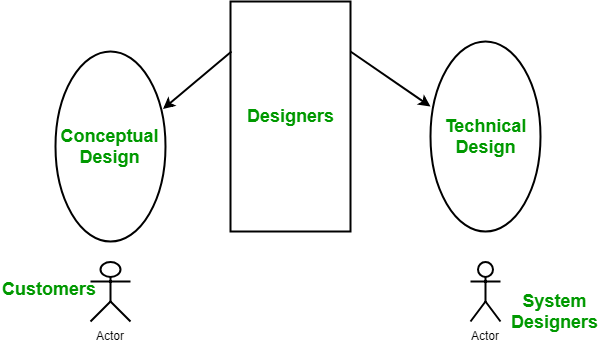

The article from e27 emphasizes the critical necessity for "bilingual"

leadership in the realm of Operational Technology (OT) security to bridge the

widening gap between industrial operations and Information Technology (IT). As

critical infrastructure becomes increasingly digitized, the traditional silos

separating shop-floor engineers and corporate cybersecurity teams have become

a significant liability. The author argues that true bilingual leaders are

those who possess a deep technical understanding of industrial control systems

alongside a sophisticated grasp of modern cybersecurity protocols. These

leaders act as essential translators, capable of explaining the nuances of

"uptime" and physical safety to IT departments, while simultaneously

articulating the urgency of threat landscapes and data integrity to plant

managers. The piece highlights that the convergence of these two worlds often

results in friction due to differing priorities—where IT focuses on

confidentiality, OT prioritizes availability. By fostering leadership that

speaks both "languages," organizations can implement holistic security

frameworks that do not compromise production efficiency. Ultimately, the

article contends that the future of industrial resilience depends on a new

generation of executives who can navigate the complexities of both the digital

and physical domains, ensuring that cybersecurity is integrated into the very

fabric of industrial engineering rather than treated as an external

afterthought.

The agentic future has a technical debt problem

In the article "The Agentic Future Has a Technical Debt Problem," Barr Moses

argues that the rapid, competitive deployment of AI agents is mirroring the

early mistakes of the cloud migration era. Drawing on a survey of 260

technology practitioners, Moses highlights a significant disconnect between

engineering leaders and the "builders" on the ground. While leadership often

maintains a high level of confidence in system reliability, nearly two-thirds

of organizations admitted to deploying agents faster than their teams felt

prepared to support. This haste has led to a massive accumulation of technical

debt; over 70% of fast-deploying builders anticipate needing to significantly

rearchitect or rebuild their systems. Critical operational foundations, such

as observability, governance, and traceability, are frequently sacrificed for

speed, leaving engineers to deal with agents that access unauthorized data or

lack manual override switches. The survey reveals that visibility into agent

behavior remains a primary blind spot, with most production issues being

discovered via customer complaints rather than automated monitoring.

Ultimately, the piece warns that without a shift toward prioritizing

infrastructure and instrumentation, the industry faces an inevitable "rebuild

reckoning." Moving forward, organizations must bridge the perception gap

between management and developers to ensure that agentic systems are not just

shipped, but are sustainable and controllable.

In Regulated Industries, Faster Testing Still Has to Be Defensible

The article "In Regulated Industries, Faster Testing Still Has to Be

Defensible" explores the delicate balance software engineering teams in

sectors like healthcare and finance must maintain between rapid AI-driven

innovation and stringent compliance requirements. While there is significant

pressure from stakeholders to accelerate release cycles through generative AI

for test generation and defect analysis, the author emphasizes that speed must

not come at the expense of auditability. In regulated environments, software

must not only function correctly but also possess a comprehensive audit trail,

including documented validation, end-to-end traceability, and clear evidence

of control. The piece argues that AI-generated artifacts should be subject to

the same rigorous version control and formal human review as traditional

engineering outputs, as accountability cannot be delegated to an algorithm.

Crucially, traceability should be integrated early into the planning phase

rather than treated as a post-development cleanup task. Ultimately, the

adoption of AI in quality engineering is most effective when it strengthens

release discipline and supports human-led verification processes. By

prioritizing narrow scopes, clear data access policies, and ongoing education,

organizations can leverage modern technology to achieve faster delivery

without sacrificing the defensibility of their testing records or risking

non-compliance with regulatory frameworks.

DevSecOps explained for growing technology businesses

The article "DevSecOps explained for growing technology businesses," authored

by Clear Path Security Ltd, details how small-to-medium enterprises (SMEs) can

integrate security into their development lifecycles without sacrificing

speed. The article defines DevSecOps as a cultural and procedural shift where

security is woven into daily delivery flows rather than being a separate

concluding step. For growing firms, the primary advantage lies in reducing

expensive rework and late-stage surprises by catching vulnerabilities early.

The framework rests on three pillars: people, process, and tooling. Instead of

overwhelming teams with complex enterprise-grade protocols, the author

suggests a risk-based, gradual implementation focusing on high-impact areas

like customer-facing apps and sensitive data handling. Core initial controls

should include automated code scanning, dependency checks, and secret

detection. Success is measured not by the volume of tools, but by practical

metrics like the reduction of post-release vulnerabilities and the speed of

high-priority remediation. To ensure adoption, businesses are advised to

follow a phased 90-day plan, starting with visibility and basic automation

before scaling complexity. Ultimately, the piece argues that DevSecOps acts as

a business enabler, fostering confidence and stability by aligning development

speed with robust risk management through lightweight, proportionate controls

that fit the organization’s specific size and technical needs.
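
Of the initial controls listed above, secret detection is the simplest to picture. The sketch below is a toy illustration, not a tool recommended by the article: the three patterns and the name `find_secrets` are assumptions chosen for the example, and production scanners ship far larger rule sets.

```python
import re

# Illustrative patterns only; real scanners use many more rules.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "hardcoded_password": re.compile(r"(?i)\bpassword\s*=\s*['\"][^'\"]{4,}['\"]"),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line number, rule name) for every line that matches a pattern."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings
```

Run as a pre-commit hook or a pipeline step, a check like this fails the build when `find_secrets` returns anything, which is the kind of lightweight, proportionate control the article has in mind for a first phase.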

Cuts are coming: is now the time to upskill?

The article "Cuts are coming: is now the time to upskill?" explores the

critical need for IT professionals to embrace continuous learning amidst a

volatile tech landscape defined by rising redundancies and the disruptive

influence of artificial intelligence. Despite persistent skills shortages, the

job market has tightened significantly, forcing individuals to take greater

personal responsibility for their professional development, often through

self-funded and self-directed methods. This shift is characterized by a move

away from traditional classroom settings toward agile micro-credentials,

cloud-based labs, and specialized certifications in high-demand areas like

cloud computing, data analytics, and cybersecurity. While organizations

recognize that upskilling existing talent is more cost-effective and

resilience-building than external hiring, employer-led investment in training

has paradoxically declined over the last decade. Consequently, workers are

increasingly motivated by job security concerns, with a majority considering

reskilling to maintain their relevance. However, the article highlights an "AI

trust paradox," noting that many businesses struggle to implement

transformative AI because they lack the necessary foundational data skills and

internal expertise. Ultimately, staying competitive in the modern economy

requires a proactive approach to skill acquisition, as the widening gap

between institutional needs and available talent places the onus of career

longevity squarely on the individual professional.

Cloud Security Alliance Expands Agentic AI Governance Work

The Cloud Security Alliance (CSA) has significantly expanded its commitment to

securing agentic AI systems through the introduction of three major governance

milestones aimed at "Securing the Agentic Control Plane." During the CSA

Agentic AI Security Summit, the organization’s CSAI Foundation announced the

launch of the STAR for AI Catastrophic Risk Annex, a dedicated initiative

running from mid-2026 through 2027 to address high-stakes risks associated

with advanced AI autonomy. Furthermore, the CSA achieved authorization as a

CVE Numbering Authority via MITRE, allowing it to formally track and

categorize vulnerabilities specific to the AI landscape. In a strategic move

to standardize security protocols, the CSA also acquired two critical

specifications: the Agentic Autonomous Resource Model and the Agentic Trust

Framework. The latter, developed by Josh Woodruff of MassiveScale.AI,

integrates Zero Trust principles into AI agent operations and aligns with

international standards like the NIST AI Risk Management Framework and the EU

AI Act. These developments reflect the CSA’s proactive approach to managing

the security challenges posed by autonomous AI entities, ensuring that

governance, risk management, and compliance keep pace with rapid technological

evolution. By centralizing these resources, the CSA aims to provide a unified,

transparent architecture for organizations to safely deploy and manage agentic

technologies within their enterprise cloud environments.

Stop treating identity as a compliance step. It’s infrastructure now

In the article "Stop treating identity as a compliance step: it’s

infrastructure now," Harry Varatharasan of ComplyCube argues that identity

verification (IDV) has transcended its traditional role as a back-office

compliance task to become foundational digital infrastructure. Across fintech,

telecoms, and government services, IDV now serves as the primary mechanism for

establishing trust and preventing fraud at scale. Varatharasan highlights a

significant industry shift where businesses prioritize orchestration and

interoperability, moving toward single, reusable identity layers rather than

fragmented, siloed checks. For IDV to function as true infrastructure, it must

exhibit three defining characteristics: reliability at scale, trust by design,

and—most importantly—interoperability that addresses both technical

compatibility and legal liability transfer. The author notes that while the

UK’s digital identity consultation is a vital milestone, policy frameworks

still struggle to keep pace with the industry's current reality, where the

boundaries between public and private verification systems are already

dissolving. Fragmentation remains a major hurdle, increasing compliance costs

and creating user friction through repetitive verification steps. Ultimately,

the article emphasizes that the focus must shift from simply mandating

verification to governing it as a shared, portable resource, ensuring that

national standards reflect the modern integrated digital economy and future

cross-sector needs, while providing a seamless experience for the end-user.

The rapidly evolving digital assets and payments regulatory landscape: What you need to know

The Dentons alert outlines Australia’s sweeping regulatory overhaul of digital

assets and payments, signaling the end of previous legal ambiguities. Central

to this shift is the Corporations Amendment (Digital Assets Framework) Act

2026, which, starting April 2027, integrates cryptocurrency exchanges and

custodians into the Australian Financial Services Licence (AFSL) regime via

new categories: Digital Asset Platforms and Tokenised Custody Platforms.

Concurrently, a new activity-based payments framework replaces the outdated

"non-cash payment facility" concept with Stored Value Facilities (SVF) and

Payment Instruments. This system captures diverse services like payment

initiation and digital wallets, while excluding self-custodial software. Key

consumer protections include a mandate for licensed providers to hold client

funds in statutory trusts and enhanced disclosure for stablecoin issuers.

Furthermore, "major SVF providers" exceeding AU$200 million in stored value

will face prudential oversight by APRA. While exemptions exist for small-scale

platforms and low-value services, the firm emphasizes that the transition is

complex. With ASIC’s "no-action" position set to expire on June 30, 2026, and

parallel AML/CTF obligations already in effect, businesses must urgently

assess their licensing needs. This landmark reform ensures that digital asset

and payment providers operate under a rigorous, transparent framework

equivalent to traditional financial services.