6 deadly sins of enterprise architecture

The simplest way to build out enterprise software is to leverage the power of

various tools, portals, and platforms constructed by outsiders. Often 90%+ of

the work can be done by signing a purchase order and writing a bit of glue code.

But trusting the key parts of an enterprise to an outside company has plenty of

risks. Maybe some private equity firm buys the outside firm, fires all the good

workers, and then jacks up the price knowing you can’t escape. Suddenly

instantiating all your eggs in one platform starts to fail badly. No one

remembers the simplicity and consistency that came from a single interface from

a single platform. Spreading out and embracing multiple platforms, though, can

be just as painful. The sales team may promise that the tools are designed to

interoperate and speak industry standard protocols, but that gets you only

halfway there. Each may store the data in an SQL database, but some use MySQL,

others use PostgreSQL and others use Oracle. There’s no simple answer. Too many

platforms create a Tower of Babel. Too few bring the risk of vendor lock-in

and all the pain of opening that email with the renewal contract.

Manufacturing firms make early bets on the industrial metaverse

The building blocks of the industrial metaverse are “frequently proprietary,

siloed and standalone,” according to a recent report by Miller and Forrester

colleagues. Digital twins — which might use IoT sensor data and 3D modelling to

provide a real-time picture of a piece of equipment or factory, for example —

are perhaps closest to realization, but are still limited in some senses. “The

reality today is that most digital twins are still asset- and vendor-specific,”

Miller told Computerworld, with the same manufacturer responsible for both

hardware and software. For example, an ABB robot may be sold with an ABB digital

twin, or a Siemens motor will come with a Siemens digital twin — but getting

them to work together can be a challenge. While these types of tools offer clear

benefits for customers, firms that own multiple products from multiple vendors

will eventually want “one digital twin of how the factory or the line is

operating, not 100 digital twins of the different components,” said Miller. Even

the most advanced precursor technologies, such as factory-spanning digital

twins, tend to be the product of a partnership with one vendor.

How businesses can vet their cybersecurity vendors

Companies can’t assume that the vendor is telling the truth, particularly in the

authentication market, where there is currently no standardised testing to

confirm solutions pass metrics such as ‘phishing resistance’. When talking to a

vendor, whilst it may seem simple, the organisation should first ask the vendor:

How does the tool prevent social engineering and AiTM attacks? Whilst some

solutions might say passwordless or ‘phishing-resistant’, they could instead

simply hide the password so that authentication is more convenient, but the

vulnerability remains. The team needs to determine if the solution eliminates

passwords from both the authentication flow and account recovery flow, should

the user lose their typical login device. And the tool must implement “verifier

impersonation protection” to thwart AiTM/proxy-based attacks. Getting the

security team to conduct their research beforehand enables them to come prepared

to ask detailed questions and can help them get past the buzzwords that vendors

use. To go a step further, vetting the vendor allows security teams to learn

more about the tool and uncover the truth.

Hidden dangers loom for subsea cables, the invisible infrastructure of the internet

Subsea cables can fall under a wide range of regulatory regimes, laws and

authorities. At national level, there may be several authorities involved in

their protection, including national telecom authorities, authorities under

the NIS Directive, cybersecurity agencies, national coastguard, military, etc.

There are also international treaties in place to be considered, establishing

universal norms and the legal boundaries of the sea. ... Challenges for subsea

cable resilience: Accidental, unintentional damage through fishing or

anchoring has so far been the cause of most subsea cable

incidents; Natural phenomena such as undersea earthquakes or landslides

can have a significant impact, especially in places where there is a high

concentration of cables; Chokepoints, where many cables are installed

close to each other, are single points of failure, where one physical attack

could strain the cable repair capacity; Physical attacks and cyberattacks

should be considered as threats for the subsea cables, the landing points, and

the ICT at the landing points.

Datacentre operators ‘hesitant’ over how to proceed with server farm builds as AI hype builds

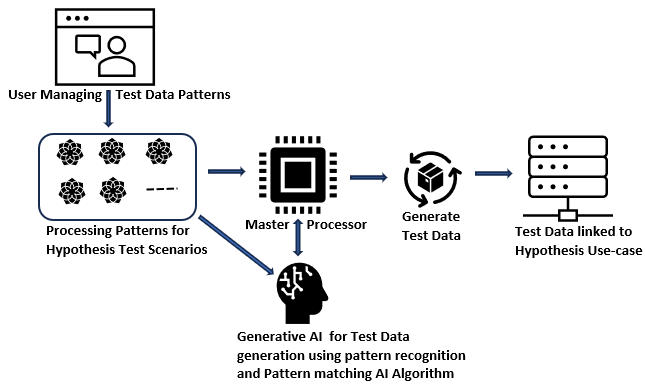

“The developments in generative AI and the increasing use of a wide range of

AI-based applications in datacentres, edge infrastructure and endpoint devices

require the deployment of high-performance graphics processing units and

optimised semiconductor devices,” said Alan Priestley, vice-president analyst

at Gartner. “This is driving the production and deployment of AI chips.” And

while Gartner’s figures suggest the AI trend is going to continue to take the

world of tech by storm, the market watcher’s recently published Hype Cycle for

emerging technologies lists generative AI as being at the “peak of inflated

expectations”, which might go some way to explaining why operators are

reluctant to rush to kit out their sites to accommodate this trend. For

colocation operators that are targeting hyperscale cloud firms, many of which

regularly talk up the potential for generative AI to transform how enterprises

operate, there is perhaps less reticence, said Onnec’s Linqvist.

Developers: Is Your API Designed for Attackers?

The security firm analyzed 40 public breaches to see what role APIs played in

security problems, which Snyder featured in his 2023 Black Hat conference

presentation. The issue might be built-in vulnerabilities, misconfigurations

in the API, or even a logical flaw in the application itself — and that means

it falls on developers to fix it, Snyder said. “It’s a range of things, but it

is generally with their own APIs,” Snyder told The New Stack. “It is in their

domain of influence, and honestly, their domain of control, because it is

ultimately down to them to build a secure API.” The number of breaches

analyzed is small — it was limited to publicly disclosed breaches — but Snyder

said the problem is potentially much more pervasive. ... In the last

couple of months, he said, security researchers who work on this space have

uncovered billions of records that could have been breached through poor API

design. He pointed to the API design flaws in basically every full-service

carrier’s frequent flyer program, which could have exposed entire datasets or

allowed for the awarding of unlimited miles and hotel points.
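
As a rough illustration of the kind of design flaw described above, here is a minimal sketch, assuming a hypothetical loyalty-points service. It is not taken from Snyder's presentation or from any carrier's API. All names (`Account`, `EARNING_RULES`, `award_points`) and point values are assumptions made for the example. The sketch shows two server-side checks: the caller must own the account being changed, and the award amount is looked up on the server rather than accepted from the client.

```python
from dataclasses import dataclass

# Hypothetical server-side catalogue of earning events and their point values.
# The client names an event; it never supplies the amount itself.
EARNING_RULES = {"flight_segment": 500, "hotel_night": 250}


class AuthorizationError(Exception):
    """Raised when the caller may not act on the requested account."""


@dataclass
class Account:
    account_id: str
    owner_id: str
    points: int = 0


def award_points(accounts, caller_id, account_id, event_type):
    """Credit points for a known event type and return the new balance."""
    account = accounts.get(account_id)
    # Object-level authorization: a valid session is not enough, the caller
    # must own the specific account being modified.
    if account is None or account.owner_id != caller_id:
        raise AuthorizationError("caller may not modify this account")
    # The amount comes from the server-side table, so a crafted request
    # cannot ask for an arbitrary number of points.
    if event_type not in EARNING_RULES:
        raise ValueError("unknown earning event")
    account.points += EARNING_RULES[event_type]
    return account.points
```

A real service would also need authentication, rate limiting, and an audit trail; the sketch only covers the ownership check and the server-controlled amount.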

Rethinking Cybersecurity: The Power of the Hacker Mindset

Embracing a hacker mindset involves adopting an external viewpoint of your

business to uncover vulnerabilities before they’re exploited. This includes

embracing practices like ethical hacking and penetration testing. While

forming a specialised ethical hacking team is an option, embedding this

mindset within cyber teams and your wider business is equally effective. Key

to this transformation is upskilling. Businesses should be offering training

to encourage creative thinking when it comes to cybersecurity. Instead of

waiting for breaches to learn from mistakes, being proactive is crucial.

Regular, monthly upskilling for cybersecurity and IT teams, rather than every

six months or even a year, keeps them on the front foot. Encouraging a hacking

mindset also shouldn’t be confined to cyber experts; all employees should

undergo cyber awareness training. In this fight, businesses and individuals

aren’t alone. Numerous training platforms are available, but choosing those

that concentrate on providing practical, hands-on skills rooted in real-world

attack scenarios is essential.

How to get started with prompt engineering

Joseph Reeve led a team of people working on features that require

prompt engineering at Amplitude, a product analytics software provider. He has

also built internal tooling to make it easier to work with LLMs. That makes

him a seasoned professional in this emerging space. As he notes, "the great

thing about LLMs is that there’s basically no hurdle to getting started—as

long as you can type!" If you want to assess someone's prompt engineering

advice, it's easy to test-drive their queries in your LLM of choice. Likewise,

if you're offering prompt engineering services, you can be sure your employers

or clients will be using an LLM to check your results. So the question of how

you can learn about prompt engineering—and market yourself as a prompt

engineer—doesn't have a simple, set answer, at least not yet. "We're

definitely in the 'wild west' period," says AIPRM's King. "Prompt engineering

means a lot of things to different people. To some it's just writing prompts.

To others it's fine-tuning and configuring LLMs and writing prompts."

Australia’s new cybersecurity strategy: Build “cyber shields” around the country

The first shield proposes a long-term education of citizens and businesses so

by 2030 they understand cyberthreats and how to protect themselves. This

"shield" comes with a plan B that plans for citizens and businesses to have

proper supports in place so that when they are the victim of cyber-attack,

they're able to get back up off the mat very quickly. The second shield is for

safer technology. The federal government will have software treated like any

other consumer product that is deemed insecure. "So, in 2030 our vision for

safe technology is a world where we have clear global standards for digital

safety in products that will help us drive the development of security into

those products from their very inception," O'Neil said. ... The fourth

proposed shield will focus on protecting Australians' access to critical

infrastructure, with the Home Affairs and Cybersecurity minister saying that

"part of this year will be about government lifting up its own cyber defences

to make sure we're protecting our country."

Modeling Asset Protection for Zero Trust – Part 2

The goal when modeling the data environment for a Zero Trust initiative is to

have the information available to decide what data should be available when,

where, and by whom. That requires you to know what data you have, its value to

the business, and the risk level if lost. The information is used to inform an

automated rules engine that enforces governance based on the state of the data

request journey. It is not to define or modify a data model. Hopefully, you

already have this information catalogued. From a digital asset perspective,

most companies think of their data as their crown jewels so the data pillar

might be the most important pillar. One challenge with data is that

applications supply data access. Many applications are not written to support

modern authentication mechanisms and don’t handle the protocols needed to

integrate with contemporary data environments so the applications might not

support a Zero Trust data model. Hopefully, you’re already experimenting with

current mechanisms for your microservice environment. But, if not, as with any

elephant, you eat it one bite at a time.
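
The rules-engine idea described above can be sketched roughly as follows. This is a minimal illustration, assuming a hypothetical catalogue that records each data asset's business value and risk level, and a request context that captures who is asking, from what device, and when. The names (`Level`, `DataAsset`, `Request`, `decide`), the thresholds, and the working-hours rule are assumptions for the example, not part of any Zero Trust product or standard.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class DataAsset:
    name: str
    business_value: Level  # value to the business, from the catalogue
    risk_if_lost: Level    # risk level if the data is lost


@dataclass(frozen=True)
class Request:
    user_role: str                # by whom
    strongly_authenticated: bool  # state of the request journey
    managed_device: bool          # where
    hour_utc: int                 # when (0-23)


def decide(asset, request, allowed_roles):
    """Return (allowed, reason); anything not explicitly permitted is denied."""
    if request.user_role not in allowed_roles.get(asset.name, set()):
        return False, "role not permitted for this asset"
    if not request.strongly_authenticated:
        return False, "strong authentication required"
    # Treat the asset as being as sensitive as its higher rating.
    sensitivity = max(asset.business_value, asset.risk_if_lost)
    if sensitivity >= Level.MEDIUM and not request.managed_device:
        return False, "managed device required"
    if sensitivity == Level.HIGH and not 8 <= request.hour_utc < 18:
        return False, "outside permitted hours"
    return True, "allowed"
```

Note that the rules only read the catalogue entries; consistent with the text, they enforce governance on requests and do not define or modify the data model.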

Quote for the day:

"Your time is limited, so don't waste

it living someone else's life." -- Steve Jobs
