Understanding Data Security Posture Management for Protecting Cloud Data

To help organizations protect against data loss, a new approach emerged

in 2022 in the form of data security posture management (DSPM). Today it is

proving to be a critical tool for effective data security because of its laser

focus on the data layer. DSPM allows organizations to identify all their

sensitive data, monitor and identify risks to business-critical data, and

remediate and protect that information. To get a better handle on this new

approach and what it does, let’s consider what DSPM is not. ... DSPM’s ability

to autonomously discover, monitor, and remediate risk creates an effective tool

for an organization’s security posture. Beyond that, your DSPM solution of

choice needs to operate in a manner that doesn’t require deployment of agents

everywhere. Your DSPM should be easy to get up and running and allow you to

quickly realize benefits by mining meaningful amounts of data to deliver

visibility into what's going on within your environment from a risk perspective.

DSPM solutions are proven to deliver accurate results and offer significant ROI

for organizations.

Arctic Wolf CEO on Incident Response, M&A, Cyber Insurance

Many organizations struggle with preparing for a security incident even if they

have an internal security team and have procured cyber insurance, Schneider

says. Businesses often haven't prepared their systems or documented escalation

paths or how their environment is set up, which makes it nearly impossible to

quickly get information over to an incident response provider in the event of an

attack, Schneider says. "The less time that you're spending on compiling

information, the more time you're able to spend on remediating the threat and

the less time you've taken between an incident occurring and the beginning of a

response," Schneider says. Most companies don't know what they need to have

documented or prepared in the event of a security incident and therefore end up

reaching out to their insurance provider or incident responder while an attack

is taking place to see what questions they have, Schneider says. Although the

answers to these questions are relatively static, he says it takes a lot of time

to gather the information needed to respond.

UK government introduces revised data reform bill to Parliament

“Co-designed with business from the start, this new bill ensures that a vitally

important data protection regime is tailored to the UK’s own needs and our

customs,” said science, innovation and technology secretary Michelle Donelan.

“Our system will be easier to understand, easier to comply with, and take

advantage of the many opportunities of post-Brexit Britain. No longer will our

businesses and citizens have to tangle themselves around the barrier-based

European GDPR [General Data Protection Regulation]. Our new laws release

British businesses from unnecessary red tape to unlock new discoveries, drive

forward next-generation technologies, create jobs and boost our economy.” The

government added that the revised bill will also support increased international

trade without creating extra costs for businesses already compliant with

existing data protection rules, as well as boost public confidence in the use of

artificial intelligence (AI) technologies by clarifying the circumstances in

which safeguards apply to automated decision-making.

Municipal CISOs grapple with challenges as cyber threats soar

"The diversity of our business services and the corresponding diversity of

systems is unparalleled in that no organization does what our municipal

government does," Michael Makstman, CISO for the City and County of San

Francisco and co-chair of the Coalition of City CISOs, tells CSO. "We fly

planes, we pave roads, we provide public safety services," Makstman says. "We

operate one of the largest, if not the largest, trauma centers on the West

Coast. We support many legal professionals for some of the largest legal firms

in the country. At the same time, we make sure that vulnerable populations have

access to food and care. We have an outstanding municipal transportation

network. We have buses and subways and our world-famous cable car." ... CISOs of

municipal organizations of all sizes are required to deftly handle the politics

of the governments they serve and the individual service providers themselves,

Hamilton says. CISOs are not always welcomed into agencies that do not directly

employ them.

Decoding Digital Twins: Exploring the 6 main applications and their benefits

Although the roots of digital twins go back to NASA’s Apollo program in 1970,

the concept of creating digital replicas of physical assets and

visualizing/simulating/predicting in a virtual world is extremely suitable for

companies that are trying to make Industry 4.0 a reality or are aiming toward

future industrial metaverse projects. Make no mistake: While the definition of a

digital twin may be straightforward, its applications are numerous. In 2020, we

published our first market research on the topic and showcased that there may,

in fact, be 200 or more different types of digital twins. The feedback we

received from you was that classification helps to ensure apples-to-apples digital

twin comparisons, but questions remain about the hotspots of activity.

Therefore, as part of our new 233-page Digital Twin Market Report 2023-2027, we

classified 100 real digital twin projects along the three dimensions and found

six main areas of activity. These six digital twin application hotspots cover

two thirds of all digital twin projects we analyzed.

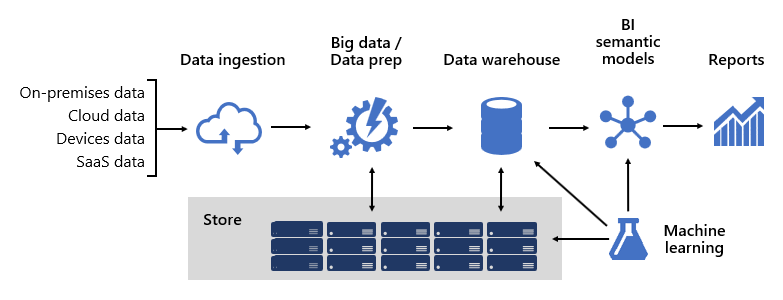

Cloud trends 2023: Cost management surpasses security as top priority

For the first time since Flexera began its annual survey of cloud

decision-makers, security was not the top challenge reported by respondents. As

revealed in the Flexera 2023 State of the Cloud Report, released on March 8,

2023, 82% of respondents from across all organizations indicated that their top

cloud challenge is managing cloud spend, edging out security at 79%. These

shifting challenges may be the result of organizations becoming increasingly

comfortable with cloud security, while needing to manage the greater spend

associated with their increased reliance on cloud services. Lack of resources or

expertise was reported as a top cloud challenge by 78% of respondents, making it

the third major cloud challenge for today’s businesses. ... Cloud cost

management responsibilities are often spread across teams within an

organization. Year over year, vendor management and finance or accounting

teams have less responsibility for cloud expenses. Instead, initiatives are

shifting to finops teams. Finops, the practice of cloud cost management, is a

growing priority.

Why IT communications fail to communicate

If you prefer to communicate via documentation — and encourage everyone in your

organization to follow suit — four facets of communication are getting in your

way. Language: Every natural language, be it English, Latin, or even Esperanto,

is imprecise at best. Synonyms are approximate, not exact; words are defined by

other words, leading us down the path of infinite recursion; different people

bring different vocabularies and assumptions to their attempts to interpret what

they’re reading. ... Disambiguation: No matter how even the best writers might

try, they’ll never create a document that’s completely free of ambiguity and

entangled logic. In making the attempt, many find themselves trudging along the

literary path of a different profession for which ambiguity and the likelihood

of misinterpretation are equally problematic ... Disagreements: No matter how

well a business analyst (going back to our app dev example) describes their

design, the stakeholders they’ve worked with to create it aren’t always going to

agree on all points. Stakeholder disagreements unavoidably turn into design

compromises and, worse, inconsistent specifications.

Cloud Native Testing Trends for 2023

Testing in a cloud native environment can be challenging, as it involves testing

across multiple platforms and services, using a diverse set of tools that can

vary greatly across teams and workflows. The distributed nature of cloud native

applications means that testing must be performed on a larger scale, with more

components to be tested. DevOps teams must also consider the impact of the

underlying infrastructure on testing, as changes to the infrastructure can

affect the behavior of the application. To overcome these challenges,

organizations are adopting a cloud native testing strategy that incorporates

automation and integrates testing into the development process. ... DevOps

engineers are increasingly taking ownership of testing, and tools like Testkube

can help them easily integrate testing into their workflows. By taking a

collaborative approach to testing, DevOps engineers can ensure that testing is

done throughout the development life cycle, reducing the risk of bugs slipping

through to production.

Stress-Test Your Software to Prevent a Southwest-Type Calamity

Stress tests typically subject a software system to very large workloads in the

form of a high volume of requests or a high rate of failure in individual

components. “The idea is to simulate a worst-case scenario with potentially

unpredictable side effects,” Padhye says. Testing reveals how a system will

react to slowdowns, memory leaks, security issues, and data corruption. “Across

performance-based testing, stress tests must be paired with load tests,” Feloney

advises. “For example, spike tests examine how a system will fare under sudden,

high ramp-up traffic, and soak tests examine the system’s sustainability over a

long period.” Stress tests can either be performed in an isolated environment

designed for quality purposes, or directly on the live customer-facing

deployment. “While it sounds scary, testing a live deployment is far more

representative of a real extreme scenario, because it also incorporates the

human factor presented by users responding to the simulated events in a

hard-to-predict way,” Padhye explains.
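
To make the difference between the spike and soak tests mentioned above concrete, here is a minimal Python sketch of the two load shapes. It only builds the request-rate schedule that a load generator could follow; it does not send any traffic. The function names, parameters, and numbers are illustrative assumptions and do not come from the article or from any particular testing tool.

```python
from typing import List


def spike_profile(baseline_rps: int, peak_rps: int, total_steps: int,
                  spike_start: int, spike_length: int) -> List[int]:
    """Requests per second for each step: baseline load with one sudden burst."""
    profile = []
    for step in range(total_steps):
        # The burst covers steps spike_start .. spike_start + spike_length - 1.
        in_spike = spike_start <= step < spike_start + spike_length
        profile.append(peak_rps if in_spike else baseline_rps)
    return profile


def soak_profile(steady_rps: int, total_steps: int) -> List[int]:
    """Requests per second for each step: constant load held for a long period."""
    return [steady_rps] * total_steps


if __name__ == "__main__":
    # Hypothetical numbers, chosen only to show the two shapes side by side.
    spike = spike_profile(baseline_rps=50, peak_rps=1000, total_steps=10,
                          spike_start=4, spike_length=2)
    soak = soak_profile(steady_rps=200, total_steps=10)
    print("spike:", spike)
    print("soak: ", soak)
```

In practice, a schedule like this would be handed to whatever load-generation tool the team already uses. The spike profile probes how the system copes with an abrupt jump in traffic, while the soak profile looks for slow degradation, such as memory leaks, under sustained load.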

Innovating in an economic downturn: 4 tips

During a downturn, you may lose the ability to hire full-time employees but

still have things to do and room in your budget. Finance might be more open to a

capital expense than an operational expense during these times. This is a

perfect opportunity to bring in outside help to take care of your distractions

so your team can spend time and energy on innovation. Distractions take a lot of

time and effort but aren’t core to what an organization does. For example,

organizations today spend a lot of time supporting their applications and

systems. As a result, many choose to hire outside firms to handle these

activities so that their internal teams can focus on innovation and projects

that grow their top line. ... Sometimes you simply don’t have internal resources

with an invention mindset or experience innovating. Consultants can help fill

the gap, facilitating discussions that drive innovation and partnering with your

teams to show them how to work through the innovation process. External experts

provide a critical outside perspective and facilitate conversations that drive

meaningful innovation.

Quote for the day:

"Leadership without mutual trust is a

contradiction in terms." -- Warren Bennis