7 ways to ruin your IT leadership reputation

Be mindful of the decisions you make. “One careless choice can ruin your

reputation and your career,” warns Jim Durham, CIO of Solar Panels Network USA,

a national solar panel installation company. “By being aware of the risks and

taking responsibility for your actions, you can minimize the damage and learn

from your mistakes,” he advises. A careless decision can be anything from

selecting the wrong technology to mishandling sensitive data. “Not only are

these actions career-destructive, but they can also have lasting negative

effects on your enterprise,” Durham notes. CIOs are always pressured by

management to make the right decision. It’s important to remember, however, that

even the best strategies and intentions can sometimes lead to disastrous

results. “If you’re unsure about a decision, it’s always better to err on the

side of caution and consult with your team before making a final call,” Durham

suggests. Failure, particularly a major failure, is never an option. “It

shows that you’re not capable of handling important tasks,” Durham

states.

Cyber Skills Shortage is Caused by Analyst Burnout

Data shows skilled and experienced professionals are leaving the industry due to

burnout and disillusionment. In the UK, the cybersecurity workforce reportedly

shrank by 65,000 last year, and according to a recent study, one in three

current cybersecurity professionals are planning to change professions.

According to ISACA’s State of Cybersecurity 2022 report, the top reasons for

cybersecurity professionals leaving include being recruited by other companies

(59%), poor financial incentives (48%), limited promotion and development

opportunities (47%), high levels of work-related stress (45%) and lack of

management support (34%). When discussing the skills shortage, many, by default,

think of businesses struggling to recruit for their internal cybersecurity

vacancies. However, this is equally challenging for specialist providers of

consulting and managed cybersecurity services. Businesses increasingly rely on

third-party managed services, particularly mid-size organizations, where

outsourcing to a Managed Security Service Provider (MSSP) represents a much more

commercially viable solution with considerably less up-front investment.

Data privacy is expensive — here’s how to manage costs

“The true cost of data privacy, broadly, is their trust with their customers,”

said Akbar Mohammed, lead data scientist, Fractal AI. “In this era of customers

increasingly becoming tech-savvy, as soon as they realize that their data isn’t

secure, the company will risk loss of trust from consumers. This eventually

results in a lot of business disruption.” Almost all companies that need to

collect data for their operations should have a data privacy infrastructure in

place. Companies should also set up dedicated security and compliance teams

surveying data and technology assets along with maintaining an aggressive threat

detection policy. It’s imperative for companies today to have a data strategy

and have policy and procedures governed by a data governance entity. “For large

organizations, it’s best to have regular audits or assessments and get

privacy-related certifications,” Mohammed said. “Lastly, train your people and

make the entire organization aware of your activities, your policies.”

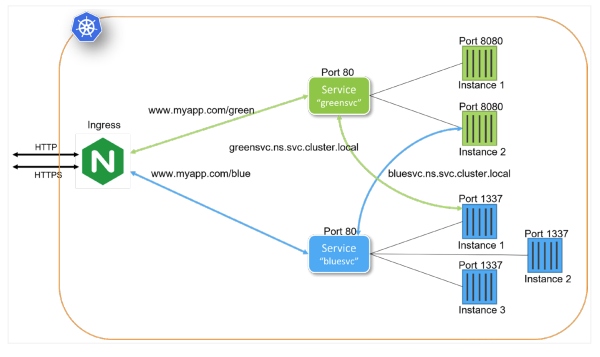

Architectural Patterns for Microservices With Kubernetes

Kubernetes provides many constructs and abstractions to support service and

application deployment. While applications differ, there are foundational

concepts that help drive a well-defined microservices deployment strategy.

Well-designed microservices deployment patterns play into an often-overlooked

Kubernetes strength. Kubernetes is independent of runtime environments. Runtime

environments include Kubernetes clusters running on cloud providers, in-house,

bare metal, virtual machines, and developer workstations. When Kubernetes

Deployments are designed properly, deploying to each of these and other

environments is accomplished with the same exact configuration. In grasping the

platform independence offered by Kubernetes, developing and testing the

deployment of microservices can begin with the development team and evolve

through to production. Each iteration contributes to the overall deployment

pattern. A production deployment definition is no different than a developer's

workstation configuration.
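
As a rough illustration of the "same exact configuration" idea, the Python sketch below builds one Deployment manifest and pairs it with a `kubectl apply` command for several cluster contexts. The service name, image, file name, and context names are hypothetical and not taken from the article; the sketch assumes only the standard `apps/v1` Deployment fields and the `kubectl --context` and `apply -f` options, and it builds the commands without running them.

```python
import json


def build_deployment(name, image, replicas=2):
    """Return a Kubernetes apps/v1 Deployment manifest as a plain dict."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


def apply_command(context, manifest_path="deployment.json"):
    """Build (but do not run) the kubectl command for one cluster context."""
    return ["kubectl", "--context", context, "apply", "-f", manifest_path]


# Hypothetical service and image, used only for illustration.
manifest = build_deployment("orders", "registry.example.com/orders:1.4.2")
manifest_json = json.dumps(manifest, indent=2)  # kubectl accepts JSON as well as YAML

# Hypothetical context names: only the target changes, never the manifest.
contexts = ["dev-workstation", "staging", "production"]
commands = [apply_command(ctx) for ctx in contexts]
for cmd in commands:
    print(" ".join(cmd))
```

The design choice here is that nothing environment-specific lives in the manifest itself, so the only thing that varies between a workstation and production is the context the same file is applied to.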

High data quality key to reducing supply chain disruption

With so many obstacles to overcome, the supply chain needs a saviour – and many

experts are pointing to big data to fill the role. Prince believes data will

become more important in this new era. He says that after Brexit, “there is

uniquely new importance placed on master data, given the customs and other

regulatory impacts of moving goods between the two markets”. Also, the greater

risks posed in global trade and the need to be resilient mean that the

predictive capabilities of data could be crucial. ... The potential of big data

is clear – but to get the best results, the data involved needs to be accurate.

“Data quality takes on many forms, including accuracy, completeness, timeliness,

precision, and granularity,” says Laney. He points out that most organisations

don’t have n-tier visibility in their supply chain, which means they don’t

understand what is happening beyond the first tier of suppliers in the chain.

They may also have incomplete data on where items are in the supply chain or

when disruptions will happen.
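
Two of the data quality dimensions Laney lists, completeness and timeliness, can be measured quite simply. The following is a minimal Python sketch, assuming shipment records stored as dictionaries; the field names, sample records, and the one-day freshness window are invented for illustration.

```python
from datetime import datetime, timedelta


def completeness(records, required_fields):
    """Fraction of records in which every required field is present and non-empty."""
    if not records:
        return 0.0
    complete = sum(
        all(r.get(f) not in (None, "") for f in required_fields) for r in records
    )
    return complete / len(records)


def timeliness(records, now, max_age):
    """Fraction of records whose 'updated_at' falls within max_age of now."""
    if not records:
        return 0.0
    fresh = sum(
        1
        for r in records
        if r.get("updated_at") is not None and now - r["updated_at"] <= max_age
    )
    return fresh / len(records)


# Hypothetical shipment records; two of them lack a known location.
now = datetime(2022, 10, 31, 12, 0)
shipments = [
    {"sku": "A-100", "location": "Rotterdam", "updated_at": now - timedelta(hours=2)},
    {"sku": "B-200", "location": None, "updated_at": now - timedelta(days=3)},
    {"sku": "C-300", "location": "Felixstowe", "updated_at": now - timedelta(hours=20)},
    {"sku": "D-400", "location": "", "updated_at": now - timedelta(hours=1)},
]

print(completeness(shipments, ["sku", "location"]))   # 0.5
print(timeliness(shipments, now, timedelta(days=1)))  # 0.75
```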

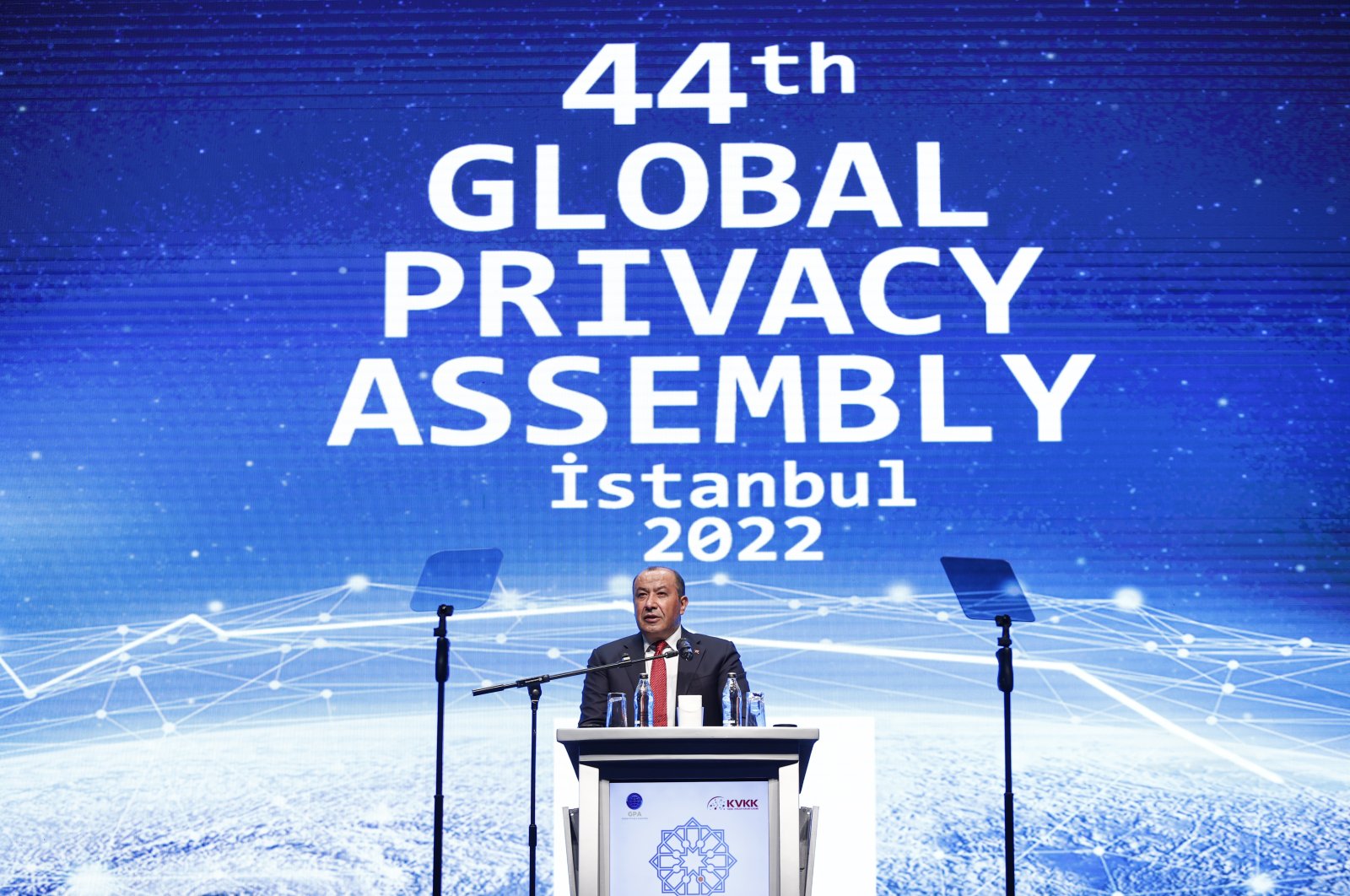

Privacy assembly in Istanbul calls for adaptation to new necessities

Explaining that new challenges and needs emerged with the development of

artificial intelligence (AI) and the metaverse, Koç underlined that protecting

personal data should be a requirement. "Unfortunately, we pay the price for the

comfort and efficiency provided by technology in the age of data, with privacy,"

he said. KVKK Chair Faruk Bilir, for his part, said that since the institution's

membership in the assembly, Türkiye has given significant importance to

international efforts in the field. KVKK leads initiatives for the protection

and awareness of personal data and privacy in line with the laws and regulations

adopted since 2016, he added. "The protection of individuals' privacy emerges as

an unchanging fact of the changing world," Bilir said. The protection of privacy

is an indicator of civilization, Bilir said, underlining the importance of a

human-oriented approach. Law and ethics are complementary elements to the

human-oriented approach, he added. "Technology is indispensable for us, our

privacy is our priority," Bilir said.

CISA Releases Performance Goals for Critical Infrastructure

Among the newly recommended measures are implementation of multifactor

authentication, making sure to revoke the login credentials of former employees,

disabling Microsoft Office macros and prohibiting the connection of unauthorized

devices, perhaps by disabling AutoRun. The document also recommends that the

operational technology side have a single leader responsible for cybersecurity

and that OT and IT staff work to improve their relationship. Organizations

should "sponsor at least one 'pizza party' or equivalent social gathering per

year" to be attended by the two cybersecurity teams. DHS says it will actively

solicit feedback about the goals in the coming months and has set up a GitHub

discussions page. The department's next plan is to roll out cybersecurity goals

tailored to each sector of critical infrastructure in conjunction with the

agencies closest to each sector, such as the Environmental Protection Agency for

water systems.
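
One of the measures mentioned above, revoking the login credentials of former employees, amounts to comparing active accounts against the current staff list. The Python sketch below shows that comparison under simple assumptions: the account names and the employee roster are hypothetical, and a real check would read both from a directory service and an HR system.

```python
def accounts_to_revoke(active_accounts, current_employees):
    """Return active account names that do not belong to a current employee."""
    current = {name.lower() for name in current_employees}
    return sorted(a for a in active_accounts if a.lower() not in current)


# Hypothetical data for illustration only.
active_accounts = ["asmith", "bwong", "cdiaz"]
current_employees = ["ASmith", "cdiaz"]

print(accounts_to_revoke(active_accounts, current_employees))  # ['bwong']
```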

Will Twitter Sink or Swim Under Elon Musk's Direction?

Musk's accompanying "let that sink in" tweet could be, in terms of bang for the

buck, the most groan-inducing dad joke of all time. But it shouldn't hide the

very real business and security challenges facing Musk, who's already CEO of

Tesla and SpaceX, as he takes the helm of a social network sporting 230 million

customers. "The bird is freed," Musk tweeted late Thursday, before the $44

billion deal closed Friday, and Twitter filed for delisting from the New York

Stock Exchange as it goes private. Like so much with Musk, commentators have

been attempting to deduce his intentions on numerous fronts. Musk styles

himself as a showman, having once tweeted - apparently about nothing in

particular - that "the most entertaining outcome is the most likely." ... What

state Twitter might be in once Musk is done with it remains unclear. Then again,

when you're the richest person in the world, what's a few billion here or there,

especially if it keeps people talking about you and guessing at your next

move?

How to turbocharge collaboration in innovation ecosystems

Whether you call it socialization or use any other term, the human dimension of

innovation is often overlooked or obscured. In part, this is because technology

and the covid-19–induced migration to online platforms have garnered a great

deal of attention. It’s important to remind managers that innovating as a

special form of problem-solving is best tackled by empowering the workforce.

Collaboration can be jump-started from many directions, but it can be only as

vibrant as the company’s underlying culture of curiosity, learning, and

continuous adaptation. In the Veezoo–AXA story, the formal process failed to

reach a breakthrough. It was the involvement of specific individuals who were

keen to see the collaboration through—often on their own terms—that led to

success in building an innovation ecosystem. In fact, it is often through the

behaviors and work of certain people that effective structure and discipline

emerge across an ecosystem.

Data Quality as the Centerpiece of Data Mesh

After all, data quality is always context-dependent and the domain teams will

best know the business context of the data. From a data quality perspective,

data mesh makes good sense as it allows data quality to be defined in a

context-specific way. For example, the same data point can be considered “good”

for one team but “bad” for another, depending on the context. As an example,

let’s take a subscription price column with a fair amount of anomalies in it.

Team A is working on cost optimization while Team B is working on churn

prediction. As such, price anomalies will be a more important data quality

issue for Team B than for Team A. To make it easier to facilitate ownership

between data products (which can be database tables, views, streams, CSV files,

visualization dashboards, etc.), the data mesh framework suggests each product

should have a Service Level Objective. This will act as a data contract, to

establish and enforce the quality of the data it provides: timeliness, error

rates, data types, etc.
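
To make the data contract idea concrete, here is a minimal Python sketch of a contract covering the three properties named above: timeliness, error rate, and data types. The `DataContract` class, the `check_contract` function, the thresholds, and the sample rows are all hypothetical; the data mesh framework does not prescribe this shape. The two contracts mirror the Team A and Team B example, with each team tolerating a different share of price anomalies.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class DataContract:
    max_staleness: timedelta  # timeliness: how old the data may be
    max_error_rate: float     # share of rows allowed to fail type checks
    column_types: dict        # expected Python type per column


def check_contract(contract, rows, last_updated, now):
    """Return a list of contract violations (empty if the data complies)."""
    violations = []
    if now - last_updated > contract.max_staleness:
        violations.append("timeliness: data is older than allowed")
    bad = sum(
        1
        for row in rows
        if not all(isinstance(row.get(c), t) for c, t in contract.column_types.items())
    )
    error_rate = bad / len(rows) if rows else 0.0
    if error_rate > contract.max_error_rate:
        violations.append(
            f"error rate: {error_rate:.0%} exceeds {contract.max_error_rate:.0%}"
        )
    return violations


# Hypothetical subscription data: one of four rows has a missing price.
now = datetime(2022, 10, 31, 12, 0)
last_updated = now - timedelta(hours=3)
rows = [{"price": 9.99}, {"price": 14.99}, {"price": None}, {"price": 19.99}]

# Same data, two contexts: Team A tolerates anomalies, Team B does not.
team_a = DataContract(timedelta(days=1), 0.30, {"price": float})
team_b = DataContract(timedelta(days=1), 0.01, {"price": float})

print(check_contract(team_a, rows, last_updated, now))  # []
print(check_contract(team_b, rows, last_updated, now))  # ['error rate: 25% exceeds 1%']
```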

Quote for the day:

"Humility is a great quality of leadership which derives respect and not just

fear or hatred." -- Yousef Munayyer