An interesting development is the return of apps from the cloud to on-premises infrastructure. Many companies that moved to the cloud to reduce costs got nasty sticker shock. The survey found that organizations that use public cloud spend 26 percent of their annual IT budget on it, that just 6 percent of them came in under budget, and that 35 percent overspent on public cloud resources. Why? It's because of the cost of reserved instances. Many apps start in the cloud in a virtualized instance like Amazon EC2, but once developed and running regularly, they need a more permanent home, especially if the app scales heavily. So the customer moves to reserved instances, where more resources can be brought to bear and the instance is permanent, not temporary. And while cloud service providers offer discounts up front, the costs can still add up fast and become unexpectedly expensive.

As humankind takes giant leaps in technology, AI lets machines and gadgets imitate human actions, perceive their surroundings, and adjust to a diverse set of circumstances. Many global companies are using smart technologies like AI to directly improve the consumer experience across education, daily life, and commerce. At a time when the Indian government is pushing both the digital payments and the financial inclusion agenda, AI can and must offer tangible benefits like tighter security and risk management in a world that is increasingly complex and fast-moving. The Indian financial sector has been quick to realise the potential of AI in operations. Over the last few months, Indian PSU heavyweights like SBI and Bank of Baroda have invested heavily in AI platforms to improve efficiency and offer enhanced services. SBI uses a chat assistant called SBI Intelligent Assistant (SIA), which resolves the queries of NRI customers much as a bank representative would, without the need to wait in a queue for customer service.

Per the announcement, the new program, based on MonetaGo’s financial services network technology, will be integrated through standardized SWIFT financial messages. The banks will purportedly deploy a shared distributed ledger network that complies with industry-level governance, security and data privacy requirements in order to improve the efficiency and security of their financial products and procedures. According to Kiran Shetty, CEO of SWIFT India, the company will digitize trade processes, while MonetaGo will provide “fraud mitigation solutions to avoid double-financing and check authenticity of e-way Bill.” An E-way Bill is an electronically generated bill for the movement of goods worth more than 50,000 rupees ($700). "Given India's focus on a digital infrastructure which is supported by both policy and technological innovation, it makes sense that large institutional players are interested in these products and initiatives," said Jesse Chenard, CEO of MonetaGo.

Hyperscale cloud reliability and the art of organic collaboration

Connecting network policies to logical formulas that capture their intent has been a recurring topic in networking research, but prior tools were ad hoc, written for a specialized format and hardly extensible. Microsoft Research’s satisfiability modulo theories solver Z3 is a state-of-the-art theorem prover that is specifically tailored to capture domains that are found commonly in software and hardware descriptions. It is used prominently in software verification, testing, and analysis. By 2012, network verification was an area of nascent interest, and the Azure network was going through early stages of build-out. It quickly became evident that ACLs could be expressed directly as logical formulas and that the machinery that reasons about such formulas was well-suited and sufficiently efficient for checking properties of ACLs. Jayaraman and Bjørner developed the SecGuru tool, replacing manual what-if policy reviews with automated analysis for ACL updates.
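
To make the idea of an ACL as a logical formula concrete, here is a minimal sketch in Python. It is not SecGuru and does not use Z3: the `Rule` type, the first-match semantics, the toy address and port ranges, and the contract are all assumptions made for illustration, and the exhaustive search over a tiny packet space stands in for the solver query an SMT-based tool would issue.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Rule:
    """One hypothetical ACL line: allow or deny a source range and a port range."""
    allow: bool
    src_lo: int
    src_hi: int
    port_lo: int
    port_hi: int

    def matches(self, src, port):
        return self.src_lo <= src <= self.src_hi and self.port_lo <= port <= self.port_hi


def acl_allows(rules, src, port):
    """The ACL as a predicate over packets: first matching rule wins, default deny."""
    for rule in rules:
        if rule.matches(src, port):
            return rule.allow
    return False


def find_counterexample(rules, contract, srcs, ports):
    """Return a packet on which the ACL and the contract disagree, or None.

    An SMT solver would answer the same question symbolically by asking
    whether "ACL(p) != contract(p)" is satisfiable; here we simply enumerate.
    """
    for src, port in product(srcs, ports):
        if acl_allows(rules, src, port) != contract(src, port):
            return (src, port)
    return None


# Toy packet space (assumed): 16 source addresses, 8 ports.
SRCS, PORTS = range(16), range(8)


def contract(src, port):
    """Intent: port 3 is reachable only from sources 0..3; all else is open."""
    return port != 3 or src <= 3


GOOD_ACL = [
    Rule(True, 0, 3, 3, 3),    # internal sources may reach port 3
    Rule(False, 0, 15, 3, 3),  # everyone else is denied on port 3
    Rule(True, 0, 15, 0, 7),   # all other traffic is allowed
]

# Same rules, but the deny line was moved below the catch-all allow.
BAD_ACL = [GOOD_ACL[0], GOOD_ACL[2], GOOD_ACL[1]]

print(find_counterexample(GOOD_ACL, contract, SRCS, PORTS))
print(find_counterexample(BAD_ACL, contract, SRCS, PORTS))
```

Enumeration only works because the toy packet space is tiny. Real address and port spaces are far too large for it, which is presumably why a solver that reasons about the formulas symbolically is the better fit.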

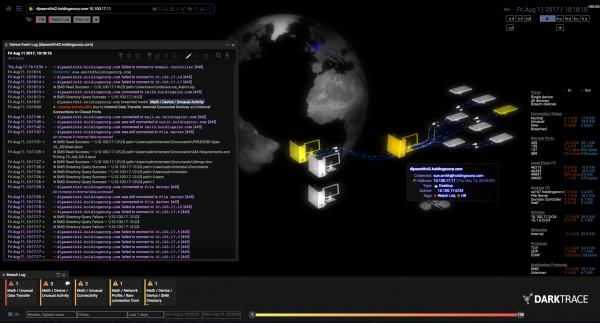

9 cyber security predictions for 2019

In 2019, we’ll see how the EU will react to those complaints. That will provide some much-needed clarity regarding the risk that GDPR and other privacy regulations present. If the EU doesn’t react, then that’s telling, too. It sends the message not to take the regulation seriously. Rising concern over how companies use and protect personal information will encourage many Americans to hold those companies more accountable. “The reaction by consumers to constant security breaches and other unethical information disclosures (e.g., Facebook) leads U.S. consumers to demand more default privacy and control over their own information,” says CSO contributor Roger Grimes. Grimes expects to see an effort in 2019 to enact national privacy laws similar to GDPR. The California Consumer Privacy Act has already passed into law and goes into effect in 2020. On November 1, Sen. Ron Wyden introduced a bill titled the Consumer Data Protection Act (CDPA), which has stiff penalties, including jail time, for privacy violations.

Machine learning, meet quantum computing

The big advantage of quantum computing is that it allows an exponential increase in the number of dimensions it can process. While a classical perceptron can process an input of N dimensions, a quantum perceptron with N qubits can process 2^N dimensions. Tacchino and co demonstrate this on IBM’s Q-5 processor. Because of the small number of qubits, the processor can handle N = 2, which gives 2^2 = 4 dimensions. This is equivalent to a 2x2 black-and-white image. The researchers then ask: does this image contain horizontal or vertical lines, or a checkerboard pattern? It turns out that the quantum perceptron can easily classify the patterns in these simple images. “We show that this quantum model of a perceptron can be used as an elementary nonlinear classifier of simple patterns,” say Tacchino and co. They go on to show how it could be used on more complex patterns, albeit in a way that is limited by the number of qubits the quantum processor can handle.
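
The 2x2 example can be mimicked classically to see what such a classifier computes. The sketch below is a plain Python simulation, not the authors' circuit. It assumes pixels are encoded as +1 (white) or -1 (black), that the perceptron's activation is the squared, normalized overlap between the input and a weight pattern, and that a threshold of 0.5 decides a match. The function names and templates are hypothetical.

```python
N_QUBITS = 2
DIMENSIONS = 2 ** N_QUBITS  # 4 values, i.e. one 2x2 image


def activation(image, weights):
    """Squared normalized overlap between two +1/-1 vectors (assumed model)."""
    overlap = sum(i * w for i, w in zip(image, weights))
    return (overlap / len(image)) ** 2


# 2x2 images flattened row by row: (top-left, top-right, bottom-left, bottom-right).
TEMPLATES = {
    "horizontal": (1, 1, -1, -1),
    "vertical": (1, -1, 1, -1),
    "checkerboard": (1, -1, -1, 1),
}


def classify(image, threshold=0.5):
    """Return the first template whose activation exceeds the threshold."""
    assert len(image) == DIMENSIONS
    for name, weights in TEMPLATES.items():
        if activation(image, weights) > threshold:
            return name
    return "other"


print(classify((1, 1, -1, -1)))   # horizontal lines
print(classify((-1, 1, -1, 1)))   # vertical lines, colours inverted
print(classify((1, 1, 1, -1)))    # a single dark pixel
```

Because the overlap is squared, a pattern and its colour-inverted negative produce the same activation in this sketch, so the classifier responds to the shape of the pattern rather than to which pixels are dark.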

Dell XPS 13: The best Linux laptop of 2018

What makes it a "Developer Edition" besides the top-of-the-line hardware is its software configuration. Canonical, Ubuntu's parent company, and Dell worked together to certify Ubuntu 18.04 LTS on the XPS 13 9370. This worked flawlessly on my review system. Now, Ubuntu runs without a hitch on almost any PC, but the XPS 13 was the first one I'd seen that comes with the option to automatically install the Canonical Livepatch Service. This Ubuntu Advantage Support package automatically installs critical kernel patches in such a way that you won't need to reboot your system. With new Spectre and Meltdown bugs still appearing, you can count on more critical updates coming down the road. The XPS 13's hardware is, in a word, impressive. My best-of-breed laptop came with an 8th-generation Intel Core i7-8550U processor. This quad-core, eight-thread CPU boosts up to 4GHz. The system comes with 16GB of RAM.

How open source is fuelling an explosion in fintech innovation

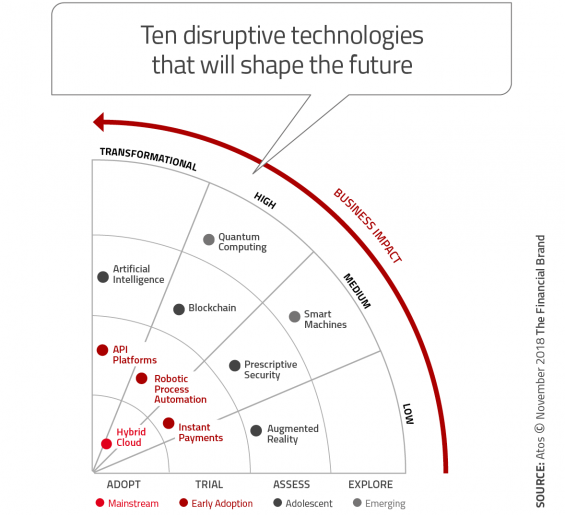

The open technologies that fintech services are built upon are new and speak to the new age of financial services in the palm of consumers’ hands. “Fintech firms are establishing themselves not only as significant players in the industry, but also as the benchmark for financial services,” states Ernst & Young in its Fintech Adoption Index. “Their new propositions are increasingly attractive to consumers who are underserved by existing financial services providers, and their use will only rise as fintech awareness grows, consumer concerns fall, and technological advancements, such as open APIs, reduce switching costs.” The blockchain is a central technology that many fintech services have built themselves upon, especially across the P2P payments space. AI, Big Data and the cloud are all vital components of the services fintech companies are innovating with. Together with open APIs and intuitive UIs, these technologies form a new toolbox that startups, in particular, are exploiting.

Inside the chief data privacy officer role with Barbara Lawler

While GDPR sucked all the oxygen out of the room and continues to drive new or revised privacy rules around the globe, it is important to keep in mind that no single country or region owns the rules. Each country interprets privacy according to its cultural norms and legal frameworks. Other international efforts such as APEC’s Cross Border Privacy Rules, the EU-US Privacy Shield, along with legal data protection regulations and frameworks in 126 countries and across 50 U.S. states prove that responsibly handling people’s data is serious and critical for business success. New on the horizon is the California Consumer Privacy Act (CCPA) of 2018, inspired by GDPR but carrying its own unique set of requirements. It’s highly likely that other U.S. states will replicate some of it or all of it. This is currently driving renewed dialog and debate in the U.S.

“Microsoft systematically collects data on a large scale about the individual use of Word, Excel, PowerPoint and Outlook. Covertly, without informing people, Microsoft does not offer any choice with regard to the amount of data, or possibility to switch off the collection, or ability to see what data are collected, because the data stream is encoded,” Privacy Company wrote in a blog post covering its findings. While Microsoft is considered a data processor, the report warned that the way it collects data from users for diagnostics means it should be classified as a joint controller as defined in article 26 of the GDPR. The DPIA report recommended that IT administrators for Dutch government users configure the “zero exhaust” setting in Microsoft Office to prevent sensitive data from being leaked, centrally prohibit the use of Microsoft Connected Services for spell checking and language translation, and disable access to SharePoint Online, OneDrive Online and the web version of Office 365 Live.

Quote for the day:

"Good leaders make people feel that they're at the very heart of things, not at the periphery." -- Warren G. Bennis