AI hallucination mitigation: two brains are better than one

LLMs have been characterized as stochastic parrots — as they get larger, they

become more random in their conjectural answers. These “next-word

prediction engines” continue parroting what they’ve been taught, but without a

logic framework. One method of reducing hallucinations and other genAI-related

errors is Retrieval Augmented Generation or “RAG” — a method of creating a more

customized genAI model that enables more accurate and specific responses to

queries. But RAG doesn’t clean up the genAI mess because there are still no

logical rules for its reasoning. In other words, genAI’s natural language

processing has no transparent rules of inference for reliable conclusions

(outputs). What’s needed, some argue, is a “formal language” or a sequence of

statements — rules or guardrails — to ensure reliable conclusions at each step

of the way toward the final answer genAI provides. Natural language processing,

absent a formal system for precise semantics, produces meanings that are

subjective and lack a solid foundation. But with monitoring and evaluation,

genAI can produce vastly more accurate responses.
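
To make the RAG idea above concrete, here is a minimal sketch of the retrieval step, assuming a tiny in-memory knowledge base and simple word-count similarity. The `DOCUMENTS` list and the `retrieve` and `build_prompt` helpers are hypothetical names invented for illustration; a production system would more likely use learned embeddings and a vector store, and nothing here adds the formal rules of inference the excerpt says are still missing.

```python
import math
import re
from collections import Counter

# Hypothetical mini knowledge base; a real deployment would index far more text.
DOCUMENTS = [
    "Egress fees are charges for moving data out of a cloud provider.",
    "Passkeys replace passwords with device-bound cryptographic credentials.",
    "A data lakehouse prepares data directly on cloud storage.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, documents, k=1):
    """Prepend retrieved context so the model answers from supplied facts."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    print(build_prompt("What are egress fees?", DOCUMENTS))
```

The prompt that comes out would then be sent to whichever model is in use. Retrieval narrows what the model can draw on, but the generation step itself remains unconstrained, which is the gap the excerpt points to.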

The Courtroom Factor in GenAI’s Future

There are a lot of moving parts. You kind of hit that on the head. Certainly,

every day there’s something new, some development, but let me focus on my area

of expertise, which is litigation and where I see some of the domestic

generative AI litigation perhaps trending or where I think we’re going to see an

increase in litigation going forward. I think that’s going to be twofold. I

think you’re going to continue to see the intellectual property issues attendant

to generative AI litigated. I think that’s one area that’s inevitable. I think

the other area that we’re really going to start to see, and we already are

seeing an uptick in litigation, is in the use and deployment of generative AI by

companies. Let me frame it this way. As companies attempt to take advantage of

the promise of generative AI, they’re going to, they already have, and they will

continue to deploy generative AI tools, and generative AI systems, more advanced

systems in terms of machine learning, and generative aspects of AI in their

businesses. I think we’ll see a steady increase in use -- and some folks would

say misuse -- of AI. It’s trickling out where plaintiffs allege that the

business or the entity has done something wrong using AI.

Next-Gen DevOps: Integrate AI for Enhanced Workflow Automation

In DevOps, the ability to anticipate and prevent outages can mean the difference

between success and catastrophic failure. In such situations, AI-powered

predictive analytics can empower teams to stay one step ahead of potential

disruptions. Predictive analytics uses advanced algorithms and machine learning

models to analyze vast amounts of data from various sources, such as application

logs, system metrics, and historical incident reports. It then identifies

patterns and correlations and detects anomalies within this data to provide early

warnings of impending system failures or performance degradation. This enables

teams to take proactive measures before issues escalate into full-blown outages.

... Doing things by hand introduces the possibility of human error and is way

too time-intensive — so it comes as no surprise that the industry is turning

toward automation. Tools that utilize artificial intelligence can identify

potential issues by analyzing code repositories at speeds that cannot be

replicated by humans. On the ground level, this means that various potential

issues — bottlenecks in terms of performance, code that doesn’t meet best

practices or internal standards, security liabilities and code smells — can be

identified quickly and at scale.
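
The early-warning idea described in this excerpt can be sketched with a simple statistical check. The example below is a minimal illustration, not how any particular product works: it flags a metric sample when it deviates from the mean of a trailing window by more than a chosen number of standard deviations. The function name `find_anomalies`, the window size, the threshold, and the sample latency values are all assumptions made for the example.

```python
from statistics import mean, stdev


def find_anomalies(samples, window=5, threshold=3.0):
    """Return indices of samples that deviate sharply from recent history.

    Each sample is compared with the mean of the previous `window` samples;
    it is flagged when the gap exceeds `threshold` standard deviations.
    """
    anomalies = []
    for i in range(window, len(samples)):
        history = samples[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # A perfectly flat history gives no scale to score against; skip.
            continue
        if abs(samples[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


if __name__ == "__main__":
    # Hypothetical request latencies in milliseconds, one spike at index 8.
    latency_ms = [100, 102, 98, 101, 99, 100, 103, 97, 250, 101]
    print(find_anomalies(latency_ms))
```

Real predictive systems combine many signals (logs, metrics, incident history) and usually rely on learned models rather than a fixed threshold, but the shape is the same: establish what normal looks like, then alert on departures from it.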

Key MITRE ATT&CK techniques used by cyber attackers

Half of the top threats are ransomware precursors that could lead to a

ransomware infection if left unchecked, with ransomware continuing to have a

major impact on businesses. Despite a wave of new software vulnerabilities,

humans remained the primary vulnerability that adversaries took advantage of in

2023, compromising identities to access cloud service APIs, execute payroll fraud

with email forwarding rules, launch ransomware attacks, and more. As

organizations migrate to the cloud and rely on a growing array of SaaS

applications to manage and access sensitive information, identities are the ties

that bind all these systems together. Adversaries have quickly learned that

these systems house the information they want and that valid and authorized

identities are the most expedient and reliable way into those systems.

Researchers noted several broader trends impacting the threat landscape, such as

the emergence of generative AI, the continued prominence of remote monitoring

and management (RMM) tool abuse, the prevalence of web-based payload delivery

like SEO poisoning and malvertising, the increasing necessity of MFA evasion

techniques, and the dominance of brazen but highly effective social engineering

schemes such as help desk phishing.

Data management trends: GenAI, governance and lakehouses

Nearly every major database and data platform vendor had some form of generative

AI news in 2023. Some vendors included generative AI as a tool to act as an

assistant, helping users to conduct different tasks. Managing data platforms and

writing different types of data queries has long been a complicated exercise and

generative AI simplifies it. Among the many vendors that integrated some form of

AI assistant, Dremio launched its Text-to-SQL AI-powered tool in June, which

enables users to generate SQL queries more easily. In August, Couchbase

announced Capella iQ, a generative AI tool that helps developers write database

application code. Also in August, SnapLogic rolled out its SnapGPT AI tool to

help users build data pipelines using natural language. ... Whether it's for AI,

data operations or analytics, the topic of data governance is increasingly

important. Being able to understand where data comes from, how to make it

available and use it is important for security, privacy, accuracy and

reliability. Over the course of 2023, multiple vendors expanded and enhanced

data governance capabilities to help manage data.

The importance of "always-ready" data

Imagine living in a world where data is prepared on an ongoing basis – that is,

data prepared so quickly, regardless of the amount, that it is always ready.

Such a reality would enable enterprises to respond promptly to evolving business

needs and unexpected challenges. Moreover, it would minimize backlogs of tickets

and requests, granting data engineers time to be more proactive and productive.

One way to facilitate this is through the use of a cloud data lakehouse. With

it, data can be prepared directly on cloud storage, without the long load times

that ETL- or ELT-based (extract, load, and transform) data processing typically

takes. For enterprises that manage complicated and data-heavy workloads, the

result is game-changing on multiple fronts. Agile data infrastructure

underscored by superior cost performance will give enterprises an efficient

means of adapting to changing market dynamics, new projects, and fluctuating

customer demands. Beyond the flexibility it grants data engineers, always-ready

data also empowers them to conduct ad-hoc queries and analytics as a way to

derive actionable insights and predictions on the fly.

AI is embedded in everything that we do

AI is embedded in everything that we do and it is becoming visible in every

aspect of software development and operations. The impact of AI in DevOps can be

felt through efficiency and speed (of software development and delivery), automation

in testing, security (real-time alerts) and optimization of cloud resources.

Tools such as Copilot and CodeWhisperer have reduced the time it takes to create

business logic, and propagation to the production environment is swift, allowing the

team to produce digital assets quickly. AI helps in automating the CI/CD pipeline.

By leveraging AI-powered monitoring and management tools, DevOps teams can

automate routine tasks, predict performance issues, rectify errors quickly, and

optimize resource utilization across diverse cloud platforms. AI-driven

solutions help DevOps teams to dynamically allocate resources, detect anomalies,

and enforce compliance across multi-cloud deployments. Thus, DevOps teams are in

a better position to get actionable insights and have intelligent

decision-making capabilities in multi-cloud environments. AI technologies can

help build automated workflows and improve collaboration and experiment

tracking.

Why public cloud providers are cutting egress fees

This customer discontent is not lost on cloud providers, who are initiating a

significant shift in their pricing strategies by reducing these charges. Google

Cloud announced it would eliminate egress fees, a strategic move to attract

customers from its larger competitors, AWS and Microsoft. This was not merely a

pricing play but also a response to regulatory pressures, greater competition,

and the significantly lower cost of hardware in the past several years. The

cloud computing landscape has changed, and providers are continually looking for

ways to differentiate themselves and attract more users. Today the competition

is not only other public cloud providers but managed service providers (MSPs)

and regional cloud services. Microclouds are also emerging, driven mainly by

generative AI and the need to find more cost-effective cloud alternatives for

using GPU-powered systems on demand. Changing governmental policies and market

demand also put pressure on providers to remove or reduce these fees. The best

example is the European Data Act, which is aimed at fostering competition by

making it easier for customers to switch providers.

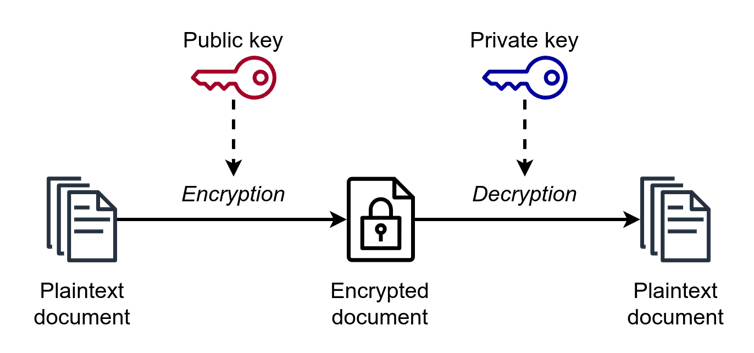

Redefining multifactor authentication: Why we need passkeys

Authenticator apps, designed to provide a second layer of security beyond

traditional passwords, have been lauded for their simplicity and added

security. However, they are not without flaws. One significant issue is MFA

fatigue, a phenomenon where users, overwhelmed by frequent authentication

requests or simply following a single password spray attack, inadvertently

grant access to attackers. Additionally, attacker-in-the-middle (AiTM)

techniques such as Evilginx2 exploit the communication between the user and

the service, bypassing the newer code-matching experience provided by modern

authenticator apps. ... IP fencing may have a role as a fourth factor of

authentication (after password, authenticator app, and device) for privileged

IT accounts, but it does not scale to regular users: privacy features in

operating systems such as Apple’s iOS (beginning in version 15) make IP fencing

unrealistic because all connections are shielded behind Cloudflare. Security operations

center (SOC) analysts struggle to identify these connections if the identity

system is not designed to authenticate both the user and the device.

As Attackers Refine Tactics, 'Speed Matters,' Experts Warn

Experts regularly recommend keeping abreast of tactics used by groups such as

Scattered Spider and reviewing defenses to ensure they can cope. "Thwarting

Muddled Libra requires interweaving tight security controls, diligent

awareness training and vigilant monitoring," Unit 42 said in a blog post. The

researchers particularly recommend having baselines of typical activity and

configurations, especially to spot unexpected changes in infrastructure,

dormant accounts becoming active, a sharp increase in remote management tool

usage, a sudden surge in multifactor authentication push requests, or the

sudden appearance of red-team tools in the environment. "If you see

red-teaming tools in your environment, make sure there is an authorized

red-team engagement underway," Unit 42 said. "One SOC we worked with had a

company logo sticker on the wall for each red team they'd caught." Some

effective defenses involve a heavy dose of process and procedure, rather than

just technology. Especially with MFA and someone who appears to have lost

their phone and is trying to reenroll, which shouldn't happen often, "put

additional scrutiny on changes to high-privileged accounts," Unit 42 said.
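
The baseline recommendation above lends itself to a small example. The sketch below, under stated assumptions, compares each user's MFA push requests in the most recent hour with that user's typical hourly rate and flags large jumps. The function name `flag_push_surges`, the `multiplier` and `min_pushes` thresholds, and the sample users are all hypothetical; they are not taken from Unit 42's guidance.

```python
from collections import Counter


def flag_push_surges(baseline_per_hour, recent_events, multiplier=5, min_pushes=5):
    """Return users whose recent MFA push count far exceeds their baseline.

    baseline_per_hour: dict mapping user -> typical pushes per hour.
    recent_events: list of user names, one entry per push in the last hour.
    A user is flagged when the count reaches `min_pushes` and is more than
    `multiplier` times the baseline (users with no baseline count as 0).
    """
    counts = Counter(recent_events)
    flagged = []
    for user, count in counts.items():
        expected = baseline_per_hour.get(user, 0.0)
        if count >= min_pushes and count > expected * multiplier:
            flagged.append(user)
    return sorted(flagged)


if __name__ == "__main__":
    # Hypothetical data: alice normally sees about one push per hour.
    baseline = {"alice": 1.0, "bob": 2.0}
    last_hour = ["alice"] * 12 + ["bob"] * 3 + ["carol"] * 6
    print(flag_push_surges(baseline, last_hour))
```

The same pattern applies to the other signals the researchers list, such as dormant accounts becoming active or a jump in remote management tool usage: record what is typical, then review anything that departs from it.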

Quote for the day:

"Good things come to people who wait,

but better things come to those who go out and get them." -- Anonymous
