Quote for the day:

"Listen with curiosity, speak with honesty act with integrity." -- Roy T Bennett

CIOs rethink public cloud as AI strategies mature

Regulatory and compliance concerns are a big driver toward the private cloud or on-premises solutions, says Bastien Aerni, vice president of strategy and technology adoption at GTT. Many companies are shifting their sensitive workloads to private clouds as a piece of broader multicloud and hybrid strategies to support agentic AI and other complex AI initiatives, he adds. “Most of the time, AI is touching confidential data or business-critical data,” Aerni says. “Then the thinking about the architecture and what the workload should be public vs. private, or even on-prem, is becoming a true question.” The public cloud still provides maximum scalability for AI projects, and in recent years, CIOs have been persuaded by the number of extra capabilities available there, he says. “In some of the conversations I had with CIOs, let’s say five years ago, they were mentioning, ‘There are so many features, so many tools,’” Aerni adds. ... “The paradox is clear: AI workloads are driving both massive cloud growth and selective repatriation simultaneously, because the market is expanding so rapidly it’s accommodating multiple deployment models at once,” Kirschner says. “What we are seeing is the maturation from a naive ‘everything-to-the-cloud’ strategy toward intelligent, workload-specific decisions.”

India’s DPDP law puts HR under the microscope: Here’s why that’s a good thing

At first glance, DPDP appears to mirror other data privacy frameworks like GDPR or CCPA. There’s talk of consent, purpose limitation, secure storage, and rights of the data principal (i.e., the individual). But the Indian legislation’s implications ripple far beyond IT configurations or privacy policies. “Mention data protection, and it often gets handed off to the legal or IT teams,” says Gupta. “But that misses the point. Every team that touches personal data is responsible under this law.” For HR departments, this shift is seismic. Gupta underscores how HR sits atop a “goldmine” of personal information: addresses, Aadhaar numbers, medical history, performance reviews, family details, even biometric data in some cases. And this isn't limited to employees; applicants and former workers are also in scope. ... With India housing thousands of global capability centres and outsourcing hubs, DPDP challenges multinationals to look inward. The emphasis so far has been on protecting customer data under global laws like GDPR. But now, internal data practices, especially around employees, are under the scanner. “DPDP is turning the lens inward,” says Gupta. “If your GCC in India tightens data practices, it won’t make sense to be lax elsewhere.”

3 ways developers should rethink their data stack for GenAI success

Traditional data stacks optimized for analytics, for the most part, don’t naturally support the vector search and semantic retrieval patterns that GenAI applications require. Thus, real-time GenAI data architectures need native support for embedding generation and vector storage as first-class citizens. This could mean integrating data with vector databases like Pinecone, Weaviate, or Chroma as part of the core infrastructure. It may also mean searching for multi-modal databases that can support all of your required data types out of the box without needing a bunch of separate platforms. Regardless of the underlying infrastructure, plan for needing hybrid search capabilities that combine traditional keyword search with semantic similarity, and consider how you’ll handle embedding model updates and re-indexing. ... Maintaining data relationships and ensuring consistent access patterns across these different storage systems is the real challenge when working with these various data types. While some platforms are beginning to offer enhanced vector search capabilities that can work across different data types, most organizations still need to architect solutions that coordinate multiple storage systems. The key is to design these multi-modal capabilities into your data stack early, rather than trying to bolt them on later when your GenAI applications demand richer data integration.
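To make the hybrid search idea above concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not how Pinecone, Weaviate, or Chroma implement it: the function names, the `alpha` weight, and the tiny hand-written vectors are all hypothetical, the term-overlap score is a crude stand-in for a real keyword ranker such as BM25, and the vectors stand in for the output of an embedding model. Blending the two scores with a weighted sum is one common approach; the excerpt does not prescribe a specific method.

```python
import math

def keyword_score(query: str, text: str) -> float:
    """Fraction of query terms found in the text (toy stand-in for BM25)."""
    q_terms = query.lower().split()
    t_terms = set(text.lower().split())
    if not q_terms:
        return 0.0
    return sum(1 for t in q_terms if t in t_terms) / len(q_terms)

def cosine(a, b) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def hybrid_search(query, query_vec, docs, alpha=0.5):
    """Rank docs by alpha * keyword score + (1 - alpha) * semantic score.

    docs is a list of (doc_id, text, vector) tuples. alpha is an assumed
    tuning knob: 1.0 means keyword only, 0.0 means semantic only.
    """
    scored = []
    for doc_id, text, vec in docs:
        score = alpha * keyword_score(query, text) + (1 - alpha) * cosine(query_vec, vec)
        scored.append((score, doc_id))
    # Highest score first; ties broken by doc_id so the order is stable.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored]

# Hypothetical corpus: the 2-d vectors are made up for illustration only.
DOCS = [
    ("a", "reset your password from the login page", [1.0, 0.0]),
    ("b", "recover account credentials after lockout", [0.9, 0.1]),
    ("c", "quarterly revenue report", [0.0, 1.0]),
]

ranking = hybrid_search("password reset", [1.0, 0.0], DOCS)
```

In this toy corpus, document "b" shares no words with the query, so keyword search alone would score it the same as the unrelated document "c"; the semantic term is what lifts it above "c". When the embedding model changes, every stored vector would need to be regenerated with the new model before scores are comparable again, which is the re-indexing concern raised above.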

Cyber Hygiene: Protecting Your Digital and Financial Health

Digital transformation has reshaped the commercial world, integrating technology into nearly every aspect of operations. That has brought incredible opportunities, but it has also opened doors to new threats. Cyber attacks are more frequent and sophisticated, with malevolent actors targeting everyone from individuals to major corporations and entire countries. It is no exaggeration to say that establishing, and maintaining, effective cyber hygiene has become indispensable. According to Microsoft’s 2023 Digital Defense Report, effective cyber hygiene could prevent 99% of cyber attacks. Yet cyber hygiene is not just about preventing attacks; it is also central to maintaining operational stability and resilience in the event of a cyber breach. In that event, robust cyber hygiene can limit the operational, financial, and reputational impact of a cyber attack, thereby enhancing an entity’s overall risk profile. ... Even though it’s critical, data suggests that many organizations struggle to implement even basic cyber security measures effectively. For example, a 2024 survey by ExtraHop, a Seattle-based cyber security services provider, found that over half of the respondents admitted to using at least one unsecured network protocol, making them susceptible to attacks.

Are Data Engineers Sleepwalking Towards AI Catastrophe?

Data engineers are already overworked. Weigel cited a study that indicated 80% of data engineering teams are already overloaded. But when you add AI and unstructured data to the mix, the workload issue becomes even more acute. Agentic AI provides a potential solution. It’s natural that overworked data engineering teams will turn to AI for help. There’s a bevy of providers building copilots and swarms of AI agents that, ostensibly, can build, deploy, monitor, and fix data pipelines when they break. We are already seeing agentic AI have real impacts on data engineering teams, as well as the downstream data analysts who ultimately are the ones requesting the data in the first place. ... Once human data engineers are out of the loop, bad things can start happening, Weigel said. They potentially face a situation where the volume of data requests, which originally were served by human data engineers but now are being served by AI agents, is beyond their capability to keep up. ... “We’re now back in the dark ages, where we were 10 years ago [when we wondered] why we need data warehouses,” he said. “I know that if person A, B, and C ask a question, and previously they wrote their own queries, they got different results. Right now, we ask the same agent the same question, and because they’re non-deterministic, they will actually create different queries every time you ask it.”

How cybercriminals are weaponizing AI and what CISOs should do about it

Security teams are using AI to keep up with the pace of AI-powered cybercrime, scanning large volumes of data to surface threats earlier. AI helps scan massive amounts of threat data, surface patterns, and prioritize investigations. For example, analysts used AI to uncover a threat actor’s alternate Telegram channels, saving significant manual effort. Another use case: linking sockpuppet accounts. By analyzing slang, emojis, and writing styles, AI can help uncover connections between fake personas, even when their names and avatars are different. AI also flags when a new tactic starts gaining traction on forums or social media. ... As more defenders turn to AI to make sense of vast amounts of threat data, it’s easy to assume that LLMs can handle everything on their own. But interpreting chatter from the underground is not something AI can do well without help. “This diffuse environment, rich in vernacular and slang, poses a hurdle for LLMs that are typically trained on more generic or public internet data,” Ian Gray, VP of Cyber Threat Intelligence at Flashpoint, told Help Net Security. The problem goes deeper than just slang. Threat actors often communicate across multiple niche platforms, each with its own shorthand and tone.

How To Keep AI From Making Us Stupid

The allure of AI is undeniable. It drafts emails, summarizes lengthy reports, generates code snippets, and even whips up images faster than you can say “neural network.” This unprecedented convenience, however, carries a subtle but potent risk. A study from MIT has highlighted concerns that overuse of AI tools might be degrading our thinking capabilities. That degradation is the digital equivalent of using a GPS so much that you forget how to read a map. Suddenly, your internal compass points vaguely toward convenience and not much else. When we offload critical cognitive tasks entirely to AI, our muscles for those tasks can begin to atrophy, leading to cognitive offloading. ... Treat AI-generated content like a highly caffeinated first draft: full of energy but possibly a little messy and prone to making things up. Your job isn’t to simply hit “generate” and walk away, unless you enjoy explaining AI hallucinations or factual inaccuracies to your boss. Or worse, your audience. Always, always, aggressively edit, proofread, and, most critically, fact-check every single output. ... The real risk isn’t AI taking over our jobs; it’s us letting AI take over our brains. To maintain your analytical edge, continuously challenge yourself. Practice skills that AI complements but doesn’t replace, such as critical thinking, complex problem-solving, nuanced synthesis, ethical judgment, and genuine human creativity.

Governance meets innovation: Protiviti’s strategy for secure, scalable growth in BFSI and beyond

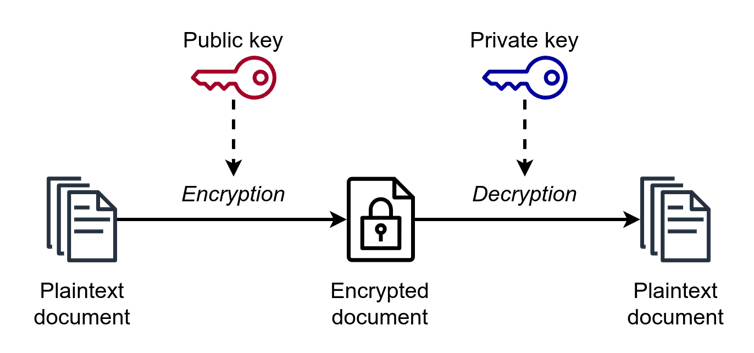

In today’s BFSI landscape, technology alone is no longer a differentiator. True competitive advantage lies in the orchestration of innovation with governance. The deployment of AI in underwriting, the migration of customer data to the cloud, or the use of IoT in insurance all bring immense opportunity, but also profound risks. Without strong guardrails, these initiatives can expose firms to cyber threats, data sovereignty violations, and regulatory scrutiny. Innovation without governance is a gamble; governance without innovation is a graveyard. ... In cloud transformation projects, for instance, we work with clients to proactively assess data localisation risks, cloud governance maturity, and third-party exposures, ensuring resilience is designed from day one. As AI adoption scales across financial services, we bring deep expertise in Responsible AI governance. From ethical frameworks and model explainability to regulatory alignment with India’s DPDP Act and the EU AI Act, our solutions ensure that automated systems remain transparent, auditable, and trustworthy. Our AI risk models integrate regulatory logic into system design, bridging the gap between innovation and accountability.

Cybercriminals take malicious AI to the next level

Cybercriminals are tailoring AI models for specific fraud schemes, including generating phishing emails tailored by sector or language, as well as writing fake job posts, invoices, or verification prompts. “Some vendors even market these tools with tiered pricing, API access, and private key licensing, mirroring the [legitimate] SaaS economy,” Flashpoint researchers found. “This specialization leads to potentially greater success rates and automated complex attack stages,” Flashpoint’s Gray tells CSO. ... Cybercrime vendors are also lowering the barrier for creating synthetic video and voice, with deepfake as a service (DaaS) offerings ... “This ‘prompt engineering as a service’ (PEaaS) lowers the barrier for entry, allowing a wider range of actors to leverage sophisticated AI capabilities through pre-packaged malicious prompts,” Gray warns. “Together, these trends create an adaptive threat: tailored models become more potent when refined with illicit data, PEaaS expands the reach of threat actors, and the continuous refinement ensures constant evolution against defenses,” he says. ... Enterprises need to balance automation with expert analysis, separating hype from reality, and continuously adapt to the rapidly evolving threat landscape. “Defenders should start by viewing AI as an augmentation of human expertise, not a replacement,” Flashpoint’s Gray says.

